The merge tax is the hidden cost of running parallel AI coding agents without proper merge verification: rework hours spent debugging silent feature regressions, delivery delays when integration failures surface late, and customer churn when committed features don't ship as promised. Teams that move to AI-first development at speed often capture the generation efficiency gains and then give most of them back through the merge tax. Safe merge infrastructure (serialized queues, behavioral verification, and automated testing on every deploy) is the mechanism that lets you keep those gains.

73% of engineering leads at companies using AI coding agents report that delivery delays have increased since adopting them, even though individual task completion times have decreased. Generation is faster; the merge is where the time goes. And unlike familiar sources of delay such as unclear requirements, tech debt, or scope creep, the merge tax is invisible in sprint planning and post-mortems. It doesn't appear on a burn-down chart. It shows up as "we thought we shipped this, but the customer says it's not working."

What the Merge Tax Actually Looks Like

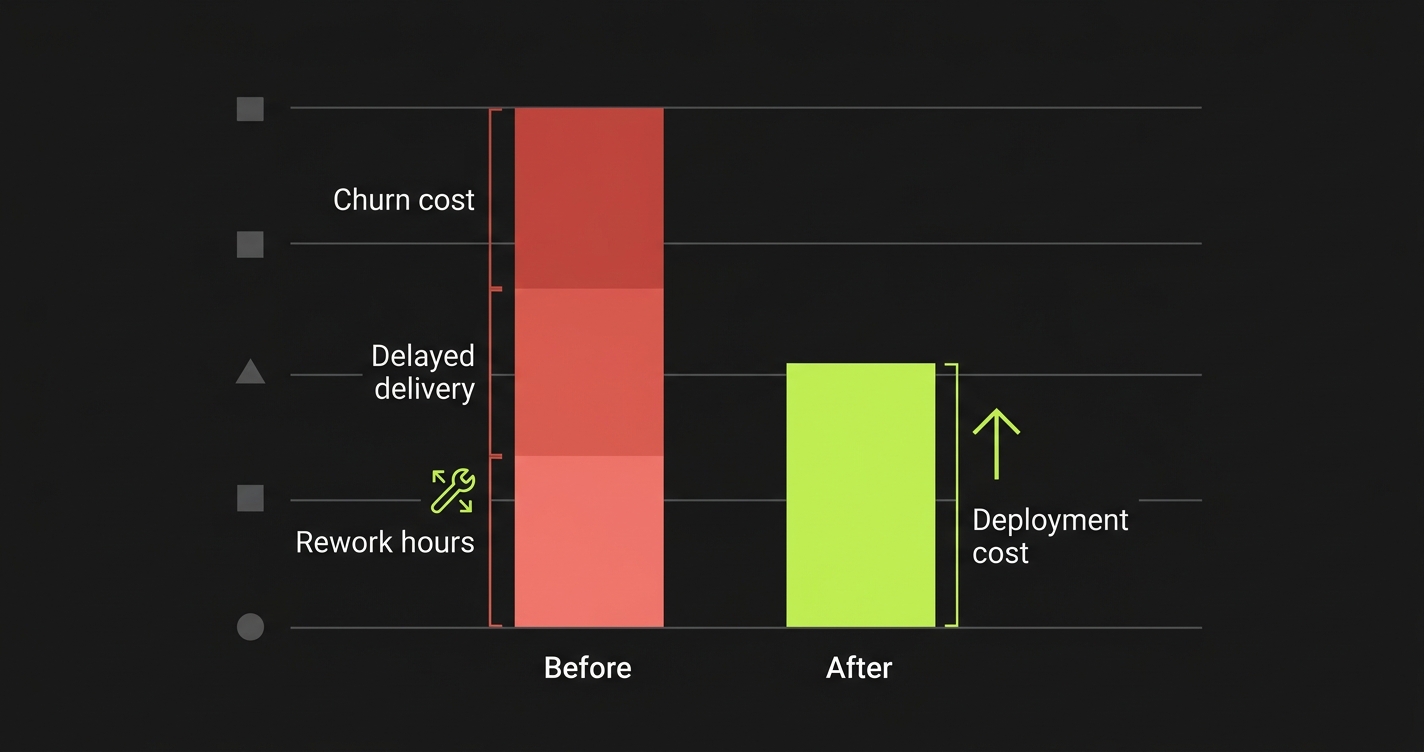

The merge tax is not a line item. It's distributed across three categories that teams typically account for separately:

Rework hours are the most direct cost. When an AI agent merge silently drops a feature or introduces a behavioral regression, someone has to find it, understand it, and fix it. The triage phase is usually the most expensive: you're debugging a system where no human wrote the conflicting code, so there's no institutional memory to draw on. Running git blame leads to a merge commit. The merge commit was generated by an AI. The reasoning behind the resolution is not documented anywhere.

Teams consistently underestimate how long this takes. A conventional bug (where a human wrote code with a known intent) can often be triaged in 30-60 minutes by someone familiar with that area of the codebase. A regression from a subagent merge can take half a day because the triage process is fundamentally different: you're reconstructing intent from the git history of agents that never recorded any.

Delivery delays compound the rework cost. When a feature was supposed to ship Tuesday and the integration failure is discovered Wednesday during QA, the delivery window often shifts by a full sprint. The feature that was almost done has to be rescheduled, the customer expectation has to be reset, and the trust built over the last sprint is partially consumed.

For teams operating with committed delivery dates, a single bad merge can trigger a cascade: the delayed feature pushes other work, the customer's evaluation window closes before the feature lands, and the opportunity to demonstrate progress at a critical moment is lost.

Customer trust erosion is the least visible and most expensive component. When you tell a customer a feature will be ready for their review on Friday and it isn't, or it is but only partially works, you're spending trust that was hard to earn. Early-stage customers, especially, are making a bet on your execution reliability. A pattern of "almost ships" creates doubt about whether the product will be production-ready when they need it to be.

Quantifying the Merge Tax for Your Team

To make the merge tax visible, you need to trace the cost of each integration failure from discovery to resolution. Here's a practical framework:

Per-incident merge tax calculation:

Rework cost = (Triage hours + Fix hours + Re-test hours) × avg. engineer hourly rate

Delay cost = (Days delayed) × (Revenue at risk per day of delay)

Trust cost = (Number of affected customers) × (Estimated churn probability increase per incident) × (Value per customer)

For a 10-engineer team at an average rate of $120/h, with an average triage time of 4 hours per subagent merge incident and 2 incidents per sprint, counting triage time only:

Rework cost per sprint: 2 incidents × 4h × $120/h = $960

Annual rework cost: $960 × 26 two-week sprints = $24,960

That's before fix time, re-test time, delays, and churn. For a team with any meaningful number of pilot customers, the delay and trust costs often exceed the rework cost by 3-5x.

The point of this calculation is not the specific number. It's the realization that the merge tax is a recurring, predictable cost, not random bad luck, and it can be compared directly to the cost of safe merge infrastructure.
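To make the per-incident formulas above concrete, here is a minimal Python sketch that encodes them and reproduces the triage-only rework example. The class and function names are hypothetical, and the trust term multiplies by an assumed per-customer value so that all three costs come out in dollars; the inputs are whatever estimates your team uses, not real data.

```python
from dataclasses import dataclass


@dataclass
class MergeIncident:
    """One merge-related integration failure, traced from discovery to resolution."""
    triage_hours: float
    fix_hours: float
    retest_hours: float
    days_delayed: float = 0.0
    affected_customers: int = 0


def rework_cost(incident: MergeIncident, hourly_rate: float) -> float:
    # (Triage hours + Fix hours + Re-test hours) x avg. engineer hourly rate
    hours = incident.triage_hours + incident.fix_hours + incident.retest_hours
    return hours * hourly_rate


def delay_cost(incident: MergeIncident, revenue_at_risk_per_day: float) -> float:
    # (Days delayed) x (Revenue at risk per day of delay)
    return incident.days_delayed * revenue_at_risk_per_day


def trust_cost(incident: MergeIncident, churn_prob_increase: float,
               value_per_customer: float) -> float:
    # Affected customers x churn probability increase x (assumed) value per customer
    return incident.affected_customers * churn_prob_increase * value_per_customer


# Worked example from the text: triage time only (fix and re-test set to 0),
# 4 hours per incident, 2 incidents per sprint, $120/h, 26 two-week sprints a year.
incident = MergeIncident(triage_hours=4, fix_hours=0, retest_hours=0)
per_sprint = 2 * rework_cost(incident, hourly_rate=120)
annual = per_sprint * 26
print(per_sprint, annual)  # 960 24960
```

Filling in `fix_hours`, `retest_hours`, `days_delayed`, and `affected_customers` for each real incident, then summing per sprint, gives a running merge tax figure you can set against the cost of the infrastructure.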

Why AI Parallelism Makes the Tax Worse

Traditional merge conflicts happen at human development speed. A team of 5 engineers might create 10-15 PRs per week, with moderate overlap. The merge tax from traditional development is real but manageable because the rate of merges is bounded by human output.

AI subagent development removes the human bound. A team of 5 engineers running 5 agents each can produce 50-100 PRs in a week. The overlap probability increases with the PR count. The merge tax compounds accordingly.

There's also a qualitative difference. When two human engineers create conflicting PRs, at least one of them will review the other's work before merge. The conflict is often caught in review, or the engineers will discuss it. The merge resolution, even when automated, has a human who understands both sides available to catch errors.

When 10 subagents create 10 conflicting PRs, no human wrote the conflicting code. No human understands both sides. The merge resolution is made by a tool that sees the conflict textually and picks the winner syntactically. Unless there's an automated behavioral verification step, nothing catches the behavioral error before it merges.

This is why the merge tax doesn't scale linearly with subagent count. It scales super-linearly: each additional parallel agent increases both the PR volume and the probability that any given PR conflicts with another, while the per-conflict resolution quality stays the same or degrades.
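A toy model helps show where the super-linear growth comes from. This sketch assumes, purely for illustration, that each PR touches `files_per_pr` distinct files drawn uniformly at random from a codebase of `total_files` files, and counts two PRs as overlapping if they share any file. Real PRs cluster around shared files, so treat the numbers as a rough illustration rather than a prediction. Because every unordered pair of PRs is a chance to overlap, expected overlapping pairs grow with n(n-1)/2, roughly quadratically in PR volume.

```python
from math import comb


def pair_overlap_probability(total_files: int, files_per_pr: int) -> float:
    """P(two PRs share at least one file), assuming each PR touches
    files_per_pr distinct files chosen uniformly at random (toy assumption)."""
    no_overlap = comb(total_files - files_per_pr, files_per_pr) / comb(total_files, files_per_pr)
    return 1.0 - no_overlap


def expected_overlapping_pairs(num_prs: int, total_files: int, files_per_pr: int) -> float:
    # Every unordered pair of PRs can overlap: there are n(n-1)/2 such pairs.
    pairs = comb(num_prs, 2)
    return pairs * pair_overlap_probability(total_files, files_per_pr)


# Hypothetical codebase: 2,000 files, 10 files touched per PR.
# 15 PRs ~ a human-paced week; 50 and 100 ~ the agent-paced range from the text.
for prs in (15, 50, 100):
    print(prs, round(expected_overlapping_pairs(prs, 2000, 10), 1))
```

Doubling PR volume from 50 to 100 roughly quadruples the expected overlapping pairs in this model, which is the shape of the argument above: volume and overlap probability compound.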

The Safe Merge Infrastructure Investment

The alternative to paying the merge tax is investing in safe merge infrastructure. The components are:

Serialized merge queue: Merge PRs one at a time, in a defined sequence based on risk level, and don't let 10 PRs merge concurrently. Serialization typically costs 15-20% of parallel throughput; in exchange, it eliminates compound conflicts.
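A minimal sketch of a serialized queue, assuming each PR carries a numeric risk score and that `merge`, `verify`, and `revert` are callables you supply (all hypothetical here; in practice they would wrap your Git host and your test runner). Ordering lowest risk first is one possible policy, not a prescribed one. PRs are merged strictly one at a time, and a PR whose post-merge check fails is reverted before the next one starts.

```python
import heapq
from dataclasses import dataclass, field
from typing import Callable


@dataclass(order=True)
class QueuedPR:
    risk: int                           # lower risk merges earlier (assumed policy)
    seq: int                            # tie-breaker: arrival order
    pr_id: str = field(compare=False)


class SerializedMergeQueue:
    """Merges one PR at a time and verifies each merge before starting the next."""

    def __init__(self, merge: Callable[[str], None],
                 verify: Callable[[str], bool],
                 revert: Callable[[str], None]) -> None:
        self._heap: list[QueuedPR] = []
        self._seq = 0
        self._merge, self._verify, self._revert = merge, verify, revert

    def enqueue(self, pr_id: str, risk: int) -> None:
        heapq.heappush(self._heap, QueuedPR(risk, self._seq, pr_id))
        self._seq += 1

    def drain(self) -> tuple[list[str], list[str]]:
        merged, rejected = [], []
        while self._heap:
            pr = heapq.heappop(self._heap)
            self._merge(pr.pr_id)
            if self._verify(pr.pr_id):      # behavioral check on the merged result
                merged.append(pr.pr_id)
            else:
                self._revert(pr.pr_id)      # restore main before the next merge
                rejected.append(pr.pr_id)
        return merged, rejected


# Usage with stand-in callables (hypothetical PR ids).
log: list[str] = []
queue = SerializedMergeQueue(
    merge=lambda pr: log.append(f"merge {pr}"),
    verify=lambda pr: pr != "pr-2",         # pretend pr-2 breaks a behavior
    revert=lambda pr: log.append(f"revert {pr}"),
)
queue.enqueue("pr-1", risk=2)
queue.enqueue("pr-2", risk=1)
queue.enqueue("pr-3", risk=3)
merged, rejected = queue.drain()
```

Here pr-2 merges first, fails verification, and is reverted, so pr-1 and pr-3 land on a clean base. Hosted merge queues (GitHub's merge queue, for example) instead test each PR combined with the PRs ahead of it before it lands; the sketch verifies after merging to mirror the text.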

Post-merge behavioral verification: After every merge, run an automated test suite against the deployed preview environment. Not just unit tests: end-to-end behavioral tests that validate the application does what it was designed to do. This catches the regressions that CI misses.
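One way to express such a check, sketched under assumptions: the preview URL, endpoint paths, and expected response contents below are hypothetical placeholders, and this is not Autonoma's API. The HTTP fetch is injected as a plain function so the checks can run offline. Each check names a user-visible behavior the feature was built to deliver, rather than an implementation detail, and any non-empty result would block the next merge.

```python
import json
import urllib.error
import urllib.request
from dataclasses import dataclass
from typing import Callable

# A fetch function maps a URL to (HTTP status, response body).
Fetch = Callable[[str], tuple[int, str]]


def http_fetch(url: str) -> tuple[int, str]:
    """Fetch a URL with the standard library; 4xx/5xx responses are returned, not raised."""
    try:
        with urllib.request.urlopen(url, timeout=10) as resp:
            return resp.status, resp.read().decode("utf-8")
    except urllib.error.HTTPError as err:
        return err.code, err.read().decode("utf-8", errors="replace")


@dataclass
class BehaviorCheck:
    name: str                               # the user-visible behavior being protected
    path: str                               # endpoint on the preview deployment
    expect: Callable[[int, str], bool]      # (status, body) -> does the behavior still hold?


def verify_preview(base_url: str, checks: list[BehaviorCheck],
                   fetch: Fetch = http_fetch) -> list[str]:
    """Run every check against the preview and return the names of broken behaviors."""
    failures = []
    for check in checks:
        status, body = fetch(base_url.rstrip("/") + check.path)
        if not check.expect(status, body):
            failures.append(check.name)
    return failures


# Hypothetical checks for a feature that two agents' PRs both touched.
CHECKS = [
    BehaviorCheck("plans endpoint still offers an annual plan", "/api/plans",
                  lambda s, b: s == 200 and any(p.get("interval") == "year" for p in json.loads(b))),
    BehaviorCheck("export endpoint still responds", "/api/export",
                  lambda s, b: s == 200),
]


# Offline usage: a fake fetch simulating a merge that silently dropped the annual plan.
def fake_fetch(url: str) -> tuple[int, str]:
    if url.endswith("/api/plans"):
        return 200, json.dumps([{"interval": "month"}])
    return 200, "ok"


failed = verify_preview("https://preview.example.com/", CHECKS, fetch=fake_fetch)
```

Both PRs could pass CI here, yet the check reports the dropped annual plan by name, which is the kind of pointer that shortens triage.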

Overlap zone protection: Identify high-impact shared files and require human review for any PR that touches them, regardless of CI status. This is a 30-minute setup task that eliminates the worst-case merge failures.
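A minimal sketch of the overlap-zone rule, assuming the high-impact shared files are listed as glob patterns (the patterns below are hypothetical examples). Any PR whose changed files match a pattern is flagged for mandatory human review, and CI status is deliberately not an input. On GitHub, a similar effect can be configured declaratively with a CODEOWNERS file plus a branch protection rule that requires code-owner review; the Python just makes the decision logic explicit.

```python
from fnmatch import fnmatch

# Hypothetical overlap zones: shared, high-impact files that many agents touch.
# fnmatch's "*" also matches "/", so "src/auth/*" covers nested files too.
OVERLAP_ZONES = (
    "src/db/schema.*",
    "src/auth/*",
    "src/routes/index.ts",
    "package.json",
)


def zones_touched(changed_files: list[str],
                  zones: tuple[str, ...] = OVERLAP_ZONES) -> list[str]:
    """Return the changed files that fall inside any overlap zone."""
    return [f for f in changed_files if any(fnmatch(f, pattern) for pattern in zones)]


def requires_human_review(changed_files: list[str]) -> bool:
    # A green build does not clear an overlap zone, so CI status is ignored here.
    return bool(zones_touched(changed_files))


print(requires_human_review(["src/auth/session.ts", "docs/README.md"]))  # True
```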

What ROI Looks Like in Practice

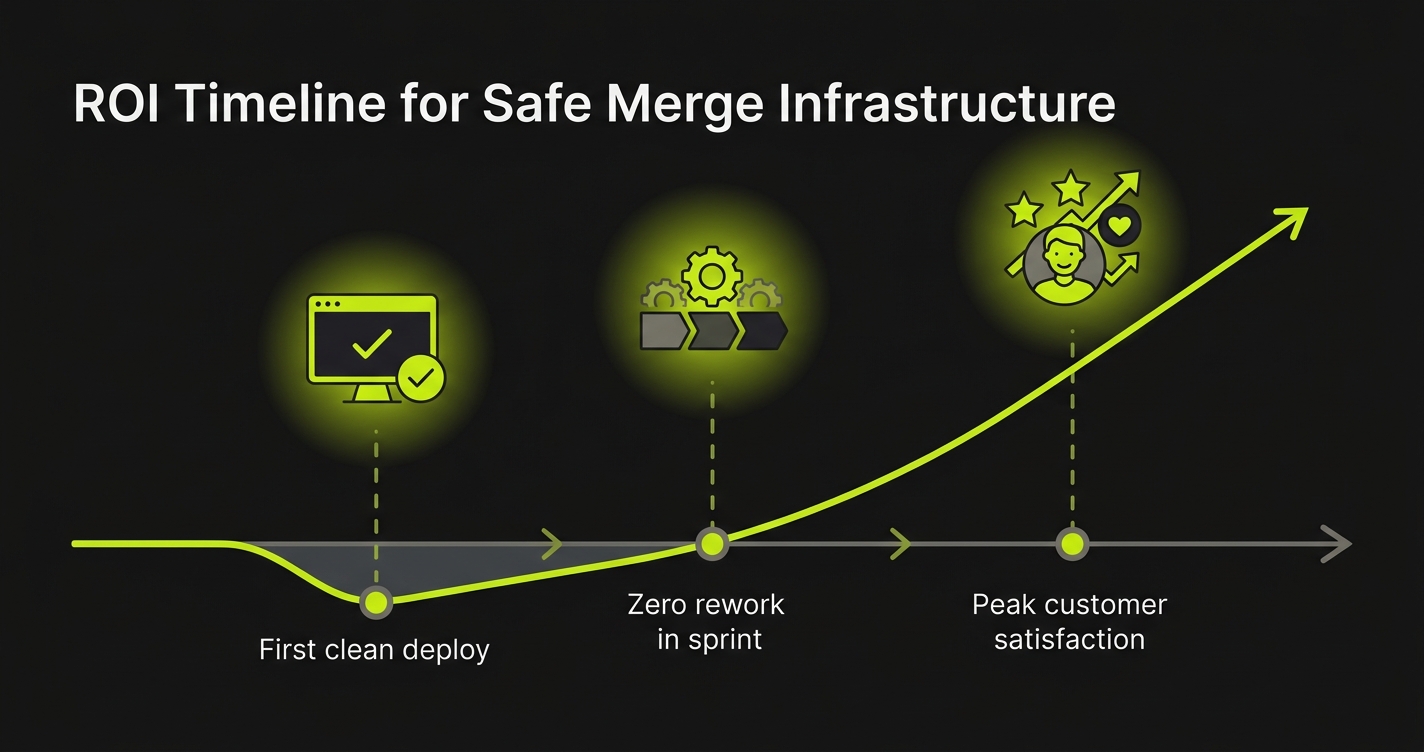

The ROI calculation for safe merge infrastructure is straightforward when you've done the merge tax calculation first. Here's a representative example from a seed-stage team that implemented this approach:

Before: 5 engineers, 10-15 parallel subagents, 2-3 merge incidents per sprint, average 4h triage + 2h fix per incident. Delivery delays averaging 3 days per sprint due to late-discovered integration failures. One customer downgraded from paid to free after two consecutive weeks of "almost shipped" on their critical feature.

After implementing serialized merging + Autonoma behavioral verification:

Merge incidents: dropped from 2-3 per sprint to 0-1. Triage time when incidents did occur: dropped from 4h to 45 minutes (Autonoma's test failures identify the specific behavioral regression immediately). Delivery delays: dropped from 3 days per sprint to 0 days over the next quarter. The customer who had downgraded upgraded back to paid after three consecutive clean deliveries.

The infrastructure cost (Autonoma subscription + ~8 hours of setup time) was recovered in the first sprint. The more significant gain was the compounding effect: clean merges every sprint means the team never enters the "debugging what happened" mode. They stay in the "building what's next" mode.
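A back-of-the-envelope sketch of the before/after rework hours in this example. It takes the midpoint of each reported incident range and assumes fix time stayed at 2 hours per incident afterwards; the example reports only triage time changing, so that fix-time figure is an assumption.

```python
def rework_hours_per_sprint(incidents: float, triage_h: float, fix_h: float) -> float:
    # Expected rework hours = incidents per sprint x (triage + fix) hours per incident.
    return incidents * (triage_h + fix_h)


# Before: 2-3 incidents per sprint (midpoint 2.5), 4h triage + 2h fix each.
before = rework_hours_per_sprint(incidents=(2 + 3) / 2, triage_h=4.0, fix_h=2.0)
# After: 0-1 incidents (midpoint 0.5), 45 min triage; fix time assumed unchanged at 2h.
after = rework_hours_per_sprint(incidents=(0 + 1) / 2, triage_h=45 / 60, fix_h=2.0)
print(f"before={before:.1f}h after={after:.2f}h saved={before - after:.2f}h per sprint")
```

Multiplying the saved hours by your own hourly rate, and adding the avoided delay days, gives a rough per-sprint figure to compare against the subscription and setup cost.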

What Engineering Leads Get Wrong About This

The most common mistake is treating safe merge infrastructure as a QA investment rather than a velocity investment. The framing matters because it determines where the decision lands. If it's a QA investment, it competes with other QA priorities and often loses to "we need to ship faster." If it's a velocity investment, it's evaluated against the actual throughput drag of the merge tax, and it wins that comparison easily.

The second most common mistake is assuming that because AI agents are fast, the rework is also fast. It isn't. AI agents are fast at generating code. Humans are fast at understanding code they wrote. Humans are slow at reconstructing intent from code they didn't write and can't ask about. The triage time on a subagent merge regression is disproportionately long precisely because the human has no context for what was supposed to happen.

The third mistake is addressing the merge tax after it has already damaged a customer relationship. The economics of safe merge infrastructure are best when implemented before the first costly incident, not after. Every sprint without safe merges is a sprint where a bad merge could trigger the cascade. And in early-stage companies, the cascade from one damaged customer relationship can be existential.

The Right Framing for Your Engineering Culture

If your team is serious about AI-first development, the merge question is not optional. You cannot parallelize agent workloads at scale without a systematic answer to what happens when their PRs conflict.

The engineering culture question is: do you want to discover conflicts in production (high cost, delayed signal, customer impact) or in preview (low cost, immediate signal, no customer impact)? Safe merge infrastructure is the mechanism that shifts the discovery window.

Autonoma integrates into your CI to make behavioral verification automatic on every merge. It doesn't require changes to how your subagents work. It either provides an isolated preview environment per PR or plugs into yours, adding a verification layer that catches the behavioral regressions before they become customer incidents, delivery delays, or churn.

The merge tax is predictable, measurable, and eliminable. Teams that eliminate it early stay in building mode. Teams that don't will eventually hit a sprint where the merge tax exceeds the velocity gain, and they'll be right back to questioning whether AI-first development was the right bet.

The bet was right. The merge infrastructure is just the part they forgot to include in the plan.

Frequently Asked Questions

What is the merge tax?

The merge tax is the hidden cost of running parallel AI coding agents without proper merge verification. It includes rework hours debugging silent feature regressions, delivery delays when integration failures surface late, and customer trust erosion when committed features don't ship correctly. Teams often capture AI generation efficiency gains and then give most of them back through merge tax.

How do you calculate the merge tax?

Calculate it per incident: (Triage hours + Fix hours + Re-test hours) × engineer hourly rate for rework cost, plus days delayed × revenue at risk per day of delay for delay cost. Track merge incidents per sprint. For most teams running 5+ parallel subagents, the merge tax exceeds the cost of safe merge infrastructure within 2-3 sprints.

Why does the merge tax scale super-linearly with subagent count?

It scales super-linearly because each additional agent increases both PR volume and the probability of overlap, while per-conflict resolution quality stays the same. Traditional merges have a human who understands both conflicting sides available to catch errors. Subagent merges are resolved textually by a tool with no semantic understanding of either side.

What does safe merge infrastructure include?

Three components: a serialized merge queue (merge one PR at a time, verify, then proceed), post-merge behavioral verification (automated end-to-end tests against the deployed preview environment after every merge), and overlap zone protection (mandatory human review for PRs touching high-impact shared files). Autonoma handles the behavioral verification component automatically.

What is the ROI of safe merge infrastructure?

For most teams, the infrastructure cost is recovered in the first sprint. The more significant benefit is compounding: clean merges every sprint keep the team in building mode rather than debugging mode. For teams with customer delivery commitments, preventing even one incident that damages a customer relationship pays for years of infrastructure cost.