Manual testing vs. automated testing is no longer a quality debate. With AI coding tools accelerating feature output by 5-10x, it is a cost and capacity problem. A 10-person dev team that ships 50 features per week can not be covered by 3 manual QA testers. Scaling manual QA to match costs $1.2M/year in headcount alone. Traditional test automation costs $460K-$510K/year with a 3-6 month ramp. AI-native testing approaches the same coverage at $120K-$240K/year, with zero test authoring or maintenance burden. The difference compounds over 3 years.

One million dollars. That is the annual difference between scaling manual QA to match an AI-accelerated development team and using a modern testing approach to do the same job. Not over three years. Per year.

That number comes from a real cost model, not a vendor slide. It accounts for salaries, tooling, ramp time, and the ongoing maintenance burden that traditional test automation generates but rarely gets attributed correctly in budget reviews. Most engineering teams skip this test automation ROI calculation entirely. We did not skip it. We built Autonoma specifically to eliminate the largest line items in that cost model.

The model below uses a 10-person engineering team shipping 50 features per week as the baseline, which is a realistic output for a team that adopted AI coding tools in the last 12 months. If your team looks anything like that, the numbers apply directly.

The Baseline: Before AI Coding Tools

To model the cost gap accurately, start with a concrete baseline.

The company: 10 developers, SaaS product, pre-AI tooling adoption. Output: 5 features per week, roughly one per developer. QA team: 3 manual testers handling a mix of regression, exploratory, and smoke testing. Cost: 3 x $80K average salary = $240K/year in QA headcount, plus management overhead and tooling.

Coverage is adequate but not comfortable. The testers are busy. Regressions occasionally slip, but the team catches most issues before production. The process is understood, predictable, and funded.

Then the company adopts Cursor, GitHub Copilot, or a similar AI coding platform. Developer output increases to 50 features per week. This number is not hypothetical -- it reflects what engineering leaders are reporting six months into AI-assisted development cycles. Some teams see 3x. Some see 10x. The median for active AI tool users is somewhere in the 5-8x range.

The QA team is still three people.

The QA Bottleneck: What It Actually Costs

Before comparing solutions, it helps to put a number on the cost of the bottleneck itself.

If features wait 3 weeks in a QA queue, and the average feature drives $10K-$50K in annual recurring revenue (not uncommon for a $5M ARR SaaS with 100 features), then each week of delay on 15 features in queue represents $150K-$750K in delayed revenue. Even at the conservative end, a 3-week QA backlog on a 50-feature-per-week output team is a $450K-$2.25M annualized revenue timing problem.

This is the number that turns the manual testing vs. automated testing debate into a finance conversation. It is not about testing philosophy. It is about whether the testing function can scale at the same rate as the development function, and it is the exact problem Autonoma was designed to solve. For a deeper look at how this bottleneck is structurally changing QA's role, see our analysis of the QA process improvement and AI bottleneck problem.

Option A: The True Cost of Scaling Manual QA

The instinct for many teams is to hire more testers. If 3 testers covered 5 features per week, 50 features per week requires 30 testers at the same ratio, right? In practice, most teams settle for a 5:1 developer-to-QA ratio as a rough ceiling. That means 10 developers and 50 features per week calls for approximately 10-15 manual QA testers depending on feature complexity.

Call it 15, which is the high end but reflects the reality that features generated by AI tools tend to be larger in scope and touch more code paths than manually written features.

Year 1 cost breakdown:

- 15 manual testers at $80K average: $1,200,000

- Management overhead (1 QA lead, estimated 20% of manager time): $40,000-$60,000

- Tooling (test case management, bug tracking): $20,000-$40,000

- Recruiting and onboarding (12 new hires at $15K average loaded cost): $180,000

Year 1 total: approximately $1,440,000-$1,480,000

The hidden cost is time. Hiring 12 QA testers takes 3-6 months in a competitive market. For the first 3-6 months, the bottleneck persists at full severity. The revenue delay cost calculated above continues throughout the hiring and ramp period.

By Year 2 and Year 3, recruiting costs drop but all other costs remain. The team also begins experiencing structural issues inherent to manual testing at scale: inconsistent coverage (different testers test different things), knowledge concentration (turnover destroys institutional knowledge), and burnout (high-velocity manual regression testing is not sustainable at 50 features per week).

Retention becomes an active cost. Manual QA testers in high-throughput environments have high burnout rates. Turnover adds rehiring costs and coverage gaps.

Option B: Traditional Test Automation

The conventional answer to the manual testing vs. automated testing cost debate is to invest in test automation. Build a Playwright or Selenium suite, hire QA automation engineers, run tests in CI/CD.

Year 1 cost breakdown:

- 3 QA automation engineers at $120K average: $360,000

- Test automation tooling (Playwright is free; CI infrastructure, reporting, parallelization): $50,000-$100,000

- Initial suite build (first 3-6 months is primarily setup, not coverage): included in salaries

Year 1 total: $410,000-$460,000

This is already 3x cheaper than Option A before you account for the other advantages. But the Year 1 number is misleading in an important way.

The first 3-6 months of a new test automation program are not delivering coverage. They are delivering infrastructure. The team is evaluating frameworks, establishing CI integration, writing page object models, creating test data management patterns, and building the first 20-50 test cases. Meaningful regression coverage -- the kind that would catch most production bugs before deployment -- typically requires 6-12 months of sustained investment for a 50-feature-per-week product.

During those 6-12 months, the bottleneck continues. Either manual testers are still needed as a bridge (adding back headcount cost), or features are going to production with incomplete coverage.

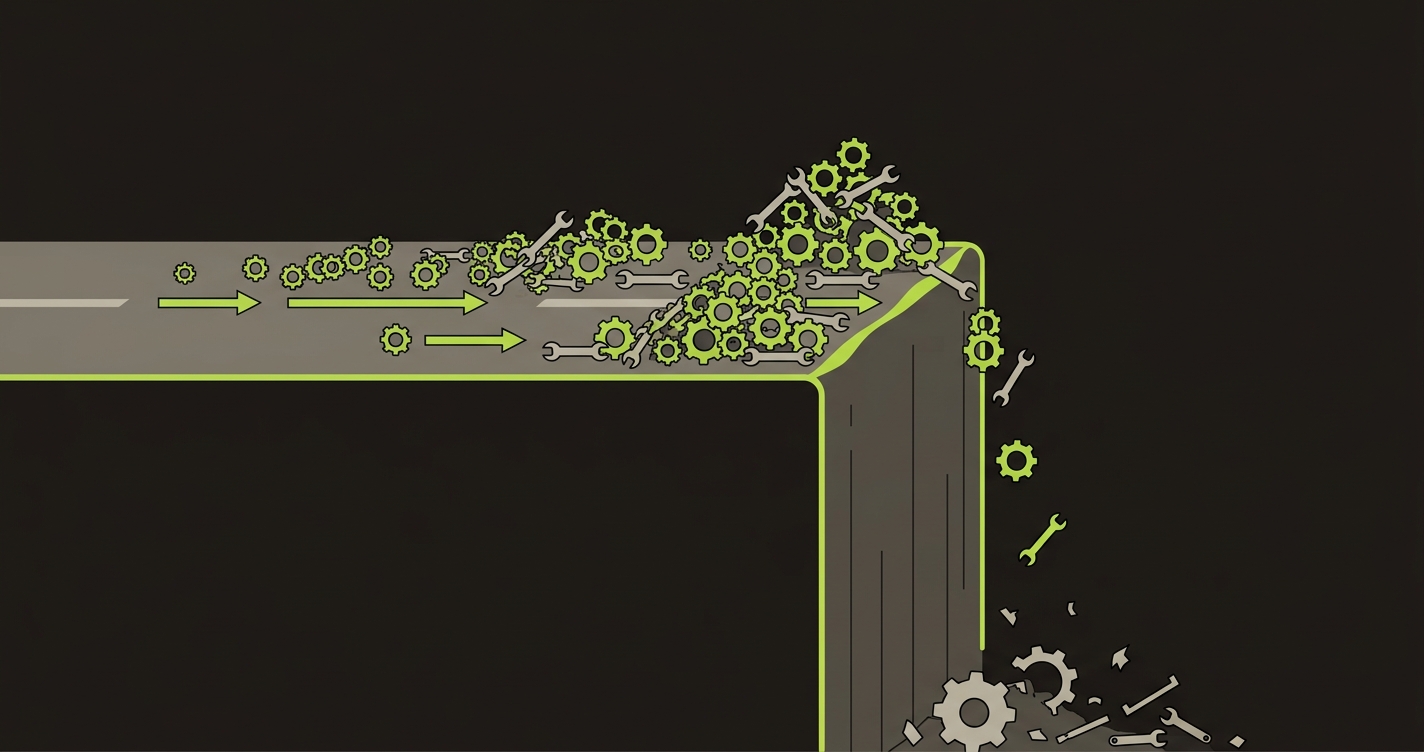

The maintenance cliff. This is the factor most cost models undercount. A traditional Playwright suite is written against specific selectors, specific page structures, specific API contracts. When those change -- and at 50 features per week, they change constantly -- tests break. Not because there's a regression in the application. Because the test was written against an implementation detail that no longer exists.

The Playwright community calls this the "flaky test problem." At scale, it is more accurately described as a maintenance tax. Teams routinely find that 30-50% of engineer time on a mature automation suite goes to fixing broken tests rather than writing new ones or investigating actual failures. For a 3-person automation team, that is 1-1.5 FTE permanently assigned to test upkeep.

Year 2 and Year 3 costs hold steady in headcount but the maintenance burden grows. By Year 3, a team that started with 3 automation engineers often needs 4-5 to maintain the same effective coverage, because the test suite has grown and the maintenance work has grown with it.

Option B-Lite: Playwright Plus GitHub Actions

Worth addressing separately because it is the most common approach for cost-conscious teams: Playwright (free) with GitHub Actions (free tier for public repos, low cost for private).

Personnel cost: 1-2 senior engineers who own the test suite alongside their other responsibilities (common at early-stage startups). Tooling cost: $0-$5,000/year.

This approach works genuinely well for teams of 3-8 developers shipping 5-10 features per week. It is the right starting point for teams that cannot yet justify dedicated automation headcount.

It breaks down at scale for the same reasons as full Option B, but faster: there is no dedicated automation team to manage the maintenance cliff, so the suite starts degrading the moment the engineers maintaining it get pulled onto other priorities. At 50 features per week with AI-generated code touching broad swaths of the codebase, a part-time Playwright suite will be in permanent debt within 6-9 months.

Option C: AI-Native Testing

This is where the comparison changes structurally, not just numerically.

Traditional test automation solves the manual testing scaling problem but creates a new one: test maintenance. The maintenance cliff exists because test automation tools were designed to execute tests written by humans. When the implementation changes, a human has to update the test.

This is the approach we took when building Autonoma. Instead of recording or scripting test cases that will need updating, Autonoma reads the codebase itself. The Planner agent analyzes routes, components, and user flows to derive test cases. The Automator agent executes them. The Maintainer agent keeps tests passing as the code evolves, without human intervention. The codebase is the spec.

This is not a subtle difference. It means there is no initial test authoring phase, no test suite that needs updating when UI selectors change, and no maintenance cliff as the codebase grows. For a team shipping 50 AI-generated features per week, those eliminated costs are structural. Where traditional automation shifts the cost from testers to test engineers, Autonoma removes the cost category altogether.

Year 1 cost breakdown:

- 1-2 QA engineers (oversight, coverage strategy): $120,000-$240,000

- Autonoma licensing: a fraction of a single automation engineer's salary

- No test authoring cost (agents derive tests from codebase analysis)

- No test maintenance cost (Maintainer agent self-heals as code changes)

Year 1 total: approximately $160,000-$320,000 (including licensing)

The team gets full E2E coverage of critical user flows without a 3-6 month ramp. Because Autonoma generates tests from codebase analysis rather than from recordings or scripts, coverage arrives with the first connected codebase rather than at the end of a suite-building project. You can try it yourself and see tests generated from your own repo within minutes. For a detailed comparison of how this approach differs from traditional automation, see our Autonoma vs. QA Wolf comparison.

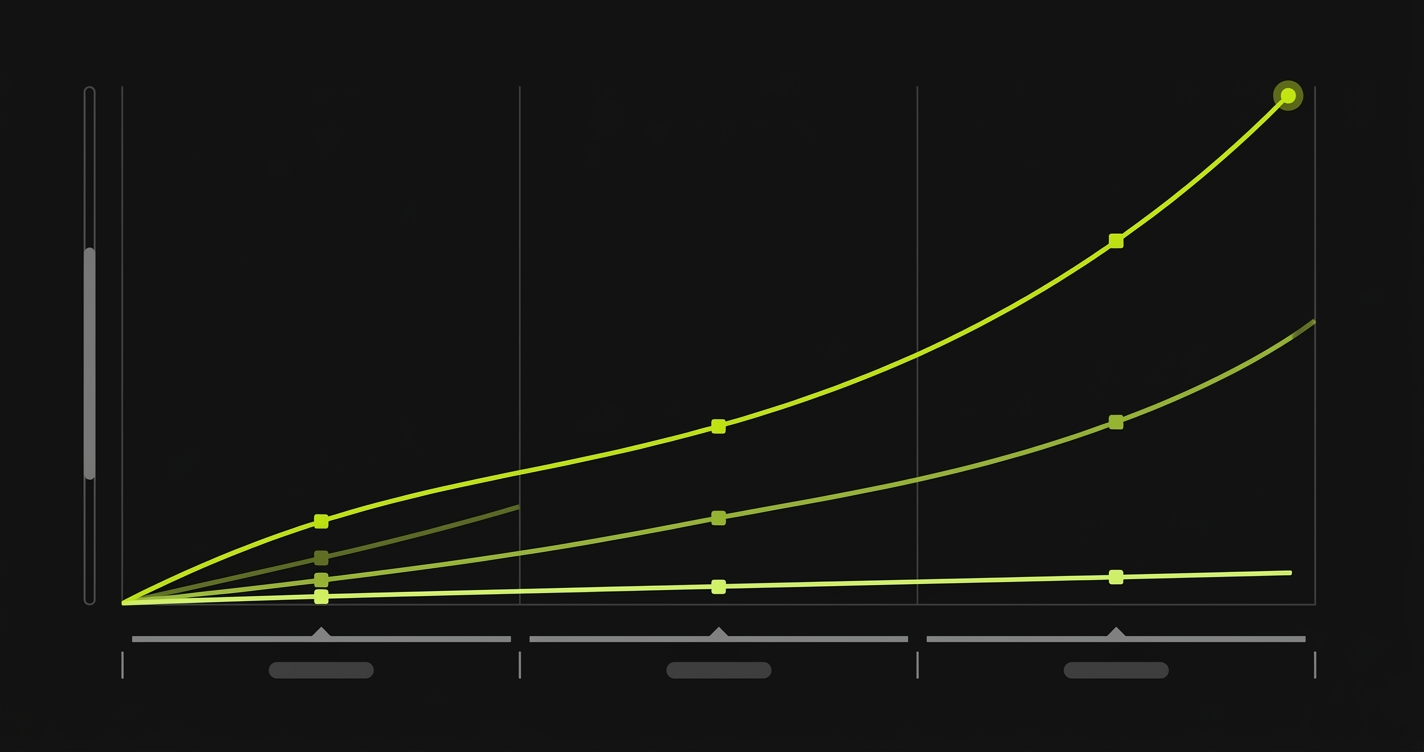

Manual vs Automated Testing: Three-Year TCO Comparison

The single-year snapshot understates the divergence. The real cost comparison emerges over time.

The Traditional Path: Manual QA vs Scripted Automation

| Cost Category | Scale Manual QA | Traditional Automation |

|---|---|---|

| Personnel - Year 1 | $1,200,000 (15 testers) | $360,000 (3 automation engineers) |

| Tooling - Year 1 | $20,000-$40,000 | $50,000-$100,000 |

| Recruiting/Onboarding | $180,000 (12 new hires) | $45,000 (3 new hires) |

| Management Overhead | $40,000-$60,000 | $15,000-$20,000 |

| Year 1 Total | ~$1,440,000-$1,480,000 | ~$470,000-$525,000 |

| Year 2 Total | ~$1,300,000 | ~$480,000-$540,000 (maintenance grows) |

| Year 3 Total | ~$1,350,000 (turnover resurfaces) | ~$540,000-$640,000 (4-5 engineers) |

| 3-Year TCO | ~$4,090,000-$4,130,000 | ~$1,490,000-$1,705,000 |

| QA Bottleneck Risk | High Year 1, then reduced | High Year 1, then reduced |

| Coverage at Scale | Inconsistent (human variance) | Good but degrades without investment |

The Modern Path: DIY Open-Source vs AI-Native

| Cost Category | Playwright + GitHub Actions | AI-Native (Autonoma) |

|---|---|---|

| Personnel - Year 1 | $120,000-$180,000 (1-1.5 engineers) | $120,000-$240,000 (1-2 QA engineers) |

| Tooling - Year 1 | $0-$5,000 | Autonoma licensing |

| Recruiting/Onboarding | $15,000 (1 new hire) | $15,000-$30,000 (1-2 new hires) |

| Management Overhead | $5,000-$10,000 | $5,000-$10,000 |

| Year 1 Total | ~$140,000-$210,000 | ~$140,000-$280,000 + licensing |

| Year 2 Total | ~$130,000-$200,000 (debt growing) | ~$130,000-$250,000 + licensing (flat) |

| Year 3 Total | Suite breaking down or rewrite needed | ~$130,000-$250,000 + licensing (flat) |

| 3-Year TCO | ~$400,000-$610,000 (before rewrite) | ~$400K-$800K (flat, no maintenance growth) |

| QA Bottleneck Risk | Persistent (suite can't keep up) | Minimal (coverage starts immediately) |

| Coverage at Scale | Limited (part-time maintenance ceiling) | Consistent (derived from codebase analysis) |

The Option B-Lite numbers deserve special attention. The raw cost looks lowest, and for a small team shipping slowly, it genuinely is the right answer. The hidden variable is the maintenance cliff: by Year 3, the Playwright suite written by part-time contributors is not an asset. It is a liability requiring either abandonment or a full rewrite, which effectively restarts the clock on Year 1 investment.

What the Numbers Mean for Engineering Leadership

The manual testing vs. automated testing decision is often framed as a quality conversation. It is actually a capital allocation decision with a time dimension.

Option A ($4M+ over 3 years) is not just expensive. It is structurally fragile. A QA team of 15 manual testers is highly sensitive to turnover, burnout, and knowledge concentration. The institutional knowledge that makes manual testing effective lives in testers' heads. When they leave -- and at high-velocity AI-assisted teams, burnout is a real risk -- coverage degrades in ways that are hard to measure until a major regression hits production.

Option B ($1.5M-$1.7M over 3 years) is the rational choice for teams that can staff dedicated automation engineers and have the patience for a 6-12 month ramp. The risk is the maintenance cliff: the team must budget for it explicitly or the suite becomes a burden rather than an asset. For continuous testing at AI-development pace, see our guide on continuous testing in AI development for patterns that keep automation sustainable.

Option C, powered by Autonoma, costs the least over 3 years, starts delivering coverage immediately, and is the only approach where costs do not grow with team or codebase size. Because Autonoma's agents derive tests from the codebase rather than from human-authored scripts, the cost structure stays flat as your product scales. The tradeoff is the organizational adjustment of not owning a handwritten test suite. For teams that care more about coverage outcomes than test ownership, the financial case is decisive.

How to Calculate Your Test Automation ROI

The right answer depends on three variables that differ by company.

Current team size and burn rate. Early-stage teams with limited runway should start with Option B-Lite (Playwright, one engineer, GitHub Actions). The maintenance cliff is 2-3 years away and the team will have grown or changed before it becomes critical.

Rate of AI-driven output increase. Teams that have already seen 5x+ output increases from AI coding tools are already past the point where B-Lite scales. The maintenance cliff arrives faster when the codebase is changing at AI speed. For these teams, the choice is between Option B (invest now, budget for maintenance) or Option C (skip the maintenance problem entirely).

Tolerance for QA bottleneck delay. If the revenue impact of a 3-week feature queue is $500K+ annually, the cost of any testing investment below $500K is justified on timing alone, independent of long-term cost savings. The bottleneck calculus often makes Autonoma the fastest path to positive ROI even if the absolute cost were higher than Option B, because coverage starts on day one rather than after a 6-month ramp.

For teams navigating this decision actively, the honest recommendation is to model it with your own numbers. Substitute your feature count, your average feature ARR value, your current headcount costs, and your tolerance for a ramp period. The framework above holds at any scale; the specific numbers vary.

What does not vary is the underlying dynamic: AI coding tools have permanently changed the ratio of code produced to code tested. Teams that treat QA as a headcount function will find that ratio unsustainable. Teams that treat testing as a system design problem -- one with architectural solutions rather than just headcount solutions -- will find that the cost curve bends in their favor. That is the bet we made when building Autonoma, and the cost model above shows why it pays off.

For a 10-developer SaaS team shipping 50 features per week after AI adoption, scaling manual QA costs approximately $1.44M in Year 1 (15 testers at $80K plus recruiting and overhead). Traditional test automation costs $470K-$525K in Year 1 (3 automation engineers at $120K plus tooling). The gap grows over 3 years: manual QA reaches $4M+ in total cost of ownership, while automation ranges from $1.5M-$1.7M depending on maintenance growth. AI-native testing platforms like Autonoma land at $400K-$800K over 3 years with flat cost curves and zero maintenance growth.

When AI tools increase feature output by 5-10x but QA capacity stays flat, features queue in QA for 2-4 weeks before deployment. For a $5M ARR SaaS with 100 features, each week of delay on queued features represents $150K-$750K in delayed revenue at the conservative end. A 3-week bottleneck on 15 features in queue can cost $450K-$2.25M in annualized revenue timing. This is the business case for addressing the QA bottleneck structurally rather than hoping output slows down.

The maintenance cliff refers to the growing cost of maintaining a traditional automation suite as the codebase evolves. Playwright and Selenium tests are written against specific selectors, page structures, and API contracts. When those change (constantly, in AI-assisted development), tests break even without a real regression. Teams typically find that 30-50% of automation engineer time eventually goes to fixing broken tests rather than catching bugs. By Year 3, a suite that started with 3 engineers often requires 4-5 to maintain the same coverage.

Yes, for small teams. Playwright with GitHub Actions free tier is the right starting point for teams of 3-8 developers shipping 5-10 features per week with 1-2 engineers managing the suite part-time. It breaks down at scale because there is no dedicated team to manage the maintenance cliff, so the suite starts degrading as AI-generated code changes selectors and page structures. Most teams find the suite requires a full rewrite or abandonment by Year 3 at high-velocity output rates.

Traditional automation tools (Playwright, Selenium, Cypress) execute tests that humans write and maintain. When implementations change, humans update the tests. AI-native testing platforms like Autonoma read the codebase directly: the Planner agent analyzes routes, components, and user flows to derive test cases, the Automator agent executes them, and the Maintainer agent self-heals tests as code evolves. This eliminates the initial authoring phase (tests are generated from codebase analysis) and the ongoing maintenance burden (the Maintainer agent handles updates automatically).

Based on the model in this article (10 developers, 50 features per week post-AI adoption): Option A (scale manual QA) reaches $4M+ over 3 years. Option B (traditional automation with 3 dedicated engineers) reaches $1.5M-$1.7M, growing as the maintenance burden increases. Option B-Lite (Playwright plus GitHub Actions with part-time coverage) runs $400K-$610K before the suite requires a rewrite. Option C (AI-native testing with Autonoma) runs $400K-$800K over 3 years with costs that stay flat because the Maintainer agent self-heals tests as code evolves, eliminating the maintenance cliff entirely.

Start with the revenue delay cost: multiply your average feature ARR value by the number of features queued in QA by the weeks of delay. For a $5M ARR SaaS shipping 50 AI-generated features per week with a 3-week QA queue, the annualized delay cost is $450K-$2.25M. Any testing investment that eliminates that queue delivers ROI on timing alone. Then compare 3-year TCO: manual QA ($4M+), traditional automation ($1.5M-$1.7M), or AI-native testing ($400K-$800K). The ROI calculation changes when AI coding tools are in play because the maintenance cliff in traditional automation arrives faster. The codebase changes too quickly for human-maintained test suites to keep pace.