Continuous testing pipelines were architected for human-paced development: nightly test runs, manually curated suites, feedback measured in hours. When AI coding tools push teams from 5 PRs/day to 50, those pipelines collapse. Test queues never clear, feedback arrives too late, flaky tests multiply, and test selection logic breaks down. This guide covers the 5 requirements for continuous testing at AI velocity, includes a complete GitHub Actions + Playwright setup, and compares 6 tools across the dimensions that actually matter.

If your test suite runs 45 minutes and your team merges 40 PRs a day, you need roughly 30 hours of CI capacity per day just to keep the queue from growing. Most teams have a fraction of that provisioned.
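It helps to spell out the arithmetic behind that estimate, because it tells you how many runners you need before any optimization. The sketch below makes two simplifying assumptions. First, each PR triggers exactly one full-suite run, with no retries and no re-runs after follow-up commits. Second, the work spreads evenly over the hours your runners are busy. The function names are illustrative only.

```typescript
// Hours of CI runner time needed per day if every PR runs the full suite once.
function requiredCiHoursPerDay(suiteMinutes: number, prsPerDay: number): number {
  return (suiteMinutes * prsPerDay) / 60;
}

// Smallest number of continuously busy runners that keeps the queue from growing.
// activeHours models when PRs actually arrive: 24 for round-the-clock work,
// closer to 8 if most PRs land during one time zone's working day.
function minimumRunners(suiteMinutes: number, prsPerDay: number, activeHours = 24): number {
  return Math.ceil(requiredCiHoursPerDay(suiteMinutes, prsPerDay) / activeHours);
}

const dailyHours = requiredCiHoursPerDay(45, 40); // 30
const runnersAroundTheClock = minimumRunners(45, 40); // 2
const runnersWorkingHours = minimumRunners(45, 40, 8); // 4
```

Even the optimistic round-the-clock figure needs two runners doing nothing but this suite. If PRs cluster into an 8-hour window, the floor doubles to four, and that is before retries or queue spikes.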

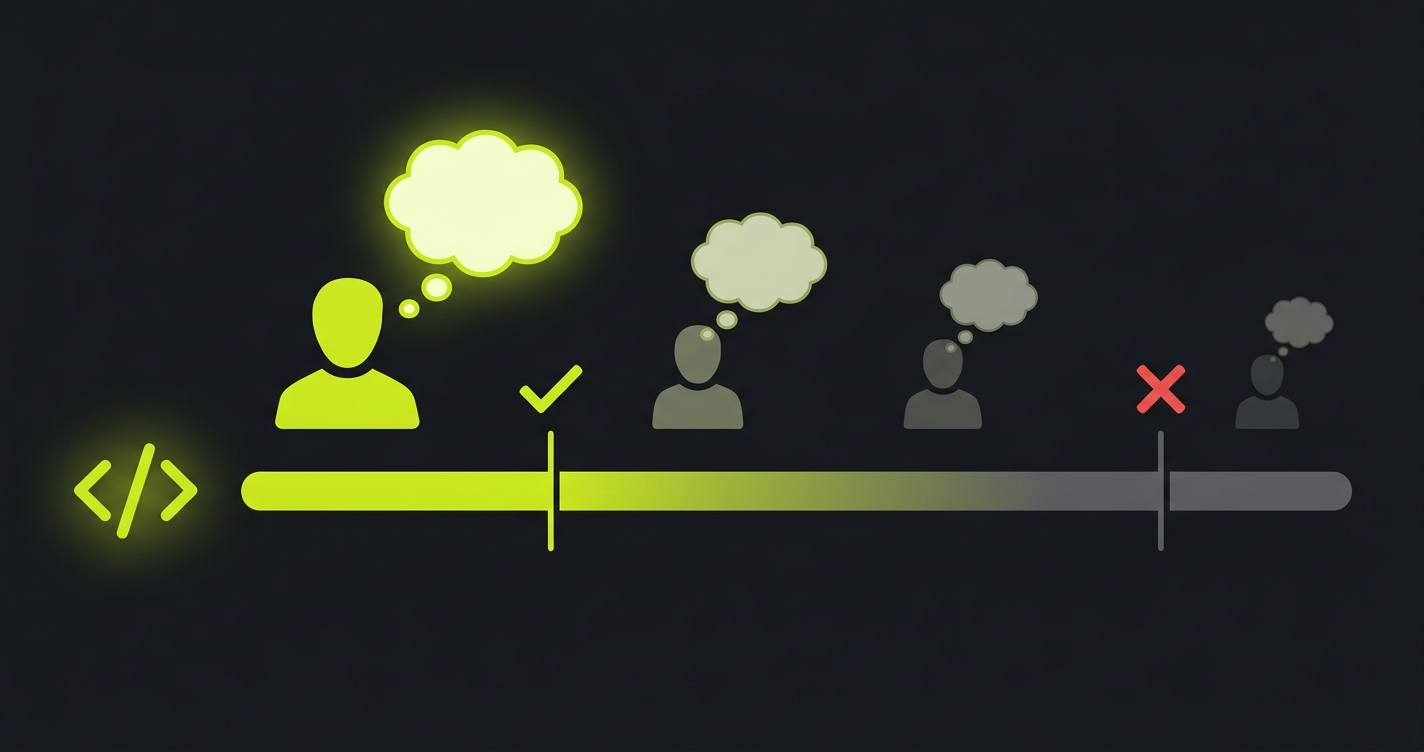

That capacity gap is the infrastructure problem platform engineers are quietly hitting in 2026. AI coding assistants pushed the average team from 8 to 35 pull requests per day, and the number keeps climbing. The result is not just slow feedback; it is a queue that never clears, which means developers stop waiting for CI before merging, which means continuous testing stops being continuous.

The fix is not more runners. It is rethinking which tests run when, what gets parallelized, and where AI-generated code needs different verification treatment than human-written code. That rethinking is exactly what led us to build Autonoma: a system that derives tests directly from your codebase and keeps them passing as code changes, without anyone manually writing or maintaining test cases.

Why Traditional Continuous Testing Breaks at AI Velocity

The original assumptions behind continuous testing made sense for the environment they were designed for. A team of ten engineers might open five to ten pull requests on a busy day. A nightly test run catching issues the next morning was acceptable. An 8-hour feedback loop was a bit slow but workable.

Those assumptions are wrong now. A single mid-sized engineering team using Cursor, Copilot, or Claude Code regularly ships 30 to 50 pull requests per day. Each AI-assisted PR touches more files than a manually written one, because the AI doesn't limit its changes to the minimum necessary surface area. And because the developer often doesn't deeply understand every line the AI wrote, they're less likely to catch a subtle regression in code review.

The result is a set of compounding failures. Test queues back up because your runner capacity was provisioned for the old PR volume. Feedback arrives too late, often after three more PRs have already landed on top of the broken commit. Developers can't tell which PR introduced a failure. Flaky tests multiply because AI-generated code tends to introduce more timing and state assumptions than carefully handwritten code. And test selection logic that worked fine when PRs were small becomes inaccurate when each PR touches a third of the codebase.

This isn't a problem you can solve by buying more GitHub Actions minutes. You need a different architecture. (Our guide to continuous testing fundamentals covers the baseline, including why test maintenance is the hidden cost. This guide picks up where that one leaves off: pipeline architecture at AI-development scale.)

5 Requirements for a Continuous Testing Pipeline at AI Velocity

Here's the framework we use when thinking about whether a continuous testing setup can handle AI development velocity. These five requirements are not equally important, and the order matters.

Intelligent test selection is the foundation. Running your full test suite on every commit is not continuous testing at AI velocity; it's a slow burn that defeats the purpose. Intelligent selection means understanding which tests are actually relevant to a given change: which modules were touched, which user flows depend on them, and which tests have historically caught bugs in those areas. Without it, every other optimization is fighting the wrong problem, because you end up parallelizing a suite that shouldn't be running in full.
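Here is a minimal sketch of the simplest, file-path end of this spectrum. It assumes a hypothetical TEST_MAP that maps source directories to the E2E specs that exercise them. It does not do the dependency or history analysis described above. It only shows the shape of the decision, including the conservative fallback to the full suite when a change touches code the map doesn't know about.

```typescript
// Hypothetical mapping from source directories to the E2E specs that cover them.
// A real map would come from your repo layout, import graph, or failure history.
const TEST_MAP: Record<string, string[]> = {
  "src/checkout/": ["tests/e2e/checkout.spec.ts", "tests/e2e/cart.spec.ts"],
  "src/auth/": ["tests/e2e/login.spec.ts"],
  "src/search/": ["tests/e2e/search.spec.ts"],
};

// Every spec known to the map, deduplicated and sorted.
function allSpecs(): string[] {
  const specs = new Set<string>();
  for (const list of Object.values(TEST_MAP)) {
    list.forEach((spec) => specs.add(spec));
  }
  return Array.from(specs).sort();
}

// Returns the specs relevant to a set of changed files. A file outside every
// mapped directory (shared utilities, config, lockfiles) triggers the full
// suite, because silently skipping a dependent test is worse than a slow run.
function selectSpecs(changedFiles: string[]): string[] {
  const selected = new Set<string>();
  for (const file of changedFiles) {
    const prefix = Object.keys(TEST_MAP).find((dir) => file.startsWith(dir));
    if (prefix === undefined) {
      return allSpecs();
    }
    TEST_MAP[prefix].forEach((spec) => selected.add(spec));
  }
  return Array.from(selected).sort();
}
```

You can pass the resulting list to Playwright as file filters, for example npx playwright test tests/e2e/login.spec.ts. Semantic selection replaces the hand-maintained map with one derived from imports, routes, and failure history.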

Parallel execution at scale is what you layer on top of intelligent selection. Once you're running only the relevant tests, you need them to complete fast. That means splitting across multiple runners, ideally dynamically, with the test orchestrator distributing work based on historical run times to avoid a slow runner becoming the bottleneck. The target for AI-velocity teams is under 10 minutes for the relevant test subset on any given PR.
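To distribute work by historical run time, one common approach is greedy "longest processing time first" scheduling. The sketch below assumes you already have per-spec durations from previous runs; the TestTiming shape and the timings are hypothetical. Specs are handled slowest first, and each one goes to whichever shard currently has the least total work.

```typescript
interface TestTiming {
  file: string;
  seconds: number; // historical average duration, e.g. parsed from past CI reports
}

// Greedy longest-processing-time-first partitioning: sort specs by duration
// (slowest first) and always assign the next spec to the lightest shard.
function balanceShards(timings: TestTiming[], shardCount: number): string[][] {
  const shards = Array.from({ length: shardCount }, () => ({ files: [] as string[], total: 0 }));
  const slowestFirst = [...timings].sort((a, b) => b.seconds - a.seconds);
  for (const t of slowestFirst) {
    let lightest = shards[0];
    for (const shard of shards) {
      if (shard.total < lightest.total) lightest = shard;
    }
    lightest.files.push(t.file);
    lightest.total += t.seconds;
  }
  return shards.map((s) => s.files);
}

// Wall-clock time of a plan is the total duration of its slowest shard.
function makespan(timings: TestTiming[], plan: string[][]): number {
  const seconds = new Map<string, number>();
  timings.forEach((t) => seconds.set(t.file, t.seconds));
  const totals = plan.map((files) => files.reduce((sum, f) => sum + (seconds.get(f) ?? 0), 0));
  return Math.max(...totals);
}

const history: TestTiming[] = [
  { file: "checkout.spec.ts", seconds: 300 },
  { file: "search.spec.ts", seconds: 240 },
  { file: "profile.spec.ts", seconds: 180 },
  { file: "login.spec.ts", seconds: 120 },
  { file: "cart.spec.ts", seconds: 60 },
  { file: "footer.spec.ts", seconds: 60 },
];

// [["checkout.spec.ts", "login.spec.ts", "cart.spec.ts"],
//  ["search.spec.ts", "profile.spec.ts", "footer.spec.ts"]], 480 seconds each
const balanced = balanceShards(history, 2);
```

A naive split that puts the first three specs on one shard would take 720 seconds. The balanced plan finishes in 480, which is the minimum possible for 960 seconds of work on two shards.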

Self-healing tests are where most teams underestimate the problem. AI-generated code produces UI changes at a pace that breaks selector-based tests constantly. A test that uses data-testid="checkout-button" breaks the moment the AI decides to rename that attribute. At 50 PRs/day, if 10% of PRs trigger a broken selector, you have five broken tests to triage every single day. That's not sustainable. Self-healing tests need to understand intent, not just selectors, so they adapt when the implementation changes. This is one of the problems we focused on early at Autonoma: our agents interact with your application the way a real user would, so they don't break when selectors change.
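A toy model makes the distinction concrete. The UiElement type below is a hypothetical stand-in for a rendered page, not a real DOM, and neither function is how Playwright or any self-healing tool is implemented. It only shows why a lookup keyed on what the user sees (role plus accessible name) survives a refactor that renames data-testid, while a lookup keyed on the attribute does not. In Playwright, the intent-level version corresponds to page.getByRole('button', { name: 'Checkout' }).

```typescript
// Hypothetical, simplified model of an element on a rendered page.
interface UiElement {
  role: string; // accessible role, e.g. "button"
  name: string; // accessible name, usually the visible label
  attrs: Record<string, string>;
}

// Implementation-level lookup: tied to an attribute the AI may rename.
function findByTestId(page: UiElement[], testId: string): UiElement | undefined {
  return page.find((el) => el.attrs["data-testid"] === testId);
}

// Intent-level lookup: "the button a user would call Checkout".
function findByRoleAndName(page: UiElement[], role: string, name: RegExp): UiElement | undefined {
  return page.find((el) => el.role === role && name.test(el.name));
}

const beforeRefactor: UiElement[] = [
  { role: "button", name: "Checkout", attrs: { "data-testid": "checkout-button" } },
];
// An AI-generated change renames the test id but keeps the user-visible label.
const afterRefactor: UiElement[] = [
  { role: "button", name: "Checkout", attrs: { "data-testid": "btn-checkout-v2" } },
];

const brokenLookup = findByTestId(afterRefactor, "checkout-button"); // undefined
const intentLookup = findByRoleAndName(afterRefactor, "button", /^checkout$/i); // still found
```

Real self-healing has to go further, since the label itself may also change, but the principle is the same: anchor on intent, not implementation.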

Instant feedback is the developer experience layer. A 45-minute feedback loop means the developer has already started three other things by the time the failure report arrives. They've lost context on the original change. The investigation takes twice as long. For continuous testing to actually change developer behavior, feedback needs to arrive while the context is fresh, ideally within 10 minutes of the push. This requires the combination of intelligent selection, parallel execution, and a test infrastructure that starts immediately rather than queuing.

Automatic test generation for new code paths is the last requirement and the hardest. Teams using AI assistants are generating new user flows constantly. If your test suite only covers flows that existed six months ago, you're not doing continuous testing; you're doing regression testing on legacy behavior. New code needs new tests, and a team shipping 50 PRs/day cannot also write test cases for every new flow it introduces. This is where Autonoma's Planner agent reads your codebase, identifies routes and components, and derives test cases automatically. No one writes a test. The coverage grows with your code.
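The source does not describe how the Planner works internally, so the sketch below is not how Autonoma does it. It shows a much cruder first step toward the same goal. Given a route list (hypothetical here; in practice it would come from your router config) and the text of your existing specs, it reports routes that no spec ever navigates to. Those are the new flows nobody has written a test for yet.

```typescript
// Returns routes that never appear as a quoted string literal in any spec,
// e.g. page.goto('/checkout'). Crude by design: dynamic routes such as
// /products/[id] and URLs built at runtime are not matched.
function findUncoveredRoutes(routes: string[], specSources: string[]): string[] {
  return routes.filter(
    (route) => !specSources.some((src) => src.includes(`'${route}'`) || src.includes(`"${route}"`)),
  );
}

const routes = ["/", "/checkout", "/settings/billing"];
const specs = [
  "await page.goto('/');",
  'await page.goto("/checkout");',
];
const gaps = findUncoveredRoutes(routes, specs); // ["/settings/billing"]
```

A gap report like this tells you where coverage is missing. Generating the test itself is the part that still needs a human or a generation tool.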

For context on how these requirements relate to test placement earlier in the development cycle, the shift-left testing article covers why AI code generation breaks the traditional shift-left model.

CI/CD Testing Foundation: GitHub Actions + Playwright Setup

Before evaluating commercial tools, it's worth knowing exactly what you can build with open source. A well-configured GitHub Actions + Playwright setup covers parallel execution and fast feedback well, and it handles basic test selection through file-path rules. Self-healing is out of reach beyond writing more resilient locators, and requirement five (automatic test generation) remains manual.

Here's a complete working configuration for a standard web application. Treat it as a starting point: you will need to adjust the port, start command, and shard count for your project.

```yaml
# .github/workflows/continuous-testing.yml
name: Continuous Testing

on:
  push:
    branches: [main, develop]
  pull_request:
    branches: [main, develop]

concurrency:
  group: ${{ github.workflow }}-${{ github.ref }}
  # Cancel stale runs on pull requests; let every push to main/develop finish
  cancel-in-progress: ${{ github.event_name == 'pull_request' }}

jobs:
  # Step 1: Determine which tests are relevant to this change
  test-selection:
    runs-on: ubuntu-latest
    outputs:
      test-shards: ${{ steps.select.outputs.shards }}
      affected-paths: ${{ steps.changed.outputs.all_changed_files }}
    steps:
      - uses: actions/checkout@v4
        with:
          fetch-depth: 0

      - name: Detect changed files
        id: changed
        # Consider pinning third-party actions to a full commit SHA
        uses: tj-actions/changed-files@v44
        with:
          # Files that cannot affect E2E behavior; adjust to your project structure
          files_ignore: |
            **/*.md
            **/*.txt
            docs/**

      - name: Select relevant test shards
        id: select
        env:
          CODE_CHANGED: ${{ steps.changed.outputs.any_changed }}
        run: |
          # Run all four shards if anything other than docs changed;
          # skip E2E tests entirely for docs-only changes
          if [ "$CODE_CHANGED" = "true" ]; then
            echo 'shards=[1,2,3,4]' >> "$GITHUB_OUTPUT"
          else
            echo 'shards=[]' >> "$GITHUB_OUTPUT"
          fi

  # Step 2: Run tests in parallel shards
  e2e-tests:
    needs: test-selection
    if: needs.test-selection.outputs.test-shards != '[]'
    runs-on: ubuntu-latest
    strategy:
      fail-fast: false # Don't cancel other shards if one fails
      matrix:
        shard: ${{ fromJson(needs.test-selection.outputs.test-shards) }}
    steps:
      - uses: actions/checkout@v4

      - name: Setup Node.js
        uses: actions/setup-node@v4
        with:
          node-version: '20'
          cache: 'npm'

      - name: Install dependencies
        run: npm ci

      # Only install Chromium for speed; add firefox/webkit if needed
      - name: Install Playwright browsers
        run: npx playwright install --with-deps chromium

      - name: Start application
        run: |
          npm run build
          npm run start &
          npx wait-on http://localhost:3000 --timeout 60000
        env:
          NODE_ENV: test

      - name: Run Playwright tests (shard ${{ matrix.shard }}/4)
        run: |
          npx playwright test \
            --shard=${{ matrix.shard }}/4 \
            --reporter=blob
        env:
          CI: true
          BASE_URL: http://localhost:3000

      - name: Upload test results
        if: always()
        uses: actions/upload-artifact@v4
        with:
          name: playwright-results-${{ matrix.shard }}
          path: blob-report/
          retention-days: 7

  # Step 3: Merge reports and provide unified feedback
  merge-reports:
    needs: e2e-tests
    # Run even if some shards failed, but not when E2E was skipped or the run was cancelled
    if: ${{ !cancelled() && needs.e2e-tests.result != 'skipped' }}
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Setup Node.js
        uses: actions/setup-node@v4
        with:
          node-version: '20'
          cache: 'npm'

      - name: Install dependencies
        run: npm ci

      - name: Download all blob reports
        uses: actions/download-artifact@v4
        with:
          path: all-blob-reports
          pattern: playwright-results-*
          merge-multiple: true # put every shard's blob into one directory

      - name: Merge into HTML report
        run: npx playwright merge-reports --reporter=html ./all-blob-reports

      - name: Upload merged report
        uses: actions/upload-artifact@v4
        with:
          name: playwright-report
          path: playwright-report/
          retention-days: 30
```

And the matching Playwright configuration:

```typescript
// playwright.config.ts
import { defineConfig, devices } from '@playwright/test';

export default defineConfig({
  testDir: './tests/e2e',

  // Fail the CI run if a stray test.only was committed
  forbidOnly: !!process.env.CI,

  // Retry flaky tests once before marking as failed
  retries: process.env.CI ? 1 : 0,

  // Parallel workers per shard
  workers: process.env.CI ? 2 : undefined,

  reporter: process.env.CI ? 'blob' : 'html',

  use: {
    baseURL: process.env.BASE_URL || 'http://localhost:3000',
    // Collect a trace when a failed test is retried, for debugging
    trace: 'on-first-retry',
    // Screenshot on failure
    screenshot: 'only-on-failure',
    // Record video when a failed test is retried
    video: 'on-first-retry',
    // Reasonable timeouts for CI
    actionTimeout: 15000,
    navigationTimeout: 30000,
  },

  projects: [
    {
      name: 'chromium',
      use: { ...devices['Desktop Chrome'] },
    },
    // Add these back when you need cross-browser coverage:
    // { name: 'firefox', use: { ...devices['Desktop Firefox'] } },
    // { name: 'webkit', use: { ...devices['Desktop Safari'] } },
  ],

  // Global setup/teardown for database seeding
  globalSetup: './tests/global-setup.ts',
  globalTeardown: './tests/global-teardown.ts',

  // 1-minute timeout per test, so one hung test can't eat the whole feedback budget
  timeout: 60000,

  // 30-minute timeout for the full suite
  globalTimeout: 1800000,
});
```

A few things are worth noting about this setup. The concurrency block at the top cancels in-progress runs when a new commit arrives on the same pull request branch. This is essential for keeping the queue clear: if you push twice in 30 seconds, the first run is killed and the second starts fresh. Without it, the queue backs up with stale runs.

The sharding strategy here is simple: four parallel runners. For larger suites you can increase this, but change the shard list emitted by the test-selection step and the /4 denominator in the Playwright command together, or some shards will be skipped or fail. The fail-fast: false setting ensures that a failure in shard 1 doesn't cancel shards 2, 3, and 4, which you need in order to understand the full scope of a regression. The blob reporter plus merge step gives you a single unified report instead of four separate ones.

This setup is solid for getting started. But it has a hard ceiling: test selection is limited to file-path heuristics, self-healing is nonexistent, and every new flow requires someone to manually write a test. When you hit that ceiling, the upgrade path we recommend is connecting your codebase to Autonoma and letting the Planner derive your test cases automatically. You keep your GitHub Actions workflow; Autonoma handles the test intelligence layer on top.

For deeper coverage of what tests to put in this pipeline, the automated regression testing guide has detailed guidance on test selection strategy and suite composition.

Tool Comparison: What Handles AI Development Velocity

The open-source setup above handles parallel execution and fast feedback well, but it has a ceiling. Intelligent test selection is limited to file-path heuristics rather than semantic understanding of which tests are truly relevant. Self-healing is nonexistent: a broken selector is a broken test. And test generation is entirely manual.

Here's how the main options compare across the five requirements:

| Tool | Test Selection | Parallel Scale | Self-Healing | Feedback Time | Test Generation | Setup Complexity |
|---|---|---|---|---|---|---|
| GitHub Actions + Playwright | File-path heuristics | Up to ~20 shards | None (manual fixes) | 15-30 min (large suites) | Manual only | Low (YAML config) |
| GitLab CI + Selenium | File-path heuristics | Up to ~20 parallel | None (manual fixes) | 20-45 min (large suites) | Manual only | Medium (GitLab config) |
| Momentic | AI-driven selection | Cloud-managed | Yes (AI-assisted) | 5-15 min | AI-assisted (with human review) | Medium (platform onboarding) |
| Mabl | Impact analysis | Cloud-managed | Yes (auto-healing) | 5-20 min | Manual (low-code) | Medium (browser extension setup) |
| LambdaTest SmartUI | Visual diff only | High (cloud grid) | Visual only | 5-15 min | None (bring your own tests) | Medium (SDK integration) |
| Autonoma | Code-derived (semantic) | Cloud-managed | Yes (self-healing agents) | Under 10 min | Automatic (from codebase) | Low (connect codebase) |

A few things worth unpacking in that table.

Momentic does the CI integration story well, but it's fundamentally a platform where your team authors tests with AI assistance. There's still a human in the loop deciding which flows to cover. At 50 PRs/day, that human is a bottleneck.

Mabl has one of the strongest auto-healing implementations in the market and its CI/CD story is solid. The constraint is test creation: tests are built through a low-code UI, which means someone still has to manually identify and create each test case. The self-healing is excellent once the tests exist.

LambdaTest's SmartUI is primarily an infrastructure play with visual diffing layered on. It's great if you need cross-browser parallel execution at scale, but it doesn't solve test selection intelligence or test generation. Bring your own tests, run them faster.

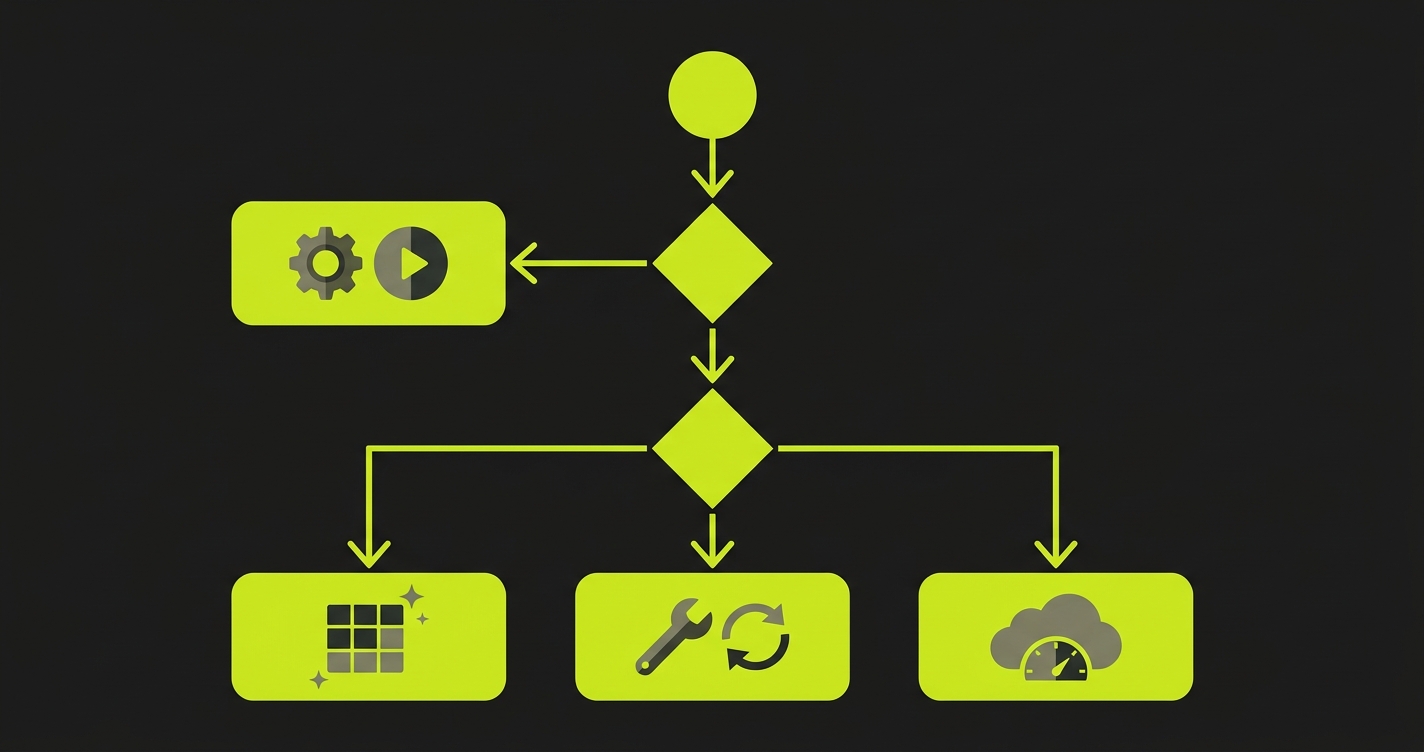

Autonoma takes a fundamentally different approach: instead of a human deciding which flows to test, three specialized agents handle the entire lifecycle. The Planner reads your codebase and derives test cases from your actual routes and components. The Automator executes those tests against your running application. And the Maintainer keeps them passing as your code evolves. New flows introduced by AI-generated code get covered automatically, without anyone writing a test case. That closed loop is the difference between a tool that helps you test faster and a system that actually keeps up with AI development velocity.

For how these approaches fit into the broader cost comparison between manual and automated testing, our manual vs automated testing article covers the ROI math in detail.

The Feedback Loop Problem in Test Automation CI/CD

There's a metric that doesn't appear in tool comparison tables but matters more than most: time-to-context-loss. This is how long after a push a developer still has the mental context to fix a failure quickly.

In our experience, the answer is roughly 15 minutes. After that, the developer has moved on: they're in another file, another PR, another task. When the failure notification arrives 45 minutes later, diagnosing it requires reconstructing context that's been lost.

Traditional continuous testing optimized for catching failures. AI-velocity teams need to optimize for feedback arriving while context is still live.

This is why the under-10-minute target in this guide is not an arbitrary benchmark. It leaves a margin inside that roughly 15-minute window, so feedback is actionable rather than disruptive. Under 10 minutes, the developer reads the failure, fixes it in the same working session, and moves on. Over 10 minutes, the failure increasingly becomes a context switch and an investigation.

The architecture implications are significant. Getting under 10 minutes requires the first four requirements working together. Intelligent selection reduces the test count, parallel execution reduces the wall time, and self-healing eliminates false failures that drain time. Instant feedback, in turn, depends on infrastructure that starts immediately rather than queuing. Achieving any one of these without the others gets you part of the way but not all the way. This is why we designed Autonoma to handle all five layers in a single system rather than asking teams to stitch together separate tools for selection, execution, healing, and generation.
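A rough model shows why no single layer is enough. The sketch treats feedback time as three parts: queue wait, fixed per-job setup (checkout, install, build), and the selected tests' serial runtime divided evenly across shards. Perfect balancing and the specific minute values are assumptions for illustration.

```typescript
interface PipelineEstimate {
  queueMinutes: number; // waiting for a free runner
  setupMinutes: number; // checkout, npm ci, browser install, app build and start
  selectedTestMinutes: number; // serial runtime of the tests selected for this change
  shards: number; // parallel runners, assumed perfectly balanced
}

function feedbackMinutes(p: PipelineEstimate): number {
  return p.queueMinutes + p.setupMinutes + p.selectedTestMinutes / p.shards;
}

const TARGET_MINUTES = 10;

// Full 45-minute suite on one runner, behind a 5-minute queue: 54 minutes.
const baseline = feedbackMinutes({ queueMinutes: 5, setupMinutes: 4, selectedTestMinutes: 45, shards: 1 });
// Selection cuts the work to 15 minutes and 4 shards split it, but the queue remains: 12.75.
const stillQueued = feedbackMinutes({ queueMinutes: 5, setupMinutes: 4, selectedTestMinutes: 15, shards: 4 });
// Same, with runners that start immediately: 7.75, under the target.
const allLayers = feedbackMinutes({ queueMinutes: 0, setupMinutes: 4, selectedTestMinutes: 15, shards: 4 });
```

Self-healing doesn't appear in the formula directly. However, every false failure forces a re-run, which adds roughly another full cycle to the developer's wait.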

What to Build vs. What to Buy

The GitHub Actions + Playwright configuration above is genuinely good for teams with a stable, well-understood codebase and a dedicated platform engineer who can maintain the test infrastructure. The open-source path costs mostly engineering time, and it gives you complete control over the pipeline.

Where it breaks down is at the intersection of two AI-era problems: test volume and test freshness. When your codebase is changing fast because of AI-assisted development, the test suite needs to grow at the same pace. Manually adding test cases for every new flow isn't sustainable when you're shipping 50 PRs/day. And when tests break because AI-generated code changed a selector or a component, the manual triage cycle is a constant tax.

The build-vs-buy inflection point is roughly here: if your team is spending more than one full engineer-day per week on test maintenance, you're past the point where the open-source path is economically rational. That threshold is reached faster on AI-assisted teams than on traditional ones.
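A back-of-the-envelope estimate is enough to check which side of that line you're on. The sketch assumes each broken PR breaks exactly one test and that triage plus fix takes a fixed number of minutes. The 10% break rate echoes the selector example earlier in this guide; the 20-minute fix time is a hypothetical placeholder for your own data.

```typescript
// Weekly hours spent triaging and fixing broken tests.
function weeklyMaintenanceHours(
  prsPerDay: number,
  brokenPrRate: number, // fraction of PRs that break a test (assumed: one test each)
  minutesPerFix: number, // average triage + fix time per broken test
  workDaysPerWeek = 5,
): number {
  return (prsPerDay * brokenPrRate * minutesPerFix * workDaysPerWeek) / 60;
}

const ENGINEER_DAY_HOURS = 8;

const humanPace = weeklyMaintenanceHours(8, 0.1, 20); // about 1.3 hours per week
const aiPace = weeklyMaintenanceHours(50, 0.1, 20); // about 8.3 hours per week

const pastThreshold = aiPace > ENGINEER_DAY_HOURS; // true
```

The same inputs that are harmless at human pace cross the one-engineer-day line at AI pace.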

Commercial tools offer different tradeoffs along the spectrum. Mabl's auto-healing is worth the cost for teams with a large existing test suite that keeps breaking. LambdaTest is worth it for teams whose primary bottleneck is parallel execution across browsers. Momentic is a good fit for teams that want AI assistance with test authoring but are comfortable keeping humans in the loop on test design.

Autonoma is designed for teams where the bottleneck is coverage itself: flows that aren't tested because no one had time to write the test cases. The Planner, Automator, and Maintainer agents described above take over test generation, execution, and maintenance. You connect your codebase, and the pipeline starts generating and running tests within minutes. No test authoring, no selector maintenance, no manual triage. Check current pricing to see where it fits your team size.

Setting Up Your CI/CD Testing Pipeline

Here is the order we recommend for adding continuous testing to an existing CI/CD pipeline, starting with the changes that pay off fastest at the lowest risk. Don't try to solve all five requirements at once.

Start with parallel execution. It is the lowest-risk change you can make to an existing pipeline, because it requires no decisions about which tests to skip. Use the GitHub Actions configuration above (or its equivalent for your CI provider) to split your existing test suite across four to eight runners. This alone often cuts feedback time by 60 to 70%.

Then add intelligent cancellation. The concurrency configuration in the YAML above ensures stale runs are killed when new commits arrive. Combine this with a simple file-path based test selection rule (skip E2E tests entirely if only documentation changed) to reduce unnecessary runs.
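The docs-only rule is easy to get subtly wrong. It must skip tests only when every changed file is documentation, not when any one of them is. Here is a minimal sketch of the rule as a testable function; the patterns are hypothetical and should be adapted to your repository.

```typescript
// Files that cannot change E2E behavior. Adjust to your repository layout.
const DOCS_PATTERNS: RegExp[] = [/\.md$/, /\.txt$/, /^docs\//];

function isDocsFile(file: string): boolean {
  return DOCS_PATTERNS.some((pattern) => pattern.test(file));
}

// Run E2E tests unless EVERY changed file is documentation. A PR that touches
// both README.md and src/app.ts must still run the suite.
function shouldRunE2E(changedFiles: string[]): boolean {
  return changedFiles.some((file) => !isDocsFile(file));
}
```

With these patterns, shouldRunE2E(["README.md", "docs/setup.md"]) returns false, while shouldRunE2E(["README.md", "src/app.ts"]) returns true.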

Reduce selector breakage next. Playwright has no self-healing, but you can get part of the benefit. Identify your most frequently broken selectors and migrate them to user-facing locators (getByRole, getByText, getByLabel) instead of CSS selectors or data-testid attributes. These locators target what the user sees rather than implementation details, so they are more resilient to DOM changes that don't alter visible behavior.

Only after these three are in place should you evaluate whether you need a commercial tool. The honest assessment is this: if you've done the above and feedback still takes over 15 minutes, or if fixing broken tests costs more than about one engineer-day per week, you're past the threshold where commercial tooling pays for itself. For most AI-velocity teams, we've found that connecting Autonoma on top of your existing CI pipeline is the fastest path to closing the gap. You keep GitHub Actions for orchestration; Autonoma handles test generation, execution, and maintenance as a layer on top.

Frequently Asked Questions About Continuous Testing

What is continuous testing in CI/CD?

Continuous testing in CI/CD means running automated tests on every code change as part of the deployment pipeline. Rather than running manually or on a nightly schedule, tests execute on every pull request and every commit to main branches, providing immediate feedback about whether a change introduced a regression. A well-configured continuous testing pipeline combines test selection (which tests to run), parallel execution (how fast to run them), and failure reporting (how to surface results to developers quickly).

How do you set up continuous testing with GitHub Actions?

Set up GitHub Actions continuous testing by creating a workflow file that triggers on pull_request and push events. The key elements are: a matrix strategy to split tests across parallel runners (sharding), a concurrency group to cancel stale runs when new commits arrive, an artifact upload step to preserve test results, and a merge step to combine results from multiple shards into a single report. The full YAML configuration in this article is a working starting point for most web applications using Playwright; adjust the paths, port, and start command to your project.

How does AI-assisted development affect continuous testing?

AI coding assistants can increase PR volume 5 to 10x compared to manual development. A team that previously opened 5-10 PRs/day may open 40-50 PRs/day with AI assistance. Traditional continuous testing pipelines, with single-runner setups, nightly test runs, or manual test selection, were not provisioned for this volume. Tests queue up, feedback arrives late, developers lose context, and maintenance burden compounds. AI-velocity teams need parallel execution, intelligent test selection, self-healing tests, and ideally automatic test generation for new code paths.

What is intelligent test selection?

Intelligent test selection is the practice of running only the subset of tests that are relevant to a given code change, rather than running the full test suite every time. Basic implementations use file-path heuristics: if only frontend files changed, skip backend API tests. More sophisticated implementations use semantic analysis to understand which components, routes, and user flows depend on the changed code. Intelligent selection is the highest-leverage optimization for reducing continuous testing feedback time, because it reduces the work rather than just parallelizing the same work.

What are self-healing tests?

Self-healing tests automatically update themselves, without manual intervention, when the application's UI or structure changes in ways that break test selectors. Traditional tests break when a button's CSS selector, data-testid attribute, or DOM position changes, even if the underlying behavior is identical. Self-healing tests understand the intent of the interaction (click the checkout button) rather than the implementation detail (the element with id='btn-checkout-v2'). This matters especially for AI-assisted development teams, where AI-generated code changes selectors and component structure frequently.

What is the difference between test automation and continuous testing?

Test automation refers to the practice of writing automated tests (unit tests, integration tests, E2E tests) instead of running tests manually. Continuous testing refers to when and how those automated tests run: integrated into the CI/CD pipeline, triggered on every code change, with fast feedback loops. You can have test automation without continuous testing (a well-written test suite that you run locally before pushing). Continuous testing requires test automation as a prerequisite, then adds the infrastructure layer: pipeline integration, parallel execution, test selection, and reporting.

What is the difference between continuous integration and continuous testing?

Continuous integration (CI) is the practice of merging code changes into a shared repository frequently, typically multiple times per day, with automated builds to verify each merge. Continuous testing is the verification layer that runs on top of CI: automated tests execute on every CI event to confirm that the merged code actually works as expected. CI ensures code compiles and integrates; continuous testing ensures it behaves correctly. They are complementary practices, not competing ones. A mature pipeline runs both: CI triggers the build, and continuous testing validates the result before the change progresses through the deployment pipeline.