Vibe-coded app testing is the practice of getting retroactive E2E coverage on an AI-generated codebase after the app is already live, without rewriting the code or hiring a QA team. The answer is a codebase-aware pipeline that reads your routes, components, and auth flows to derive intent from the code itself, then generates and runs coverage automatically.

Your vibe-coded app has users. There are no tests. Hiring a QA engineer takes weeks; writing Playwright tests yourself isn't realistic if you didn't write the code. We built Autonoma to bolt coverage onto an existing app, with no test code and no rewrites, because that's exactly the situation we keep finding ourselves in.

The "shipped without tests" reality

This is the most common startup state in 2026, and it's not because founders are reckless. It's because the tooling made shipping easy before it made testing easy.

Cursor, Claude Code, Bolt, Lovable: these tools collapsed the gap between idea and deployed product. A solo founder can build a checkout flow in a weekend. A two-person team can ship a full SaaS in a sprint. The velocity is genuinely new. The problem is that the loop that emerged looks like this: AI writes the feature, founder ships it, users arrive, AI rewrites the adjacent code to handle an edge case the first version missed, something that used to work stops working, and the founder discovers they have no safety net.

The agent-rewrites-tomorrow loop is the structural risk every vibe-coded app carries. Each AI pass touches code that other parts of the app depend on. Without coverage, those dependencies are invisible until a user hits them. By the time you see the bug report, the problematic change is buried under two more AI sessions.

The instinct is to slow down. Build the test suite before adding more features. But founders with users can't slow down. Users have expectations, competitors are shipping, and the window for momentum is short. The answer isn't to stop shipping. It's to get coverage without it costing founder time.

Why writing tests yourself is the wrong play here

There's a version of this conversation where the advice is "just write the Playwright suite." It sounds reasonable. It is wrong, for three reasons that compound each other.

The opportunity cost is too high. Founder time is the scarcest resource in the company. Every hour spent writing test infrastructure is an hour not spent talking to users, closing deals, or shipping the next feature. At the seed stage, writing tests is rarely the highest-leverage use of that time. The QA tooling industry was built for teams with dedicated QA engineers. You don't have one. The economics don't transfer.

The timeline doesn't work. Setting up a real Playwright suite from scratch on a codebase you only partially understand takes three to six weeks. Not because Playwright is hard. Because you need to understand every flow well enough to write tests for it, set up a test environment that doesn't pollute production data, write selectors that survive AI-driven UI changes, and build the CI plumbing to run it all on every PR. That's engineering infrastructure work. It takes engineering infrastructure time. Our piece on the QA mental model for vibe-coders goes deeper on why the traditional QA setup assumes a human-written codebase with stable architecture.

Tests you write today will be wrong tomorrow. This is the most underrated problem. Your app's code is going to keep changing, and it's going to change via AI. Every Playwright test you write targets specific selectors, specific component behavior, specific API contracts. When an AI agent refactors the component tree next week to add a new feature, your tests break. Not because the feature regressed. Because the selectors changed. You spend time updating tests instead of shipping. The maintenance debt on a human-written test suite in an AI-rewritten codebase grows faster than the test suite itself. You end up with tests that are out of date and a founder who resents them.

What "bolted-on coverage" actually looks like

The phrase "add tests" frames this as a writing problem. You sit down, you write test code, you commit it. That framing is wrong for this context. The right framing is coverage as something the system provides, not artifacts the founder produces.

Bolted-on coverage starts with codebase reading. A system that can read your routes, your components, your state management, your auth flows, and your API surface can infer what your app is supposed to do. It doesn't need a spec document. It doesn't need a QA brief. It reads the code and derives intent from structure. If there's a /checkout route with a paymentForm component and a submitOrder handler, the system understands that checkout is a critical flow without anyone explaining that.
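To make the /checkout example concrete, here is a minimal sketch of that kind of inference. It is not Autonoma's implementation: the `RouteInfo` record, the `CRITICAL_HINTS` keyword list, and `is_critical` are hypothetical names invented for illustration, and a real code reader would rely on much richer signals than substring matches.

```python
from dataclasses import dataclass, field


@dataclass
class RouteInfo:
    """Hypothetical summary of one route, as a static code reader might extract it."""
    path: str
    components: list[str] = field(default_factory=list)
    handlers: list[str] = field(default_factory=list)


# Hypothetical keyword hints; assumed here purely for the example.
CRITICAL_HINTS = ("checkout", "payment", "order", "login", "signup")


def is_critical(route: RouteInfo) -> bool:
    """Flag a route if its path, components, or handlers hint at money or auth."""
    names = [route.path, *route.components, *route.handlers]
    return any(hint in name.lower() for name in names for hint in CRITICAL_HINTS)


routes = [
    RouteInfo("/checkout", ["paymentForm"], ["submitOrder"]),
    RouteInfo("/about", ["TeamPhoto"], []),
]
critical_paths = [r.path for r in routes if is_critical(r)]
print(critical_paths)  # ['/checkout']
```

Nobody told the function that checkout matters. The route path, the component name, and the handler name each carry the hint independently.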

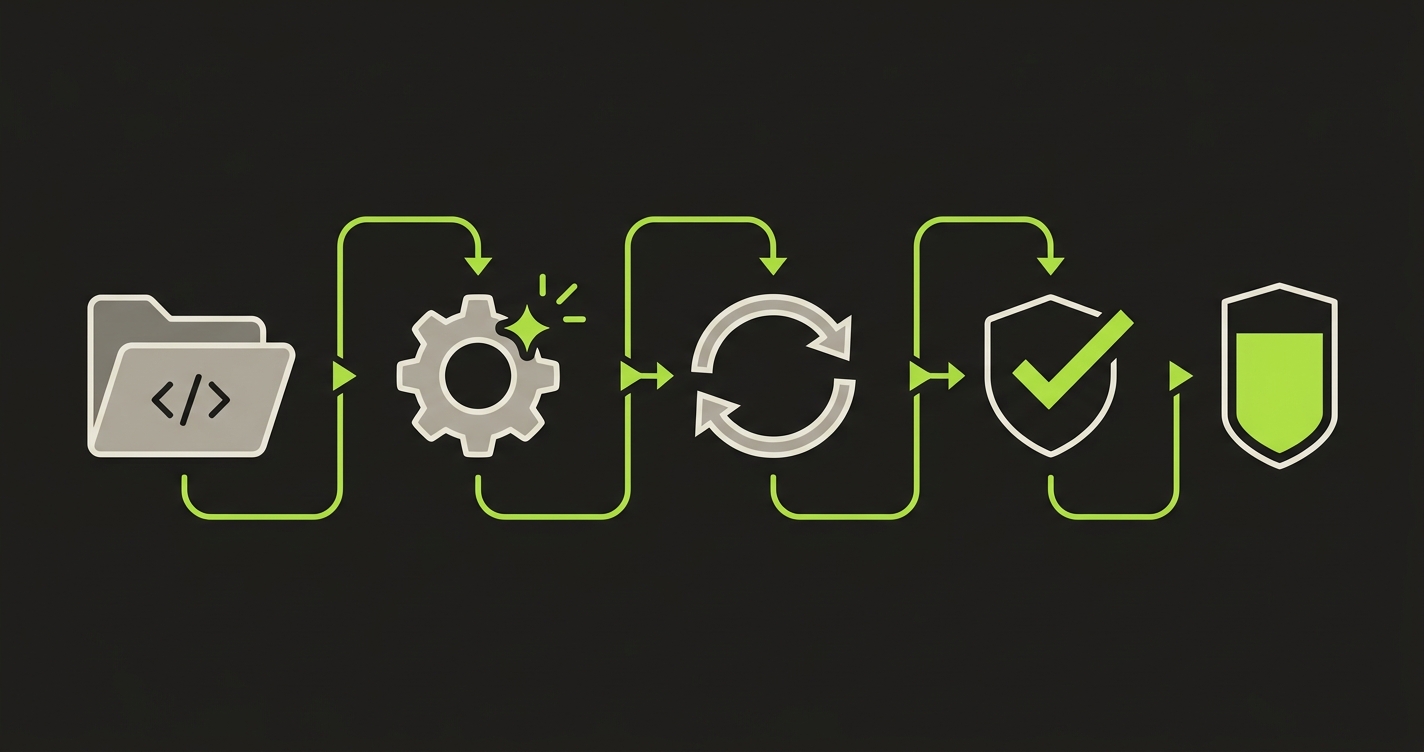

From that intent extraction, a four-stage pipeline runs. The Planner derives the test cases from the codebase. Generation executes those cases against the live application. Replay re-runs the same flows on every subsequent deploy to catch regressions. Verification confirms that failures are real regressions and not environmental noise. The founder doesn't author any of this. The system does.

This is the contrast with "write tests" as a verb. Writing is a human action that requires human knowledge of the codebase. Bolted-on coverage is a system action that requires only that the system can read the codebase. For the testing-strategy version of this conversation, read the earlier piece on pre-shipping test strategy. This article is specifically for the state where users are already there. For teams starting from absolute zero, there's a dedicated piece on that starting point.

How Autonoma generates coverage retroactively

Here's what this looks like inside our system.

The Planner is the first agent in the pipeline. It reads your full repository: every route, every component, every state store, every auth flow, every API surface it can find. Not the README. Not the Jira tickets. Not the spec documents the founder never wrote. The code itself. The Planner extracts intent from structure. A protected route with a redirect-on-unauthenticated pattern tells the Planner that auth is a gate. A form with a validation schema tells the Planner that the validation rules are part of the user experience. A sequence of API calls in an event handler tells the Planner that multi-step operations exist and need to succeed atomically.
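One way to picture those three examples is a lookup from structural signal to test intent. The sketch below is illustrative only: `Signal`, the signal kinds, and `INTENT_TEMPLATES` are assumptions made for this example, not the Planner's actual internals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """Hypothetical structural fact a code reader might extract."""
    kind: str    # e.g. "protected_route", "validated_form", "chained_api_calls"
    target: str  # the route, component, or handler the fact is about


# Hypothetical mapping from a structural signal to the behavior worth testing.
INTENT_TEMPLATES = {
    "protected_route": "unauthenticated visit to {target} redirects to login",
    "validated_form": "{target} rejects input that violates its validation schema",
    "chained_api_calls": "{target} completes every step or leaves no partial state",
}


def derive_intents(signals: list[Signal]) -> list[str]:
    """Turn each recognized signal into a one-line test intent; skip unknown kinds."""
    return [
        INTENT_TEMPLATES[s.kind].format(target=s.target)
        for s in signals
        if s.kind in INTENT_TEMPLATES
    ]


signals = [
    Signal("protected_route", "/settings"),
    Signal("validated_form", "SignupForm"),
    Signal("chained_api_calls", "submitOrder"),
    Signal("static_page", "/about"),  # no template, so no intent is derived
]
intents = derive_intents(signals)
for intent in intents:
    print(intent)
```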

From that intent extraction, the Planner generates test cases. Not generic "click through the app" scripts. Codebase-aware E2E scenarios tied to specific flows the code documents. Checkout. Onboarding. Settings update. Password reset. Auth edge cases. The test cases reflect what the app actually does, because the Planner read what the app actually does.

Generation runs those test cases against your live application. The Planner-generated database state setup handles the preconditions, because the Planner also read your data models and knows what state each flow needs to start from. No manual fixture writing. No seeded test accounts that drift out of sync with production. The Planner handles it.
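Deriving preconditions from data models can be thought of as a dependency-ordering problem. The sketch below assumes a hypothetical `MODEL_DEPENDENCIES` map (each model name mapped to the models it references) and uses Python's standard `graphlib` module to work out which records a flow needs and in what order to create them. How the Planner actually builds database state is not described here, so treat this as one plausible shape.

```python
from graphlib import TopologicalSorter

# Hypothetical dependencies read from a schema: each model maps to the
# models that must exist before it can be created (foreign-key style).
MODEL_DEPENDENCIES = {
    "User": set(),
    "Product": set(),
    "Cart": {"User", "Product"},
    "Order": {"Cart"},
}


def required_models(target: str, deps: dict[str, set[str]]) -> set[str]:
    """Collect the target model plus everything it transitively depends on."""
    needed: set[str] = set()
    stack = [target]
    while stack:
        model = stack.pop()
        if model not in needed:
            needed.add(model)
            stack.extend(deps[model])
    return needed


def setup_order(target: str, deps: dict[str, set[str]]) -> list[str]:
    """Return a creation order in which every model follows its dependencies."""
    needed = required_models(target, deps)
    subgraph = {model: deps[model] & needed for model in needed}
    return list(TopologicalSorter(subgraph).static_order())


# A checkout flow that starts from a filled cart needs User and Product first.
order = setup_order("Cart", MODEL_DEPENDENCIES)
print(order)
```

The relative order of `User` and `Product` is not fixed, since neither depends on the other. The only guarantee is that `Cart` comes last and `Order` is not created at all.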

Replay re-runs those same test cases on every deploy. Same flows, same deterministic execution, against the updated app. When an AI agent rewrites the checkout component next week, Replay reruns the checkout E2E. If the flow still works, green. If something broke, the Verification layer flags it as a real regression and surfaces exactly where the failure happened. The founder sees "checkout broke at the payment step," not "five E2E tests failed."
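The difference between those two messages is mostly a grouping step. As a rough sketch, assuming a hypothetical `StepResult` record per replayed step, collapsing raw step failures into one line per flow could look like this:

```python
from dataclasses import dataclass


@dataclass
class StepResult:
    """Hypothetical record of one replayed step within a flow."""
    flow: str
    step: str
    passed: bool


def summarize(results: list[StepResult]) -> list[str]:
    """Report one line per broken flow, naming the first step that failed."""
    first_failure: dict[str, str] = {}
    for result in results:
        if not result.passed and result.flow not in first_failure:
            first_failure[result.flow] = result.step
    return [f"{flow} broke at the {step} step" for flow, step in first_failure.items()]


results = [
    StepResult("checkout", "cart", True),
    StepResult("checkout", "payment", False),
    StepResult("checkout", "confirmation", False),  # downstream of the real failure
    StepResult("onboarding", "signup", True),
]
summary = summarize(results)
print(summary)  # ['checkout broke at the payment step']
```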

Verification is what makes retroactive coverage trustworthy. Any coverage system will eventually produce false positives. An environment issue, a slow third-party API, a race condition in the test setup. Without a Verification layer that distinguishes real regressions from environmental noise, founders learn to ignore the failures. Ignoring failures is worse than having no tests. The Verification layer ensures the signal stays clean, which is why teams actually act on it.
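A common way to separate real regressions from noise is to re-run the failing flow and see whether the failure persists. The sketch below shows that rerun heuristic under stated assumptions: `classify_failure` and the simulated flows are hypothetical, and this is a generic technique rather than a description of how Autonoma's Verification layer works.

```python
from typing import Callable


def classify_failure(run_flow: Callable[[], bool], reruns: int = 3) -> str:
    """Re-run a failed flow; call it a regression only if it fails every time."""
    outcomes = [run_flow() for _ in range(reruns)]
    if not any(outcomes):
        return "regression"
    return "environmental"


# Simulated flows: one that is truly broken, one that fails once and recovers.
def broken_flow() -> bool:
    return False


attempts = iter([False, True, True])


def flaky_flow() -> bool:
    return next(attempts)


print(classify_failure(broken_flow))  # regression
print(classify_failure(flaky_flow))   # environmental
```

A failure that disappears on rerun still deserves a log entry, but it should not page anyone. That is how the signal stays clean.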

This four-stage pipeline only works because it's codebase-aware from the start. A tool that generates generic test scripts from a URL will produce generic failures that tell the founder nothing useful. A tool that reads the codebase generates test cases that reflect the codebase, which means failures point directly at the code that broke. That specificity is the difference between coverage that ships confidence and coverage that ships noise.

For how the agentic approach applies at scale, see the deeper piece on the agentic testing pipeline, which covers how the same architecture extends to teams shipping with multiple parallel agents.

The first 24 hours: a step-by-step guide

The founders who get coverage fastest don't overthink the setup. They connect, run, read, and decide. Here's what those 24 hours look like in practice.

Step 1: Connect the repo

Connect your GitHub or GitLab repository to Autonoma. The connection gives the Planner read access to your codebase. Connecting requires no environment variables and no configuration files; a test account only comes into play later, when Generation runs against the live app. The Planner reads what's already there. If your app uses environment-specific config, the Planner reads from your environment file patterns and maps the structure without needing the actual secret values.

Most founders who ship vibe-coded apps have a GitHub repo, even if the codebase is partially AI-generated. That's all the Planner needs. If you have a monorepo, point the Planner at the specific app directory. If you have multiple services, start with the frontend. Coverage of the user-facing flows gives you the fastest signal on what's working.

Step 2: Run the Planner

Once the repo is connected, run the Planner. The Planner reads your codebase and generates the test plan. For a small app (five to fifteen routes, a few critical flows), the Planner typically completes in under an hour. For a larger app, expect two to four hours for the first full read.

The Planner output is a test plan: a structured list of the flows it identified, the test cases it generated for each flow, and the preconditions it needs to set up. You can read this plan before running anything. Most founders scan it and immediately recognize their app's critical paths. That recognition is a useful signal: if the Planner identified your three most important flows, it's reading the codebase correctly.
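The shape described above (flows, test cases per flow, preconditions per case) maps naturally onto a small data structure. The names below (`Flow`, `PlannedCase`, `missing_flows`) are hypothetical and not Autonoma's plan format. The point is the sanity check: list the flows you consider critical and see which ones the plan missed.

```python
from dataclasses import dataclass, field


@dataclass
class PlannedCase:
    """Hypothetical test case entry: a name plus the state it needs to start from."""
    name: str
    preconditions: list[str] = field(default_factory=list)


@dataclass
class Flow:
    """Hypothetical flow entry grouping the cases generated for it."""
    name: str
    cases: list[PlannedCase] = field(default_factory=list)


def missing_flows(plan: list[Flow], expected: set[str]) -> set[str]:
    """Return the flows you consider critical that the plan does not cover."""
    covered = {flow.name for flow in plan if flow.cases}
    return expected - covered


plan = [
    Flow("checkout", [PlannedCase("pay with a valid card", ["cart has one item"])]),
    Flow("onboarding", [PlannedCase("sign up with email", [])]),
]
print(missing_flows(plan, {"checkout", "onboarding", "password reset"}))
# {'password reset'}
```

An empty result is the recognition signal described above. A non-empty one tells you which flow to look for before running anything.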

Step 3: Review Generation traces

Generation runs the Planner's test cases against your live application and produces execution traces. Each trace is a record of what the agent did, what it saw, and what the outcome was.

Read the traces. Not to debug them. To understand what the system thinks your app does. The traces are the most honest documentation your app has ever had: they show the actual user experience, not the intended one. Founders often find minor UX issues in the Generation traces that they hadn't noticed before because they knew too much about how the app was supposed to work to see how it actually behaved.

If a trace fails at Generation, it usually means a flow assumption was wrong (a button label the Planner read from a component prop that's dynamic at runtime, for example) or an environment issue (the test account doesn't exist yet). Fix those before moving to Replay.

Step 4: Watch the Replay

Replay re-runs the successful Generation traces deterministically. This is the step that turns one-time coverage into continuous coverage. Every time you deploy, Replay runs. Every time an AI agent ships a change, Replay runs.

Watch the first Replay run end-to-end. Not the subsequent ones (those are automated), but the first one. Seeing your critical flows run automatically, without you clicking through them, is the moment founders understand why this is different from maintaining a Playwright suite. The replay is the coverage. You didn't write it. You're just reading the output.

Pay attention to the Verification results at the end of the first Replay. If everything is green, you have a clean baseline. If there are failures, some of them may be real bugs. Either way, the Verification layer tells you which failures are environmental and which are real. Act on the real ones.

Step 5: Decide what goes into CI

After the first successful Replay, connect Autonoma to your CI pipeline. Most teams use GitHub Actions or a similar tool. The connection adds a Replay run to every PR and every deploy.

Decide upfront what a failing Replay means for your release process. Two common setups: blocking (a Replay failure blocks the merge, with approval required to override) and informational (Replay runs and reports, but doesn't block). For a founding team moving fast, informational is the right starting point. It gives you the signal without slowing the deploy cycle. Upgrade to blocking once you've seen three or four real regressions caught by Replay and you trust the signal.
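The two setups differ only in whether a verified failure changes the exit code of the CI step. Here is a minimal sketch, assuming a count of verified regressions is available to the CI job. `ci_exit_code` and `recommended_mode` are illustrative names, and the default threshold of three reflects the "three or four" rule of thumb above.

```python
def ci_exit_code(real_regressions: int, mode: str = "informational") -> int:
    """Map verified Replay failures to a CI exit code under the chosen policy."""
    if mode not in ("informational", "blocking"):
        raise ValueError(f"unknown mode: {mode}")
    if mode == "blocking" and real_regressions > 0:
        return 1  # non-zero exit fails the check, which blocks the merge
    return 0      # informational mode reports but never fails the check


def recommended_mode(regressions_caught_so_far: int, threshold: int = 3) -> str:
    """Suggest blocking once Replay has caught enough real regressions to be trusted."""
    return "blocking" if regressions_caught_so_far >= threshold else "informational"


print(ci_exit_code(2, mode="informational"))  # 0
print(ci_exit_code(2, mode="blocking"))       # 1
print(recommended_mode(1))                    # informational
print(recommended_mode(4))                    # blocking
```

Only failures that survived Verification count toward either number. Environmental noise should never block a merge.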

Coverage is the goal. Writing tests is just one path to it.

The question isn't "how do I write tests for my vibe-coded app." The question is "how do I get coverage." Those are different questions with different answers.

Writing tests requires knowledge of the codebase, time to maintain the tests as the code evolves, and tolerance for the maintenance burden growing every time an AI agent ships a change. Coverage from Autonoma requires connecting a repo. The Planner reads, the four-stage pipeline runs, and you get retroactive coverage on the app that already has users.

If you have an existing app, users who depend on it, and no time to build a test suite from scratch, that's the path forward.

Frequently asked questions

Can I use Autonoma alongside the tests I already have?

Yes. Bolted-on coverage from Autonoma runs alongside whatever you already have. If you have five Playwright tests from an early attempt at testing, they keep running. The Autonoma Replay layer adds on top. You don't replace what exists; you fill the gaps the existing coverage misses. For most vibe-coded apps, the gaps are large, because the Playwright tests (if any exist) were written against an early version of the codebase and haven't kept up with the AI-driven changes.

How long does it take to get coverage?

Hours, not weeks. First Replay traces typically land same-day for a small app. A five-route app with three critical flows can have its first Replay results within four to eight hours of connecting the repo. Larger apps take longer because the Planner reads more code, but the first Planner output (the test plan you review in Step 2) usually arrives within the same business day. Compare that to the three-to-six-week estimate for building a Playwright suite from scratch, and the economics of bolted-on coverage are obvious.

Do I need a staging environment?

Codebase-aware mode runs against any live URL with a test account. You don't need a dedicated staging environment to start. Point Autonoma at your production URL with a test user account that's isolated from real customer data. Generation and Replay run against that URL. Most vibe-coded apps run in this configuration initially. Once you want PR-level isolation (running Replay against the specific code in each PR before it merges), a staging environment or preview environment becomes valuable. But it's not a requirement for day one.

What does the Planner read in my codebase?

Routes, components, state stores, auth flows, and API definitions. Not your README. Not your comments. Not your test files (if any). The Planner reads the structural code: your routing configuration to identify the app's surfaces, your component tree to understand the UI, your state management setup to understand how data flows, your auth middleware to identify protected resources, and your API handlers to understand the contract between client and server. From those reads, it derives what your app does and generates test cases that exercise the real behavior, not an idealized version of it.