Environment drift is the gap between your pre-production environment and production, accumulated across five surfaces: data shape, third-party API contracts, env vars and secrets, feature flag states, and browser-rendered cache. Shared staging accumulates drift structurally because a single runtime absorbs every PR, every seed script, and every manual toggle over its lifetime. Per-PR provisioned preview environments can be production-shaped at the moment of validation rather than diverging continuously.

The number on the bug report says staging. The number on the incident says production. They are two different environments, and they have been slowly becoming two different systems for months.

Environment drift is not a configuration accident. It is the natural outcome of running a shared runtime that absorbs every PR, every seed script, every manual fix, and every credentials rotation over its lifetime. The question is not whether staging will drift; it is how many surfaces it will drift across before you notice. Most teams discover the answer in post-mortems.

Preview environments exist precisely to answer this problem. A preview environment provisioned fresh per PR, through the full ephemeral infrastructure lifecycle, is production-shaped at the moment it matters: right before merge. This article walks the five surfaces of drift, explains why shared staging accumulates each one structurally, and maps how per-PR provisioning changes the equation.

Surface 1: Data Shape

Data shape drift happens when your staging database no longer reflects the structure or content distribution of production. It accumulates in layers.

A developer runs a one-off migration to unblock a test. Another developer adds rows to a reference table that never exist in production. A seed script from six months ago creates user records with a schema that has since changed. None of these individual changes are catastrophic. Together, they mean that a query which succeeds against staging data will silently behave differently against production data, either because the indexes are optimized for a different data distribution or because the edge cases in production simply do not exist in your seeded dataset.

A less obvious but equally damaging form of data shape drift is the column rename. A production migration renames user_identifier to external_id, and the application code is updated to match. Staging does not get the migration until someone runs it manually, which may happen days later or not at all. In the interim, staging still has user_identifier, so any leftover query that uses the old column name succeeds against staging and fails only in production. Worse, when staging is patched by adding external_id without dropping the old column, both columns coexist, the old one orphaned but still queryable, and tests that should surface a missing-column error pass cleanly. The orphaned column acts as a buffer that masks real bugs, and the test suite reports green against a schema that no longer matches what production is running.
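The orphaned-column case can be made visible by comparing column sets directly. The following is a minimal sketch using in-memory SQLite databases as stand-ins for staging and production; the `users` table layout and the helper names (`column_names`, `schema_diff`, `query_succeeds`) are assumptions made for illustration, not part of any particular tool.

```python
import sqlite3


def column_names(conn, table):
    """Return the set of column names for a table using SQLite's PRAGMA table_info."""
    rows = conn.execute(f"PRAGMA table_info({table})").fetchall()
    return {row[1] for row in rows}  # index 1 of each row is the column name


def schema_diff(staging, production, table):
    """Report columns that exist in only one of the two databases."""
    s = column_names(staging, table)
    p = column_names(production, table)
    return {"only_in_staging": s - p, "only_in_production": p - s}


def query_succeeds(conn, sql):
    """True if the statement runs; False if SQLite rejects it (e.g. no such column)."""
    try:
        conn.execute(sql)
        return True
    except sqlite3.OperationalError:
        return False


# Hypothetical state: production is migrated, staging still carries the orphaned column.
production = sqlite3.connect(":memory:")
production.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, external_id TEXT)")

staging = sqlite3.connect(":memory:")
staging.execute(
    "CREATE TABLE users (id INTEGER PRIMARY KEY, user_identifier TEXT, external_id TEXT)"
)

old_query = "SELECT user_identifier FROM users"
diff = schema_diff(staging, production, "users")
passes_on_staging = query_succeeds(staging, old_query)
passes_on_production = query_succeeds(production, old_query)
```

Here the stale query runs on the staging stand-in and is rejected by the production stand-in, while `diff` names `user_identifier` as the column that exists only in staging. A real check would run against the actual database engine's catalog rather than SQLite.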

The standard remediation is a periodic staging reset from a production snapshot. This works, but it is expensive: snapshots need to be sanitized for PII, the reset window creates deployment contention, and staging immediately begins accumulating new divergence the moment the reset completes. The reset does not solve the structural problem. It just resets the clock.

Per-PR provisioned preview environments address data shape drift differently. Each environment starts from a defined baseline: a seed that is version-controlled, reviewed, and tied to the PR's migration state. The seed is not a snapshot of accumulated mutations; it is a deterministic starting point that matches the schema at the tip of the branch. Data shape cannot drift because there is no persistent state to drift from.
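A deterministic baseline of this kind might look like the sketch below: an empty database, the branch's migrations applied in order, then a fixed seed. The migration statements, the seed rows, and the `provision_database` helper are all hypothetical, and in-memory SQLite stands in for whatever database the application really uses.

```python
import sqlite3

# Hypothetical ordered migrations, as they might be checked in on the PR's branch.
MIGRATIONS = [
    "CREATE TABLE users (id INTEGER PRIMARY KEY, external_id TEXT NOT NULL)",
    "ALTER TABLE users ADD COLUMN plan TEXT NOT NULL DEFAULT 'free'",
]

# Hypothetical version-controlled seed data, reviewed alongside the code.
SEED_ROWS = [(1, "acct-001"), (2, "acct-002")]


def provision_database():
    """Build a fresh database: empty, then migrations in order, then the seed."""
    conn = sqlite3.connect(":memory:")
    for statement in MIGRATIONS:
        conn.execute(statement)
    conn.executemany("INSERT INTO users (id, external_id) VALUES (?, ?)", SEED_ROWS)
    conn.commit()
    return conn


first = provision_database()
second = provision_database()
rows_first = first.execute("SELECT id, external_id, plan FROM users ORDER BY id").fetchall()
rows_second = second.execute("SELECT id, external_id, plan FROM users ORDER BY id").fetchall()
```

Two independent provisions produce identical contents because nothing carries over between them; the only inputs are the migrations and the seed.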

Surface 2: Third-Party APIs

Third-party API drift is the hardest surface to observe. Your staging environment points at sandbox or test-tier versions of external services. Those sandboxes have their own lifecycle: they receive schema changes from the vendor, they enforce different rate limits, they occasionally reset or expire. Meanwhile, production points at the real API with its current contract.

When the vendor changes a field name in the sandbox before rolling it out to production, staging sees the change first. You update your code to read the new field, and your tests pass against the sandbox. The production API still returns the old field. The bug ships silently.

The inverse happens too. A vendor deprecates a sandbox endpoint earlier than the production endpoint. Your staging tests start failing. You patch staging. The fix never makes it to production because the production endpoint is still working. When the production endpoint eventually drops, the bug re-surfaces.

Payment gateways are a particularly sharp example. Stripe's test mode exposes endpoints and event shapes that diverge from the live API in ways that matter for testing: webhook signatures are validated with a different secret, certain event fields are omitted in test mode that are present in production, and Stripe periodically changes the test-mode behavior to reflect upcoming production API versions ahead of the rollout date. A team running checkout flow tests against staging will validate against the test-mode signature. When the signature scheme changes on Stripe's side, the webhook handler that staging tests pass against will start failing in production. The bug was not in the code. It was in the assumption that the test-mode contract is stable.
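The secret mismatch is easy to reproduce in isolation. The sketch below uses a generic HMAC-SHA256 scheme over a timestamp and payload; it is an illustration of the failure mode, not a reproduction of any vendor's exact header format or client library, and the secrets, event type, and function names are placeholders.

```python
import hashlib
import hmac


def sign(payload: bytes, secret: bytes, timestamp: int) -> str:
    """HMAC-SHA256 over '<timestamp>.<payload>', hex-encoded."""
    signed_payload = str(timestamp).encode() + b"." + payload
    return hmac.new(secret, signed_payload, hashlib.sha256).hexdigest()


def verify(payload: bytes, secret: bytes, timestamp: int, signature: str) -> bool:
    """Constant-time comparison of the expected and received signatures."""
    expected = sign(payload, secret, timestamp)
    return hmac.compare_digest(expected, signature)


# Hypothetical secrets: one per mode, never interchangeable.
TEST_MODE_SECRET = b"test-mode-secret-placeholder"
LIVE_MODE_SECRET = b"live-mode-secret-placeholder"

payload = b'{"type": "checkout.completed"}'  # made-up event body
timestamp = 1700000000
signature = sign(payload, LIVE_MODE_SECRET, timestamp)  # what the live sender would attach

accepted_with_live_secret = verify(payload, LIVE_MODE_SECRET, timestamp, signature)
accepted_with_test_secret = verify(payload, TEST_MODE_SECRET, timestamp, signature)
```

A handler that only ever ran against the test-mode secret has never exercised the live verification path, which is why a green staging run says little about production webhooks.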

The root cause in both cases is that staging points at a different API tier with a different lifecycle. Per-PR provisioned preview environments do not automatically solve this, but they create the right forcing function. When each environment is provisioned fresh through isolated runtime infrastructure, the configuration of which API tier and which API key that environment uses is explicit and version-controlled. Discrepancies between staging and production API config become visible at provision time rather than at incident time.
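One way to surface those discrepancies at provision time is to treat the API configuration as data and compare it against production's before the environment comes up. This is a sketch under assumptions: the `ApiConfig` fields, the service name, and the version strings are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApiConfig:
    service: str      # hypothetical service name, e.g. "payments"
    tier: str         # e.g. "sandbox" or "live"
    api_version: str  # the vendor API version the environment is pinned to


def config_discrepancies(preview, production):
    """Return human-readable differences between preview and production API config."""
    prod_by_service = {cfg.service: cfg for cfg in production}
    findings = []
    for cfg in preview:
        prod = prod_by_service.get(cfg.service)
        if prod is None:
            findings.append(f"{cfg.service}: not configured in production")
            continue
        if cfg.tier != prod.tier:
            findings.append(f"{cfg.service}: tier {cfg.tier!r} vs production {prod.tier!r}")
        if cfg.api_version != prod.api_version:
            findings.append(
                f"{cfg.service}: api_version {cfg.api_version!r} "
                f"vs production {prod.api_version!r}"
            )
    return findings


preview_cfg = [ApiConfig("payments", "sandbox", "2024-01-01")]
production_cfg = [ApiConfig("payments", "live", "2023-06-01")]
findings = config_discrepancies(preview_cfg, production_cfg)
```

A tier difference is often intentional (nobody wants previews charging real cards), so a check like this is better used to make the difference explicit in the provisioning log than to block provisioning outright.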

Surface 3: Env Vars and Secrets/Config Propagation

Secrets and config propagation is where drift becomes a security and correctness problem simultaneously. Shared staging has a config snapshot that was set at some point in the past. Since then, credentials have been rotated in production. Service endpoints have changed. New services have been added to the production config that staging does not know about.

The team that rotated the credentials meant to update staging. The ticket was created. The ticket was closed as a duplicate of another ticket. The staging DATABASE_URL now points at a database that was decommissioned three months ago. Tests that do not touch the database still pass. Tests that do touch it are mysteriously flaky, failing on network timeouts rather than authentication errors, because the old host still resolves to something.

The more insidious version of this failure is the rotated credential that technically still works. A read-only database credential is rotated in production for security reasons. The old credential is not revoked immediately because several services depend on it and the rotation is being done in phases. Staging still has the old credential. Tests pass. When the old credential is finally revoked three weeks later, staging breaks in a way that looks like a database connectivity issue, not a secrets management issue. The team spends time debugging infrastructure before realizing the root cause was a credential rotation that never made it into the staging config. Meanwhile, the same rotation had already propagated correctly to production, which means that for those three weeks staging was running tests against a credential profile that no longer reflected the production security posture.

Secrets/config propagation in per-PR provisioning is a first-class concern, not an afterthought. Each environment is provisioned with the current secrets at provision time, injected from a vault or secrets manager that is kept in sync with production. There is no accumulated divergence between what staging knows and what production uses, because staging in this model does not exist as a persistent artifact.
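The difference between a config snapshot and provision-time injection can be sketched with plain dictionaries. Everything here is hypothetical: the `SECRETS_STORE` dictionary stands in for a vault or secrets manager, and the keys and values are made up. Values are compared by hash so the check never prints a secret; a real implementation would likely use a keyed hash rather than a bare SHA-256.

```python
import hashlib


def fingerprint(config):
    """Hash each value so configs can be compared without exposing the secrets."""
    return {key: hashlib.sha256(value.encode()).hexdigest() for key, value in config.items()}


def stale_keys(env_config, source_of_truth):
    """Keys whose value in the environment is missing or differs from the source of truth."""
    env_fp = fingerprint(env_config)
    src_fp = fingerprint(source_of_truth)
    return sorted(key for key in src_fp if env_fp.get(key) != src_fp[key])


def provision_env(secrets_store):
    """Copy the current secrets at provision time; nothing is carried over from earlier."""
    return dict(secrets_store)


# Hypothetical current state of the secrets manager.
SECRETS_STORE = {
    "DATABASE_URL": "postgres://db-new.internal/app",
    "API_KEY": "key-v2",
    "SEARCH_URL": "https://search.internal",
}

# Hypothetical shared-staging snapshot, set months ago and never updated.
staging_snapshot = {
    "DATABASE_URL": "postgres://db-old.internal/app",
    "API_KEY": "key-v2",
}

stale_in_staging = stale_keys(staging_snapshot, SECRETS_STORE)
stale_in_preview = stale_keys(provision_env(SECRETS_STORE), SECRETS_STORE)
```

The staging snapshot reports a stale `DATABASE_URL` and a missing `SEARCH_URL`, while the freshly provisioned copy reports nothing, because its only source is the store as it exists at provision time.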

Surface 4: Feature Flags

Feature flag drift is subtle because it looks like a testing decision, not a configuration problem. A developer needs to test a feature behind a flag. They toggle the flag on in staging. The feature ships. The flag is cleaned up in production. Staging still has it on.

Six months later, a different developer is testing a different feature in the same code path. Their test passes because the first feature's flag is still on in staging, creating a different execution path than any production user will ever see. The test result is technically correct against the staging environment. It is meaningless as a signal about production behavior.

Feature flag states in shared staging accumulate this way across every team, every sprint, and every experiment. The staging flag configuration eventually represents a cohort of users that does not exist: a combination of active experiments, completed experiments, and deprecated experiments that no real user has ever seen simultaneously.

A second form of flag drift is cohort divergence. Feature flag systems segment users into cohorts: a percentage rollout, a beta group, a geographic segment. In staging, the user pool is typically a small fixed set of seed accounts. Those accounts end up in the same cohort every time because their identifiers hash deterministically against the flag configuration. If the production rollout is targeting 10% of users by account ID, but all staging accounts fall into that 10%, staging always tests the flagged-on path. When the flag is off in production for 90% of users, the bugs in the off path go untested. No one notices until the rollout expands and bug reports arrive from users who were never in the staging user pool.
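Deterministic bucketing is what makes this possible. The sketch below assumes a common approach, hashing the flag key together with the account ID and taking the result modulo 100; real flag systems differ in the details, and the flag key, account IDs, and function names are invented for the example.

```python
import hashlib


def bucket(flag_key, account_id):
    """Map an account to a stable bucket in the range 0..99 for a given flag."""
    digest = hashlib.sha256(f"{flag_key}:{account_id}".encode()).hexdigest()
    return int(digest, 16) % 100


def is_enabled(flag_key, account_id, rollout_percent):
    """An account sees the flag when its bucket falls below the rollout percentage."""
    return bucket(flag_key, account_id) < rollout_percent


def paths_covered(flag_key, account_ids, rollout_percent):
    """The set of flag outcomes (True/False) that a pool of accounts exercises."""
    return {is_enabled(flag_key, account, rollout_percent) for account in account_ids}


# Hypothetical fixed seed accounts in a shared staging environment.
SEED_ACCOUNTS = ["acct-001", "acct-002", "acct-003"]

coverage = paths_covered("new-checkout", SEED_ACCOUNTS, 10)
untested = {True, False} - coverage  # non-empty means one path is never exercised
```

Because the same accounts always hash to the same buckets, `coverage` never changes between test runs. With only a handful of seed accounts, it is entirely plausible for `untested` to be non-empty, and nothing in the test output will say so unless a check like this is run.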

Per-PR preview environments can be provisioned with feature flags set to match the production baseline, or to match a specific experimental cohort, at provision time. Because each environment is isolated and ephemeral, a flag toggled for testing one PR does not persist into the next PR's environment. Every environment starts with an intentional flag configuration rather than an accumulated one.
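An intentional flag configuration can be as simple as the production baseline plus a small, reviewed set of overrides for the PR. The following sketch assumes flags are plain booleans in a dictionary; the flag names and the `flags_for_preview` helper are hypothetical.

```python
def flags_for_preview(production_baseline, overrides=None):
    """Start from the production baseline and apply only explicit, known overrides."""
    overrides = overrides or {}
    unknown = set(overrides) - set(production_baseline)
    if unknown:
        # Refuse flags production does not know about, so typos and retired
        # flags cannot sneak into the environment.
        raise KeyError(f"unknown flags in overrides: {sorted(unknown)}")
    return {**production_baseline, **overrides}


# Hypothetical production baseline, exported from the flag system at provision time.
PRODUCTION_BASELINE = {"new-checkout": False, "dark-mode": True}

env_for_pr_a = flags_for_preview(PRODUCTION_BASELINE, {"new-checkout": True})
env_for_pr_b = flags_for_preview(PRODUCTION_BASELINE)
```

The override applied for the first PR does not appear in the second PR's environment, because each call builds a new dictionary from the baseline rather than mutating shared state.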

Surface 5: Browser-Rendered State

Browser-rendered state drift is the surface that catches teams by surprise because it lives outside the server entirely. Shared staging runs against a URL that developers have been visiting for months. Their browsers have cached assets, service workers, old JavaScript bundles, and stale API responses that the staging server is no longer serving.

A new deployment to staging changes the bundle. But a developer testing from their laptop is still running the old bundle from the browser cache. Their test passes. The test on a fresh browser fails. The CI pipeline, which always runs against a fresh browser, catches the failure. But the developer's manual smoke test misses it, and the team loses confidence in the CI result because "it works on my machine."

LocalStorage and IndexedDB accumulate a similar problem on a longer timescale. A developer tests a multi-step onboarding flow in staging over several sessions. Each session leaves partial state in browser storage: a partially completed form, a cached authentication token, a wizard step indicator set to step three. The next time someone tests the onboarding flow from the same browser profile, the flow starts at step three instead of step one, because the storage state from the last test session is still there. First-load regressions in the onboarding flow go undetected because no one is ever actually experiencing first load. The bug only surfaces when a real new user hits it in production.

Service workers compound this. A service worker registered against the staging URL can intercept requests and serve cached responses long after the staging server has changed. Browsers scope service worker registrations, LocalStorage, and IndexedDB to an origin, so a per-PR preview environment reached through a unique URL on its own origin (a distinct hostname per PR, for example) starts with a clean browser context. There is no accumulated service worker registration, no stale asset cache, no old bundle competing with the new deployment.
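Whether a preview really gets a clean browser context depends on how its URL is built, since browser storage and service workers are keyed by origin (scheme, host, and port). The sketch below compares a subdomain-per-PR layout with a path-per-PR layout; the domain names and the `preview_url` helper are placeholders.

```python
from urllib.parse import urlsplit

DEFAULT_PORTS = {"https": 443, "http": 80}


def origin(url):
    """Return the (scheme, host, port) triple that browsers use to scope storage."""
    parts = urlsplit(url)
    port = parts.port or DEFAULT_PORTS.get(parts.scheme)
    return (parts.scheme, parts.hostname, port)


def preview_url(pr_number, base_domain="preview.example.com"):
    """Hypothetical subdomain-per-PR layout: every PR gets its own origin."""
    return f"https://pr-{pr_number}.{base_domain}/"


subdomain_isolated = origin(preview_url(101)) != origin(preview_url(102))

# A path-per-PR layout shares one origin, so storage and service workers carry over.
path_based_shared = (
    origin("https://preview.example.com/pr-101/")
    == origin("https://preview.example.com/pr-102/")
)
```

With one hostname per PR the two previews have different origins; with path-based previews they share one, and the accumulated-state problem described above returns.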

Why Shared Staging Accumulates Drift

Each of the five surfaces above has its own drift mechanism. What they share is the same root cause: a single long-running runtime that absorbs writes from many sources over time.

Shared staging is, by design, a shared resource. Every developer, every PR, every automated job, and every manual intervention touches the same database, the same config store, the same flag configuration, and the same URL. Every write is a permanent mutation of the shared state. Over weeks, the shared state becomes a layered artifact of every change that has passed through it. No individual change creates a crisis. The accumulation does.

Periodic resets interrupt the accumulation, but they do not change the model. Between resets, staging re-accumulates state from every active PR and every active developer session. The reset frequency becomes a negotiation between freshness and release-window contention. The team that needs to merge before the weekend reset pushes staging into a state that persists until the next reset. The team that was depending on a clean staging environment loses the window.

The second-order costs of resets are rarely accounted for. Each reset requires a coordination window where staging is unavailable, which means affected teams block their merges, shift their release timelines, or accept the risk of merging without a clean pre-merge validation. That calendar contention compounds when more than one product team writes to the same staging environment: each team's cadence collides with the others', and the reset schedule becomes a shared bottleneck that no single team owns or can fix unilaterally. Drift compounds alongside this: the longer the interval between resets, the more accumulated divergence any one reset has to clear, which makes the reset itself more risky and more likely to introduce its own instability.

This is not a process failure. It is a structural property of the shared-resource model.

Why Per-PR Provisioned Preview Environments Can Be Production-Shaped Fresh

The per-PR model inverts the accumulation problem by eliminating persistence entirely. Each PR triggers a provisioning event that creates a new, isolated runtime from a defined starting state. The environment exists for the life of the PR. When the PR closes, the environment is torn down and its state is discarded.
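The provision, use, and discard lifecycle maps naturally onto a context manager. This is a local sketch only: a temporary directory with a SQLite file stands in for the isolated runtime, and the `preview_environment` function, PR number, and seed statements are assumptions made for illustration.

```python
import contextlib
import shutil
import sqlite3
import tempfile
from pathlib import Path


@contextlib.contextmanager
def preview_environment(pr_number, seed_statements):
    """Create an isolated workspace for one PR, then discard all of its state."""
    workdir = Path(tempfile.mkdtemp(prefix=f"preview-pr-{pr_number}-"))
    conn = sqlite3.connect(workdir / "app.db")
    try:
        for statement in seed_statements:
            conn.execute(statement)
        conn.commit()
        yield conn, workdir
    finally:
        # Teardown runs whether the tests passed, failed, or raised.
        conn.close()
        shutil.rmtree(workdir, ignore_errors=True)


# Hypothetical seed for the branch under review.
SEED = [
    "CREATE TABLE users (id INTEGER PRIMARY KEY, external_id TEXT)",
    "INSERT INTO users VALUES (1, 'acct-001')",
]

with preview_environment(42, SEED) as (conn, workdir):
    rows = conn.execute("SELECT id, external_id FROM users").fetchall()
    existed_during_pr = workdir.exists()

exists_after_teardown = workdir.exists()
```

Putting teardown in the `finally` block is the important design choice: state is discarded on every exit path, so nothing survives to influence the next PR's environment.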

Because each environment starts fresh, there is no accumulated data shape divergence. Because secrets/config propagation happens at provision time, there is no stale credentials problem. Because feature flags are set explicitly at provisioning, not toggled manually over time, there is no accumulated flag state. Because the URL is unique per PR, there is no browser cache contamination.

The per-PR orchestration cost is real. Creating an isolated runtime for every PR requires infrastructure automation: provisioning scripts, secrets injection, database seeding, service routing, and teardown. This is the managed preview infrastructure problem, and it is the reason most teams start with shared staging and migrate to per-PR provisioning only after the drift pain becomes visible.

What changes with managed preview infrastructure is who absorbs the orchestration cost. When the provisioning is handled by a platform layer, the developer experience looks like a URL in the PR comment. The isolation is real; the complexity is invisible.

The Five Drift Surfaces, Side by Side

| Drift surface | Where drift originates | Why staging accumulates it | Why per-PR previews avoid it |
|---|---|---|---|
| Data shape | One-off migrations, seed mutations, schema evolution | Mutations persist; reset windows create contention | Each environment seeds from a versioned baseline |
| Third-party APIs | Vendor sandbox lifecycle diverging from production tier | Single config points at a sandbox that evolves independently | API config is explicit and version-controlled per provision |
| Env vars / secrets | Credential rotations and new service endpoints | Config is set once; updates are missed or deprioritized | Secrets injected from vault at provision time; always current |
| Feature flags | Manual flag toggles for testing that are never cleaned up | Toggles accumulate; staging cohort matches no real user | Flag state set intentionally at provision; no carryover |
| Browser-rendered state | Cached assets, service workers, stale bundles | Shared URL accumulates cache across all developer sessions | Unique URL per PR; clean browser context by default |

How Autonoma Configures Preview Environments for Production Parity

Drift is structurally a shared-resource problem. A single staging environment absorbs writes from every PR, every developer, and every automated job. No process discipline fully counteracts the accumulation; the model itself is the source of the divergence. The only complete solution is to stop sharing the runtime.

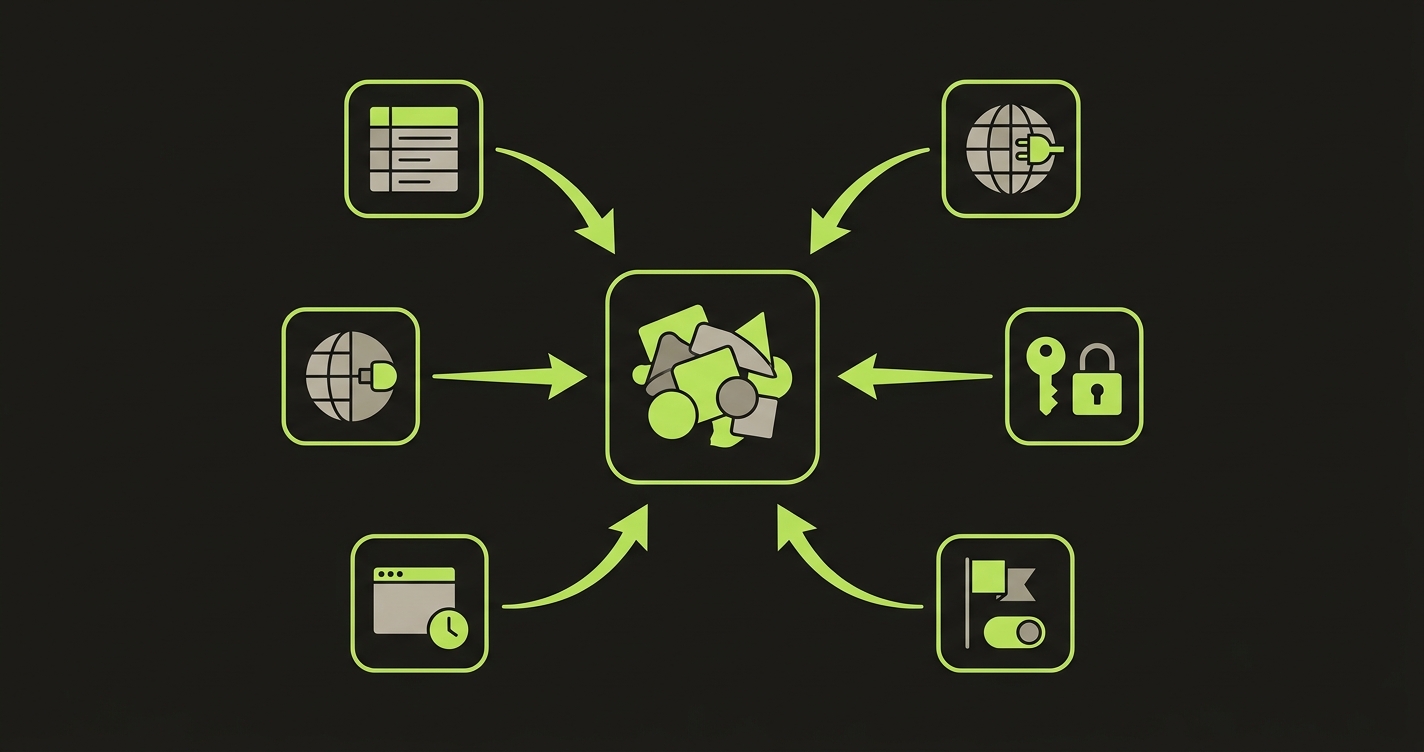

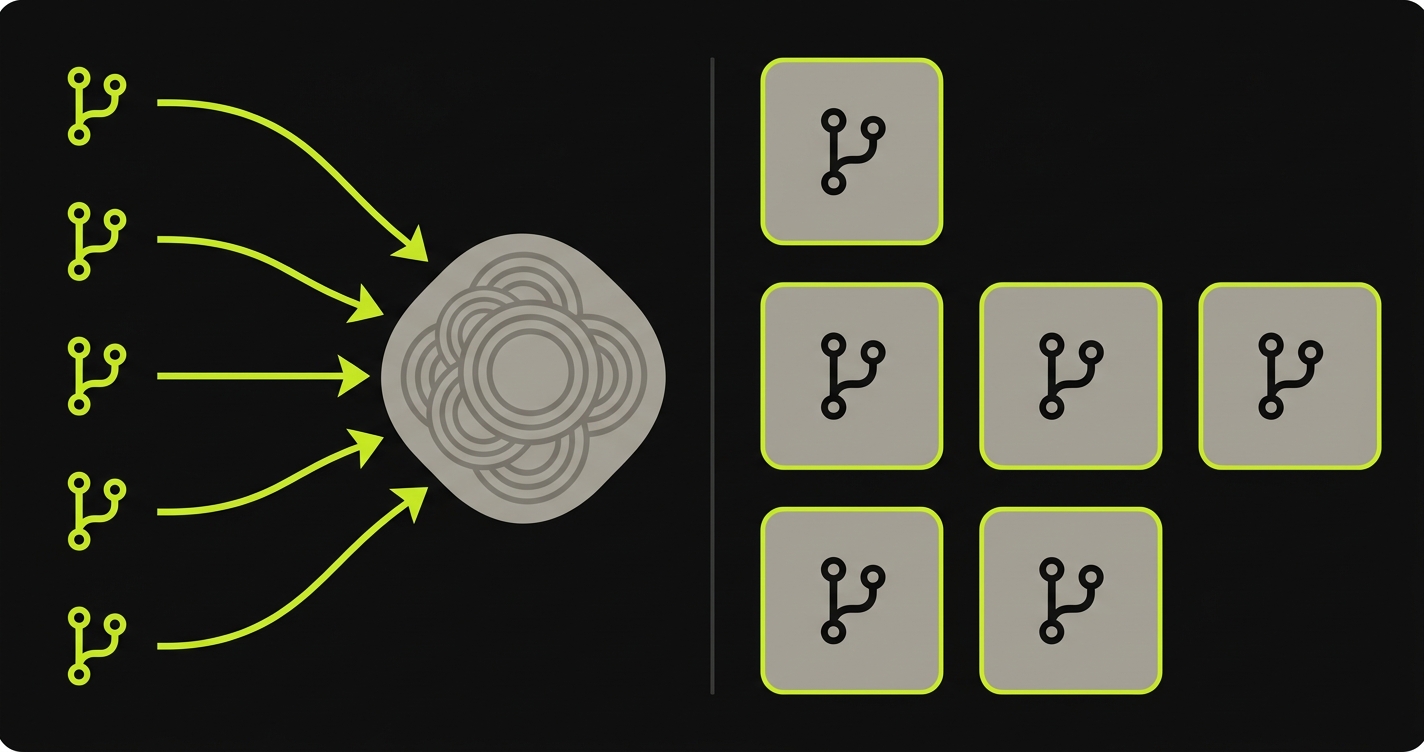

Autonoma approaches this as a two-layer problem. Layer 1 is per-PR orchestration: each PR triggers the provisioning of an isolated runtime with production-shaped config, current secrets injected at provision time through automated secrets/config propagation, a seeded database matching the branch's migration state, and feature flags set to the production baseline. This is the managed preview infrastructure layer.

Layer 2 is the testing layer: a Planner agent reads the codebase and plans test cases for the new PR's code paths, an Automator agent runs those tests against the fresh preview environment before merge, and a Maintainer agent keeps those tests passing as the codebase evolves. Because the environment is provisioned fresh per PR through the ephemeral infrastructure lifecycle, the tests run against a production-like environment rather than an accumulated one. Drift does not contaminate the result. The full architecture is documented in how Autonoma preview environments work.

If you're sizing how much drift your shared staging absorbs and what changes when previews are fresh per PR, our co-founder Eugenio walks teams through it weekly.

FAQ

What is environment drift, and why does it matter?

Environment drift is the gap between your pre-production environment and production, accumulated over time through data mutations, API contract changes, config updates, feature flag states, and browser-cached responses. It matters because drift makes your pre-merge tests unreliable: a green result against a drifted staging environment does not guarantee the same behavior in production.

Why does shared staging accumulate drift?

Shared staging is a single runtime touched by every developer, every PR, and every seed script. Each write operation, each credentials rotation, each feature flag toggle compounds on the previous state. Over weeks or months, the environment state becomes a layered artifact of every change that has passed through it. Per-PR isolated environments start fresh, so there is no accumulation.

What does production parity mean for a preview environment?

Production parity means the preview environment mirrors production on the dimensions that affect test reliability: data shape, API contracts, config and secrets, feature flag states, and cached assets. A production-like environment does not mean identical data volume; it means the same structural conditions that determine whether a feature works correctly.

Do periodic staging resets eliminate drift?

Periodic resets help but do not eliminate drift. Between resets, staging re-accumulates state from every active PR. The reset window itself creates contention: teams cannot merge or deploy during the reset. And the reset frequency is always a compromise between freshness and disruption. Per-PR provisioning removes the tradeoff by making each environment fresh by design.

How do feature flags drift in staging?

Feature flag states in staging are often toggled manually to unblock specific tests or demos. Those toggles persist. Over time, staging ends up with a mix of flag states that does not match any real production cohort. A PR that looks correct in staging may behave differently in production simply because the flag state it was tested against does not exist there.

What is secrets and config propagation?

Secrets and config propagation refers to how environment variables, API keys, and service credentials are delivered to a preview environment at provision time. In shared staging, config is set once and rarely updated, so rotated credentials or new service endpoints can leave staging pointing at stale values. Per-PR provisioning with automated secrets injection means each environment gets the current config at the moment it is created.