API testing automation is the practice of programmatically verifying that your API endpoints behave according to their contract: correct status codes, consistent response shapes, proper validation, and predictable error handling. When teams use AI code generators to build backends, this discipline becomes critical. AI tools like Cursor, Copilot, and Claude Code can generate five new endpoints in the time it takes to write one contract test. That asymmetry creates an invisible gap between your deployed API surface and your verified API surface. This guide covers the specific bugs AI introduces in API code, the tools used to catch them (open-source and commercial), and a practical strategy for closing the contract gap before it becomes a production incident.

Your AI coding tool just shipped your fastest sprint ever. Twenty-three endpoints in two days. The frontend team is already building against them.

Nobody asked: are these endpoints actually correct?

Not "does the code compile" correct. Not "does the happy path return data" correct. Contract correct. Do they use the right HTTP status codes? Do they validate inputs consistently? Will userId in one response quietly become user_id in another?

That inconsistency won't show up in your IDE. It won't fail a type check. It will fail at 2am when a client SDK parses a response shape it has never seen before.

This is the specific problem with AI-generated backends: the velocity is real, but the contract quality is unpredictable. And most teams are not running enough API testing automation to catch what's coming.

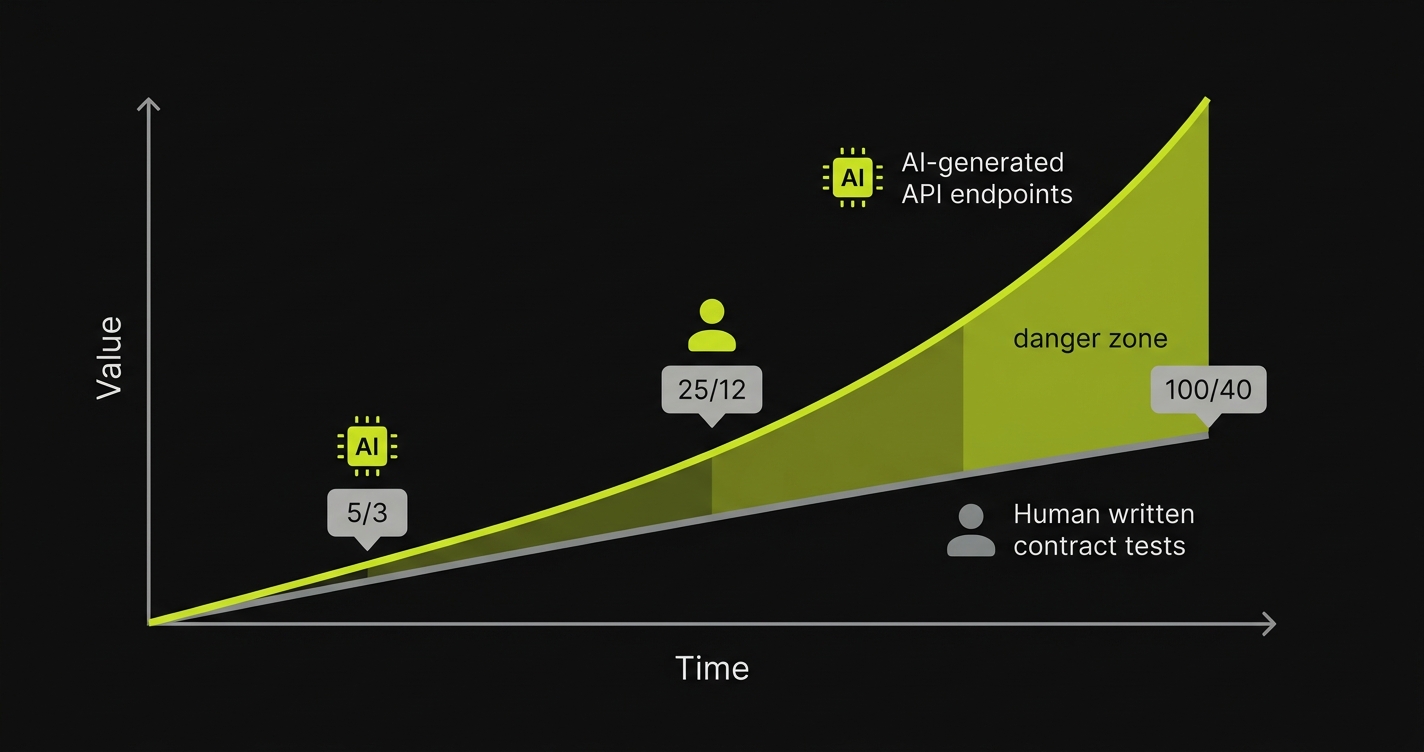

The Contract Gap Is a Math Problem

Here's a pattern we see consistently. A team adopts an AI IDE. Endpoint velocity goes up immediately: that part is real. But test coverage doesn't scale at the same rate, because writing contract tests still requires human time. The gap compounds:

Day 1: 5 new endpoints, team writes contracts for 3. Two endpoints are unverified.

Day 5: 25 endpoints in the system, 12 contracts written. Thirteen endpoints have no contract validation.

Day 20: 100 endpoints shipped. 40 contracts exist. Sixty percent of your API surface is untested.
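Those three snapshots reduce to one subtraction and one ratio. Here is a minimal JavaScript sketch of that arithmetic. It uses only the illustrative counts above, and `contractGap` is a hypothetical helper name:

```javascript
// Compute how much of the API surface has no contract test.
// The day/endpoint/contract figures are the illustrative numbers from the example above.
function contractGap(endpoints, contracts) {
  const unverified = endpoints - contracts;
  const percentUnverified = Math.round((unverified / endpoints) * 100);
  return { unverified, percentUnverified };
}

const snapshots = [
  { day: 1, endpoints: 5, contracts: 3 },
  { day: 5, endpoints: 25, contracts: 12 },
  { day: 20, endpoints: 100, contracts: 40 },
];

for (const { day, endpoints, contracts } of snapshots) {
  const { unverified, percentUnverified } = contractGap(endpoints, contracts);
  console.log(`Day ${day}: ${unverified} unverified endpoints (${percentUnverified}% of the surface)`);
}
```

The unverified share climbs from 40% on day 1 to 52% on day 5 and 60% on day 20. The team never stops writing contracts, but the endpoint count grows faster than the contract count.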

The dangerous part is that the gap is invisible. Your CI pipeline passes. Your end-to-end tests exercise the happy paths. The problem isn't missing functionality. It's unverified behavior in the corners: error responses, edge case inputs, field naming consistency across endpoints.

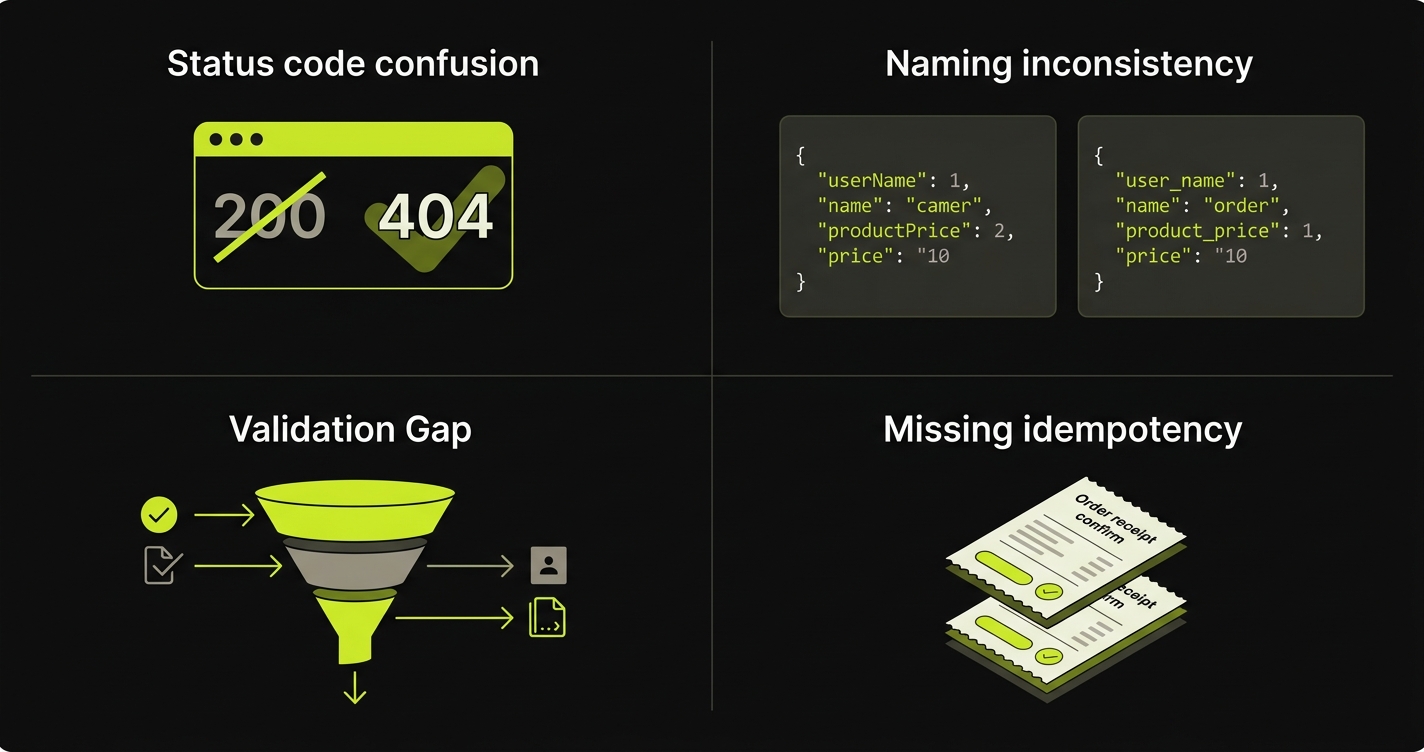

The Specific Bugs AI Introduces in API Code

AI code generators produce different failure modes from human-written code. A senior engineer who has built five APIs knows that a "not found" response should return 404, not 200. They've been burned by inconsistent field naming before. They know that email validation needs length limits, not just format checks.

AI doesn't carry that institutional memory. It pattern-matches from its training data, which means it reproduces common patterns and common mistakes with equal confidence.

Status code confusion. This is the most frequent: AI returns 200 OK with a body like { "error": "User not found" } instead of returning a 404. It's a real problem because it looks correct in testing (the response is a valid JSON object), but client code checking response.status === 200 will treat it as a success. The error is silently swallowed.
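The JavaScript sketch below makes the failure concrete and shows a check that flags it. It assumes, as in the example above, that the API reports errors under a top-level `error` (or `errors`) key. `findMaskedError` and `naiveClientSucceeded` are illustrative names, not part of any library:

```javascript
// Flag the "200 with an error body" pattern.
// Assumption: errors are reported under a top-level "error" or "errors" key;
// adjust errorKeys to match your own API's error format.
function findMaskedError(status, body, errorKeys = ["error", "errors"]) {
  const isSuccessStatus = status >= 200 && status < 300;
  const hasErrorKey =
    body !== null &&
    typeof body === "object" &&
    errorKeys.some((key) => Object.prototype.hasOwnProperty.call(body, key));
  return isSuccessStatus && hasErrorKey;
}

// A client that trusts the status code alone treats the masked error as success.
function naiveClientSucceeded(status) {
  return status === 200;
}

const masked = { status: 200, body: { error: "User not found" } };
const correct = { status: 404, body: { error: "User not found" } };

console.log(naiveClientSucceeded(masked.status)); // true: the error is swallowed
console.log(findMaskedError(masked.status, masked.body)); // true: flagged
console.log(findMaskedError(correct.status, correct.body)); // false: the status carries the error
```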

Inconsistent field naming. AI generates each endpoint independently, often from different prompts or on different days. One endpoint returns { "userId": 123 }. Another returns { "user_id": 123 }. Both work in isolation. The naming inconsistency only surfaces when a client tries to use both and finds that the same concept has two different field names in the same API.
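You can surface this before a client does. Collect every key from sample responses across endpoints, then group spellings that differ only by underscores and letter case. A JavaScript sketch follows. The sample response bodies and function names are hypothetical:

```javascript
// Recursively collect every object key in a parsed JSON value.
function collectKeys(value, keys = new Set()) {
  if (Array.isArray(value)) {
    value.forEach((item) => collectKeys(item, keys));
  } else if (value !== null && typeof value === "object") {
    for (const [key, child] of Object.entries(value)) {
      keys.add(key);
      collectKeys(child, keys);
    }
  }
  return keys;
}

// Reduce a key to its "concept" so that userId and user_id collide.
function normalize(key) {
  return key.replace(/_/g, "").toLowerCase();
}

// Return every concept that appears under more than one spelling.
function findNamingConflicts(responses) {
  const spellings = new Map(); // concept -> set of spellings seen
  for (const body of responses) {
    for (const key of collectKeys(body)) {
      const concept = normalize(key);
      if (!spellings.has(concept)) spellings.set(concept, new Set());
      spellings.get(concept).add(key);
    }
  }
  return [...spellings.values()]
    .filter((set) => set.size > 1)
    .map((set) => [...set].sort());
}

// Hypothetical responses from two endpoints generated on different days.
const getUser = { userId: 123, name: "Ada" };
const listOrders = { orders: [{ orderId: 9, user_id: 123 }] };

console.log(findNamingConflicts([getUser, listOrders])); // one conflict: userId vs user_id
```

Grouping by a normalized form catches the mismatch regardless of which convention you choose. Deciding which spelling wins is still a team decision.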

Validation surface gaps. AI tends to validate format without validating boundaries. It will add a regex check for email format but miss that an email string longer than 10KB is a denial-of-service vector. It validates that a date string is in ISO format but doesn't reject dates a thousand years in the future. The validation looks complete in a code review but has gaps that only matter at the extremes.
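The JavaScript sketch below shows a boundary-aware version of those two checks. Its limits come from several sources:

- The 254-character email limit is the commonly used practical maximum, derived from RFC 5321's path length limit.
- The 64-character local-part limit is also from RFC 5321.
- The 100-year window for dates is an arbitrary example rule, not a standard.

The format regex is deliberately loose.

```javascript
// Boundary-aware validation. Each validator returns an error message, or null when valid.
// Assumptions: MAX_EMAIL_LENGTH and MAX_LOCAL_PART_LENGTH follow RFC 5321 size limits;
// MAX_YEARS_AHEAD is an illustrative business rule.
const MAX_EMAIL_LENGTH = 254;
const MAX_LOCAL_PART_LENGTH = 64;
const EMAIL_FORMAT = /^[^\s@]+@[^\s@]+\.[^\s@]+$/; // loose format check only
const ISO_DATE = /^\d{4}-\d{2}-\d{2}(T\d{2}:\d{2}(:\d{2}(\.\d+)?)?(Z|[+-]\d{2}:\d{2})?)?$/;
const MAX_YEARS_AHEAD = 100;

function validateEmail(email) {
  if (typeof email !== "string") return "email must be a string";
  // Check length first so an oversized payload never reaches the regex.
  if (email.length > MAX_EMAIL_LENGTH) return "email is too long";
  if (!EMAIL_FORMAT.test(email)) return "email format is invalid";
  const localPart = email.slice(0, email.indexOf("@"));
  if (localPart.length > MAX_LOCAL_PART_LENGTH) return "email local part is too long";
  return null;
}

function validateDate(value, now = new Date()) {
  if (typeof value !== "string") return "date must be a string";
  // Format check plus a real parse, which rejects strings Date cannot interpret.
  if (!ISO_DATE.test(value) || Number.isNaN(new Date(value).getTime())) {
    return "date is not a valid ISO 8601 string";
  }
  // Boundary check: reject dates beyond the allowed window.
  const latest = new Date(now.getTime());
  latest.setUTCFullYear(latest.getUTCFullYear() + MAX_YEARS_AHEAD);
  if (new Date(value) > latest) return "date is too far in the future";
  return null;
}

console.log(validateEmail("a".repeat(10000) + "@example.com")); // email is too long
console.log(validateEmail("test@example.com")); // null
console.log(validateDate("3024-01-01", new Date("2025-01-01T00:00:00Z"))); // date is too far in the future
```

Checking length before running the regex also keeps a 10KB payload from ever reaching pattern matching.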

Missing idempotency. AI generates a POST /orders endpoint correctly: it creates an order, returns a 201, looks clean. What it doesn't add: idempotency key handling. A network timeout causes the client to retry. Two identical orders are created. The endpoint was never designed to handle double-submission. A human engineer with payment system experience would have caught this. The AI generated the obvious case and stopped.
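Here is a minimal in-memory JavaScript sketch of the missing piece. Several parts are assumptions for illustration:

- The `OrderService` class.
- The `Map`-based key store.
- The convention of replaying with status 200.

A production service would keep keys in a shared store with an expiry and guard against two concurrent requests carrying the same key.

```javascript
// Minimal idempotency-key handling for a hypothetical POST /orders handler.
// Assumptions: keys live in a Map (a real service needs a shared store with expiry),
// and a replayed request returns the stored order with status 200.
class OrderService {
  constructor() {
    this.orders = [];
    this.responsesByKey = new Map(); // idempotency key -> order created for that key
    this.nextId = 1;
  }

  createOrder(idempotencyKey, payload) {
    if (idempotencyKey && this.responsesByKey.has(idempotencyKey)) {
      // Retry of a request already handled: return the original order, create nothing.
      return { status: 200, body: this.responsesByKey.get(idempotencyKey) };
    }
    const order = { orderId: this.nextId++, ...payload };
    this.orders.push(order);
    if (idempotencyKey) this.responsesByKey.set(idempotencyKey, order);
    return { status: 201, body: order };
  }
}

const service = new OrderService();
const payload = { productId: 1, quantity: 1 };
const first = service.createOrder("key-123", payload);
const retry = service.createOrder("key-123", payload); // client retried after a timeout
console.log(first.status, retry.status); // 201 200
console.log(service.orders.length); // 1
```

Many implementations also compare the stored request body and reject a reused key that arrives with a different payload. This sketch omits that check.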

These aren't hypothetical. They're the class of bugs that API testing automation is specifically designed to catch, and that only surface in production when you haven't written the tests.

Autonoma complements API testing by validating the full user experience: AI agents navigate your application end-to-end on real browsers, catching the frontend-backend integration issues that API tests alone miss.

API Test Automation Tools: The Open-Source Baseline

Before comparing tools, it's worth establishing what a working API testing setup looks like for a team using AI-generated code. Two tools cover most of the ground. The first is Postman with its open-source CLI runner, Newman, for exploratory validation and CI execution. The second is REST Assured (open source), for Java backends that need code-level contract testing.

Postman + Newman for Contract Validation in CI

Postman is the gold standard for manual and exploratory API testing. Newman is its CLI runner, which turns a Postman collection into a CI step. Together, they're the fastest way to put a validation gate on AI-generated endpoints.

A collection for an AI-generated user service might look like this. You create requests for each endpoint, add test assertions in the Tests tab, and run the collection through Newman in your pipeline:

```javascript
// Postman test assertions for a POST /users endpoint.
// Each group below goes in the Tests tab of a different request in the collection.

// Request: POST /users with a valid payload
pm.test("Status code is 201 for successful creation", function () {
  pm.response.to.have.status(201);
});

pm.test("Response body has consistent field naming (camelCase)", function () {
  const json = pm.response.json();
  pm.expect(json).to.have.property("userId");
  pm.expect(json).to.not.have.property("user_id"); // catch naming inconsistency
});

// Request: POST /users with an invalid email
pm.test("Error response uses 400 not 200", function () {
  // The status code must signal the failure. An error message in the body is fine;
  // a 200 carrying an error body is the bug this test guards against.
  pm.response.to.have.status(400);
});

// Request: POST /users with a 10KB email string
pm.test("Email input rejects oversized strings", function () {
  pm.response.to.have.status(400);
});
```

Newman CI integration in GitHub Actions:

```yaml
# .github/workflows/api-contract-tests.yml
name: API Contract Tests

on:
  push:
    branches: [main, develop]
  pull_request:
    branches: [main]

jobs:
  api-tests:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Install Newman
        run: npm install -g newman newman-reporter-htmlextra

      - name: Run API Contract Tests
        run: |
          newman run ./tests/api-contracts.postman_collection.json \
            --environment ./tests/staging.postman_environment.json \
            --reporters cli,htmlextra \
            --reporter-htmlextra-export ./reports/api-test-report.html \
            --bail

      - name: Upload Test Report
        # v3 of upload-artifact is deprecated on GitHub.com; v4 is the current major version
        uses: actions/upload-artifact@v4
        if: always()
        with:
          name: api-test-report
          path: reports/
```

The --bail flag stops the run on first failure, which matters in CI. You want a clear signal, not a report showing 40 passing tests that obscures the 3 contract violations.

REST Assured for Java Teams

For teams running Spring Boot or any JVM-based backend, REST Assured integrates directly with JUnit and gives you contract validation as part of your existing test suite:

```java
// REST Assured contract tests for AI-generated endpoints
import io.restassured.RestAssured;
import org.junit.jupiter.api.BeforeAll;
import org.junit.jupiter.api.Test;

import java.util.UUID;

import static io.restassured.RestAssured.*;
import static org.hamcrest.Matchers.*;

public class UserApiContractTest {

    @BeforeAll
    static void configureBaseUri() {
        // Assumed default target; override with -Dapi.baseUri=<your test environment URL>
        RestAssured.baseURI = System.getProperty("api.baseUri", "http://localhost:8080");
    }

    @Test
    public void createUser_shouldReturn201NotError200() {
        given()
            .contentType("application/json")
            .body("{\"email\": \"test@example.com\", \"name\": \"Test User\"}")
        .when()
            .post("/api/users")
        .then()
            .statusCode(201)                  // not 200 with an error body
            .body("userId", notNullValue())   // camelCase, not user_id
            .body("user_id", nullValue());    // catch naming inconsistency
    }

    @Test
    public void createUser_withOversizedEmail_shouldReturn400() {
        String oversizedEmail = "a".repeat(10000) + "@example.com"; // String.repeat needs Java 11+

        given()
            .contentType("application/json")
            .body("{\"email\": \"" + oversizedEmail + "\"}")
        .when()
            .post("/api/users")
        .then()
            .statusCode(400); // AI often skips length validation; this test catches it
    }

    @Test
    public void createOrder_isIdempotent_withSameKey() {
        // A fresh key per run, so a key cached by an earlier run can't mask a failure
        String idempotencyKey = UUID.randomUUID().toString();
        String payload = "{\"productId\": 1, \"quantity\": 1}";

        // First request: should create the order.
        // Assumes the response exposes the new order's ID as "orderId".
        Object firstOrderId =
            given()
                .header("Idempotency-Key", idempotencyKey)
                .contentType("application/json")
                .body(payload)
            .when()
                .post("/api/orders")
            .then()
                .statusCode(201)
                .extract().path("orderId");

        // Second request with the same key: should NOT create a duplicate
        given()
            .header("Idempotency-Key", idempotencyKey)
            .contentType("application/json")
            .body(payload)
        .when()
            .post("/api/orders")
        .then()
            .statusCode(200) // this suite's convention: 200 = replayed result; some APIs replay the original 201
            .body("orderId", equalTo(firstOrderId)); // same order returned, not a second one
    }
}
```

This is the manual baseline. It works. The problem is that writing these tests takes time, and the AI backend keeps generating new endpoints while you're writing them. That's how the contract gap widens.

API Testing Tools Comparison: Open-Source vs Commercial

| Tool | Test Creation | Contract Testing | AI-Code Bug Detection | CI/CD Integration | Language Support | Pricing |
|---|---|---|---|---|---|---|
| Postman / Newman | Manual (GUI + JS assertions) | Good (schema validation, status checks) | Only what you write manually | Excellent (Newman CLI) | Language-agnostic (HTTP) | Free tier; Teams from $29/user/mo |
| REST Assured | Code (Java/Groovy DSL) | Strong (code-level assertions) | Only what you write manually | Excellent (JUnit integration) | Java, Kotlin, Groovy | Free (open source) |
| Karate | BDD-style DSL (Gherkin-like) | Good (schema validation) | Only what you write manually | Good (Maven/Gradle) | Language-agnostic (Karate DSL) | Free (open source) |
| Hoppscotch | Manual (GUI) | Basic (manual assertions) | Minimal | Limited | Language-agnostic (HTTP) | Free; Cloud from $12/user/mo |
| ReadyAPI | Visual + scripting (Groovy) | Strong (WSDL/Swagger validation) | Only what you configure | Good (CLI runner) | Java, REST, SOAP, GraphQL | $749+/year per user |
| Mabl | Low-code + AI suggestions | Moderate | Limited (surface-level) | Good (native CI plugins) | Language-agnostic (HTTP) | Custom pricing (contact sales) |
| TestSigma | NLP + low-code | Moderate | Limited | Good | Language-agnostic | From $499/mo (platform) |
| Autonoma | Automatic (reads codebase) | Strong (validates against code spec) | Built-in (catches AI contract violations) | Native (CI/CD + terminal) | Language-agnostic | Free / Open Source |

A few things worth unpacking here.

Postman and REST Assured are the workhorses. Every team should have them. Postman is invaluable for exploration: you want someone to manually poke at a new AI-generated endpoint before it goes to production. Newman makes that collection a CI gate. REST Assured is the right choice for Java backends where you want contract tests to live alongside unit tests.

ReadyAPI is powerful but heavy. It made sense in an era of SOAP services and enterprise integration testing. For teams running REST APIs on modern frameworks, the cost and complexity are hard to justify unless you're already in the SmartBear ecosystem.

All the manual tools share the same gap: they only catch what you wrote tests for. If you don't have a test asserting that a missing resource returns 404 rather than 200 with an error body, no tool will flag it. That's not a tooling limitation. It's a coverage limitation.

This is where the equation changes for teams dealing with the contract gap. Postman covers the endpoints you've already thought about. The problem is the 60 endpoints you haven't gotten to yet.

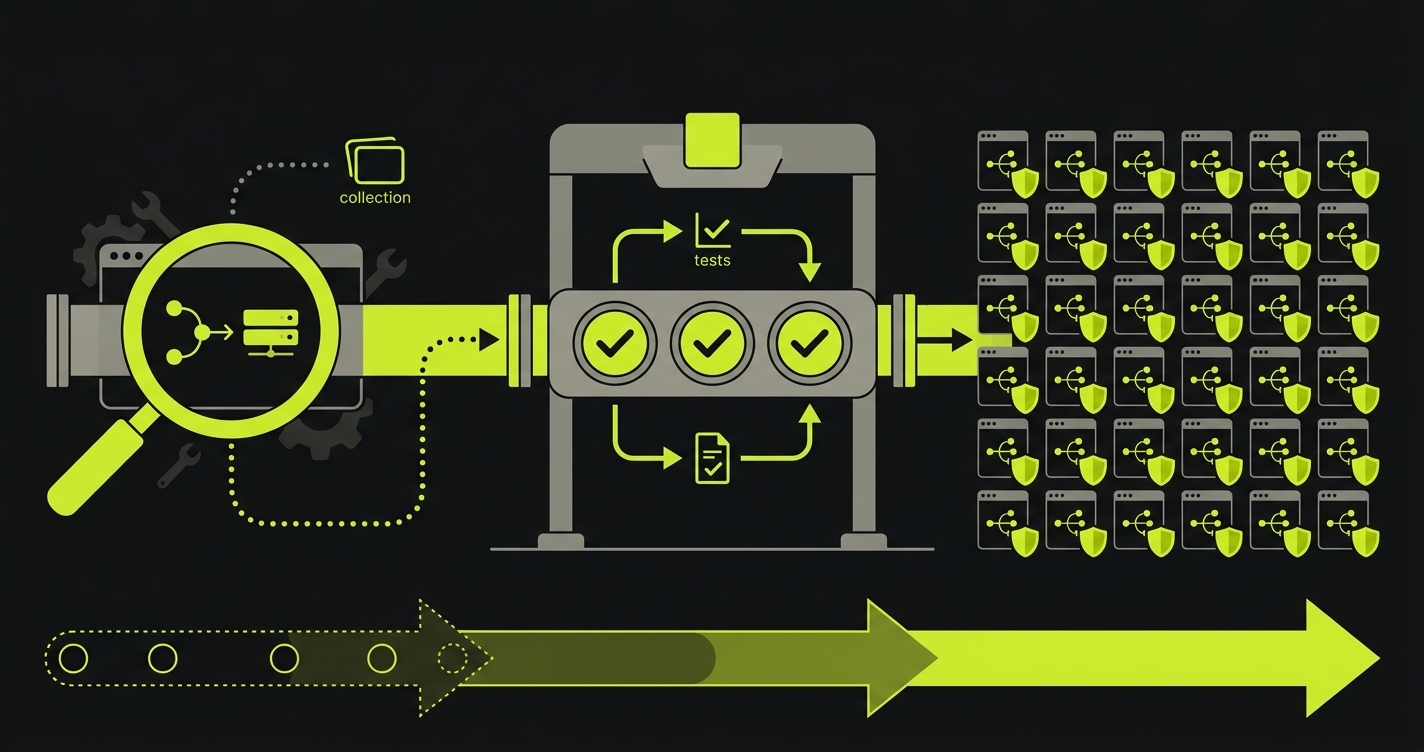

Closing the API Contract Testing Gap

The tools above solve the execution problem. A team using Postman with Newman and a good collection has a solid API testing automation foundation. What they don't solve is the creation problem: who writes the contracts for the 60 endpoints that haven't been tested?

The answer is shifting toward automated contract generation. Instead of a human manually writing assertions for every endpoint, the next generation of tooling reads your codebase, infers what each endpoint is supposed to do, and generates validation tests from the code itself. No recording, no manual scripting, no maintaining a separate collection by hand.

This is the approach we took with Autonoma. Our Planner agent analyzes your API code, understands the intended behavior from the implementation, and produces contract tests automatically. An endpoint that returns 200 with an error body when the code clearly intends to signal failure? Flagged. An endpoint where the field naming diverges from the rest of the API? Caught from code analysis before it reaches production. The bugs described earlier in this guide are exactly the class of issues automated contract generation is built to surface.
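How the Planner agent works internally isn't covered here, and the sketch below doesn't attempt to reproduce it. It shows a much simpler, spec-driven relative of the idea in JavaScript. Each operation declares its allowed status codes and required fields, and observed responses are checked against that declaration. The spec shape, the operation names, and the `checkResponse` helper are all hypothetical:

```javascript
// Hypothetical, hand-written spec: allowed status codes per operation,
// and the fields each status's response body must include.
const spec = {
  "GET /users/:id": {
    200: { required: ["userId", "email"] },
    404: { required: [] },
  },
  "POST /orders": {
    201: { required: ["orderId"] },
  },
};

// Returns a list of violations; an empty list means the response matches the spec.
function checkResponse(operation, status, body) {
  const statuses = spec[operation];
  if (!statuses) return [`${operation}: no contract declared`];
  const contract = statuses[status];
  if (!contract) return [`${operation}: undeclared status ${status}`];
  return contract.required
    .filter((field) => !(body && Object.prototype.hasOwnProperty.call(body, field)))
    .map((field) => `${operation}: missing field "${field}" for status ${status}`);
}

// 200 with an error body: the success contract requires fields the error body lacks.
console.log(checkResponse("GET /users/:id", 200, { error: "User not found" })); // two violations
// A correct 404 passes.
console.log(checkResponse("GET /users/:id", 404, { error: "User not found" })); // []
// A 200 where only 201 is declared is flagged.
console.log(checkResponse("POST /orders", 200, { orderId: 42 })); // one violation
```

The checking itself is the easy part. The hard part, which automated contract generation aims at, is producing a spec like this for every endpoint without a human writing it.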

The practical setup looks like this: use Postman for exploration and manual verification when endpoints first appear. Use Newman to run your existing collection in CI. Layer in Autonoma for continuous validation across the full API surface, including the endpoints nobody has manually tested yet. You're not replacing your existing workflow. You're closing the gap between the endpoints you've covered and the ones that are silently accumulating risk.

This connects to a broader pattern in teams adopting AI development at scale. The problem of continuous testing for AI development is fundamentally about closing the gap between generation velocity and verification velocity. API testing is one of the clearest places where that gap becomes visible and measurable.

What to Do This Sprint

The contract gap is not a future problem. It's current. Here's the practical path:

Start by auditing your existing endpoint count versus your existing contract test count. Count the gap. If it's more than 20%, you have a coverage debt problem that's growing with every sprint.
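Once you can list both sides, the audit takes only a few lines of code. The JavaScript sketch below assumes two exported lists:

- The routes your service exposes, for example from your router or an OpenAPI document.
- The routes your contract tests hit.

`auditCoverage` and the sample routes are hypothetical:

```javascript
// Compare exposed routes with tested routes and report the untested share.
// thresholdPercent defaults to the 20% rule of thumb from the text.
function auditCoverage(endpoints, testedEndpoints, thresholdPercent = 20) {
  const tested = new Set(testedEndpoints);
  const untested = endpoints.filter((endpoint) => !tested.has(endpoint));
  // Multiply before dividing to keep whole-number percentages exact.
  const gapPercent = endpoints.length === 0 ? 0 : (untested.length * 100) / endpoints.length;
  return { untested, gapPercent, overThreshold: gapPercent > thresholdPercent };
}

const exposedRoutes = ["GET /users/:id", "POST /users", "DELETE /users/:id", "POST /orders", "GET /orders/:id"];
const testedRoutes = ["GET /users/:id", "POST /users"];

const report = auditCoverage(exposedRoutes, testedRoutes);
console.log(report.untested); // the three routes with no contract test
console.log(report.gapPercent, report.overThreshold); // 60 true
```

Normalize route strings the same way on both sides (`/users/:id` versus `/users/{id}`). Otherwise every parameterized route will look untested.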

For the endpoints you do have, run them through a Postman collection with the specific assertions described earlier: success status codes, error status codes, field naming consistency, and input validation boundaries. These four assertions will catch the most common AI-generated contract bugs.

For new endpoints from AI, establish a rule: no endpoint merges to main without a status code test and a field naming test at minimum. Fifteen minutes of Postman work per endpoint is enough to catch the critical class of bugs.

For the longer tail, add automated contract validation to your pipeline. Tools like Autonoma generate contract tests from your codebase, which means every endpoint gets coverage regardless of whether a human has written a test for it yet. Autonoma is open source and self-hostable, with a free tier. The contract gap compounds because manual test creation can't scale to match AI generation velocity. Automated validation closes that gap continuously instead of sprint by sprint.

If you're thinking about the broader test coverage picture beyond API contracts, the automated regression testing guide covers how to structure the full testing layer for codebases that are evolving faster than teams can manually verify.

FAQ

API testing automation is the practice of programmatically verifying that API endpoints behave according to their intended contract. This includes checking HTTP status codes, response body structure, input validation behavior, error handling consistency, and performance characteristics. Automated API tests run in CI/CD pipelines and catch regressions before they reach production. Tools like Postman/Newman, REST Assured, and Karate are commonly used for automated API testing.

AI code generators pattern-match from training data rather than applying accumulated engineering judgment. A senior engineer remembers being burned by 200-with-error-body responses and won't repeat the mistake. AI generates the statistically common pattern, which sometimes includes common mistakes. Specific issues include wrong HTTP status codes, inconsistent field naming across endpoints (camelCase vs snake_case), missing input boundary validation, and absent idempotency handling. These bugs don't fail type checks or unit tests. They require explicit contract testing to surface.

Postman is a GUI-based API testing tool used for manual exploration, request building, and writing test assertions. Newman is Postman's command-line collection runner, which executes Postman collections in CI/CD pipelines without a GUI. Typically, engineers build and validate collections in Postman, then run them automatically via Newman in GitHub Actions, GitLab CI, or other pipeline tools. Newman ships with CLI, JSON, and JUnit XML reporters, supports add-on reporters such as htmlextra for HTML output, and integrates with test result dashboards.

End-to-end tests verify that user flows work from the UI to the database. They typically exercise the happy path and a limited set of error states. Contract testing specifically verifies the interface agreement between an API and its consumers: exact field names in responses, precise HTTP status codes for every response type, input validation behavior at the boundaries, and error response format consistency. A flow-based E2E test will pass even if an endpoint returns 200 with an error body, because the test is checking that the user can complete the flow, not that the status code is semantically correct.

Add explicit status code assertions to every endpoint's contract tests. In Postman, use pm.response.to.have.status(expectedCode) for each response type. In REST Assured, use .then().statusCode(expectedCode). For AI-generated code specifically, check for the pattern where error conditions return 200. Test with invalid inputs, missing required fields, and non-existent resource IDs. Assert a 400 for the first two and a 404 for the last. If any of those requests returns 200, you have the error-body-masking bug.

Use Postman when you want to manually explore new endpoints, build interactive documentation, or write targeted contract tests for known endpoints. Postman is excellent for the API surface you've already analyzed. Autonoma addresses a different problem: the endpoints you haven't gotten to yet. When AI code generation means your endpoint count grows faster than your test count, Autonoma reads the codebase and generates contract validation automatically for the full API surface, including the 60% that no human has manually tested. The two tools are complementary, not competing.

Testing AI-generated APIs requires contract-level validation beyond what unit tests and end-to-end tests cover. Start by auditing every endpoint for correct HTTP status codes (AI frequently returns 200 for error conditions), consistent field naming across endpoints (camelCase vs snake_case mixing), and input validation at boundaries (format checks without length limits). Use Postman collections with explicit assertions for these patterns, run them via Newman in CI/CD, and consider automated contract validation tools for the endpoints your team hasn't manually tested yet. The key difference from testing human-written APIs: AI-generated code introduces consistent, predictable bug patterns that you can systematically test for.

The fastest path is Postman plus Newman. Build a Postman collection with contract assertions (status codes, response shapes, field naming consistency), export the collection as JSON, and add a Newman step to your GitHub Actions or GitLab CI workflow. The Newman CLI runs the collection headlessly and fails the pipeline on assertion violations. For Java backends, REST Assured tests integrate directly with JUnit and run as part of your existing test suite. Both approaches give you a CI gate that catches contract violations before they merge to main.