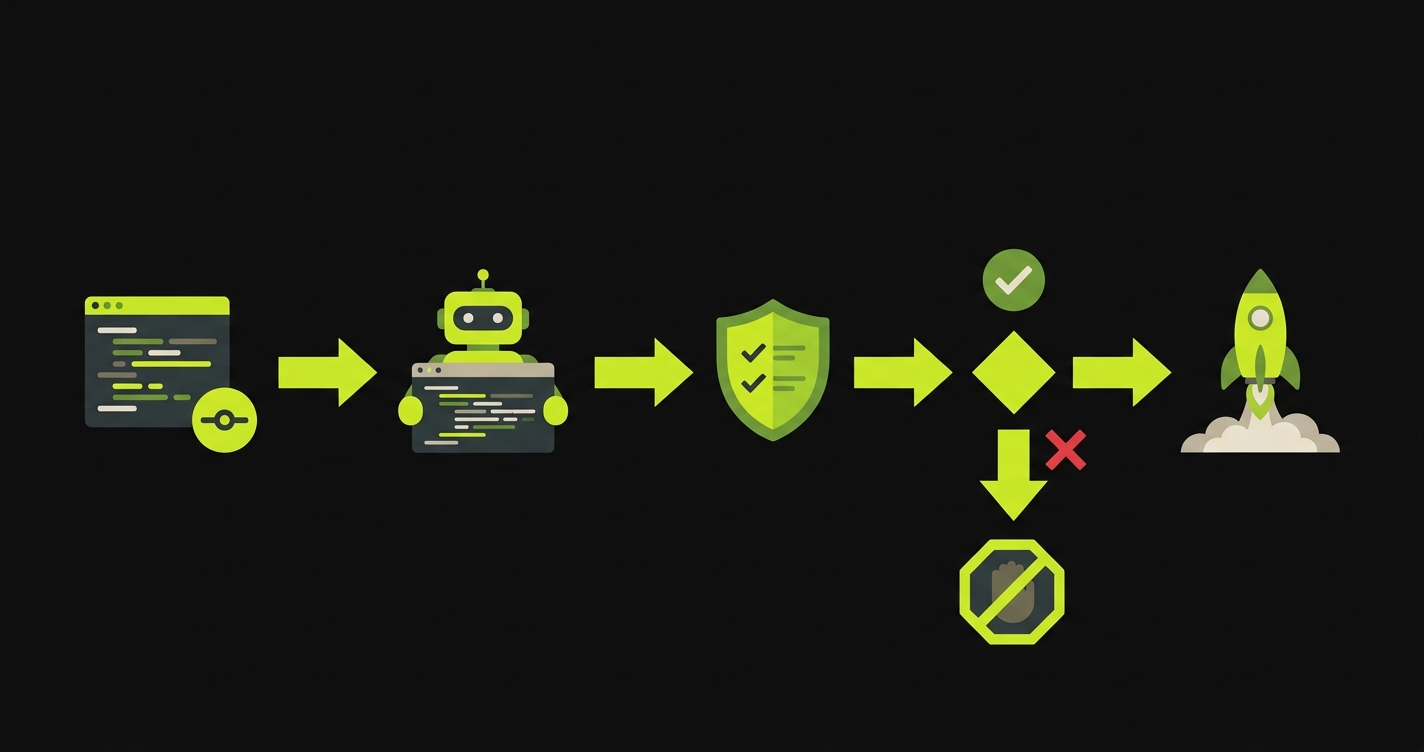

TLDR: 500 Global LatAm Demo Day is attended by both US and LatAm investors simultaneously. Your LatAm pilot customers are the primary evidence that your traction is real and the market is real. US investors who don't know the market need that proof to be clean and unambiguous. A bug-filled pilot converts your best evidence into a question mark. This post covers how to protect the critical paths in your active pilots so the traction you built stays intact when the room is watching.

The dual-audience problem at 500 Global LatAm Demo Day

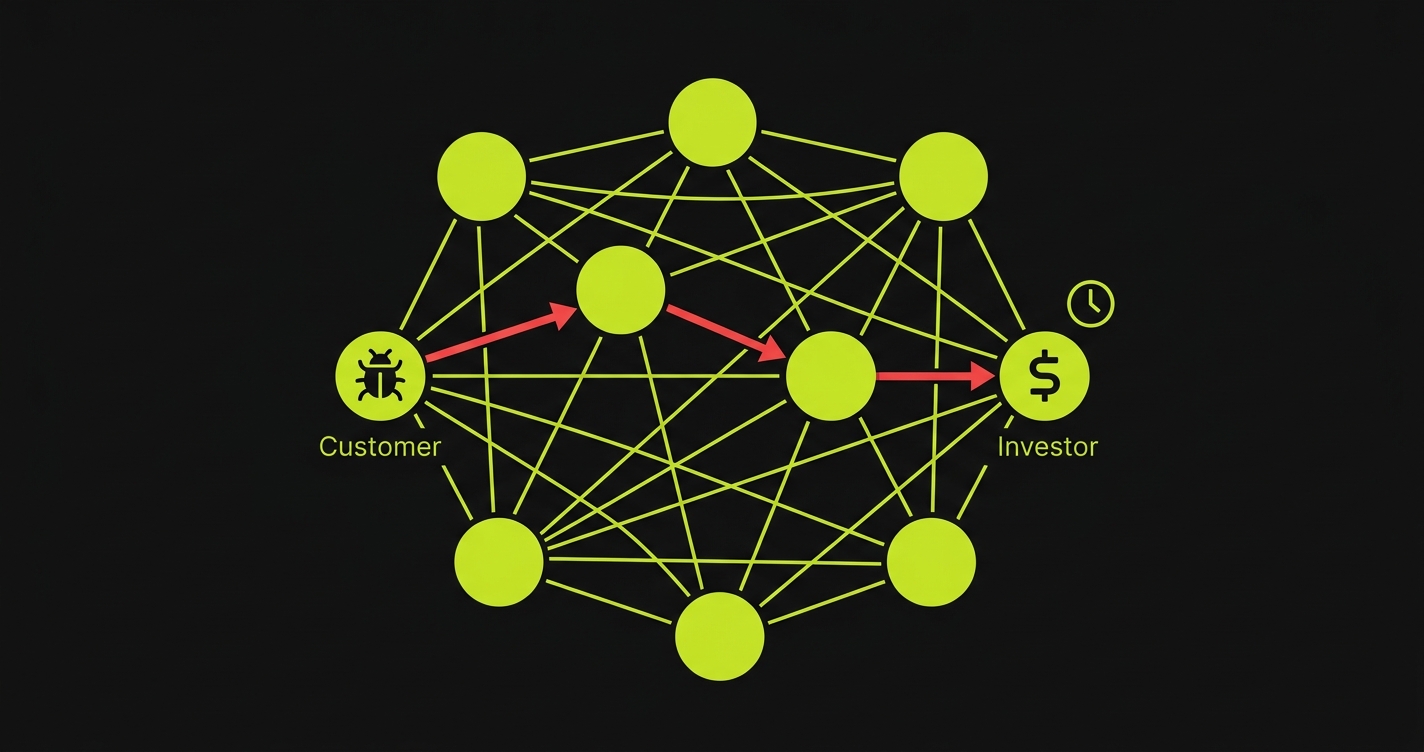

500 Global's LatAm program is unusual in the accelerator landscape because its Demo Day is genuinely cross-border. You are presenting to a room that includes LatAm investors who know the market well, and US investors who are betting on LatAm as a growth opportunity but have limited direct experience with LatAm enterprise buyers.

These two audiences need different things from your traction story.

LatAm investors look at your pilot customers and immediately understand the context. They know what it means to have a pilot with a Brazilian retailer, a Colombian bank, or an Argentine logistics company. They can evaluate the quality of the reference, the likely path to expansion, and the competitive dynamics in that vertical. They are assessing whether you have the right customers and whether the deal mechanics make sense.

US investors look at your pilot customers and ask a simpler, more fundamental question: is this real? Is this a product that a Latin American company actually uses and pays for, or is this a friendly trial with someone who knows the founder? They are not being cynical. They are being appropriately rigorous about a market they are less familiar with. They need proof that product-market fit exists in the region, and they need that proof to be clean enough to explain to their LPs.

This dual-audience dynamic means your traction story has to satisfy two different standards simultaneously. For the LatAm investors: the right customers with the right deal mechanics. For the US investors: unambiguous evidence that the product works and is being actively used.

A bug-filled pilot undermines both standards. For LatAm investors, it signals that you haven't fully figured out the enterprise relationship. For US investors, it raises the question of whether the product is really ready.

The metrics story that 500 LatAm wants

500 Global is metrics-focused. Month-over-month revenue growth. User growth. Engagement. Retention. These are the numbers they want on your Demo Day slide.

The problem is that enterprise pilot metrics can look good in aggregate while hiding a serious underlying risk. You can show 40% MoM revenue growth and three active pilots on your slide. But if one of those pilots had a stability incident 2 weeks ago and engagement dropped off, you know that the slide is technically accurate but strategically fragile.

An investor who does diligence on your traction will call your pilot customers. They will ask about usage patterns. If the customer says "we were using it heavily, then had a technical issue, and we're just getting back into it," the investor hears "retention risk." The 40% MoM growth slide becomes a question about whether you can sustain it.

The pilot customers that make your metrics look clean are the ones that have been using the product consistently, without interruption, for the entire pilot period. That consistent usage story comes from a product that didn't break on them. It is directly enabled by stable critical path coverage.

Why LatAm enterprise traction translates badly when explained through bugs

US investors who are evaluating LatAm opportunities face a specific challenge: they cannot easily fact-check the context. They know what a Fortune 500 reference looks like. They know what AWS or Salesforce as a customer means. They know what a signed contract with a Series B SaaS company looks like.

They are less certain about what a signed pilot with a Brazilian retail chain means. Is this a meaningful enterprise? Is the contract size significant in local terms? Is the usage pattern typical for this industry? They are learning as they evaluate.

In this context, any ambiguity in your traction story creates more uncertainty than it would in a US market context. When a US investor asks your pilot customer "how's the product working for you" and the customer says "it's been good, had a few hiccups early on," the US investor doesn't have the context to know whether "a few hiccups" is normal for LatAm enterprise deployments or a signal of product immaturity. Without context, they default to skepticism.

A clean pilot story, where the customer says "it's been solid and we're planning to expand," is a much cleaner signal. The US investor does not have to apply judgment about LatAm norms. The signal is unambiguous. Product works. Customer is expanding.

The 500 LatAm batch timeline and where bugs surface

500 Global LatAm batches run intensive programs designed to drive metrics growth. The program involves weekly check-ins on growth metrics, and the implicit expectation is that you are pushing hard on every lever: acquisition, activation, retention, and revenue.

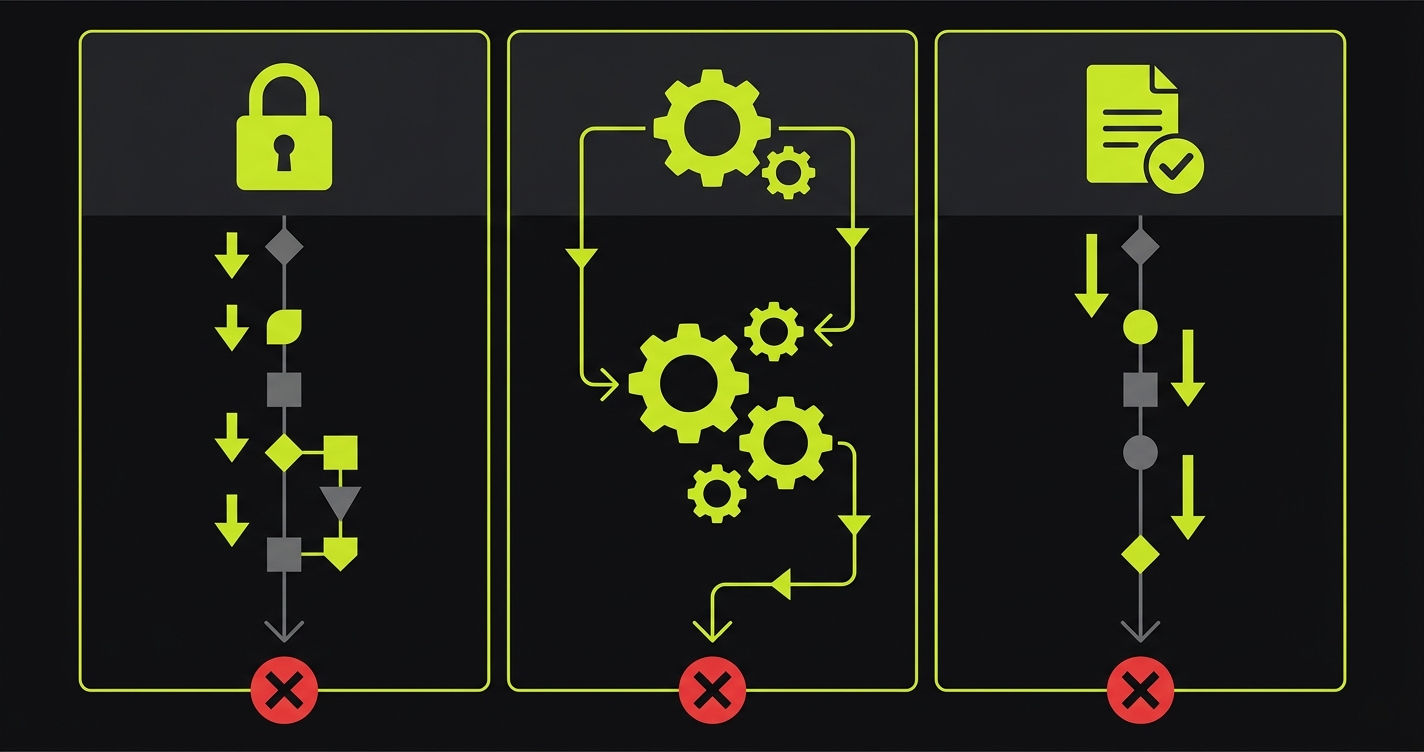

That intensity creates a specific bug pattern. Founders are shipping fast to hit metric targets. New features get pushed to improve activation. Onboarding flows get redesigned to hit weekly retention targets. Pricing pages get updated to run conversion experiments. Each of these changes is legitimate product growth work. Each of them is also an opportunity to break something a pilot customer depends on.

The weeks when your metrics are growing fastest are also the weeks when your deployment velocity is highest. High deployment velocity without critical path coverage is when bugs happen. And bugs in weeks 4-6 of a batch, when pilot customers are supposed to be in their active usage phase, are the most damaging to your Demo Day story.

The compounding effect: 500 Global coaches you to show engagement metrics on your Demo Day slide. Engagement means users logging in, completing actions, and returning. If a bug causes a 3-day engagement dip in week 5, it shows up in your weekly active user chart. The chart has a visible dip. Investors notice dips. They ask what happened.
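One way to catch such a dip before an investor does is to scan daily activity for days that fall well below the recent average. The sketch below is illustrative only: the `DailyActivity` shape, the 7-day trailing window, and the 30% drop threshold are all assumptions, not anything 500 Global or Autonoma prescribes.

```typescript
// Hypothetical data shape: one row per day for a single pilot customer.
interface DailyActivity {
  date: string;        // any label for the day, e.g. an ISO date
  activeUsers: number; // distinct users who completed an action that day
}

// Returns the dates whose activity fell more than `dropThreshold` below
// the average of the preceding `windowDays` days.
function findEngagementDips(
  series: DailyActivity[],
  windowDays = 7,       // assumption: compare against the previous week
  dropThreshold = 0.3,  // assumption: a 30% drop counts as a dip
): string[] {
  const dips: string[] = [];
  series.forEach((day, i) => {
    if (i < windowDays) return; // not enough history yet
    const trailing = series.slice(i - windowDays, i);
    const baseline =
      trailing.reduce((sum, d) => sum + d.activeUsers, 0) / windowDays;
    if (baseline > 0 && day.activeUsers < baseline * (1 - dropThreshold)) {
      dips.push(day.date);
    }
  });
  return dips;
}
```

Running this per pilot rather than on the aggregate chart matters, because one customer's dip can be masked by growth in the other two.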

"We had a bug" is a recoverable answer if it's followed by "and we've implemented test coverage to prevent it from happening again." It is a much weaker answer if it's just "we had a bug."

The geographic coverage problem for 500 LatAm companies

500 Global LatAm companies are often operating across multiple countries simultaneously. You might have one pilot in Mexico, one in Brazil, and one in Colombia. Each of these customers is using the same product but in different environments: different languages (Spanish vs Portuguese), different payment methods, different tax systems, different device and browser distributions.

A deploy that works perfectly for your Mexican customer may break something for your Brazilian customer because of a Portuguese locale bug. A change that your Colombian customer's flow handles fine may create an edge case in your Mexican customer's flow because of a different date format.

This is the multi-country testing problem. Most early-stage companies don't have test coverage for their non-primary market. If you built the product in Mexico and then expanded to Brazil, your test suite (if you have one) is probably full of Spanish field labels, Mexican peso formats, and Mexican tax identifiers. Brazil is not covered.

Autonoma addresses this by reading your actual codebase, including the locale-specific and market-specific branches. It generates tests that reflect the actual flows for each configuration. You run separate test configurations for each market. Every deploy passes all three before it ships.
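The "all three before it ships" rule can be expressed as a small gate over per-market results. This is a minimal sketch, not Autonoma's API: the `SuiteResult` shape and the `blockingMarkets` helper are hypothetical, and the key design choice is that a market with no results at all blocks the deploy just like a failing one.

```typescript
type Market = 'mx' | 'br' | 'co';

// Hypothetical summary of one market's test run.
interface SuiteResult {
  market: Market;
  passed: number;
  failed: number;
}

// Returns the markets that should block a deploy: any market with a
// failing test, with zero passing tests, or with no results reported.
function blockingMarkets(required: Market[], results: SuiteResult[]): Market[] {
  return required.filter((market) => {
    const result = results.find((r) => r.market === market);
    return result === undefined || result.failed > 0 || result.passed === 0;
  });
}
```

A deploy script would ship only when `blockingMarkets(...)` returns an empty list. Treating a missing market as blocking is what prevents the "Brazil is not covered" case from passing silently.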

The critical path framework for multi-country 500 LatAm pilots

The three-path framework (authentication, core action, output) still applies, but you apply it per market:

Authentication per market. If you have SSO in Mexico but password-based auth in Brazil, both auth flows need coverage. If you added RUT validation for Chile or CPF validation for Brazil, those specific validation flows need tests.

Core action in each market's configuration. If your product's primary action involves local payment processing, local tax calculation, or local document formats, each market's version of that action needs its own test. A deploy that breaks the Brazilian nota fiscal generation but not the Mexican factura generation will pass a Mexico-only test suite and ship a bug to your Brazilian pilot customer.

Output in each market's language and format. Reports, emails, and exports in different languages and number formats. Decimal commas (Brazil, Colombia) vs decimal points (Mexico, US). Brazilian real (R$) vs Mexican peso (MX$). Colombian date format vs Argentine date format. These differences cause bugs that only surface when a specific market's customer generates output.
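Separator conventions are easy to get wrong from memory, so it can help to ask the runtime rather than assume. The sketch below uses the standard `Intl.NumberFormat` API; the helper names `decimalSeparator` and `formatAmount` are made up for illustration, and results depend on the locale data bundled with your JavaScript runtime.

```typescript
// Returns the decimal separator a locale uses, according to the runtime's
// locale data (an empty string if the formatter emits no decimal part).
function decimalSeparator(locale: string): string {
  const parts = new Intl.NumberFormat(locale).formatToParts(1.5);
  const decimal = parts.find((part) => part.type === 'decimal');
  return decimal ? decimal.value : '';
}

// Formats an amount as currency for a given market's locale.
function formatAmount(amount: number, locale: string, currency: string): string {
  return new Intl.NumberFormat(locale, { style: 'currency', currency }).format(amount);
}

// Example: print how each market in the matrix renders the same amount.
const exampleMarkets: Array<[string, string]> = [
  ['es-MX', 'MXN'],
  ['pt-BR', 'BRL'],
  ['es-CO', 'COP'],
];
for (const [locale, currency] of exampleMarkets) {
  console.log(locale, decimalSeparator(locale), formatAmount(1234.5, locale, currency));
}
```

With standard locale data, `es-MX` formats decimals with a period while `pt-BR` and `es-CO` use a comma, so "Spanish-speaking market" is not a safe proxy for a number format.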

The coverage matrix for a 3-country 500 LatAm company looks like this:

| Path | Mexico | Brazil | Colombia |
|---|---|---|---|
| Auth | Email/password + 2FA | CPF-based login | CC (cedula) validation |
| Core action | Factura generation | NF-e emission | Electronic invoice |
| Output | Spanish PDF, MXN format | Portuguese PDF, BRL format | Spanish PDF, COP format |

Each cell in that matrix is a test that needs to exist and run on every deploy.
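A quick way to keep the matrix honest is to enumerate its cells and list the ones with no test behind them. This is a sketch under assumptions: the `RegisteredTest` shape is hypothetical, and in practice you would populate it from your test titles or tags.

```typescript
const paths = ['auth', 'core-action', 'output'] as const;
const marketCodes = ['mx', 'br', 'co'] as const;

type PathName = (typeof paths)[number];
type MarketCode = (typeof marketCodes)[number];

// Hypothetical record of one existing test and the matrix cell it covers.
interface RegisteredTest {
  path: PathName;
  market: MarketCode;
  title: string;
}

// Lists every matrix cell ("path/market") that has no registered test.
function missingCells(tests: RegisteredTest[]): string[] {
  const covered = new Set(tests.map((t) => `${t.path}/${t.market}`));
  const missing: string[] = [];
  for (const path of paths) {
    for (const market of marketCodes) {
      const cell = `${path}/${market}`;
      if (!covered.has(cell)) missing.push(cell);
    }
  }
  return missing;
}
```

Failing CI when `missingCells(...)` is non-empty turns "each cell needs a test" from a convention into a check.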

Building the coverage with Autonoma

Here's what the test structure looks like for a multi-country deployment:

```typescript
// 500 LatAm multi-country critical path coverage
// Parametrized to run against Mexico, Brazil, and Colombia configurations
import { test, expect, type Page } from '@playwright/test';

interface MarketConfig {
  locale: string;
  baseUrl: string;
  testUser: string;
  testPassword: string;
  idField: string;
  idValue: string;
  currencySymbol: string;
  invoiceType: string;
}

// ID values are placeholders; use the identifiers of your own test accounts.
const markets: Record<string, MarketConfig> = {
  mx: {
    locale: 'es-MX',
    baseUrl: process.env.MX_BASE_URL!,
    testUser: process.env.MX_TEST_USER!,
    testPassword: process.env.MX_TEST_PASSWORD!,
    idField: 'rfc-input',
    idValue: 'TEST010101ABC',
    currencySymbol: 'MX$',
    invoiceType: 'CFDI',
  },
  br: {
    locale: 'pt-BR',
    baseUrl: process.env.BR_BASE_URL!,
    testUser: process.env.BR_TEST_USER!,
    testPassword: process.env.BR_TEST_PASSWORD!,
    idField: 'cpf-input',
    idValue: '123.456.789-00',
    currencySymbol: 'R$',
    invoiceType: 'NF-e',
  },
  co: {
    locale: 'es-CO',
    baseUrl: process.env.CO_BASE_URL!,
    testUser: process.env.CO_TEST_USER!,
    testPassword: process.env.CO_TEST_PASSWORD!,
    idField: 'cc-input',
    idValue: '1234567890',
    currencySymbol: 'COP',
    invoiceType: 'Factura Electrónica',
  },
};

// Each Playwright test starts in a fresh browser context, so every test
// logs in through the market's own auth flow before doing anything else.
async function login(page: Page, config: MarketConfig): Promise<void> {
  await page.goto(`${config.baseUrl}/login`);
  await page.fill(`[data-testid="${config.idField}"]`, config.idValue);
  await page.fill('[data-testid="password"]', config.testPassword);
  await page.click('[data-testid="login-submit"]');
  await expect(page).toHaveURL(`${config.baseUrl}/dashboard`);
}

for (const [market, config] of Object.entries(markets)) {
  test.describe(`${market.toUpperCase()} pilot critical paths`, () => {
    // Run the browser in this market's locale.
    test.use({ locale: config.locale });

    test('authentication with local ID format', async ({ page }) => {
      await login(page, config);
    });

    test('core action: create local invoice type', async ({ page }) => {
      await login(page, config);
      await page.click('[data-testid="nueva-factura"]');
      await expect(page.locator('[data-testid="tipo-documento"]')).toContainText(config.invoiceType);
      await page.fill('[data-testid="monto"]', '10000');
      await page.click('[data-testid="emitir"]');
      await expect(page.locator('[data-testid="emision-exitosa"]')).toBeVisible({ timeout: 20000 });
    });

    test('output: verify currency format in export', async ({ page }) => {
      await login(page, config);
      await page.goto(`${config.baseUrl}/reportes`);
      const reportTotal = page.locator('[data-testid="total-mes"]');
      await expect(reportTotal).toContainText(config.currencySymbol);
      // Guard against unformatted values leaking into the rendered total.
      await expect(reportTotal).not.toContainText('undefined');
      await expect(reportTotal).not.toContainText('NaN');
    });
  });
}
```

Autonoma generates this parametrized structure from your codebase. When it reads your repo and finds locale-specific code branches, it produces tests that run against each locale configuration. You end up with a test suite that covers Mexico, Brazil, and Colombia (or whatever markets you're in) with a single CI run.

The 48-72 hour stability window per market

For a multi-country 500 LatAm company, the 48-72 hour window before a customer's first solo session applies to each market independently. You might have onboarded Mexico in week 2, Brazil in week 4, and Colombia in week 6. Each of those onboarding events has its own critical window.

The calendar to maintain: for each active pilot, write down the date of the last guided session and add 48 hours. The stretch between those two timestamps, when the customer is about to use the product unassisted, is your next highest-risk window. During that window, no deploys that touch that market's critical paths unless all tests pass.

If you are managing three pilots in three markets, you will sometimes have overlapping windows. When that happens, the protocol extends: no deploys that touch any market's critical path until the overlapping window is clear. The cost of holding a deploy is lower than the cost of a bug incident in a market you haven't yet fully onboarded.
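The calendar arithmetic can be sketched in a few lines. This assumes the window starts at the last guided session and runs for 48 hours; the `Pilot` and `FreezeWindow` shapes and both function names are hypothetical, and you would widen `windowHours` to 72 if you want the conservative end of the range.

```typescript
interface Pilot {
  market: string;
  lastGuidedSession: Date;
}

interface FreezeWindow {
  start: Date;
  end: Date;
  markets: string[];
}

const HOUR_MS = 60 * 60 * 1000;

// Builds one freeze window per pilot, then merges windows that overlap so
// the freeze lasts until the latest overlapping window has cleared.
function freezeWindows(pilots: Pilot[], windowHours = 48): FreezeWindow[] {
  const sorted: FreezeWindow[] = pilots
    .map((p) => ({
      start: p.lastGuidedSession,
      end: new Date(p.lastGuidedSession.getTime() + windowHours * HOUR_MS),
      markets: [p.market],
    }))
    .sort((a, b) => a.start.getTime() - b.start.getTime());

  const merged: FreezeWindow[] = [];
  for (const current of sorted) {
    const last = merged[merged.length - 1];
    if (last && current.start.getTime() <= last.end.getTime()) {
      if (current.end.getTime() > last.end.getTime()) last.end = current.end;
      last.markets.push(...current.markets);
    } else {
      merged.push(current);
    }
  }
  return merged;
}

// True when a deploy time falls inside any freeze window.
function isFrozen(deployAt: Date, windows: FreezeWindow[]): boolean {
  const t = deployAt.getTime();
  return windows.some((w) => t >= w.start.getTime() && t <= w.end.getTime());
}
```

A deploy script could call `isFrozen(new Date(), windows)` and, when it returns true, require a fully green critical path run before proceeding.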

How the traction story holds together at Demo Day

The 500 Global LatAm Demo Day pitch structure rewards metrics density. You are expected to show MoM growth rates, active user counts, retention curves, and revenue figures. These metrics are your primary evidence for investors.

But metrics are snapshots. They can spike and fall. What investors are actually trying to assess is whether the metrics are durable. Is this growth a real signal or is it a temporary spike caused by launch promotion? Is the retention real or will it fall off?

Your pilot customers are the evidence of durability. When an investor asks "is this growth sustainable," the best answer is not another chart. It is "we have three active enterprise pilots where usage has been growing month over month, and I can put you in touch with the decision-makers." That answer requires pilots that have been consistently active, with growing usage, and no major interruptions.

A pilot that was interrupted by a bug incident in week 4 and is now recovering has inconsistent usage data. The MoM chart has a dip. The customer's enthusiasm is more guarded. The investor diligence call surfaces the incident. The traction story becomes less clean with every piece of context.

Stable critical paths are what keep the usage data clean. No dip in week 4. No recovery period. Just consistent, growing usage from a customer who has had a reliable product since day one.

The investor due diligence call you want your customer to receive

When a US investor who attended 500 Global LatAm Demo Day calls your pilot customer for diligence, the ideal call sounds like this:

Investor: "How long have you been using the product?" Customer: "About 8 weeks. We went live in week 2 of the pilot."

Investor: "Has it been reliable?" Customer: "Yes, we've had no technical issues. The team is responsive and they've added features we asked for."

Investor: "Are you planning to continue using it?" Customer: "Yes, we're already talking about expanding to two other business units."

That call closes your round. The US investor who had no context for LatAm enterprise now has unambiguous evidence: paying customer, reliable product, expansion planned.

The alternative call:

Investor: "Has it been reliable?" Customer: "Mostly yes. We had some issues in the middle of the pilot, but they fixed them. The product has been better since then."

That call introduces doubt. The investor cannot quantify "some issues in the middle." They don't know if it was one small bug or a week-long outage. They don't know if "better since then" means fully stable or just less bad. They ask more questions. The diligence process extends. The valuation comes in lower.

The difference between those two calls is the stability of your critical paths for 8 weeks. That is a solvable problem.

| Investor diligence question | Clean pilot answer | Qualified pilot answer |
|---|---|---|
| "Has the product been reliable?" | "No issues, it's been solid." | "Mostly, had a few hiccups early." |
| "Are you using it more or less than expected?" | "More. We're expanding." | "About the same. Still evaluating." |
| "Would you recommend it?" | "Yes, we already have." | "Yes, with the caveat that they're early-stage." |
| "Is the team responsive?" | "Yes, and we haven't needed to contact them much." | "Yes, they fixed things quickly when issues came up." |

FAQ

Short-term contracts mean the renewal decision happens more frequently. A stability incident in month 1 creates renewal hesitation in month 2. The customer has not had enough positive experience to offset the negative memory of the bug. With long-term contracts, a single incident is absorbed into a larger relationship. With short-term contracts, it can be the primary thing the customer remembers when they're deciding whether to renew. Stability is directly correlated with renewal rate when contracts are short.

Connect both repos to Autonoma separately. Generate critical path tests for each. Run both test suites in CI when you deploy to either environment. If the two codebases share significant logic (a monorepo with market-specific configuration, or a core repo with market-specific frontends), the test coverage can be shared with parametrization. If they are genuinely separate codebases, treat them as separate products with separate critical path coverage.

The growth work and the pilot protection are not in conflict as long as you know which code paths touch your pilot customer flows. Growth experiments (new onboarding flows, pricing changes, new activation features) can ship fast as long as they don't touch the critical paths. The critical path tests are the gate. If a growth experiment deploys code that touches a critical path, the test suite flags it in CI before it ships. You either fix it or route it around the critical path.
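Knowing which code paths touch pilot flows can start as a simple lookup from changed files to critical paths. The sketch below is a complementary heuristic, not how Autonoma works: the directory prefixes are invented for illustration and would need to match your own repo layout.

```typescript
// Hypothetical mapping from each critical path to the source directories
// it depends on. Replace these prefixes with your repo's real layout.
const criticalPathPrefixes: Record<string, string[]> = {
  auth: ['src/auth/', 'src/validation/'],
  'core-action': ['src/invoicing/'],
  output: ['src/reports/', 'src/i18n/'],
};

// Returns the critical paths that a set of changed files may affect.
function touchedCriticalPaths(changedFiles: string[]): string[] {
  return Object.entries(criticalPathPrefixes)
    .filter(([, prefixes]) =>
      prefixes.some((prefix) => changedFiles.some((file) => file.startsWith(prefix))),
    )
    .map(([path]) => path);
}
```

A growth experiment whose diff returns an empty list can ship on the fast lane, while anything else waits for the critical path suite. The tests remain the real gate, since a prefix map cannot see indirect dependencies.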

Yes, and this is good practice regardless of investor background. Brief your pilot customers before they receive an investor call. Tell them: an investor may reach out, here's roughly what they'll ask, here's the context I've shared about our traction. This preparation serves two purposes: the customer gives better context on the call because they understand the investor's frame, and you demonstrate to your customer that your fundraise is going well, which reinforces their confidence in you as a vendor.

The test suite continues running in CI regardless of the batch timeline. The coverage you built for Demo Day is the same coverage that protects your post-Demo Day pilots. In fact, the coverage becomes more valuable after Demo Day, because post-Demo Day you're often in a fundraise sprint and your team's attention is split between investors and product. Having automated critical path coverage means pilots stay protected even when your engineering focus is elsewhere.

Autonoma was built specifically for teams without dedicated QA. The value proposition is that your engineers do not write the tests. Autonoma reads your codebase and generates them. Your engineers review the generated tests (which takes hours, not days) and connect them to CI. Once that is done, the tests run automatically on every push. The ongoing maintenance burden is low because Autonoma updates tests when your codebase changes. A 3-person team can run this without a QA hire.