TLDR: Wayra's value is direct access to Telefonica as a pilot customer. That access is also its highest-stakes risk. A bug in a Telefonica pilot is not evaluated by a startup-friendly product manager. It is evaluated by corporate IT, procurement, and security simultaneously. One failure can delay a multi-country rollout by 6 months. This post covers how to protect the three critical paths in your Telefonica pilot using automated testing, so you ship the features they need without breaking the things they already depend on.

The Wayra deal is unlike any other pilot you've run

Most accelerator programs give you customers. Wayra gives you Telefonica.

That distinction changes everything about how you manage a pilot. Telefonica is one of the largest telecoms in the world, operating in Spain and across 10+ countries in Latin America. When you get a Wayra cohort spot and a path to a Telefonica pilot, you are not getting access to a startup-friendly enterprise that will tolerate rough edges. You are getting access to a corporation with formal IT governance, procurement committees, security review processes, and legal requirements that apply across multiple jurisdictions simultaneously.

The upside: a successful Telefonica pilot can be the foundation for a multi-country rollout and a reference that opens doors across LatAm. The investor story that comes with a Telefonica contract is genuinely differentiated.

The downside: a bug in a Telefonica pilot is not a one-team problem. It is a multi-team incident. Corporate IT logs it. Procurement notes it in the vendor evaluation. Legal may ask about it in the contract review. Security may flag it as part of their compliance assessment. And all of this happens simultaneously, not sequentially. You do not get a chance to fix the bug before procurement hears about it. The information propagates instantly inside a large organization.

This is the setup. The tension: you are still a startup, still shipping fast, still making product changes to satisfy Telefonica's requirements. Every change is a risk. And the consequence of a bug in this specific customer's environment is a 6-month delay in a deal that could define your company's trajectory.

Therefore: your critical path coverage for a Telefonica pilot needs to be airtight before any deploy that touches the flows their users are on.

What a Telefonica pilot evaluation actually looks like

To understand why stability matters so much here, you need to understand how a large telecom evaluates a startup vendor.

When Telefonica's business unit agrees to a pilot, they do not run it in isolation. They loop in IT from the start, because IT has to approve any external software that touches their network or data. They loop in procurement, because the pilot is the precursor to a commercial contract and procurement wants visibility from day one. In some cases, they loop in legal and security before the pilot even starts.

These teams are not there to be helpful to your startup. They are there to protect Telefonica. Their job is to find reasons to say no. A bug is exactly the kind of evidence they use.

When IT sees a bug, they document it in their vendor evaluation report. When procurement sees that IT documented a bug, they add a stability requirement clause to the proposed contract. When legal sees a stability requirement clause, they add an SLA provision. By the time you have fixed the original bug and thought the issue was resolved, it has propagated into three different internal documents and shaped the terms of the deal you're trying to close.

This is not an exaggeration. This is how large LatAm corporates, and especially Telefonica's subsidiaries, work. The due diligence process is thorough, cross-functional, and documented. Every incident is on record.

The multi-country rollout math

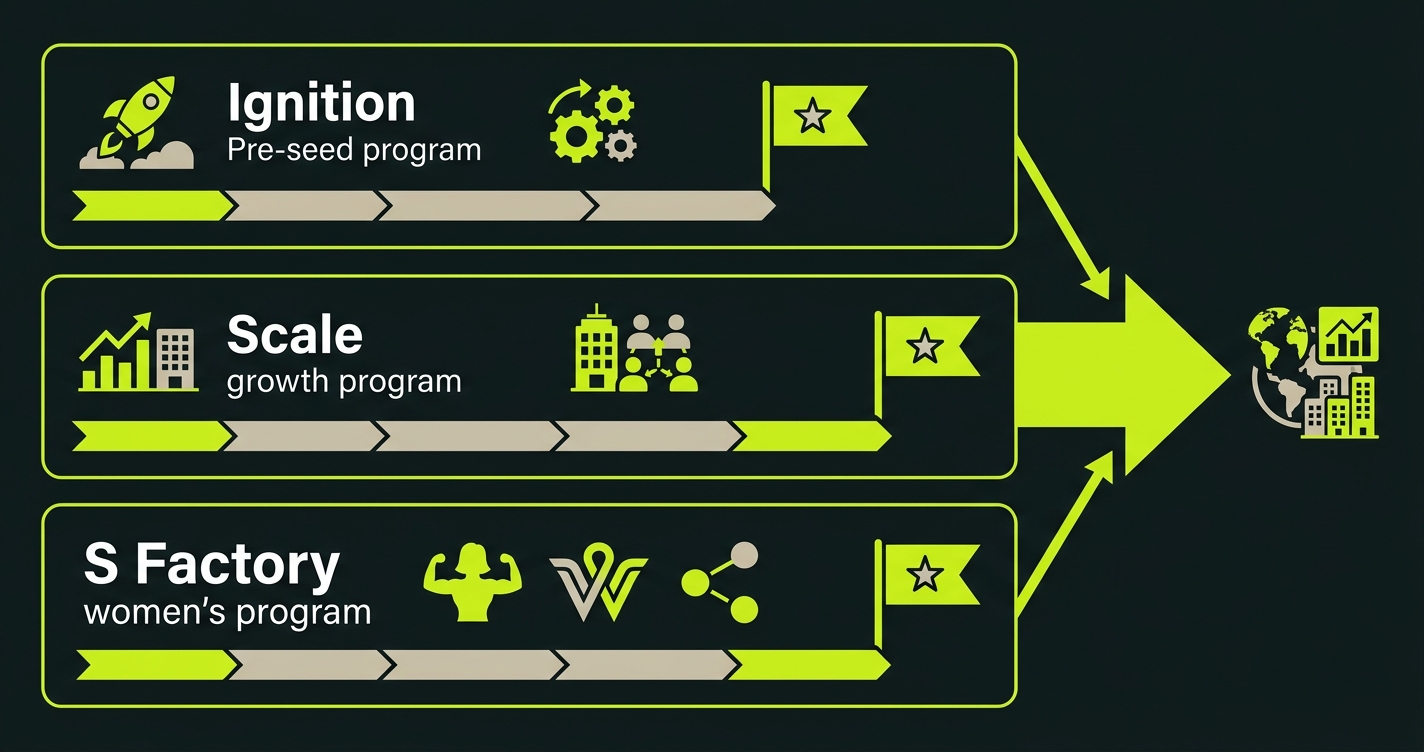

Wayra's pitch to portfolio companies is that a successful pilot in one Telefonica market can become a multi-country deal across their 10+ LatAm operations. This is real. Telefonica does standardize vendor relationships across subsidiaries.

But this also means that a failed pilot in one country can delay or block rollout across all of them. If your pilot in Telefonica Mexico has a stability incident that IT documents, that documentation can be shared with Telefonica Colombia, Brazil, and Argentina during their own vendor evaluation processes. You are not starting fresh with each subsidiary. You are carrying the record of every previous engagement.

The math is stark. A successful 8-week pilot in one country can unlock a 10-country rollout worth an order of magnitude more in contract value. A bug incident in week 5 of that same pilot can delay the whole thing by 6 months while additional review processes are added. The difference between those outcomes is whether your critical paths were stable for the duration of the pilot.

The three paths in a Telefonica pilot context

The critical paths for a Telefonica pilot are specific to how large telecoms operate internally. Let's be concrete.

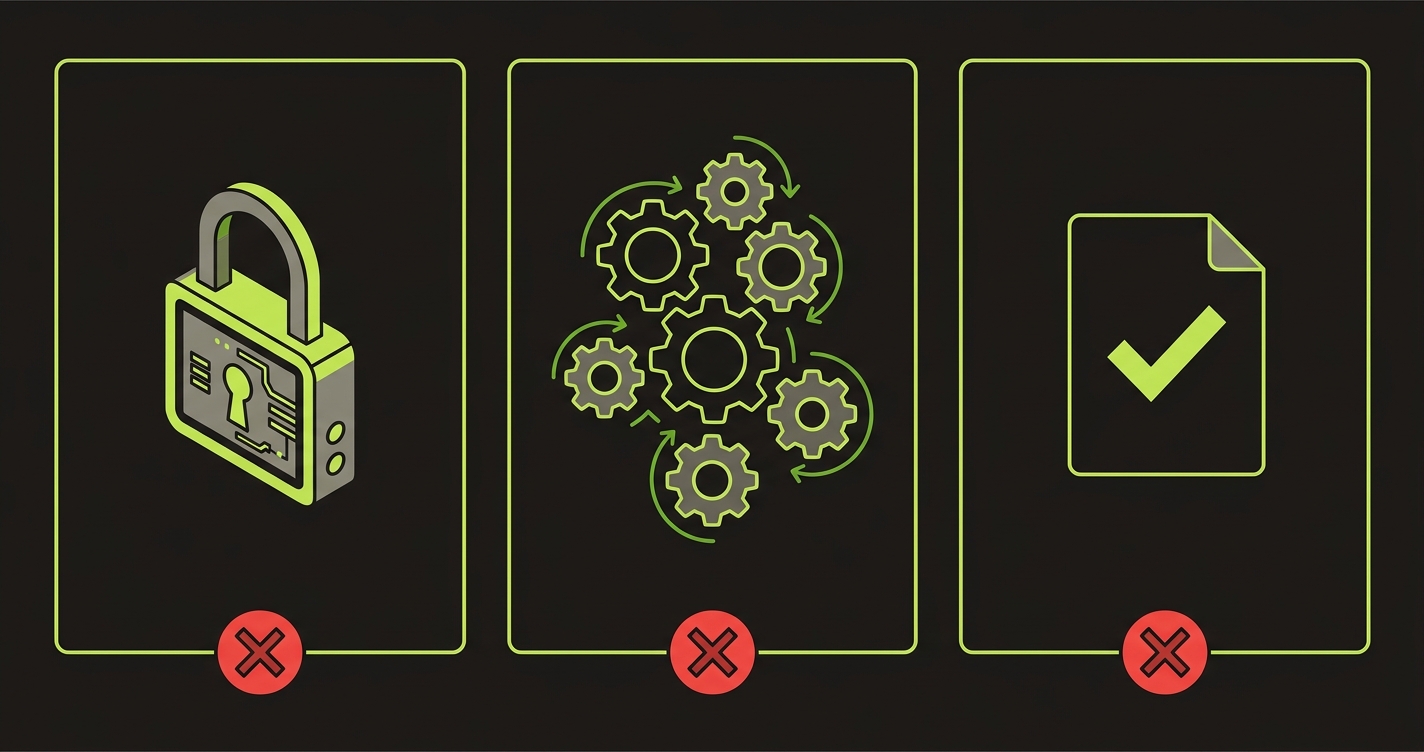

Authentication and identity management. Telefonica may require your product to integrate with their enterprise SSO. If you've done that integration, it is the single most fragile part of your entire deployment. SSO configurations are environment-specific and can break in ways that are hard to reproduce locally. If login breaks for a Telefonica employee, they file a ticket with their IT helpdesk. IT logs it. You do not find out from your champion. You find out from an IT report.

The core workflow their team has been trained on. Telefonica will have onboarded a specific team to use your product. That team was trained on a specific set of flows. If those flows change or break after onboarding, you create a support burden that Telefonica IT interprets as product immaturity. The flows that the trained team was shown are the ones you protect. Do not change them without explicit notice and re-training.

Any data output that travels outside your product. Telecoms are heavily regulated. Any report, export, or notification that your product generates may be treated as business-critical data. If that output is wrong, incomplete, or formatted incorrectly, it can create compliance concerns that are far out of proportion to the actual severity of the bug. Protect your output flows.

How to set up critical path coverage for a corporate pilot

The setup is the same as any startup critical path coverage, but the test scenarios need to reflect the corporate environment specifics.

```typescript
// Example: critical path tests for a Wayra/Telefonica pilot.
// Covers SSO login, core workflow, and data export.
import { test, expect } from '@playwright/test';

test.describe('Telefonica pilot: SSO auth and compliance export', () => {
  // Reuse a previously saved SSO session instead of logging in inside every test.
  test.use({ storageState: 'playwright/.auth/telefonica-sso-session.json' });

  test('SSO session is valid and dashboard loads', async ({ page }) => {
    await page.goto('/dashboard');
    // Should not redirect to login if SSO session is valid
    await expect(page).toHaveURL('/dashboard');
    await expect(page.locator('[data-testid="user-org"]')).toContainText('Telefonica');
  });

  test('core workflow: create and submit service ticket', async ({ page }) => {
    await page.goto('/dashboard');
    await page.click('[data-testid="nuevo-ticket"]');
    await page.fill('[data-testid="descripcion"]', 'Test ticket for automated validation');
    await page.selectOption('[data-testid="categoria"]', 'red-movil');
    await page.selectOption('[data-testid="prioridad"]', 'alta');
    await page.click('[data-testid="enviar-ticket"]');
    await expect(page.locator('[data-testid="ticket-id"]')).toBeVisible({ timeout: 10000 });
    await expect(page.locator('[data-testid="ticket-estado"]')).toContainText('Recibido');
  });

  test('data export: compliance report generation', async ({ page }) => {
    // Playwright's default test timeout is 30s, which is shorter than the
    // 60s wait below, so raise it for this test.
    test.setTimeout(90000);
    await page.goto('/reportes');
    await page.click('[data-testid="generar-informe-mensual"]');
    // Wait for report generation (telecom data volumes may take time)
    await expect(page.locator('[data-testid="informe-listo"]')).toBeVisible({ timeout: 60000 });
    // Start listening for the download before clicking so the event is not missed.
    const [download] = await Promise.all([
      page.waitForEvent('download'),
      page.click('[data-testid="descargar-informe"]'),
    ]);
    expect(download.suggestedFilename()).toMatch(/informe-compliance-\d{4}-\d{2}\.xlsx/);
  });
});
```

Note the SSO-specific setup in this example. Testing SSO flows requires maintaining a valid session state and understanding what happens when that session expires or becomes invalid. Autonoma handles this setup automatically when it reads your codebase and detects the SSO integration pattern. You review the generated tests to confirm they match your specific Telefonica SSO configuration, and then they run in CI.
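One way to make the session-expiry concern concrete is a small pre-flight check on the saved session file. The sketch below is an illustration under assumptions, not part of Autonoma or Playwright: it relies only on the fact that a Playwright `storageState` file stores cookies with an `expires` field (Unix seconds, `-1` for session cookies). The helper name `checkSsoSession`, the cookie name `sso_session`, and the 15-minute margin are hypothetical.

```typescript
// Relevant subset of a Playwright storageState file.
// `expires` is a Unix timestamp in seconds; -1 marks a session cookie.
interface StoredCookie {
  name: string;
  domain: string;
  expires: number;
}

interface StorageState {
  cookies: StoredCookie[];
}

type SessionStatus = 'valid' | 'expiring-soon' | 'expired' | 'missing';

// Hypothetical helper: classify the saved SSO session before the suite runs.
// `cookieName` is an assumption; use whichever cookie your SSO integration sets.
function checkSsoSession(
  state: StorageState,
  cookieName: string,
  nowSeconds: number,
  minRemainingSeconds: number = 15 * 60,
): SessionStatus {
  const cookie = state.cookies.find((c) => c.name === cookieName);
  if (!cookie) return 'missing';
  // Session cookies carry no expiry, so the file alone cannot show staleness.
  if (cookie.expires === -1) return 'valid';
  if (cookie.expires <= nowSeconds) return 'expired';
  if (cookie.expires - nowSeconds < minRemainingSeconds) return 'expiring-soon';
  return 'valid';
}
```

A CI step could load the JSON file, call `checkSsoSession(state, 'sso_session', Math.floor(Date.now() / 1000))`, and re-run the login setup whenever the result is anything other than `'valid'`. That keeps an expired test session from showing up as a false login failure.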

The corporate-specific details (the Spanish field names, the Telefonica-specific category options, the compliance report format) are picked up from your codebase because they're already in your components and API contracts. The generated tests reflect the actual product, not a generic template.

The 48-72 hour window in a Telefonica context

In a standard startup pilot, the 48-72 hour window before the customer's first solo session is the highest-risk moment. In a Telefonica pilot, there are multiple such windows.

The initial onboarding session with IT is one. If IT sees anything that looks like instability during their security review, it gets documented immediately.

The first session with the business unit team is another. These are the people your champion trained. If their first independent session breaks, your champion has to explain it to IT and to their own manager.

The first monthly review meeting is a third. Telefonica will likely have a formal review meeting to assess the pilot. The week before that meeting, you should be in a stability-only mode. No new features. No risky deploys. The test suite runs, and if it flags anything, you fix it on a hotfix branch with minimal scope.

Map these three windows in advance. Put them in your engineering calendar. Communicate the no-deploy policy to your team before you're in the window, not during it.
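The no-deploy policy is easier to enforce when the windows live in code rather than only in a calendar. The following is a minimal sketch, assuming you keep the window dates in your repo and call the check from your deploy script. The names `FreezeWindow`, `windowBefore`, `activeFreeze`, and `mayDeploy` are hypothetical, and the dates in the usage note are placeholders.

```typescript
const HOUR_MS = 60 * 60 * 1000;

interface FreezeWindow {
  label: string;
  start: Date; // inclusive
  end: Date;   // exclusive
}

// Build a window covering the lead-up to an event, e.g. 72 hours before the
// first solo session or 7 * 24 hours before the monthly review.
function windowBefore(label: string, event: Date, hoursBefore: number): FreezeWindow {
  return {
    label,
    start: new Date(event.getTime() - hoursBefore * HOUR_MS),
    end: event,
  };
}

// Returns the window that is active at `now`, or undefined if none is.
function activeFreeze(now: Date, windows: FreezeWindow[]): FreezeWindow | undefined {
  const t = now.getTime();
  return windows.find((w) => t >= w.start.getTime() && t < w.end.getTime());
}

// Minimal-scope hotfixes stay allowed inside a window; everything else is held.
function mayDeploy(now: Date, windows: FreezeWindow[], isHotfix: boolean): boolean {
  return isHotfix || activeFreeze(now, windows) === undefined;
}
```

For example, `windowBefore('monthly review', new Date('2025-03-31T15:00:00Z'), 7 * 24)` holds feature deploys for the week before that placeholder meeting date. The window ends when the event starts, so extend `end` if you also want to block deploys during the session itself.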

The Open Future presentation angle

Wayra's Open Future events are the moment you demonstrate to Telefonica leadership and external investors that you've delivered value during the cohort. The Telefonica pilot is the centerpiece of that story.

Telefonica leadership in the room will already have some awareness of your pilot's status. They will have heard from the business unit running it. If the pilot had stability issues, those issues may come up in the room. If the pilot was clean and the business unit is enthusiastic, you may get an on-stage endorsement that is worth more than any investor testimonial.

The way to get the clean pilot story is to protect the critical paths throughout the cohort, not just in the final 2 weeks. Stability is a cumulative property. A pilot that had incidents in weeks 3 and 4 but was clean in weeks 5 and 6 is still remembered as unstable. A pilot that was clean from day one is remembered as solid.

The investor angle: US and EU investors at Open Future events who are less familiar with LatAm enterprise sales will take the Telefonica reference at face value. A "Telefonica pilot" that went well is a strong signal. A "Telefonica pilot that went well after some early issues" creates questions about whether you're ready for enterprise scale. You want the first version of the story.

| Pilot outcome | Open Future presentation impact | Investor impression |
|---|---|---|
| Clean, expanding | Telefonica business unit in the room, enthusiastic | "Enterprise-ready, strong LatAm reference" |
| Mixed, recovering | Pilot still active but no expansion yet | "Promising but needs more validation" |
| Paused due to bugs | Hard to include in Demo Day story | "Product maturity questions" |
| Fully churned | Cannot use as reference | "No enterprise traction" |

Why your engineer wants to fix bugs after they ship

One dynamic specific to startup teams: engineers almost always want to fix bugs after they're found, not before they're deployed. The instinct is "ship it, we'll fix anything that comes up." This instinct is correct in most contexts. In a Telefonica pilot, it is wrong.

The problem is the multi-team escalation dynamic described above. In a normal startup context, fixing a bug quickly is a demonstration of responsiveness. The customer appreciates the rapid fix. But in a Telefonica context, the bug is already in IT's documentation before you know it exists. The fix is noted, but the incident is not removed from the record. You cannot retroactively make the bug not have happened.

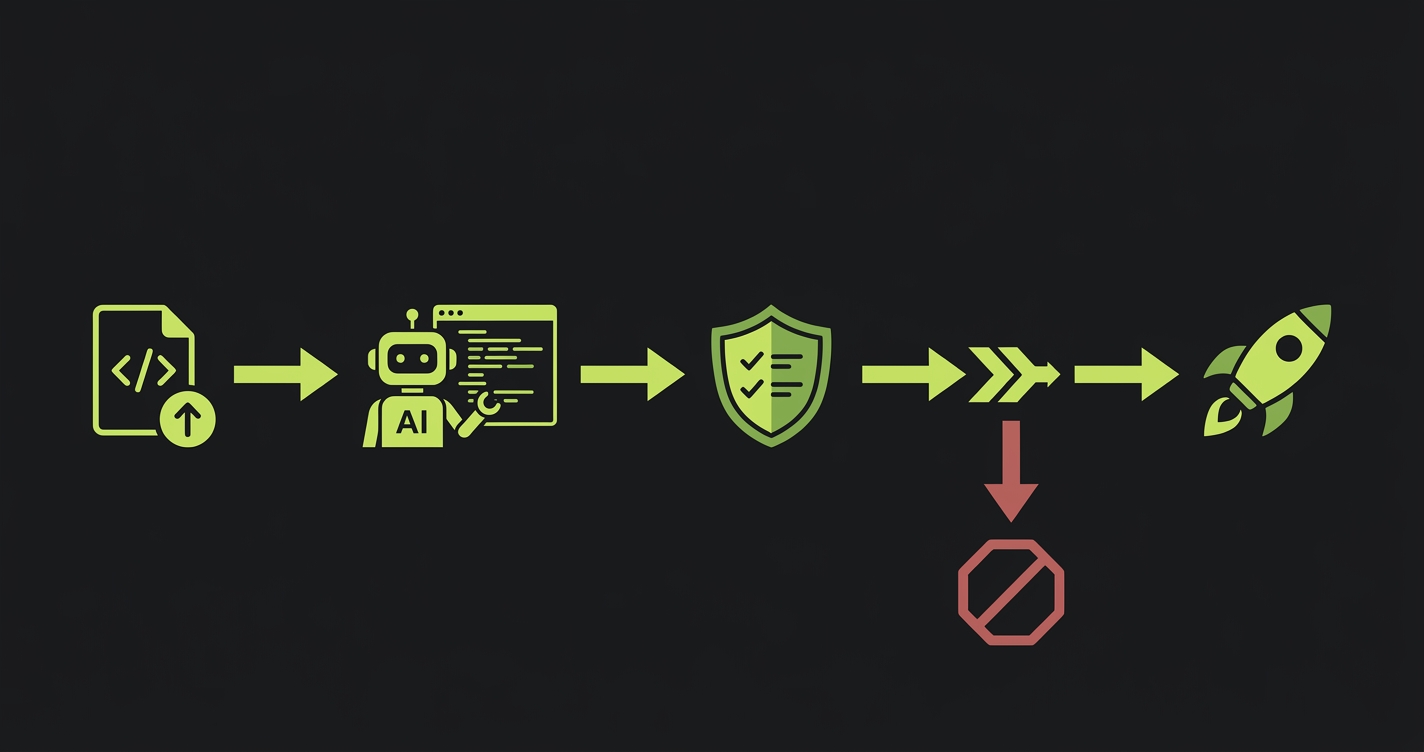

This is why the CI gate matters. If a critical path test fails in CI, the code doesn't deploy. The bug never reaches production. The customer never sees it. There is nothing to document.
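The gate logic itself is small. Below is a sketch of one possible decision function, assuming your CI step can hand it a list of test results tagged by critical path. The type names, the path labels, and the `evaluateGate` function are hypothetical and do not describe how Autonoma or any particular CI system implements the gate.

```typescript
type PathName = 'auth' | 'core-workflow' | 'data-export';

interface TestResult {
  title: string;
  path: PathName | 'other';
  status: 'passed' | 'failed' | 'skipped';
}

interface GateDecision {
  deploy: boolean;
  reasons: string[];
}

const CRITICAL_PATHS: PathName[] = ['auth', 'core-workflow', 'data-export'];

// Block the deploy unless every critical path has at least one test
// and all of its tests passed.
function evaluateGate(results: TestResult[]): GateDecision {
  const reasons: string[] = [];
  for (const path of CRITICAL_PATHS) {
    const forPath = results.filter((r) => r.path === path);
    if (forPath.length === 0) {
      // Missing coverage is treated the same as a failure.
      reasons.push(`${path}: no tests ran`);
      continue;
    }
    for (const r of forPath) {
      if (r.status !== 'passed') {
        reasons.push(`${path}: "${r.title}" ${r.status}`);
      }
    }
  }
  return { deploy: reasons.length === 0, reasons };
}
```

Two choices here are deliberate and debatable. A skipped or missing critical path test blocks the deploy, because a path nobody checked is not known to be safe. Failures tagged `'other'` do not block, which keeps the gate focused on the flows the pilot users actually depend on.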

The conversation with your engineering team is: "In this specific customer context, the cost of a deploy that breaks a critical path is 6 months of deal delay. The cost of holding a deploy for 2 hours while we fix the test failure is nothing. We are holding deploys that fail the pilot path tests."

That's a product prioritization decision. The test suite gives you the visibility to make it consistently.

FAQ

If the product configuration differs between subsidiaries (different SSO setup, different data formats, different language settings), you need tests that reflect each environment's specific configuration. The core test logic is the same; the environment variables and configuration differ. Autonoma generates tests from your codebase, and if you have environment-specific configurations, those can be reflected in the test setup for each target environment.

Automated E2E tests do not interfere with Telefonica's security scans. They run against your staging or production environment as a normal user would. If Telefonica IT is running penetration testing or vulnerability scans, those run against your infrastructure separately from your CI test suite. The two processes are independent.

A large refactor in the middle of the pilot is the high-risk scenario, because it is the most likely thing to break your existing critical paths. The protocol: before starting the refactor, run your full critical path test suite against current production and confirm everything passes. Define the refactor scope clearly so you know exactly what existing paths it touches. Run the test suite against every intermediate state during the refactor. Ship the refactor in stages if possible, with a full test run between each stage.

The initial test generation is fast, typically a few hours of setup and review. The longer work is mapping the specific Telefonica pilot paths before you run the generation. That mapping conversation between the founder, CTO, and the account manager who knows the pilot scope usually takes 30-60 minutes. From there, Autonoma generates the coverage and you review it for accuracy against the specific pilot environment.

Pilots in 3 countries need 3 separate environment configurations, but the test logic can be largely shared if the product is the same. You parametrize the tests to run against each environment. The critical paths are the same (auth, core action, output). What differs is the environment URL, the credentials, and any country-specific field values. Autonoma handles this through environment-based test configuration.
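As an illustration of that parametrization, here is a minimal sketch of a per-country configuration table with a resolver. Everything specific in it is an assumption made for the example: the country keys, the `example.com` URLs, the session file paths, and the Brazilian category value are placeholders, and `resolvePilotEnv` is a hypothetical helper, not an Autonoma or Playwright API.

```typescript
interface PilotEnv {
  baseURL: string;
  storageState: string; // path to the saved SSO session for this environment
  locale: string;
  category: string;     // country-specific value for the category field
}

// Placeholder values: replace with your real environments.
const PILOT_ENVS: Record<string, PilotEnv> = {
  mx: {
    baseURL: 'https://mx.pilot.example.com',
    storageState: 'playwright/.auth/telefonica-mx.json',
    locale: 'es-MX',
    category: 'red-movil',
  },
  co: {
    baseURL: 'https://co.pilot.example.com',
    storageState: 'playwright/.auth/telefonica-co.json',
    locale: 'es-CO',
    category: 'red-movil',
  },
  br: {
    baseURL: 'https://br.pilot.example.com',
    storageState: 'playwright/.auth/telefonica-br.json',
    locale: 'pt-BR',
    category: 'rede-movel',
  },
};

// Fail loudly on an unknown or missing name so a typo never silently
// runs the suite against the wrong country.
function resolvePilotEnv(name: string | undefined): PilotEnv {
  const key = (name ?? '').toLowerCase();
  if (!Object.prototype.hasOwnProperty.call(PILOT_ENVS, key)) {
    const known = Object.keys(PILOT_ENVS).join(', ');
    throw new Error(`Unknown pilot environment "${name}". Expected one of: ${known}`);
  }
  return PILOT_ENVS[key];
}
```

In a Playwright config you could call `resolvePilotEnv(process.env.PILOT_ENV)` (the variable name is again an assumption) and pass the resulting `baseURL`, `storageState`, and `locale` into the `use` options, then run the same suite once per country in CI.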

A failure that originates in Telefonica's own SSO or network is a real risk in corporate pilot environments. Your critical path tests will flag it as a failure, which is correct. The key is to triage quickly: is the failure in your code or in the external dependency? If it's in their SSO or network, you document that immediately and communicate to your champion before IT does. Getting ahead of an infrastructure issue that originated on their side is a trust-building opportunity, not just a crisis to manage.

How Autonoma protects your Telefonica pilot

A successful Telefonica pilot can unlock a multi-country rollout worth 10x the initial contract. Autonoma is the cheapest insurance you can buy against losing that deal to a regression. One bug incident in a corporate pilot environment can delay an entire multi-country expansion by six months. A 6-hour Autonoma setup costs you nothing by comparison.

Autonoma reads your codebase and generates E2E test coverage for the three critical paths automatically. It detects your SSO integration pattern and creates tests for the Telefonica SSO auth flow. It reads your route handlers, components, and API contracts, including the Spanish field names, the Telefonica-specific workflow steps, and the compliance report format. It generates tests that cover the approved Telefonica workflow your team has been trained on, and the data output your product produces. There is no test code to write. The tests reflect the actual product, not a generic template.

Every deploy runs those tests in CI before it ships. If a critical path fails, the code does not reach production. The bug never touches Telefonica's environment. There is nothing for IT to document, nothing for procurement to note, and nothing for security to flag. The CI gate is the reason your fix can happen at 2am and your champion never hears about it.

Autonoma covers error states specifically: the 500 responses and auth failures that IT security teams flag most aggressively in vendor evaluations. Those failures do not just frustrate users. In a Telefonica pilot, they create entries in security review documentation that can reappear months later during contract negotiations. The tests catch them in staging, before they exist in any record.

A 6-hour Autonoma setup at the start of the Telefonica pilot is the difference between a clean IT security record and a paused multi-country deal.