Seedcamp Week compresses your entire investor narrative into five days. Your customer traction story is your most powerful signal, and a pilot that breaks during that window doesn't just cost you a customer. It costs you credibility with every investor in the room simultaneously. The fix is not a QA sprint. It's targeted automated coverage on the three flows your pilot customers actually walk, locked in before Seedcamp Week starts.

Seedcamp Week is not like a typical investor event. Most pitch events give you 20 minutes on stage and a cocktail hour. Seedcamp Week is five days of structured investor meetings, portfolio introductions, and due diligence conversations, with a cohort small enough that everyone knows what everyone else is doing. If you have a pilot customer, every serious investor at that event will ask you about it. If that pilot is going well, that's your round narrative. If it broke last week, that's also your round narrative.

The math of Seedcamp Week is different from other accelerator timelines because of the concentration. You're not waiting for a single Demo Day. You're living inside five days where the relevant investor community is paying attention simultaneously, and reputations travel fast. A pilot customer who hit a bug last Tuesday becomes a reference check story by Thursday.

Founders who go into Seedcamp Week with one or two paying customers or active pilots have a clear advantage over founders with zero revenue. But the line between "active pilot" and "great reference customer" is thinner than it looks, and a buggy experience in the two weeks before Seedcamp Week is exactly what pushes a pilot onto the wrong side of it.

The Timeline Math That Actually Matters

Seedcamp Week typically falls at the end of a selection process. By the time you know you're in the cohort, you have somewhere between six and twelve weeks before the week itself. That's your working window.

The instinct is to use all of that time building. New features, polish, a better onboarding flow, the integration a pilot customer asked for. That instinct is correct, but incomplete. The problem is the compounding dynamic: every feature you ship in those six weeks is a potential source of regressions in the flows your pilot customer already depends on.

Here is how the timeline actually plays out:

| Week | Typical Activity | Risk Created |
|---|---|---|
| 1-3 | Build features for pilot customer feedback | Regressions in existing flows |
| 4-5 | Close pilot, onboard first users | Integration issues surface under real usage |
| 6 | Prep Seedcamp narrative, polish demo | Deploys with low test coverage |
| Seedcamp Week | Investor meetings, reference checks | Pilots must be fully functional, real-time |

The danger zone is weeks four and five. You're shipping fast to close a pilot, and you're also onboarding that pilot's users for the first time. Real usage always surfaces edge cases that staging never caught. Those edge cases show up during the exact window when the pilot customer is forming their initial impression of your product, which is also the impression they'll share with investors who ask.

The 48-72 hours before a pilot customer's first solo session without you watching over their shoulder is the highest-stakes window in the entire Seedcamp prep cycle. You can be present for their first guided demo. You cannot be present for every session during Seedcamp Week. The product has to work on its own.

Why Shipping Fast to Win Pilots Creates the Bug That Kills Them

The tension here is real and there is no way around it. To close a pilot before Seedcamp Week, you have to ship. Probably faster than is comfortable. Features get merged without complete review. Edge cases get noted and deferred. The customer asks for something on Tuesday and you have it deployed by Thursday because closing this pilot before Seedcamp is the priority.

All of that is correct behavior. The mistake is assuming, after shipping that fast, that the whole product is still as stable as it was before.

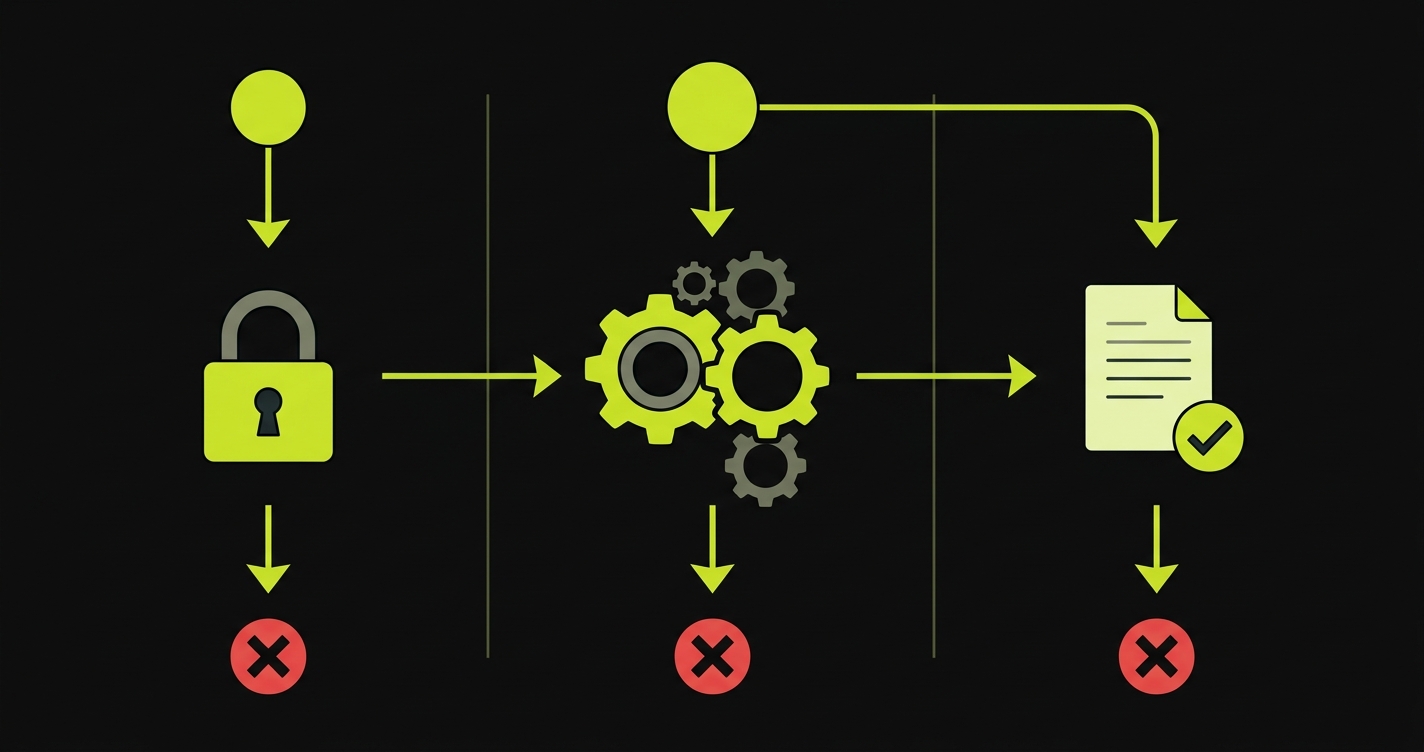

This is the regression problem. You add a new data export feature that the pilot customer asked for. The export is fine. But the route handler change you made to support the export silently broke the way results are displayed in the main table view. The pilot customer doesn't use the export feature for their first three sessions. They use the main table view. You didn't test the main table view after the deploy because it wasn't the thing you changed.

They run their first solo session during Seedcamp Week. The table is broken. They message you. You fix it in 20 minutes. But they've already formed the impression that the product is unstable, and that impression is what they share when an investor calls them for a reference check.

The fix is not slowing down shipping. The fix is adding automated coverage on the flows the pilot walks, so that the test run for the export feature catches the table regression before it reaches production.

The "Protect the Deal" Framework

Not every part of your product needs to be stable for every pilot. The pilot customer at a Seedcamp-stage company is using a subset of the product, a specific workflow that delivers the value you sold them on. Everything else is noise.

The "protect the deal" framework is simple: map the exact path this pilot customer walks, and cover only that path with automated tests. Not the admin panel, not the settings page, not the API endpoints they haven't touched yet. The path they walk.

For most B2B SaaS products at the pre-seed stage, that path has three critical moments:

1. Authentication. They need to log in every time they use the product. If login breaks, nothing else matters. A broken login the morning of an investor call where you said "you can check with our customer" is a catastrophic failure mode.

2. The core action. The thing they actually do in your product. The analysis they run, the report they generate, the workflow they trigger. This is the value delivery moment. If it fails silently or returns wrong data, the customer doesn't just have a bug report. They have a reason to question whether your product works at all.

3. Output quality. What they see after the core action completes. The results, the export, the dashboard. This is what they screenshot to share with their team, which is also what they'd screenshot to share in a Slack message to an investor contact who asks how the pilot is going.

These three moments are your test coverage requirement. If all three work reliably after every deploy, your pilot is protected.

What Automated Coverage on This Path Actually Looks Like

Here is a concrete example of what protecting a pilot's path looks like in code. Assume the product is a B2B analytics tool. The pilot customer logs in, runs a data analysis, and downloads a report.

import { test, expect } from '@playwright/test';

test('pilot customer path: login, run analysis, download report', async ({ page }) => {

// Critical moment 1: authentication

await page.goto('/login');

await page.fill('[name="email"]', process.env.PILOT_TEST_EMAIL!);

await page.fill('[name="password"]', process.env.PILOT_TEST_PASSWORD!);

await page.click('button[type="submit"]');

await expect(page).toHaveURL('/dashboard');

// Critical moment 2: core action

await page.click('[data-testid="new-analysis"]');

await page.selectOption('[name="dataset"]', 'demo-dataset');

await page.click('[data-testid="run-analysis"]');

await expect(page.locator('[data-testid="analysis-status"]')).toContainText('Complete', {

timeout: 15000

});

// Critical moment 3: output quality

await expect(page.locator('[data-testid="results-table"]')).toBeVisible();

await expect(page.locator('[data-testid="results-table"] tbody tr')).toHaveCount(

await page.locator('[data-testid="results-table"] tbody tr').count()

);

const downloadPromise = page.waitForEvent('download');

await page.click('[data-testid="download-report"]');

const download = await downloadPromise;

expect(download.suggestedFilename()).toMatch(/\.pdf$/);

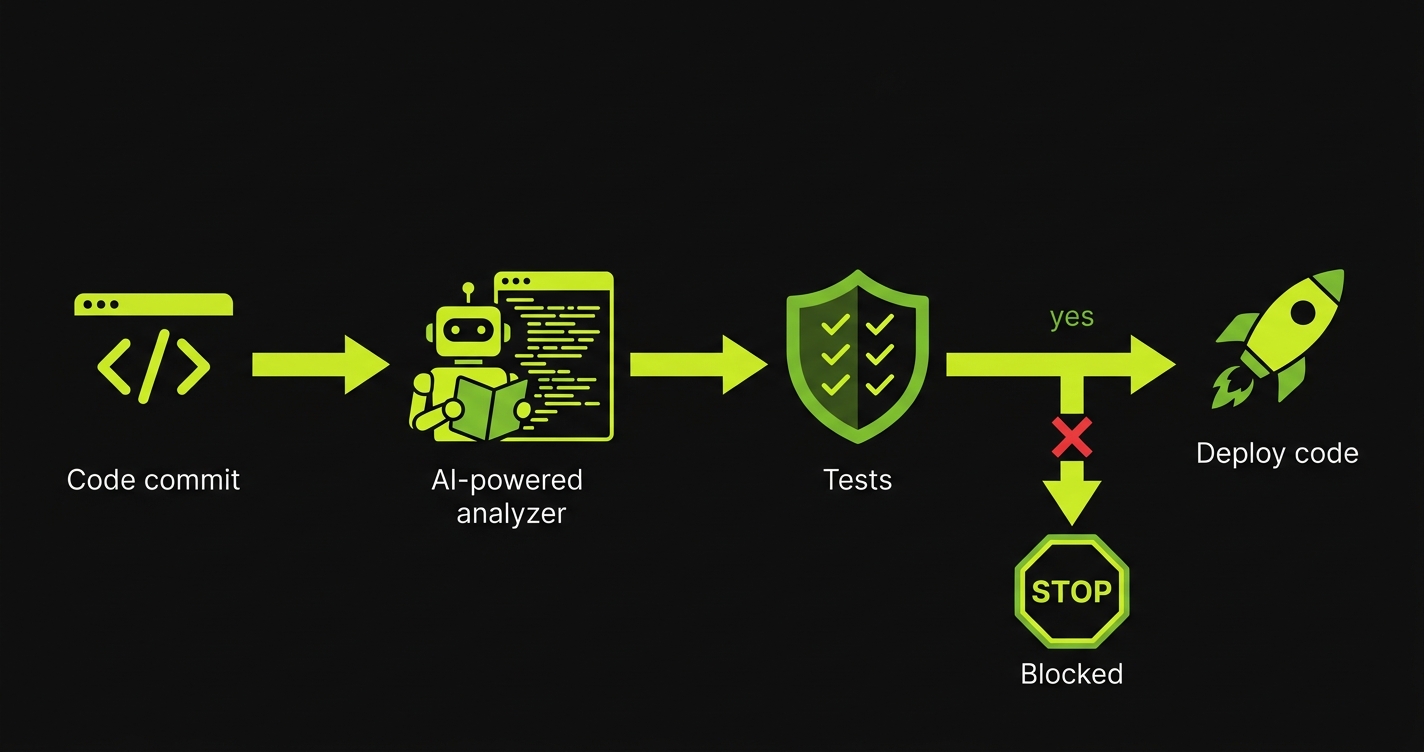

});This test runs in CI on every push to main. If any deploy breaks login, breaks the analysis, or breaks the report download, the CI check fails before the deploy goes out. The pilot customer never sees it.

The test is not comprehensive. It doesn't test edge cases, error states, or the dozen other features in your product. It tests the path the pilot walks. That's the requirement.
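One practical detail: the test reads its credentials from `process.env` with non-null assertions, so a missing variable only shows up later as a confusing login failure. A minimal sketch of failing fast instead is below. The helper name `loadPilotEnv` and the `PILOT_BASE_URL` variable are assumptions made for illustration; only `PILOT_TEST_EMAIL` and `PILOT_TEST_PASSWORD` come from the test itself.

```typescript
// Hypothetical helper: validates the environment before the pilot path test runs.
// PILOT_BASE_URL is an assumed variable name; the other two come from the test.
interface PilotEnv {
  baseURL: string;
  email: string;
  password: string;
}

function loadPilotEnv(env: Record<string, string | undefined>): PilotEnv {
  const required = ['PILOT_BASE_URL', 'PILOT_TEST_EMAIL', 'PILOT_TEST_PASSWORD'];
  // Collect every missing or empty variable so the error names all of them at once
  const missing = required.filter((name) => !env[name]);
  if (missing.length > 0) {
    throw new Error(`Missing environment variables: ${missing.join(', ')}`);
  }
  return {
    baseURL: env.PILOT_BASE_URL as string,
    email: env.PILOT_TEST_EMAIL as string,
    password: env.PILOT_TEST_PASSWORD as string,
  };
}
```

Calling `loadPilotEnv(process.env)` before the test starts turns a misconfigured CI secret into an explicit error. The returned `baseURL` can feed Playwright's `baseURL` option, which is what lets relative paths like `/login` resolve.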

The 48-72 Hour Stability Window

The single most important deployment practice before Seedcamp Week is this: do not ship to production in the 48-72 hours before your pilot customer's first solo session.

This window exists because new bugs tend to surface during the first 24-48 hours after a deploy. Not because your code is bad, but because real usage patterns exercise paths that staging and manual testing miss. The 48-72 hour window gives time for those bugs to surface during a period when you can respond quickly, rather than during the pilot's critical early sessions.

For Seedcamp Week specifically, the logic extends across the whole week. If Seedcamp Week starts on a Monday, your last production deploy should be Thursday at the latest. That gives you a full weekend to surface and fix any issues before the week begins and investors start asking about your pilot.
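The Thursday cutoff follows directly from the arithmetic. Here is a rough sketch of the freeze check, assuming a 72-hour window and illustrative dates; the function names and the specific week are made up for the example and would need to match your own calendar.

```typescript
const HOUR_MS = 60 * 60 * 1000;

// Start of the deploy freeze: `freezeHours` before the event begins.
function freezeStart(eventStart: Date, freezeHours: number): Date {
  return new Date(eventStart.getTime() - freezeHours * HOUR_MS);
}

// True when `now` falls inside the freeze, which runs from the freeze start
// through the end of the event (the whole week, not just the first morning).
function isFrozen(now: Date, eventStart: Date, eventEnd: Date, freezeHours: number): boolean {
  const t = now.getTime();
  return t >= freezeStart(eventStart, freezeHours).getTime() && t <= eventEnd.getTime();
}

// Illustrative dates only: a week starting Monday 2 June 2025, 09:00 UTC.
const weekStart = new Date('2025-06-02T09:00:00Z');
const weekEnd = new Date('2025-06-06T18:00:00Z');

console.log(freezeStart(weekStart, 72).toISOString()); // 2025-05-30T09:00:00.000Z (Friday)
console.log(isFrozen(new Date('2025-05-29T17:00:00Z'), weekStart, weekEnd, 72)); // false (Thursday)
console.log(isFrozen(new Date('2025-05-31T12:00:00Z'), weekStart, weekEnd, 72)); // true (Saturday)
```

With a Monday-morning start and a 72-hour freeze, the cutoff lands on Friday morning, which is why Thursday is the last safe day to deploy.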

The objection is always: "what if we have to ship something during that window?" The answer is: you probably don't. The features that feel urgent during the Seedcamp prep period rarely matter to the pilot's experience. The pilot doesn't care about your new analytics chart. They care about the three things they use every session. Those three things need to work.

The Investor Angle: Reference Customers Are Diligence

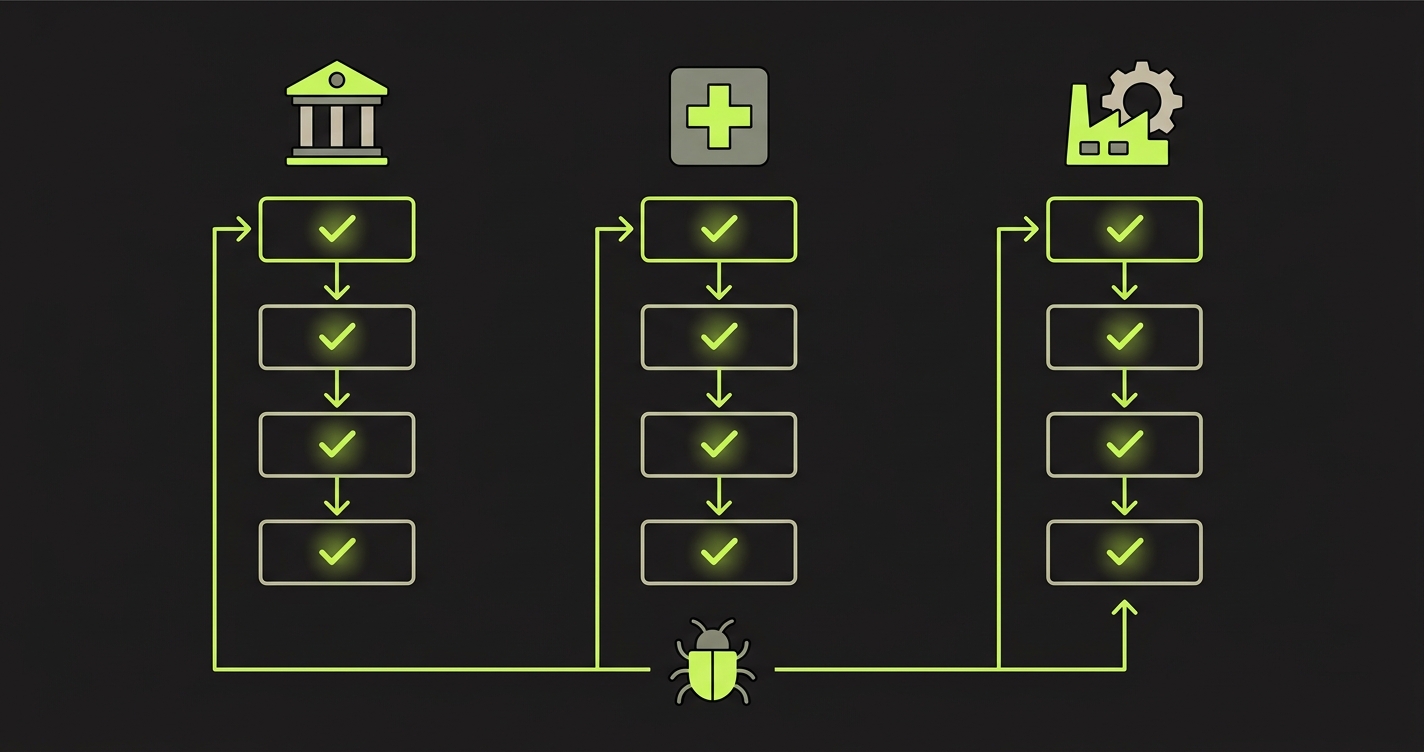

Here is the thing most Seedcamp founders underestimate. When Seedcamp or any investor in their network does diligence on your round, reference customers are a primary signal. Not just whether you have customers, but the quality of those customers' experience.

An investor call with your pilot customer goes well if the customer says: "The product works reliably, we use it every week, it's become part of our workflow." It goes badly if the customer says: "It's interesting but they're still working out some bugs" or worse, "We had a few issues early on that were a bit concerning."

The difference between those two outcomes is often a single broken session. One time the pilot tried to do their core workflow and something didn't work. That one experience is what they lead with when an investor calls.

Protecting your reference customers is not just a customer success function. It is investor diligence preparation. Every deploy you make in the six weeks before Seedcamp Week that doesn't have automated test coverage on the pilot's path is a risk to your round narrative, not just your retention.

How Autonoma Fits Into This

The honest problem with the "protect the deal" framework is time. You know you should have automated coverage on your pilot's path. You don't have time to write and maintain it while also shipping features and preparing your Seedcamp narrative.

Autonoma reads your codebase and generates the E2E tests for your critical flows automatically. You point it at your repo, tell it which flows matter, and it produces working test coverage that runs in CI. No test code to write. No selectors to maintain as the product changes. The tests stay current as the codebase evolves.

For a team of two or three people in the six weeks before Seedcamp Week, the value is not the tests themselves. It's the removal of the maintenance burden. You don't need to choose between shipping and testing. Autonoma handles the coverage while you handle the product.

The setup takes less time than writing your first Playwright test from scratch. The coverage it produces protects the three moments that matter: auth, core action, output quality, across every pilot you're running.

The Practical Prep Checklist

Six weeks out from Seedcamp Week, here is the sequence that actually protects your traction story:

First, map your pilot customer's path exactly. Log every step from login to the output they care about. This is a 30-minute exercise with your product open in front of you.

Second, write or generate automated tests for those three critical moments. If you're using Autonoma, this is automatic. If you're writing manually, follow the Playwright pattern above and timebox it to four hours.

Third, connect the tests to your CI pipeline. Every push to main runs the pilot path tests. A failing test blocks the deploy.

Fourth, establish the 48-72 hour freeze window before any high-stakes moment: the start of Seedcamp Week, a pilot's scheduled solo session, an investor demo.

Fifth, create a test account in your production environment that mirrors the pilot customer's setup. The test runs against production, not staging, because that's what the pilot is actually using.

That's it. This is not a comprehensive QA practice. It's a targeted protection mechanism for the specific moment where a bug would do the most damage to your round.

Stop non-essential deploys 48-72 hours before Seedcamp Week starts, and ideally by the Thursday before if Seedcamp begins on a Monday. This gives time for any late-breaking bugs from your last deploy to surface while you can still respond. If something critical needs to ship during Seedcamp Week itself, run the full pilot path test suite in staging first, then deploy to production with someone ready to roll back within the hour.

Write a separate test suite for each pilot's critical path. The investment is small since each path has roughly the same three-moment structure: login, core action, output. The difference is which specific buttons they click and what output they expect. For Seedcamp Week, prioritize the pilot most likely to get an investor reference check call, which is usually the most recognizable company name or the highest contract value.

You don't need to explain automated tests to your pilot customer. What you can tell them, honestly, is that you have monitoring on the core workflows they use and you'll know before they know if something breaks. That's a credible thing to say to a pilot customer at the pre-seed stage. It signals engineering rigor without overpromising on your QA maturity.

At the pre-seed and seed stage, reference checks are usually informal. An investor knows someone at your pilot company, or they ask you for a reference and call them directly. The conversation is often: 'Is the product working? Do they respond when there are issues? Would you pay for it?' A pilot that broke once and got fixed quickly is often fine. A pilot that feels unreliable, even if individual issues were resolved, damages the reference. The pilot's overall sense of your product's stability is the signal that travels.

The fastest path is Autonoma, which reads your codebase and generates the tests without any manual scripting. If you're going DIY, use Playwright with TypeScript, write the three-moment test for your pilot's path, and connect it to GitHub Actions. Time-box the manual approach to four hours. If you're still writing tests after four hours, you're overthinking the coverage and should scope down to a simpler version of each critical moment.

Separate your deployment targets by customer segment. Changes that affect flows your pilot customer doesn't use can still ship. Changes that touch auth, the core action, or the output view require a full pilot path test run before deploy. The test suite is your gate on pilot-affecting changes, not a gate on all development. You can keep shipping aggressively to parts of the product the pilot isn't using.
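One way to sketch that gate is a check on which files a change touches. The directory prefixes below are hypothetical and would need to match your own repository layout. The check is only a heuristic: shared code, such as the route handler in the export example earlier, belongs in the protected list too, and when in doubt the safer default is to run the suite.

```typescript
// Hypothetical path prefixes for the code behind the three critical moments.
// Shared modules that these flows depend on should be listed here as well.
const PILOT_PATH_PREFIXES = ['src/auth/', 'src/analysis/', 'src/reports/'];

// Returns true when any changed file sits under a protected prefix,
// meaning the pilot path tests should run before this change deploys.
function touchesPilotPath(
  changedFiles: string[],
  prefixes: string[] = PILOT_PATH_PREFIXES,
): boolean {
  return changedFiles.some((file) => prefixes.some((prefix) => file.startsWith(prefix)));
}
```

For example, `touchesPilotPath(['src/settings/theme.ts'])` returns `false`, while `touchesPilotPath(['src/auth/login.ts', 'README.md'])` returns `true`. The list of changed files could come from your version control diff in CI.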