TLDR: Startup Chile Demo Day is the moment you prove your product works in a new market. If you came from outside Chile, your pilot customers are the first evidence of local product-market fit. A bug-filled pilot doesn't just lose the deal. It destroys the market validation thesis you're there to prove. This post covers how to get automated coverage on the critical paths your pilot customers walk, so you can keep shipping and still show up to Demo Day with a clean story.

The specific pressure Startup Chile founders carry into Demo Day

Startup Chile is unusual among global accelerators because roughly 40% of its founders are international. They came to Santiago to enter a new market. The program gave them a base, introductions, and in many cases an equity-free grant to build with. But none of that matters if they can't prove, by Demo Day, that their product actually works here.

The word "here" is doing a lot of work in that sentence. It means: for Chilean customers, in Spanish, handling Chilean tax IDs and payment flows, inside Chilean enterprise IT environments, with Chilean procurement teams who have their own evaluation criteria. Getting a product to work "here" is a real engineering and product challenge, not just a sales challenge.

The founders who entered the Ignition program 6 months ago are now in the final stretch. They have pilot customers. They are still shipping product changes to accommodate local market requirements. And Demo Day is weeks away.

Here's the tension: every feature you ship to satisfy a local customer requirement is also an opportunity to break something the customer already depends on. You are adding Chilean RUT validation to your onboarding flow. You are adding local payment method support. You are adding Spanish-language email notifications. Each of these is a real change to your codebase, and each of them can break the auth flow, the core action, or the output that your pilot customer has already validated and is relying on.

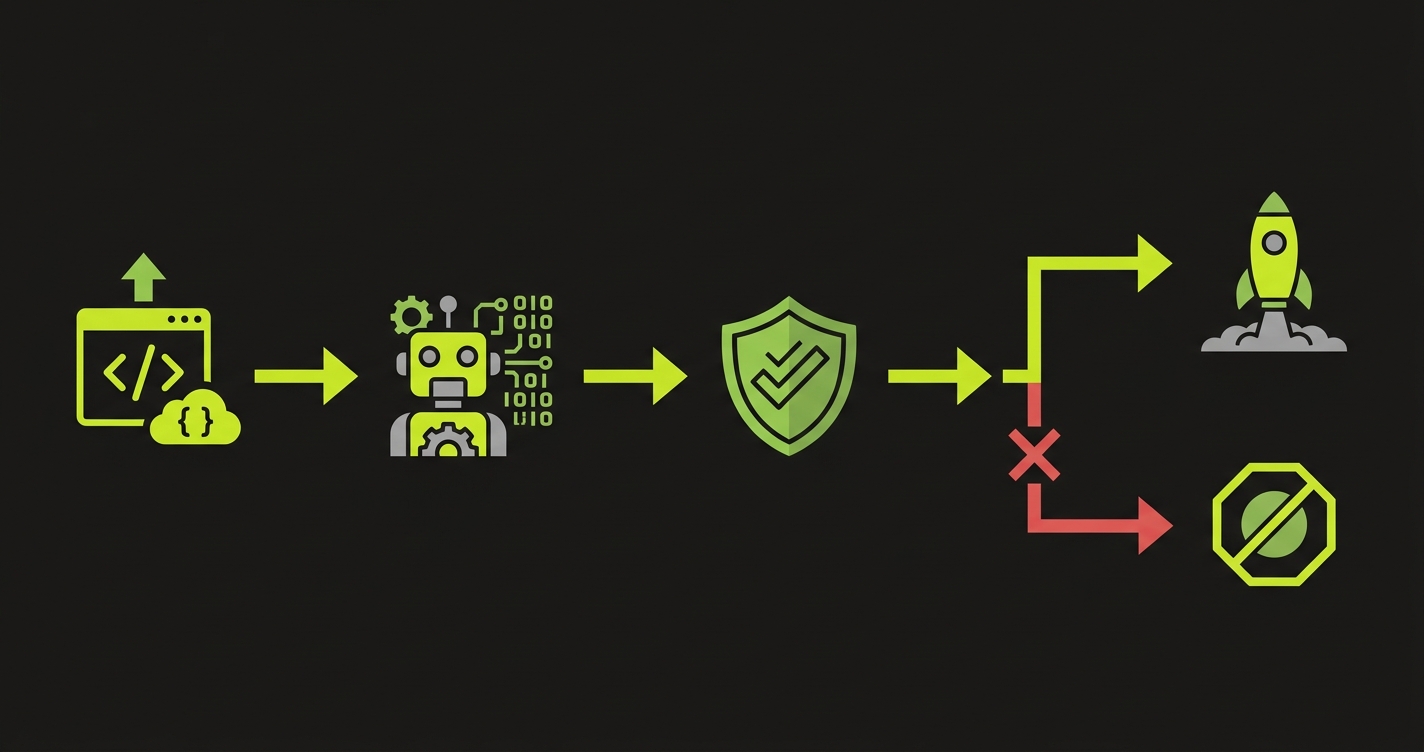

The companies that show up to Startup Chile Demo Day with clean pilot stories are therefore the ones that figured out how to make those market-specific changes without breaking the things that already work. Automated coverage on the critical paths is how you do that.

What it actually means to "validate the market" with a pilot

When a Startup Chile founder says "we have a paying customer in Chile," they are claiming something specific. They are claiming that their product: (1) works in the local technical environment, (2) satisfies a real local business need, and (3) is stable enough for a customer to build a workflow around.

All three of those things can be undermined by a single bug at the wrong moment.

A bug during onboarding undermines claim 1. The customer sees a broken experience in their first session and concludes the product was built for a different market.

A bug in the core action undermines claim 2. The customer can't complete the workflow they signed up for. They don't get the value. The pilot pauses or ends.

A bug in the output undermines claim 3. The customer exported a report with wrong data, or the dashboard showed incorrect numbers, or the email notification went to the wrong address. They shared that output with their team. Now the bug is visible to people who were never part of the pilot approval process.

Each of these is recoverable in isolation. But when you're 5 weeks from Demo Day and trying to close the pilot, a bug is not just a technical problem. It is a threat to your ability to tell a clean story on stage.

The S Factory and Scale program dynamics

The same logic applies whether you're in Ignition, Scale, or S Factory, but the stakes are calibrated differently.

For Ignition founders (pre-seed, first market validation), the pilot customer is often the first paying customer ever. There is no backstory of reliability to fall back on. If this pilot has a bug incident, there is no "well, it's been stable for us since 2023" to say. You are building credibility from zero. The pilot needs to be flawless.

For Scale founders (growth stage, expanding in LatAm), you have a track record but you're pushing into new territories. You might be adapting a product that works well in Brazil for the Chilean market, or vice versa. The localization changes are exactly where bugs hide. Your existing test suite, if you have one, was written for your original market. It probably does not cover the new flows you added for Chile.

For S Factory founders (the track for women-led startups), there's an additional dimension: you are often navigating enterprise procurement processes with fewer internal advocates than a mixed-gender founding team might find at the same customer. Your champion is working harder to build internal consensus. A bug in front of an executive review can undo months of relationship-building in one session.

In all three cases, the answer is the same: you need automated coverage on the critical paths specific to each pilot customer.

Localization changes are where bugs hide

Here's something specific to international founders building for the Chilean market: the bugs that surface during localization are almost never caught by developers building in their home market context.

You add RUT validation to your signup form. You test it with a few sample RUTs. It works. But you missed the edge case where an older corporate RUT format fails the regex, and your pilot customer's company uses that format. The employee can't sign up. They send a screenshot to their manager.
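One way to make the RUT check less brittle than a single regex is to normalize the input first and then verify the modulo-11 check digit. The sketch below assumes that the format variations you need to tolerate are dots, hyphens, spaces, a lower-case `k`, and shorter numeric bodies; the function names are hypothetical, and the formats your pilot customer actually uses should be confirmed with them.

```typescript
// Hypothetical helpers for illustration; not part of any library.

/** Strips dots, hyphens, and whitespace, and upper-cases the check digit. */
function normalizeRut(input: string): string {
  return input.replace(/[.\-\s]/g, "").toUpperCase();
}

/** Computes the modulo-11 check digit for the numeric body of a RUT. */
function computeCheckDigit(body: string): string {
  let sum = 0;
  let multiplier = 2;
  // Walk the digits right to left, cycling the multiplier through 2..7.
  for (let i = body.length - 1; i >= 0; i--) {
    sum += Number(body[i]) * multiplier;
    multiplier = multiplier === 7 ? 2 : multiplier + 1;
  }
  const result = 11 - (sum % 11);
  if (result === 11) return "0";
  if (result === 10) return "K";
  return String(result);
}

/** Returns true when the body is 1 to 8 digits and the check digit matches. */
function isValidRut(input: string): boolean {
  const rut = normalizeRut(input);
  if (!/^\d{1,8}[0-9K]$/.test(rut)) return false;
  const body = rut.slice(0, -1);
  const checkDigit = rut.slice(-1);
  return computeCheckDigit(body) === checkDigit;
}
```

Accepting the undotted and lower-case variants at the input boundary means the stored value is always in one canonical form, so the formats your sample RUTs happened to miss never reach the rest of the system.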

You add Chilean peso formatting to your pricing display. You test it in Chrome on your MacBook. But your enterprise customer's IT department mandates Internet Explorer 11, and the number formatting library you used doesn't handle IE11. The prices display as NaN or as unformatted integers. The customer's finance team sees a screen full of raw numbers and immediately escalates.
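A defensive formatter can at least guarantee that a bad value or a missing browser feature never reaches the screen as `NaN`. The following is a minimal sketch that avoids `Intl` and newer `Number` methods, on the assumption that those are what fail in the older browser; `formatClp` and the `"N/D"` placeholder are hypothetical choices, not an existing API.

```typescript
// Hypothetical fallback formatter for Chilean pesos.
function formatClp(amount: number): string {
  // Guard first so an invalid value is shown as a placeholder, never as "NaN".
  if (!isFinite(amount)) return "N/D";
  // CLP is defined with zero decimal places, so round to a whole number.
  const rounded = Math.round(amount);
  const sign = rounded < 0 ? "-" : "";
  // Insert a dot before each group of three digits, counting from the right.
  const digits = String(Math.abs(rounded)).replace(/\B(?=(\d{3})+(?!\d))/g, ".");
  return sign + "$" + digits;
}
```

Whichever formatter you ship, the point is to run it in the browser the customer's IT department mandates, not only in the one on your laptop.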

You add a "generate monthly report" feature with Spanish field labels. You test the happy path. But you didn't test the case where a user has a special character in their name (very common in Spanish names), and the PDF generation library crashes on ñ or ü. The customer tries to generate their first report and gets a 500 error.
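One plausible contributor to this kind of crash is that the same visible character can arrive in two Unicode forms: a single precomposed code point, or a base letter followed by a combining mark. The sketch below normalizes names to NFC before they are handed to a report generator and keeps a small set of made-up fixture names for regression tests. Whether this addresses the failure in your PDF library is an assumption to verify; `normalizeDisplayName` and `sampleNames` are hypothetical.

```typescript
// Hypothetical helper: prepares a display name before it reaches a PDF library.
function normalizeDisplayName(name: string): string {
  // NFC folds "n" + combining tilde (U+0303) into the single code point U+00F1.
  return name.normalize("NFC").trim();
}

// Made-up fixture names for regression tests; not customer data.
const sampleNames: string[] = [
  "Muñoz",
  "Agüero",
  "Peñaloza",
  "María José Núñez",
];
```

Feeding every fixture through the report path in a test is cheap, and it turns "we tested the happy path" into "we tested the names our users actually have."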

These bugs are not signs of incompetence. They are the natural output of building fast for a market you're still learning. The question is whether you catch them before the customer does.

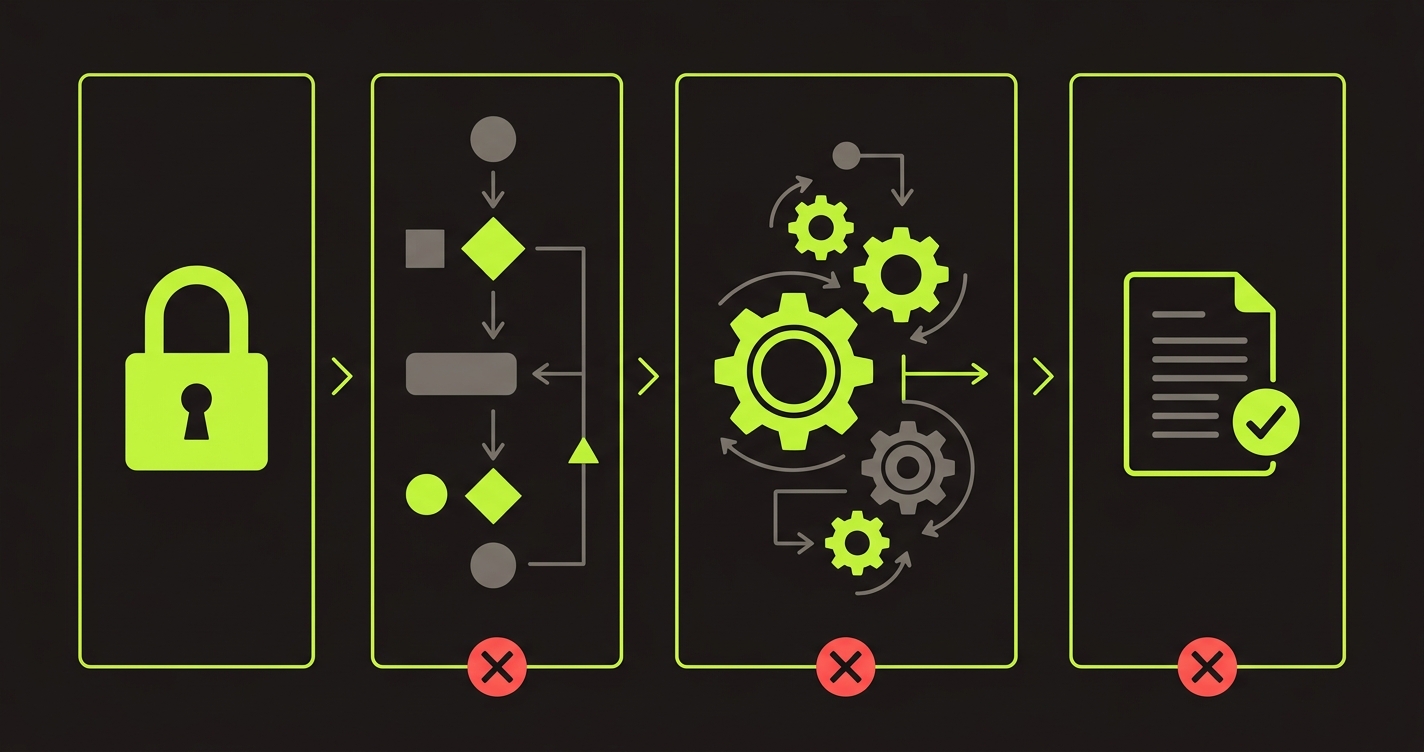

The critical path framework for Startup Chile pilots

The three paths that cannot break:

Authentication, including any Chilean-specific identity fields. If you added RUT or other local identifiers to your auth or onboarding flow, those need to be in your test coverage. Don't just test the happy path. Test the formats your pilot customers actually use.

The core action, in the localized version. Whatever your product's primary value-delivering action is, the localized version of that flow needs its own coverage. If you added Spanish-language options, local payment methods, or Chile-specific data fields, test those specific paths. Your original English-language happy path test is not sufficient.

Report and output generation, including any local formatting. If your product generates output that your customer shares with their own team, test that output. Test that it generates correctly, that the data in it is accurate, and that it doesn't fail on any special characters or formats common in Chilean business data.

Write these three sets of paths down. Then make sure they have automated test coverage that runs every time you deploy.
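Writing the paths down can be as simple as a typed inventory checked into the repo. The sketch below is one possible shape, with hypothetical customer names, descriptions, and file paths; it records which of the three path kinds each pilot customer has covered and reports the gaps.

```typescript
type CriticalPathKind = "auth" | "core-action" | "output";

interface CriticalPath {
  kind: CriticalPathKind;
  customer: string; // pilot customer this path belongs to
  description: string;
  testFile: string | null; // null until an automated test exists
}

const ALL_KINDS: CriticalPathKind[] = ["auth", "core-action", "output"];

// Illustrative entries only; names and paths are assumptions.
const criticalPaths: CriticalPath[] = [
  {
    kind: "auth",
    customer: "cliente-a",
    description: "Login with a RUT in the format the customer uses",
    testFile: "tests/cliente-a/auth.spec.ts",
  },
  {
    kind: "core-action",
    customer: "cliente-a",
    description: "Create and process an invoice in CLP",
    testFile: "tests/cliente-a/factura.spec.ts",
  },
  {
    kind: "output",
    customer: "cliente-a",
    description: "Export the monthly report as PDF",
    testFile: null,
  },
];

/** Lists the inventory entries that still have no automated test. */
function uncoveredPaths(paths: CriticalPath[]): CriticalPath[] {
  return paths.filter((path) => path.testFile === null);
}

/** Lists the path kinds a given customer does not yet have covered. */
function missingKinds(paths: CriticalPath[], customer: string): CriticalPathKind[] {
  const covered = new Set(
    paths
      .filter((p) => p.customer === customer && p.testFile !== null)
      .map((p) => p.kind),
  );
  return ALL_KINDS.filter((kind) => !covered.has(kind));
}
```

A check like `missingKinds(criticalPaths, "cliente-a")` can run in CI and fail the build while any of the three kinds is still uncovered for a live pilot.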

The practical setup with Autonoma

Here's how you get from "no test coverage" to "critical paths covered" in less than a week, without your engineers stopping feature work.

```typescript
// Example: Autonoma-generated test for Chilean market pilot critical path
// This covers RUT-based login + core action + export for a B2B SaaS product
import { test, expect } from '@playwright/test';

test.describe('Chile pilot: RUT login and report generation', () => {
  test('pilot user completes full workflow with Chilean locale', async ({ page }) => {
    // Auth: RUT-based login (placeholder RUT with a valid modulo-11 check digit)
    await page.goto('/login');
    await page.fill('[data-testid="rut-input"]', '76.543.210-3');
    await page.fill('[data-testid="password"]', process.env.PILOT_USER_PASSWORD!);
    await page.click('[data-testid="login-button"]');
    await expect(page).toHaveURL('/dashboard');
    await expect(page.locator('[data-testid="user-greeting"]')).toBeVisible();

    // Core action: create and process a Chilean invoice record
    await page.click('[data-testid="nueva-factura"]');
    await page.fill('[data-testid="monto-input"]', '1.250.000');
    await page.selectOption('[data-testid="moneda"]', 'CLP');
    await page.fill('[data-testid="rut-proveedor"]', '77.123.456-9');
    await page.click('[data-testid="procesar"]');
    await expect(page.locator('[data-testid="estado"]')).toContainText('Procesado', {
      timeout: 15000
    });

    // Output: export report with special characters in company name
    // Start waiting for the download before clicking so the event is not missed
    const downloadPromise = page.waitForEvent('download');
    await page.click('[data-testid="exportar-informe"]');
    const download = await downloadPromise;
    const filename = download.suggestedFilename();
    expect(filename).toMatch(/informe.*\.pdf/);

    // Verify the suggested file name does not signal an error state
    expect(filename).not.toContain('error');
  });
});
```

Autonoma generates tests like this from your codebase. You don't write them manually. You connect your repo, point Autonoma at the flows that matter, and it produces the coverage. Then you add it to CI and it runs on every push.

The localization-specific parts (RUT format, peso amounts, Spanish field labels) are things Autonoma picks up from your actual codebase because they're in your component code and your API definitions. You review the generated tests to confirm they match reality, and then they run automatically from that point forward.

The 48-72 hour stability window for new market pilots

For international founders, the first solo session with a Chilean customer has an extra layer of risk. Your customer's users have never used your product without you on the call explaining things. Their experience of the product in that first independent session forms their first impression of whether it's "a product that works in Chile" or "a foreign product that sort of works here."

If they encounter a bug in that session, the mental model they form is the second one. And that mental model is hard to change.

The protocol: identify the date your pilot customer's team is scheduled to start using the product independently. Do not deploy anything to production in the 48 hours before that date unless the deployment passes a full run of your critical path test suite. If the test suite fails, you hold the deploy. The feature waits. The pilot comes first.

This is a prioritization decision, not a technical one. It requires the founder to make a deliberate choice to protect the pilot window. The test suite just gives you the visibility to make that choice confidently.
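The hold rule itself is small enough to encode, which makes it harder to bend under deadline pressure. The sketch below is one way to express it, assuming a 48-hour window by default and a boolean result from the critical path suite; the function and parameter names are hypothetical. Outside the window it allows the deploy, since the protocol above only constrains the window.

```typescript
type DeployDecision = "deploy" | "hold";

const MS_PER_HOUR = 60 * 60 * 1000;

/**
 * Decides whether a production deploy may go ahead.
 * Inside the freeze window before the pilot's first independent session,
 * only a passing critical path run lets the deploy through.
 */
function decideDeploy(
  now: Date,
  pilotStart: Date,
  criticalSuitePassed: boolean,
  freezeWindowHours: number = 48,
): DeployDecision {
  const hoursUntilPilot = (pilotStart.getTime() - now.getTime()) / MS_PER_HOUR;
  const insideWindow = hoursUntilPilot >= 0 && hoursUntilPilot <= freezeWindowHours;
  if (insideWindow && !criticalSuitePassed) return "hold";
  return "deploy";
}
```

In practice a check like this would sit in the deploy pipeline with `pilotStart` read from wherever you track pilot dates, so the founder's decision is made once, in advance, rather than renegotiated on each push.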

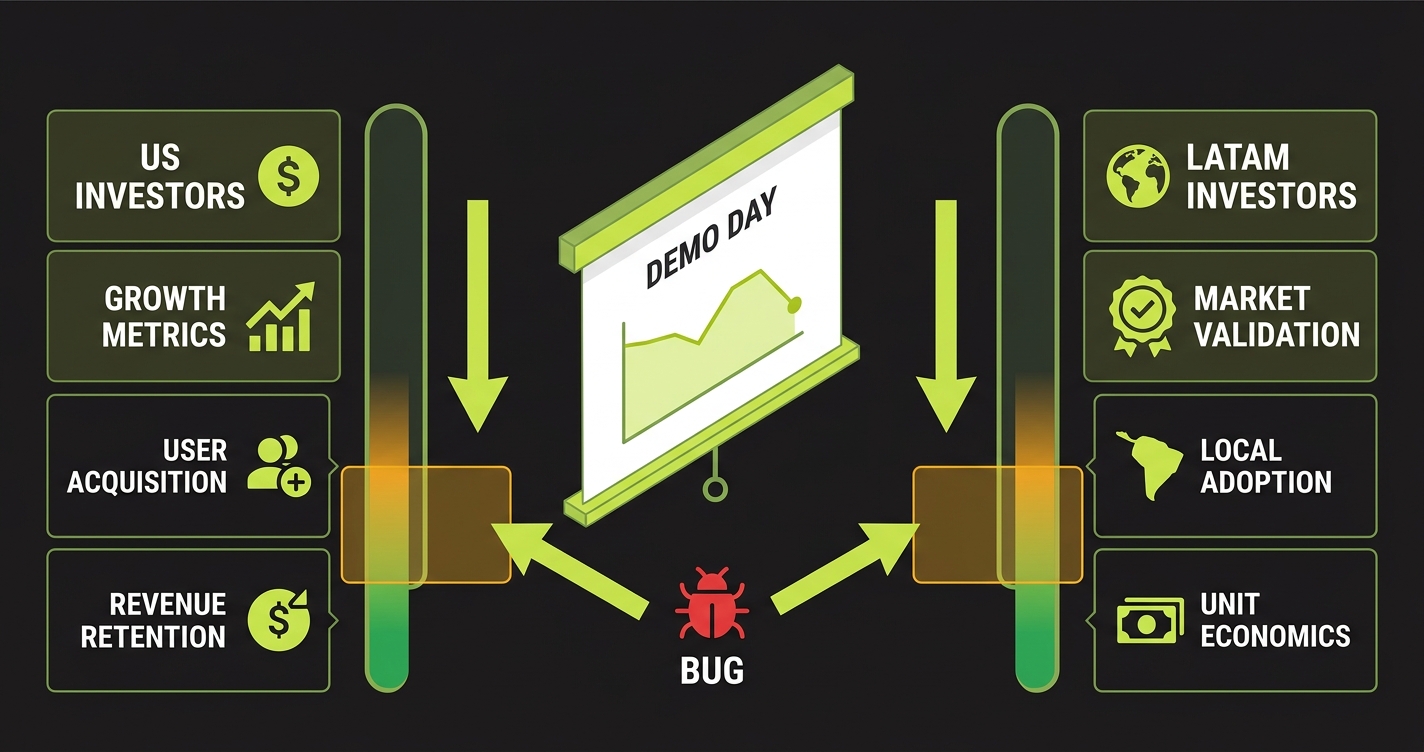

The Demo Day story you're trying to tell

On Startup Chile Demo Day, the investor story you want to tell is: "We entered the Chilean market 6 months ago, we have two paying customers, and both of them are actively using the product and expanding." That story has three requirements: real customers, active usage, and expansion signals.

Active usage is the one that testing protects. If your pilot customers stopped using the product because of a bug incident 3 weeks ago, and you've been trying to re-engage them since, you do not have active usage. You have a churned pilot that you're trying to resurrect.

The companies that show up to Demo Day with a clean story are the ones that never let their pilots go dormant. They shipped fast and they stayed stable. That combination is achievable. It requires deliberate decisions about which paths to protect and the right tools to protect them automatically.

| Pilot risk scenario | Consequence for Demo Day story |
|---|---|
| Bug in auth flow, customer can't log in for 2 days | "We had technical issues early on": weakens market validation claim |
| Bug in core action during customer's first solo session | Pilot pauses, champion loses enthusiasm, deal may not close in time |
| Bug in report output, bad data shared internally | Pilot escalates, IT gets involved, procurement review added |
| No bugs, pilot runs clean for 6 weeks | "Two active, paying customers in Chile": clean Demo Day story |

FAQ

In localization code. Anywhere you've added Chilean-specific fields (RUT, CLP formatting, Chilean address structure), Spanish-language UI changes, or new integrations with Chilean payment or tax services. These areas were not part of your original test coverage, and they're exactly where the customer-visible bugs will appear. Autonoma can generate coverage for these new flows directly from the localized codebase.

The framework is the same but the stakes are higher. Government agency IT environments in Chile often have additional constraints: specific browser requirements, network restrictions, single sign-on configurations tied to government identity systems. Map the three critical paths in the context of those constraints. If you have a staging environment that mirrors the agency's setup, run your critical path tests there before each deploy.

You need coverage for each customer's specific critical paths, but you don't necessarily need completely separate test suites. If the core product is the same and only some configuration differs, you can parametrize tests to run against each customer's environment. What you can't do is test only the first customer's paths and assume the second is covered. Autonoma generates coverage per-flow, so you can generate for both and run both in CI.
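Parametrizing can be sketched as a small matrix: one list of pilot environments, one list of shared paths, and a function that expands them into named test cases. The customer names, URLs, and paths below are hypothetical placeholders, and the sketch deliberately stops short of any test runner so it stays self-contained.

```typescript
interface PilotEnvironment {
  customer: string;
  baseUrl: string;
  locale: string;
}

interface PathTestCase {
  title: string;
  url: string;
  locale: string;
}

// Placeholder environments; replace with each pilot customer's real setup.
const pilots: PilotEnvironment[] = [
  { customer: "cliente-a", baseUrl: "https://cliente-a.example.com", locale: "es-CL" },
  { customer: "cliente-b", baseUrl: "https://cliente-b.example.com", locale: "es-CL" },
];

// Paths shared by every pilot; assumed to match the three critical paths.
const sharedPaths: string[] = ["/login", "/facturas/nueva", "/informes"];

/** Expands every environment and path combination into one named test case. */
function buildTestMatrix(envs: PilotEnvironment[], paths: string[]): PathTestCase[] {
  return envs.flatMap((env) =>
    paths.map((path) => ({
      title: `${env.customer}: ${path}`,
      url: new URL(path, env.baseUrl).toString(),
      locale: env.locale,
    })),
  );
}
```

Each entry in the resulting list can then be registered in a loop with your test runner, so adding a second customer is one new line in `pilots` rather than a copied suite.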

The test coverage you build before Demo Day is also the foundation of your post-program stability. If you close a customer during or after Demo Day and they expand usage, you have coverage protecting that expansion. The work is not throw-away. The critical paths you protect for Demo Day are the same paths your customers will be on for the next year.

Yes. Autonoma is designed for teams without dedicated QA. A contractor who can set up a CI pipeline and review generated test code can get you up and running. The core setup work is: connect the repo, identify the critical paths, review the generated tests, add to CI. That's a day of work for a competent contractor, not a multi-week engagement.

Same framework applies. Map the three critical paths for that customer's environment. If they're in another LatAm country, account for their local market specifics in the test setup. The pilot protection logic doesn't change based on which accelerator you're in. What changes is the specific content of the critical paths you're protecting.