The Techstars customer problem: The Techstars network is powerful precisely because mentors make warm intros to real companies who take your call. That advantage disappears the moment one of those companies has a buggy experience during their pilot. Founders in weeks 8-12 are shipping fast to win new customers while simultaneously running pilots that came from mentor intros. A bug during one of those pilot sessions does not just cost the deal. It costs you the mentor relationship and the next intro they were going to make. The solution is not to slow down. It is to build surgical coverage over the paths your pilot customers walk, so you ship fast everywhere else while protecting the deals that matter.

The Techstars pitch is simple and true: the network is everything. In 13 weeks, across programs in Berlin, NYC, Boston, Boulder, London, and 100 other cities, you get access to a mentor network that most founders spend years trying to build. Those mentors do something specific and valuable: they make introductions to potential customers who take the meeting because of the mentor relationship, not because of your cold outreach.

That network effect has a sharp edge. When a mentor intro becomes a pilot customer and that customer has a bad experience, the failure ripples backward through the relationship. Your mentor hears about it. The next intro they were considering gets delayed. The "Do More Faster" philosophy that Techstars is built on collides with the operational reality that doing more faster also means shipping more bugs.

Therefore: the question every Techstars founder needs to answer in weeks 7-12 is not just how to close customers before Demo Day. It is how to close customers before Demo Day without a bug surfacing in a mentor-sourced pilot and creating a problem that reaches back into the mentor network you depend on for the next intro.

The Techstars Timeline Math

Techstars runs 13 weeks. The program structure creates a natural customer acquisition window that most founders only see clearly in retrospect.

Weeks 1-3: orientation, mentor meetings, product positioning. You're in back-to-back meetings, refining the pitch, figuring out which mentors are relevant.

Weeks 4-8: the mentor network activates. This is when the intros happen. A mentor who runs a SaaS company hears your pitch and says "you should talk to my portfolio company, they have exactly this problem." Those conversations happen in weeks 4-8. If you handle them well, you convert a percentage of them to pilots starting in weeks 8-10.

Weeks 8-12: pilots are running. This is also when you're shipping the most code, because you're now deep enough in the program to know what your customers actually need, and you're building to their specific requirements to convert the pilots that are evaluating you.

Week 13: Demo Day. Investors from your city and beyond. Your mentor network in the audience. The question on the table: do you have customers?

Working backward from Demo Day: a pilot that starts in week 8 has four weeks to close before week 12 gives you breathing room before the event. A pilot that starts in week 10 needs to close in two weeks to make it. A pilot that should close in three weeks but has a mid-pilot incident takes four to five weeks and misses the window.

Every bug that surfaces in an active pilot adds time to the close cycle. In the Techstars timeline, that time is not available.

Why Mentor Intros Create a Specific Risk Profile

A cold-sourced customer pilot and a mentor-sourced customer pilot are different in one critical way: the accountability structure.

When you get a customer from your own outreach, the relationship runs directly between your company and theirs. If something goes wrong, you handle it with the customer. The loop is contained.

When a mentor makes the intro, the mentor is part of the relationship. The customer took the meeting as a favor to the mentor. If the pilot goes badly, the customer's feedback goes two directions: to you, and, often casually in conversation, back to the mentor. "We tried that company you introduced us to. Had some issues."

Mentors are not going to stop making intros because one pilot had a bug. But they will make fewer intros to that founder going forward, and they will frame future intros with a caveat. "They're still early but worth a conversation" is a different intro than "I've seen what they're building and it's solid." That framing affects whether the next potential customer takes the pilot seriously.

This is the specific risk in the Techstars context. The network that makes it possible to get customers in a 13-week window is also the network that gets informed when those customers have bad experiences. Protecting your pilots is not just protecting the deals. It is protecting your access to the deal pipeline.

The "Protect the Deal" Framework

The instinct when you're running three pilots and building a fourth feature is to ship continuously and handle issues as they surface. That instinct is correct for feature development. It is wrong for pilot management.

The right mental model: separate your shipping surface from your pilot surface. Your pilots walk a narrow path through your product. They log in, they run a specific workflow, they look at a specific output, they export or share it. That path is maybe five to eight screens wide. Your product is fifty screens wide. Bugs on the forty-five screens your pilot customer never touches are irrelevant to the deal. Bugs on the five screens they do touch are deal-ending.

The practical implementation: before any deployment reaches production, run automated checks on the paths your active pilot customers walk. Not a full regression suite. Not comprehensive QA. A check covering the login flow, the primary workflow, and the output they evaluate at the end of each session.

This check takes two minutes to run. It runs on every pull request. When a change you made to support a new pilot breaks something in an existing pilot's path, you catch it in CI before it reaches production. The existing pilot's session happens cleanly. The deal stays on track.

What to Cover: The Three Moments That Cannot Break

For every active pilot, there are three moments where a bug is guaranteed to cost the deal.

Authentication. If a pilot customer cannot log in, the session does not happen. This sounds obvious. It is also the most common first impression bug. You added SSO for a new customer. The change touched an auth library version. A different customer's Google OAuth stopped working. You didn't know because you don't test the auth flow before every deploy. They found out Monday morning.

Cover every auth path that any active pilot uses. If one pilot uses username/password and another uses Google SSO and a third has a custom SAML configuration, test all three before every deploy. Auth is three minutes of test code. It protects 100% of sessions.

Core action. The thing your product does. For a data tool, it's the data transform or analysis. For a workflow tool, it's the workflow execution. For an AI tool, it's the generation or extraction. Whatever the pilot customer came to evaluate: that action must complete successfully. Cover it with an end-to-end test that runs the action and asserts on completion and output shape.

Output quality. Pilot customers are evaluating your product against a criterion: does this work well enough for us to pay for it? Their evaluation happens when they look at the output. If the output is malformed, missing, or wrong, the session ends in the wrong direction. Assert on the output. Not that it's beautiful: that it's present, structurally correct, and contains the expected elements for the scenario you're testing.

These three cover the moments that kill deals. Everything else is secondary.

The 48-72 Hour Stability Window

The most dangerous deployment in the Techstars context is the one that lands right before a pilot customer's first solo session.

Here's the pattern that founders run into. They onboard a pilot customer on Tuesday, walk them through the product, and the session goes great. The customer is excited. They'll do their first solo session Thursday or Friday. On Wednesday, you ship a new feature that Prospect D asked for, the feature that will convert them from interested to pilot. The deploy goes out Wednesday afternoon.

Thursday morning, your Tuesday onboarding customer tries to run the workflow they saw in the session. Something is broken. Not badly, but the output format changed slightly, or a button moved, or a loading state is different than it was during the demo. They send you a message. You fix it and respond. But the session they were going to have on Thursday becomes a check-in call on Friday, which pushes their evaluation by a week.

That week costs you the deal before Demo Day.

The fix: when you onboard a pilot customer, mark the next 48-72 hours as a stability window. During that window, do not deploy to production unless you have a critical bug fix. After the customer completes their first independent session and sends you positive feedback, resume normal shipping. They've now seen the product work on their own. Their mental model is set. Subsequent changes are evaluated in context of an established experience rather than a fragile first impression.

This does not require slowing down your shipping velocity overall. It requires awareness of which customers are in their first-session window and timing your deploys accordingly. In a 13-week program, this is a 3-day operational discipline, not a structural constraint.

The Automated Coverage Stack for Weeks 8-12

For a Techstars company running three to five pilots in weeks 8-12, here is the test coverage stack that protects the deals without requiring a QA team.

// Techstars Demo Day protection suite

// Run before every deployment to production

// One test file per active pilot + shared auth/core coverage

// --- SHARED: Auth coverage for all pilot auth paths ---

test.describe('auth: all pilot entry paths', () => {

test('password login', async ({ page }) => {

await page.goto('/login')

await page.fill('[data-testid="email"]', process.env.PILOT_TEST_EMAIL!)

await page.fill('[data-testid="password"]', process.env.PILOT_TEST_PASSWORD!)

await page.click('[data-testid="submit"]')

await expect(page).toHaveURL('/dashboard')

await expect(page.locator('[data-testid="user-nav"]')).toBeVisible()

})

test('google oauth redirect', async ({ page }) => {

// Verify OAuth entry is reachable and redirects correctly

await page.goto('/login')

const googleBtn = page.locator('[data-testid="google-login"]')

await expect(googleBtn).toBeVisible()

await expect(googleBtn).toBeEnabled()

})

})

// --- CORE ACTION: primary workflow must complete ---

test('core workflow: runs to completion and produces output', async ({ page }) => {

await page.goto('/login')

await page.fill('[data-testid="email"]', process.env.PILOT_TEST_EMAIL!)

await page.fill('[data-testid="password"]', process.env.PILOT_TEST_PASSWORD!)

await page.click('[data-testid="submit"]')

await page.waitForURL('/dashboard')

// Trigger core action

await page.click('[data-testid="primary-action"]')

await page.waitForSelector('[data-testid="output-ready"]', { timeout: 60000 })

// Output must be present and non-empty

const output = await page.locator('[data-testid="output-container"]').textContent()

expect(output).toBeTruthy()

expect(output!.length).toBeGreaterThan(10)

expect(output).not.toMatch(/error|failed|undefined/i)

})

// --- OUTPUT QUALITY: saved state and configuration persist ---

test('pilot state: configured settings survive deploy', async ({ request }) => {

const res = await request.get('/api/workspace/settings', {

headers: { 'Authorization': `Bearer ${process.env.PILOT_API_TOKEN}` }

})

expect(res.status()).toBe(200)

const settings = await res.json()

expect(settings).toHaveProperty('configured')

expect(settings.configured).toBe(true)

// Pilot-specific configuration must not be reset by deploy

expect(settings.custom_fields).toBeDefined()

})This suite runs in under three minutes. It catches auth regressions, core workflow breaks, and configuration loss, the three bug categories that surface during pilot sessions and cost deals. Everything outside this path ships without a gate.

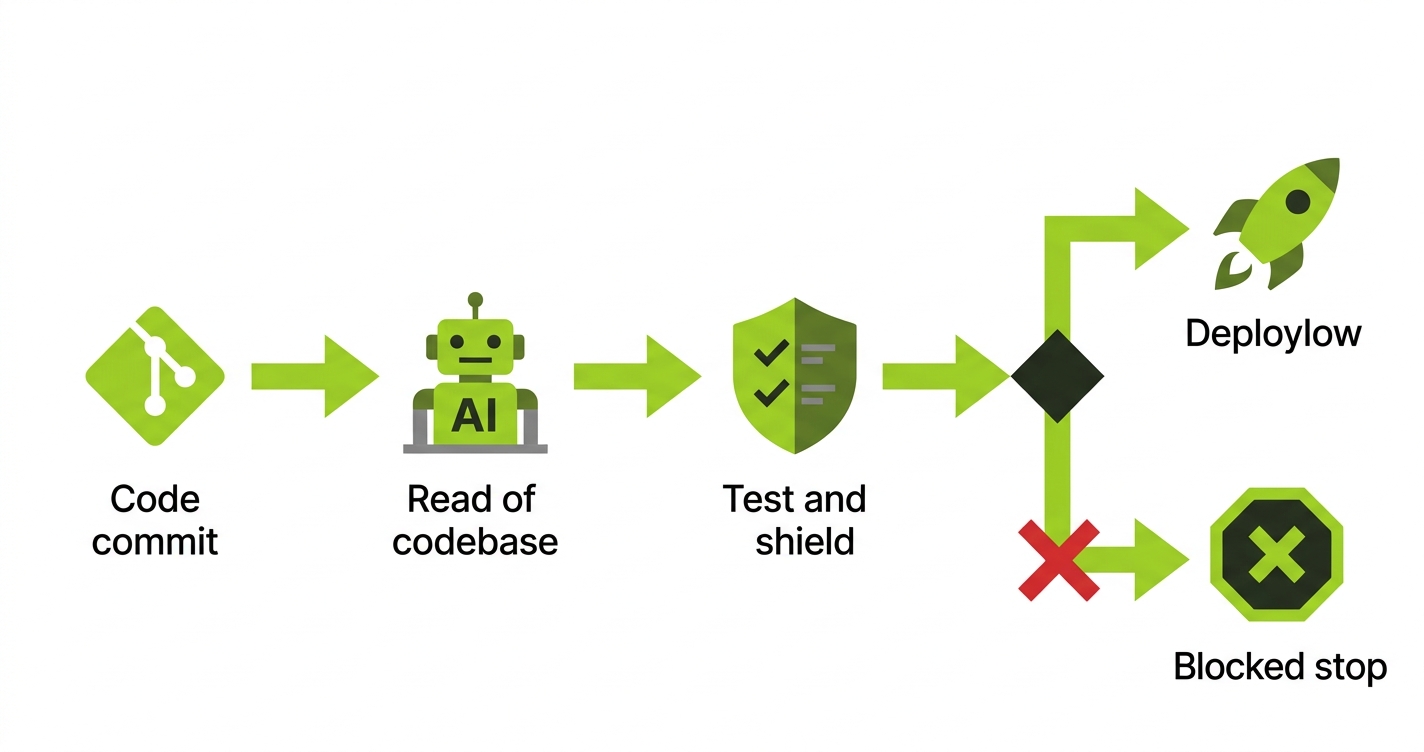

How Autonoma Protects Your Pilots in Weeks 8-12

Every engineering hour in weeks 8-12 goes to features. Those are the features that will convert your current pipeline into closed customers before Demo Day. There is no time left over to write tests, and there should not be.

Autonoma generates E2E critical path coverage directly from your codebase. There is no test code to write and nothing to maintain. You point it at the flows your active pilots walk: auth, core workflow, and output. It generates and runs those checks automatically on every deploy.

That means CI catches regressions before they reach production. A change you ship on Wednesday to win Prospect D does not break the pilot session Customer A has scheduled for Thursday. The deploy is gated. The session happens cleanly. The deal stays on track.

Setup takes a few hours, less time than writing a single Playwright test from scratch. You don't need a QA function, a dedicated testing sprint, or a separate tooling evaluation. It works against your actual routes and components, not a generic template.

The Techstars mentor network is your distribution advantage. Mentor intros create pipeline. Bugs in active pilots travel back through that same network. Autonoma keeps the product clean on the paths that matter so the network keeps working in your favor, all the way to Demo Day.

Running Multiple Pilots in the Techstars Cohort

Techstars cohorts have 10-30 companies depending on the program. Your peers are running the same customer acquisition sprint you are. That cohort dynamic is useful and creates a specific opportunity: other companies in your batch are potential customers or connectors to customers.

But it also creates a comparison risk. Investors and mentors talking to multiple companies in your batch will notice who has solid customer relationships and who is still working through pilot issues. Your operational quality during pilots is visible to the network in ways it would not be in a solo fundraise.

This makes the case for protecting your pilots even stronger. In a Techstars cohort, your customer relationships are partially public. A mentor who hears from one of their portfolio companies that your product had issues during the pilot is a mentor who factors that into how they position you to investors when Demo Day arrives.

The cohort mentality that makes Techstars valuable also means that operational discipline during pilots is a competitive signal, not just an operational nicety.

Pilot Tracking During the Techstars Sprint

Managing multiple pilots in the 13-week timeline requires a minimum viable operational system. Not a CRM. Not a project management tool. One document reviewed weekly, with these four columns:

| Column | What to capture | Alert threshold |

|---|---|---|

| Pilot stage | Onboarded / First session / Active eval / Decision | Any pilot in first session: no deploys to their path |

| Last signal date | Date of last customer interaction | Silent 5+ days: send a nudge |

| Critical path | Specific screens or features they use | Changed in a deploy: gate with automated test |

| Close deadline | Latest date they can decide and still count for Demo Day | Within 5 days: escalate internally to close |

This document takes ten minutes to maintain and prevents the situation where you discover, in week 11, that a pilot you thought was on track stalled three weeks ago and nobody noticed.

The Investor Angle: Reference Customers in Techstars Diligence

Techstars investors and the mentor network make warm intros to their LPs and co-investors during and after Demo Day. When a lead investor is doing diligence on a company that came out of Techstars, they will call two or three people in the Techstars network who know the company.

Those calls often include one of your pilot customers, especially if the customer came from a mentor intro. The mentor who made the intro knows both parties. The investor calls the mentor. The mentor gives their read. If the pilot went well, the mentor says "the team is sharp, the product works, the customer is happy." If the pilot had issues, the mentor says "promising team but had some early product issues." That framing, delivered by a trusted network node, shapes the investor's price and terms.

Your pilot quality is not just a customer success metric. It is a fundraising input that runs through the Techstars network itself. The founders who understand this protect their pilots not because they've been told to care about quality, but because they've understood how the Techstars investor network actually processes information.

What to Do This Week

If you're in a Techstars program with active pilots or pilot conversations in progress, here is the minimum viable action this week.

List every company in a pilot or about to start one. Map the path each of them walks: entry point, core action, output they evaluate. Write one end-to-end test per pilot covering that path. Get those tests running in your CI before deployment merges reach main.

This is one to two days of engineering work. If you're using Autonoma, it is a few hours of setup and the coverage generates from your codebase. Either way, the investment is small relative to the cost of a mid-pilot incident in week 10 that delays a close past Demo Day.

The network is everything, Techstars says. Keep it working for you by making sure the products your network introduces to customers actually run cleanly when those customers use them.

Frequently Asked Questions

The Techstars customer timeline runs on mentor intros. Mentors make introductions to potential customers in weeks 4-8; those conversations convert to pilots in weeks 8-10. To close a pilot before Demo Day (week 13), it needs to start by week 9 and run cleanly for three to four weeks. The constraint is not getting the intro: mentors provide those. The constraint is pilot quality. Pilots that have bugs surface during evaluation close slow or stall. Pilots that run cleanly close on schedule. Automated coverage of the paths your pilot customers walk is the operational practice that determines which outcome you get.

Mentor intros create a three-party relationship: you, the customer, and the mentor. When something goes wrong during a pilot, feedback travels back to the mentor informally. A mentor who hears from three of their intro recipients that your product had issues will make fewer intros and frame future ones differently. This does not mean mentors stop supporting you: it means the quality of your pilot experiences directly affects the quality and volume of future intros. Protecting pilots from bugs protects your access to the pipeline.

The stability window is the period after a pilot customer is onboarded but before they complete their first independent session. During this window, their first impression is still being formed. A bug encountered during their first solo session has disproportionate weight compared to a bug encountered in week three of an established pilot. The discipline is simple: when you onboard a pilot customer, avoid deploying to production for 48-72 hours unless you have a critical fix. In a 13-week program, this costs you nothing and prevents first-session incidents that add weeks to close cycles.

Separate the shipping surface from the pilot surface. Your pilots walk a narrow path through your product. Automated tests covering that path run before every deployment. When a change you're making to win a new prospect breaks something in an existing pilot's path, the test fails in CI before the change reaches production. You fix it or roll back. The existing pilot's session happens cleanly. The new prospect's feature ships cleanly too, once you've addressed the regression. The gate is surgical, not comprehensive: it slows down nothing except the specific changes that break active pilots.

Keep a simple document with four fields per pilot: current stage (onboarded, first session complete, in active evaluation, at decision), date of last customer interaction, the critical path they walk in your product, and the date they need to reach a decision to close before Demo Day. Review it weekly. Pilots that go silent for five days need a nudge. Pilots whose critical path is affected by an upcoming deployment need advance warning and confirmation that the change won't disrupt their session. This is ten minutes per week and prevents the situation where you discover a stalled pilot in week 11.

Yes. Autonoma analyzes your codebase to understand your application's structure and generates E2E test coverage for the paths you specify as critical. For a Techstars company with no existing tests, you describe which flows your pilot customers walk, and Autonoma generates and runs coverage for those flows before every deployment. There is no test code to write or maintain. The coverage runs in CI and fails the deployment if the pilot path breaks. For teams where every engineering hour counts, this removes the test maintenance burden while still protecting the outcomes that matter.