Startupbootcamp's corporate partner network puts your product in front of banks, hospitals, and logistics companies. These customers have internal approval processes that restart from zero if something breaks during a pilot. One bug can cost you four weeks of sales cycle, not just one deal. The protection is automated test coverage on the exact flow your regulated-industry customer approved. Not the whole product. That specific path.

Startupbootcamp's industry-specific programs are built around a key insight: regulated industries have procurement problems that generalist accelerators can't solve. A FinTech startup doesn't need introductions to generic VCs. It needs introductions to compliance officers at tier-two banks who can approve a pilot without a six-month procurement cycle. Startupbootcamp's corporate partner network provides exactly that.

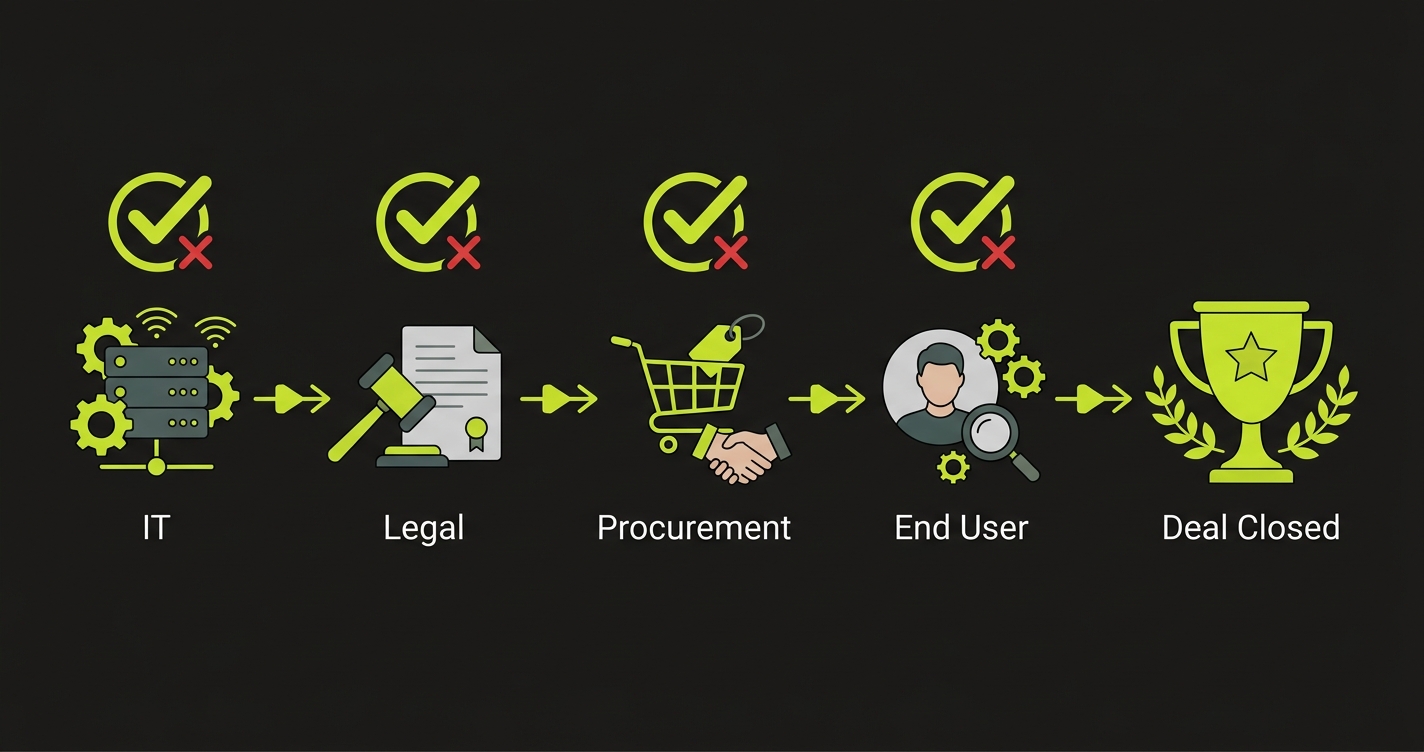

The implication for founders is that your pilot customers are not typical startup customers. They are legal, compliance, and procurement teams at banks. They are clinical informatics departments at hospital networks. They are vendor management teams at logistics companies. These customers evaluate your product differently, they approve it through a different process, and they react differently when something breaks.

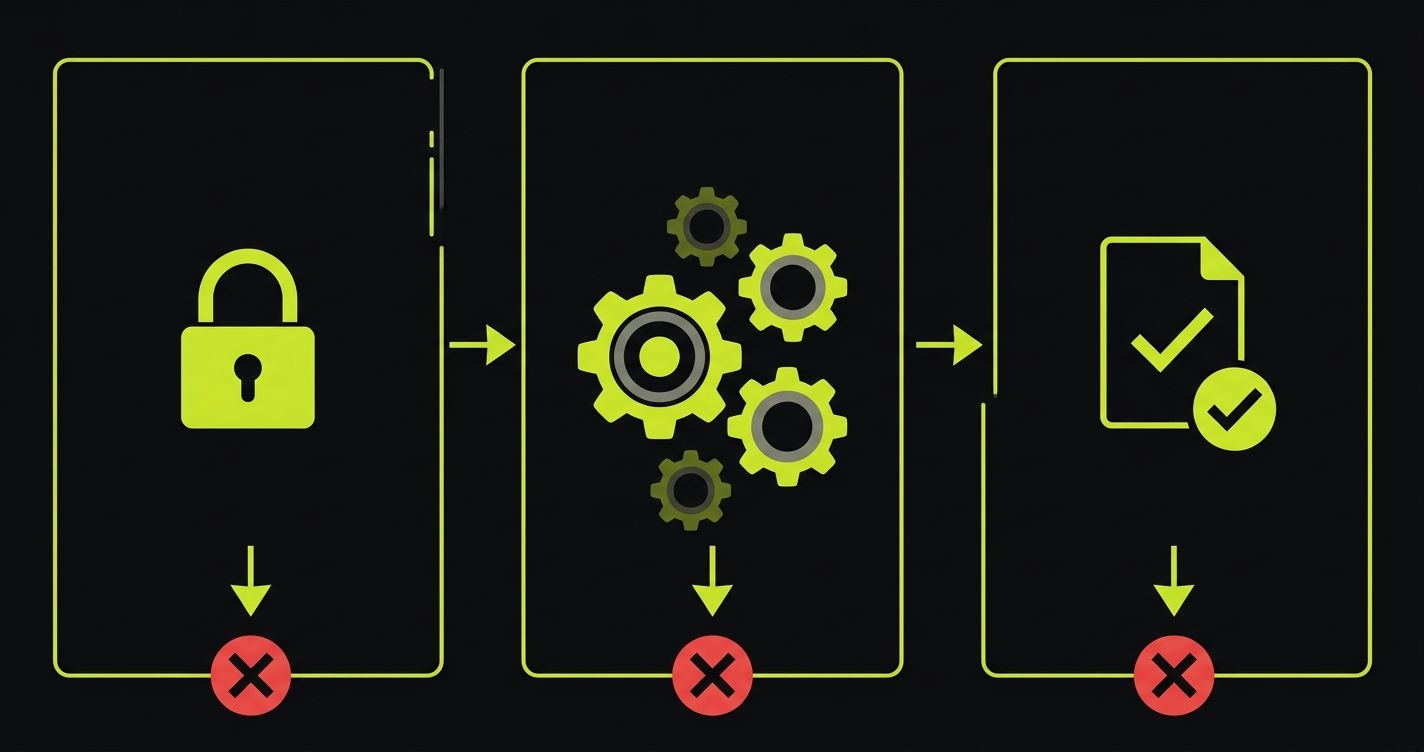

In a consumer startup, a bug means a user churns. In a regulated-industry pilot through Startupbootcamp's corporate network, a bug means the internal champion who vouched for you has to explain to their compliance committee why they approved a vendor whose product failed. That conversation goes badly, and the approval process restarts. Not resumes. Restarts.

The Three-Month Program Timeline and Where the Risk Concentrates

Startupbootcamp programs run for three months, with Demo Day at the end. Corporate partner introductions typically happen in weeks three through six, which puts your pilot window at weeks seven through eleven, and only if you close the pilot quickly after the introduction.

That leaves four weeks of active pilot before Demo Day. Four weeks is not a lot of runway to prove value to a regulated-industry customer, especially when their first week involves internal onboarding and IT security review.

| Program Phase | Weeks | What's Happening | Risk Level |
|---|---|---|---|
| Cohort onboarding | 1-2 | Team sync, mentors, pitch prep | Low |
| Corporate introductions | 3-6 | Meetings, scoping, approvals | Medium |
| Pilot activation | 7-8 | IT review, data access, first sessions | High |
| Active pilot | 9-11 | Real usage, feedback cycles | Very High |
| Demo Day prep | 11-12 | Narrative polish, reference prep | Critical |

The very-high-risk period in weeks nine through eleven is when your team is doing three things at once: fixing bugs the pilot customer surfaced in weeks seven and eight, shipping improvements based on their feedback, and preparing the Demo Day narrative. That's three different contexts competing for the same engineering bandwidth, and the one that gets deprioritized under deadline pressure is usually "making sure the fixes didn't break something else."

That's the regression that costs you the pilot.

Why Regulated-Industry Bugs Are Categorically Different

A standard B2B SaaS bug has a standard recovery arc. The customer reports the issue, you fix it, and you send an apology and a credit. The customer is annoyed but stays. Their internal investment in your product (the configured workflows, the onboarded users, the internal advocacy) keeps them in the deal.

A regulated-industry bug does not have a standard recovery arc. When something breaks in a bank's pilot deployment, the sequence that follows is:

- The internal champion reports it to their IT team.

- The IT team flags it to their vendor risk management process.

- Vendor risk management reviews whether the incident constitutes a compliance event.

- If it does, the pilot is paused pending a formal review.

- The formal review requires you to submit a root cause analysis and a remediation plan.

- The approval committee reconvenes, which may take four to six weeks given their meeting schedule.

None of this is the customer being difficult. It's their legal obligation. You signed a pilot agreement with them that had terms about product stability and incident response. A bug, especially one affecting data display or access control, may trigger those terms automatically.

The practical consequence for Startupbootcamp founders is that a bug in week nine of a twelve-week program can result in a paused pilot that does not resume before Demo Day. You show up to Demo Day with a pilot that's technically active but not producing usage data, which is significantly worse than a smaller pilot that's working well.

The "Protect the Deal" Framework for Enterprise Pilots

The protect-the-deal framework for a Startupbootcamp pilot has a tighter scope than a typical startup pilot. Your corporate partner customer approved a specific workflow for a specific use case. That approval was written down. Their internal documentation describes what the product does and what their users will do with it.

That document is your test specification.

Map the exact workflow that was approved:

Authentication with their identity provider. Enterprise customers rarely use password-based login. They use SSO, SAML, or OAuth through their company identity provider. If your SAML integration breaks after a deploy, the entire pilot is offline because none of their users can log in. This is the most common enterprise-specific failure mode, and it's almost always caused by a change that touched your auth middleware for an unrelated reason.

The approved core action. This is whatever workflow they approved. Data ingestion, report generation, transaction processing. The approved workflow is specific, and it's the only thing their users will do in the first two months of the pilot. Cover it completely.

The output or result they care about. In regulated industries, the output often has compliance implications. A financial report that rounds numbers incorrectly is not just a display bug. It's a regulatory reporting problem. A patient summary that omits a field is not just a UI issue. It might be a clinical documentation issue. The output quality test must assert the actual values, not just the presence of the element.

What the Test Coverage Looks Like for a FinTech Pilot

Concrete example: a FinTech startup running a pilot with a corporate bank through Startupbootcamp's FinTech program. The approved workflow is SAML login, transaction data ingestion via CSV upload, and compliance report generation.

```typescript
import { test, expect } from '@playwright/test';

test('bank pilot: SAML login, CSV ingestion, compliance report', async ({ page }) => {
  // Critical moment 1: SSO authentication path
  // (Assumes test environment is configured with SAML bypass for automation,
  // and that baseURL is set in the Playwright config so relative URLs resolve)
  await page.goto('/auth/saml/initiate?tenant=pilot-bank');
  await page.fill('[name="username"]', process.env.PILOT_SSO_USER!);
  await page.fill('[name="password"]', process.env.PILOT_SSO_PASS!);
  await page.click('[type="submit"]');
  await expect(page).toHaveURL('/dashboard');
  await expect(page.locator('[data-testid="user-tenant"]')).toContainText('Pilot Bank');

  // Critical moment 2: core approved workflow
  await page.click('[data-testid="new-ingestion"]');
  const fileInput = page.locator('[data-testid="csv-upload"]');
  await fileInput.setInputFiles('./fixtures/pilot-bank-sample.csv');
  await page.click('[data-testid="process-ingestion"]');
  await expect(page.locator('[data-testid="ingestion-status"]')).toContainText('Processed', {
    timeout: 30000
  });
  // Require at least one non-zero digit. A substring check for '0' would
  // wrongly fail on valid counts such as 10 or 205.
  await expect(page.locator('[data-testid="records-count"]')).toHaveText(/[1-9]/);

  // Critical moment 3: compliant output quality
  await page.click('[data-testid="generate-report"]');
  await expect(page.locator('[data-testid="report-status"]')).toContainText('Ready', {
    timeout: 20000
  });

  // Assert report contains required regulatory fields
  await expect(page.locator('[data-testid="report-period"]')).toBeVisible();
  await expect(page.locator('[data-testid="report-total"]')).not.toContainText('NaN');
  await expect(page.locator('[data-testid="report-total"]')).not.toContainText('undefined');

  // Start waiting for the download before clicking so the event is not missed
  const downloadPromise = page.waitForEvent('download');
  await page.click('[data-testid="export-pdf"]');
  const download = await downloadPromise;
  expect(download.suggestedFilename()).toMatch(/compliance-report.*\.pdf$/);
});
```

The specific assertions on the report output (`not.toContainText('NaN')`, `not.toContainText('undefined')`) are not standard testing practice for consumer apps. For a compliance report that a bank's team will submit to regulators, those assertions are critical. A report showing "NaN" in a financial field is a compliance incident, not a display bug.

The 48-72 Hour Stability Window for Enterprise Customers

Enterprise customers schedule their usage. They don't open your product when they feel like it. They have standing weekly sessions, automated jobs that run at fixed times, and end-of-month reporting cycles. This predictability is actually useful: you can know exactly when your product needs to be stable.

For Startupbootcamp pilots specifically, weeks eleven and twelve often include scheduled pilot review meetings where the corporate partner's team presents its findings internally. Those meetings are where they decide whether to move to a paid contract, extend the pilot, or exit. You want the product working perfectly in the 72 hours before each meeting.

Get this information directly. Ask your pilot champion: "When is your team planning to run the next reporting cycle?" "When are you presenting your pilot findings internally?" Mark those dates in your calendar and establish a deploy freeze for 72 hours before each one.
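The freeze rule can be made mechanical so nobody has to remember it under deadline pressure. The sketch below is one possible way to express it, not a prescribed implementation: `isDeployFrozen`, its parameters, and the example date are hypothetical names and values chosen for illustration, and the 72-hour default simply mirrors the window described above.

```typescript
// Hypothetical helper: function name, parameters, and dates are illustrative.
const HOUR_MS = 60 * 60 * 1000;

/**
 * Returns true when `now` falls inside the freeze window that precedes
 * any customer-critical date (review meeting, reporting cycle).
 */
function isDeployFrozen(
  now: Date,
  criticalDates: Date[],
  freezeHours: number = 72,
): boolean {
  return criticalDates.some((date) => {
    const msUntilEvent = date.getTime() - now.getTime();
    // Frozen from `freezeHours` before the event up to the event itself.
    return msUntilEvent >= 0 && msUntilEvent <= freezeHours * HOUR_MS;
  });
}

// Example: the dates your pilot champion gave you (made up here).
const pilotReviewMeeting = new Date("2025-06-12T14:00:00Z");
const frozenNow = isDeployFrozen(new Date("2025-06-10T09:00:00Z"), [pilotReviewMeeting]);
```

A deploy script or CI step could call a check like this first and refuse to proceed when it returns true, which turns the calendar entry into an enforced gate.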

The pattern that works:

- Week 11, Monday: last allowed production deploy.

- Week 11, Thursday onward: no deploys. All changes go to staging only.

- Demo Day: product is in the exact state it was in on Monday, two weeks of stability behind it.

If a critical bug surfaces during the freeze window, you have a choice: deploy the fix and accept the risk of a new regression, or manually verify the specific broken flow and communicate the issue to the pilot customer proactively. In most cases, proactive communication to the corporate partner ("we identified an issue and here is what we're doing about it") is better received than a silent deploy that might introduce a new problem.

The Demo Day Investor Narrative

Demo Day at Startupbootcamp is not just about the product. It's about the corporate partner validation. When a bank or hospital or logistics company shows up to Startupbootcamp Demo Day as a pilot customer, investors interpret that signal differently than a typical early customer. Corporate partners in regulated industries don't pilot vendors casually. Their presence in your Demo Day narrative is evidence of technical credibility and compliance readiness.

But that validation only holds if the pilot is genuinely active and working. Investors in the room know the program structure. They know that corporate partner pilots are part of the Startupbootcamp model. The question they ask is not "do you have a corporate pilot?" but "how is the pilot going, specifically?"

The answer needs to be specific and confident. "They're running weekly sessions, we've processed X transactions through the platform, and they've scheduled their pilot review for next month." That answer requires a pilot that's actually running. A pilot that had a bug incident two weeks ago and is currently paused pending vendor review does not produce that answer.

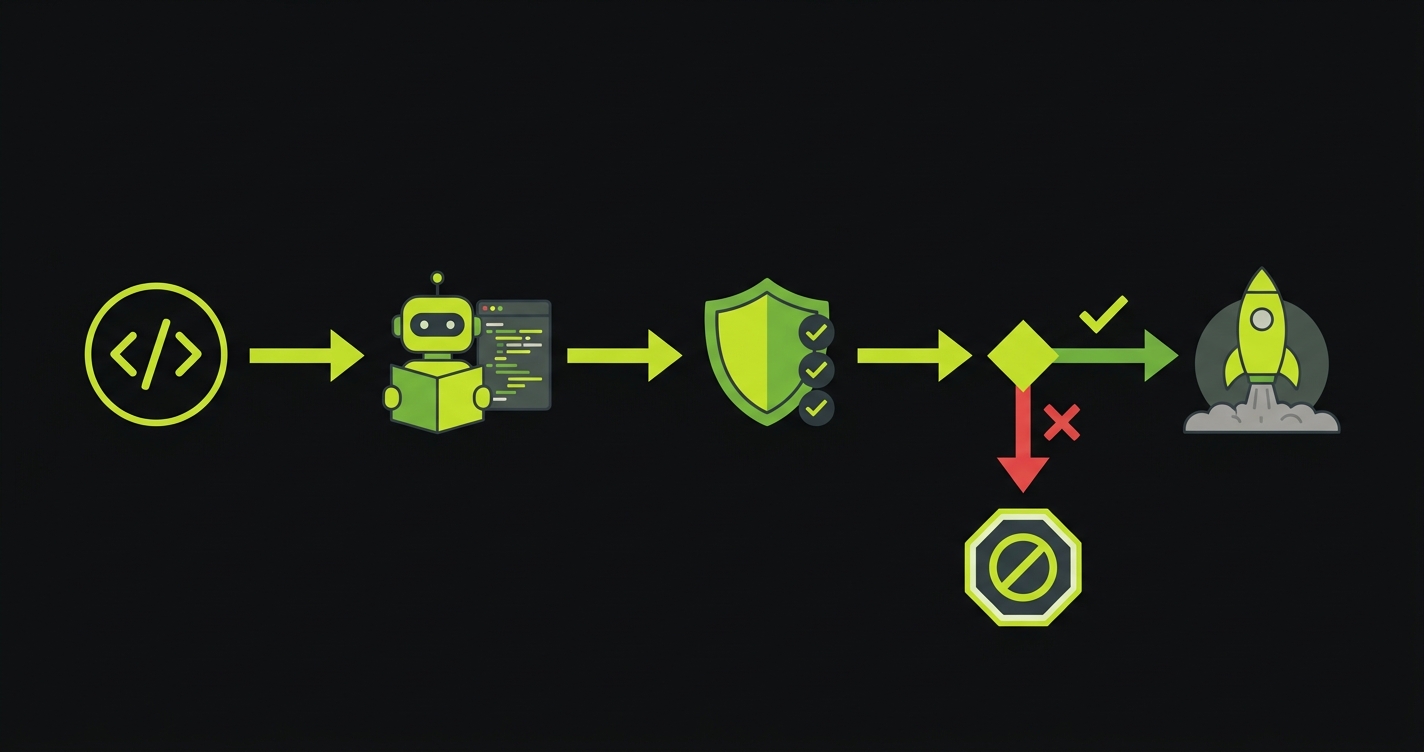

How Autonoma Fits the Startupbootcamp Context

The specific challenge for Startupbootcamp founders is the combination of enterprise compliance requirements and a small engineering team. Enterprise pilots need more test coverage than consumer pilots because the failure modes are more severe. But Startupbootcamp-stage teams rarely have dedicated QA resources.

Autonoma generates E2E test coverage from your codebase without requiring your engineers to write test code. For an enterprise pilot where the critical path is well-defined (the approved workflow is documented), Autonoma can cover that path completely. The generated tests run in CI, block deploys that would break the pilot, and stay current as the product evolves.

The specific coverage that matters for a regulated-industry pilot: SSO/SAML authentication, the approved data workflow, and the output validation assertions. Autonoma generates all three from your existing routes and component structure.

The Practical Approach for the Last Four Weeks

With four weeks of active pilot before Demo Day, here is the sequence that protects the deal:

Week nine: run Autonoma or write manual Playwright tests on your pilot's approved path. Get them into CI. Do this at the start of the week, before anything else.

Week nine, continued: audit every open PR and pending feature. For anything that touches auth, the core workflow, or the output layer, require the pilot path test suite to pass before merge. This is a conversation with your co-founder, not a policy document. "We can't merge anything that breaks the bank pilot" is the rule.
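If you want the merge rule enforced rather than remembered, a small check on the changed files is one option. This is a minimal sketch under stated assumptions: the directory prefixes are hypothetical stand-ins for wherever your auth, core workflow, and output code live, and `requiresPilotSuite` is an illustrative name, not part of any tool mentioned here.

```typescript
// Hypothetical path prefixes; substitute the directories in your own repo.
const PILOT_CRITICAL_PREFIXES: string[] = [
  "src/auth/",      // SSO / SAML middleware
  "src/ingestion/", // the approved core workflow
  "src/reports/",   // the output layer
];

/**
 * Returns true when any changed file lives under a pilot-critical path,
 * meaning the pilot path test suite must pass before merge.
 */
function requiresPilotSuite(
  changedFiles: string[],
  prefixes: string[] = PILOT_CRITICAL_PREFIXES,
): boolean {
  return changedFiles.some((file) =>
    prefixes.some((prefix) => file.indexOf(prefix) === 0),
  );
}
```

For a very small team, running the pilot suite on every PR is simpler and arguably safer; a path filter like this mainly helps once the suite is slow enough that people are tempted to skip it.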

Week ten: schedule a check-in call with your pilot champion. Ask them for their internal review meeting date. Get it on your calendar as a deploy freeze start.

Week eleven: establish the freeze. Last deploy goes out on Monday or Tuesday. Communicate to your team that the product is locked until after Demo Day.

Week twelve (Demo Day): show up with two weeks of stable pilot data, a prepared answer for "how is the pilot going," and the pilot customer's internal champion briefed on what to say if investors call.

Prioritize communication over silent fixes. Tell your pilot champion immediately: "We identified an issue with X. We're deploying a fix now and monitoring closely." Regulated-industry customers respond better to proactive communication than to discovering issues themselves. If the fix is small and well-isolated, deploy it with a rollback plan ready. If it's complex, communicate the workaround and schedule the fix for after Demo Day. A known issue with a workaround is much better than an unknown issue discovered during a weekly session.

Set up a test environment configuration that uses a mock SAML identity provider, or configure your staging environment to accept a specific test credential that bypasses the corporate IdP. Many enterprise SSO implementations have a development bypass mechanism. Store the test credentials in environment variables and never commit them to the repo. The test should exercise the full SSO flow, including the redirect and the assertion (or token) exchange, not just the post-SSO authenticated state.
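One small safeguard for credentials kept in environment variables is to fail loudly when one is missing, rather than letting an `undefined` flow into the login form. The sketch below assumes the two variable names used in the Playwright example (`PILOT_SSO_USER`, `PILOT_SSO_PASS`); the helper names `requireEnv` and `loadSsoCredentials` are hypothetical, and the environment is passed in as a plain object so the helper stays easy to test.

```typescript
type Env = Record<string, string | undefined>;

/** Returns the variable's value, or throws a clear error if it is unset or blank. */
function requireEnv(env: Env, name: string): string {
  const value = env[name];
  if (value === undefined || value.trim() === "") {
    throw new Error("Missing required environment variable " + name);
  }
  return value;
}

/** Loads the SSO test credentials; in a test file you would pass process.env. */
function loadSsoCredentials(env: Env): { username: string; password: string } {
  return {
    username: requireEnv(env, "PILOT_SSO_USER"),
    password: requireEnv(env, "PILOT_SSO_PASS"),
  };
}
```

Calling `loadSsoCredentials(process.env)` at the top of the test makes a misconfigured CI job fail with a readable message instead of a confusing login timeout.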

Create a dedicated test tenant or test account that mirrors the pilot customer's configuration but uses synthetic test data. Run your automated tests against that test tenant, not the customer's actual data. This gives you realistic coverage of the pilot path without risk of corrupting customer data or triggering their compliance monitoring. Name the test account something obvious like 'qa-pilot-[customername]' so it's clearly not a production account.
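The naming convention also gives you something to enforce in code. As a rough sketch, assuming tenant identifiers follow the `qa-pilot-<customername>` pattern suggested above, a guard like the hypothetical `assertTestTenant` below could run before any automated test touches a tenant.

```typescript
// Assumed convention from the text: qa-pilot-<customername>, lowercase.
const QA_TENANT_PATTERN = /^qa-pilot-[a-z0-9-]+$/;

/**
 * Returns the tenant id unchanged if it looks like a QA tenant,
 * and throws otherwise so tests never run against customer data.
 */
function assertTestTenant(tenantId: string): string {
  if (!QA_TENANT_PATTERN.test(tenantId)) {
    throw new Error(
      'Refusing to run automated tests against non-QA tenant "' + tenantId + '"',
    );
  }
  return tenantId;
}
```

Wrapping the tenant identifier in this call wherever the test suite builds a URL means a copy-pasted production tenant name stops the run immediately.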

Feature flags. Every new feature goes behind a flag that is off by default for the pilot tenant. Deploy the feature to production with the flag off. Run your pilot path test suite. Confirm everything still passes. Only then enable the flag for the pilot tenant, during a window when you can monitor actively. The approved workflow must be unaffected by features the pilot hasn't opted into. This is especially important in regulated industries where the pilot scope was explicitly defined in a scoping document.
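The "off by default for the pilot tenant" rule is easy to get backwards when a flag has a global default. Here is a minimal sketch of the evaluation order, assuming a hand-rolled flag structure rather than any particular flag service; `FeatureFlag`, `PILOT_TENANTS`, and the tenant id `pilot-bank` are illustrative.

```typescript
type FeatureFlag = {
  enabledByDefault: boolean; // state for ordinary tenants
  enabledFor: string[];      // tenants that have explicitly opted in
};

// Hypothetical list of tenants running an approved pilot.
const PILOT_TENANTS: string[] = ["pilot-bank"];

function isFeatureEnabled(
  flag: FeatureFlag,
  tenantId: string,
  pilotTenants: string[] = PILOT_TENANTS,
): boolean {
  // 1. An explicit opt-in always wins.
  if (flag.enabledFor.indexOf(tenantId) !== -1) return true;
  // 2. Pilot tenants never inherit the global default.
  if (pilotTenants.indexOf(tenantId) !== -1) return false;
  // 3. Everyone else gets the default.
  return flag.enabledByDefault;
}
```

The design choice worth noting is step 2: flipping a flag's default to "on" for the rest of your customers cannot change what the pilot tenant sees, so the approved workflow stays inside its documented scope until you deliberately add the tenant to `enabledFor`.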

Identify the specific fields that have regulatory significance: totals, dates, reference numbers, category classifications. For each, write an assertion that checks the value is present, is not NaN or undefined, matches the expected format (use a regex for date formats or currency formats), and is within a plausible range if applicable. Don't assert exact values for dynamic data, but do assert structural integrity. A compliance report with a missing regulatory field is worse than a missing feature.
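Those four checks (present, no placeholder value, expected format, plausible range) can live in one reusable function. The following is a sketch only: the field names reuse the `data-testid` values from the Playwright example, while the date-range and currency formats and the numeric bounds are assumptions you would replace with whatever your report actually renders.

```typescript
type FieldRule = {
  name: string;
  format: RegExp; // expected textual format
  min?: number;   // plausible numeric range, if applicable
  max?: number;
};

// Hypothetical rules: formats and bounds are assumptions for illustration.
const periodRule: FieldRule = {
  name: "report-period",
  format: /^\d{4}-\d{2}-\d{2} to \d{4}-\d{2}-\d{2}$/,
};
const totalRule: FieldRule = {
  name: "report-total",
  format: /^\$\d{1,3}(,\d{3})*\.\d{2}$/,
  min: 0,
  max: 1e9,
};

/** Returns a list of problems with the field text; an empty list means it passed. */
function validateField(rule: FieldRule, raw: string | null | undefined): string[] {
  const errors: string[] = [];
  const text = raw == null ? "" : raw.trim();
  if (text === "") {
    return [rule.name + ": missing"];
  }
  if (/NaN|undefined|null/.test(text)) {
    errors.push(rule.name + ": contains a placeholder value");
  }
  if (!rule.format.test(text)) {
    errors.push(rule.name + ': unexpected format "' + text + '"');
  }
  if (rule.min !== undefined || rule.max !== undefined) {
    // Strip currency symbols and thousands separators before parsing.
    const value = parseFloat(text.replace(/[^0-9.\-]/g, ""));
    if (isNaN(value)) {
      errors.push(rule.name + ": not numeric");
    } else {
      if (rule.min !== undefined && value < rule.min) errors.push(rule.name + ": below plausible range");
      if (rule.max !== undefined && value > rule.max) errors.push(rule.name + ": above plausible range");
    }
  }
  return errors;
}
```

In a Playwright test you could read the element with `textContent()` and assert that `validateField(totalRule, text)` is an empty array, which puts every failed check into the test failure message at once.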

Three things set a corporate partner pilot apart from a typical startup pilot. First, the approval process: a corporate partner pilot went through internal review, which means a bug can restart that process rather than just causing a support ticket. Second, the usage pattern: enterprise users have scheduled, predictable usage rather than organic, ad-hoc usage, so you know exactly when the product needs to be stable. Third, the output expectations: regulated industries often use your product's output in their own compliance workflows, so data accuracy and formatting are functional requirements, not UI polish.