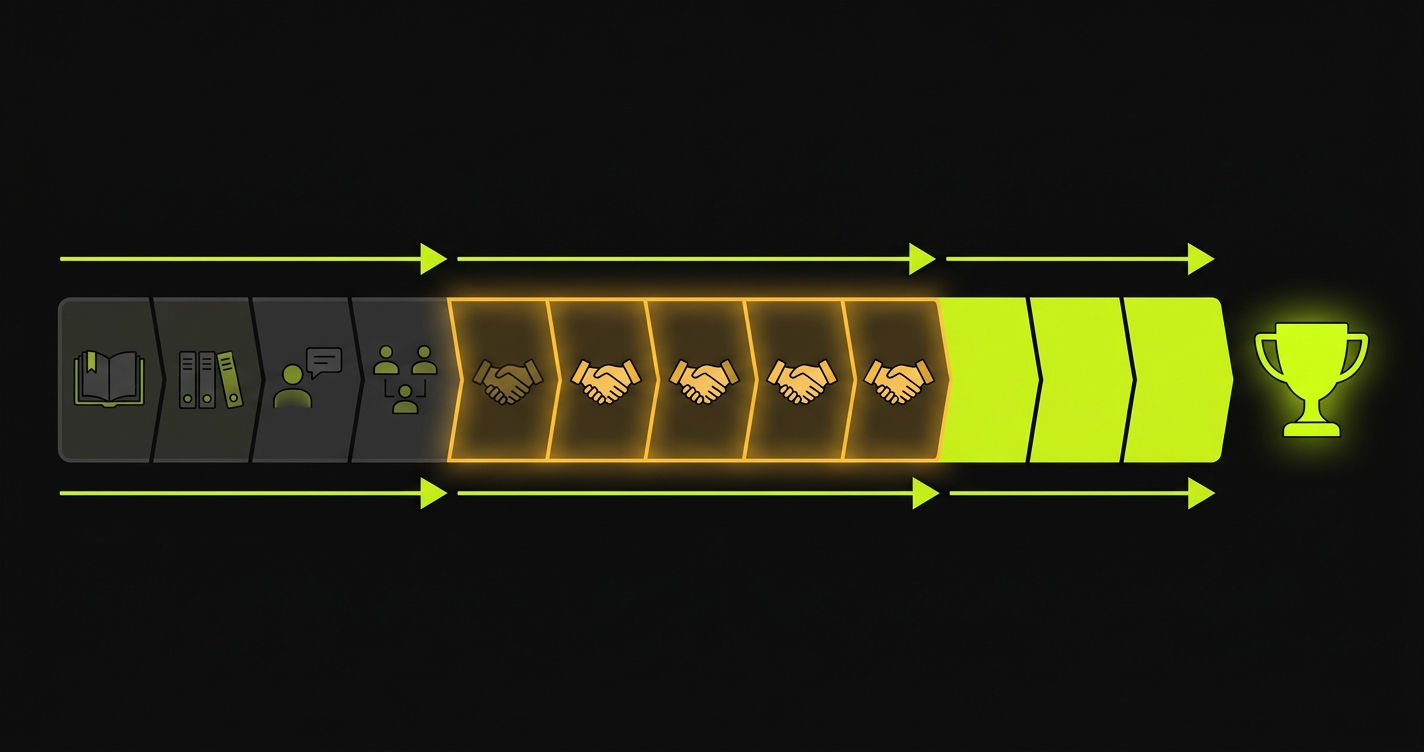

The EF customer problem: Entrepreneur First is a six-month cohort split into Form, Launch, and Raise phases. You spend the first six weeks finding a co-founder. Then you have roughly ten weeks to build a product, find customers, and get something investors will back at Investor Day. The product timeline is compressed by definition. The codebase is young. The co-founder relationship is new. And the customer sprint runs simultaneously with the highest shipping velocity of the program. A bug that surfaces during a pilot in the Launch or Raise phase does not just cost the deal. It can cost the raise.

Entrepreneur First runs in London, Singapore, Bangalore, Paris, and Berlin. The model is specific: join alone, find a co-founder during the Form phase, build and validate during Launch, raise during Raise. Investor Day falls partway through the Raise phase, toward the end of the six months.

The math that most EF participants only understand partway through the program: after six weeks of Form, you have roughly ten weeks (the eight-week Launch phase plus the start of Raise) to build something that works, find customers who will use it, and close enough of them to show investors at Investor Day that you have a real business. That is not a lot of time.

The teams that make it through EF with a raise underway by Investor Day have one thing in common: they started getting customer signals early in the Launch phase and kept those customer relationships healthy through the Raise phase. The teams that don't raise have often built good products but lost customers to product instability at exactly the wrong moment.

Therefore: at EF, the ability to close customers before Investor Day is not just about building the right product. It is about building the operational discipline to protect customer relationships during the fastest shipping period of the program.

The EF Phase Structure and What It Means for Customer Timing

EF's six-month cohort breaks into three phases that each create distinct operational constraints.

Form phase (weeks 1-6). You join as an individual. You meet dozens of potential co-founders. You evaluate ideas, skill overlaps, and working styles. By week six, you either have a co-founder or you leave the program. During Form, the product does not exist. You might be doing customer discovery, but you're not building.

Launch phase (weeks 7-14). You have a co-founder. You build the product. You get first customers. EF's expectation during Launch is that you are testing your idea in the market, getting evidence of traction, and refining your positioning. The first customer conversations happen in weeks 7-9. The first pilots start in weeks 9-11.

Raise phase (weeks 15-24). You are pitching investors and closing your round. Investor Day is mid-Raise phase. Your customer evidence needs to be solid by the time Investor Day arrives, because investors you meet at the event will do diligence before committing.

The critical window is Launch phase, weeks 9-14. This is when you have active pilots running and you're also shipping the fastest you ever will, building features that the pilots asked for, fixing issues, iterating on the product based on real user feedback. The bug risk is highest here. The deal risk is highest here. The two are connected.

Why the Co-founder Timeline Creates Technical Debt at Exactly the Wrong Moment

The Form phase creates a specific technical situation that affects the Launch phase customer sprint. When you form a co-founder team in week four or five and start building in week six, you build fast. Very fast. The codebase that gets you through the first customer demos in week nine or ten is built in three to four weeks, by two people who are still calibrating their working styles.

That codebase is not production-grade. It is demo-grade. It handles the happy path you practiced for the demo. It does not robustly handle the configurations that different customers will set up. It does not handle error states well. It does not have retry logic or graceful degradation. It is the code you write when you need to get to a demo in three weeks.

The problem: your pilot customers use that codebase not in demo conditions but in real-world conditions. They configure the product in ways your demo never covered. They run it at odd hours when background processes are slow. They use it with data that has edge cases your demo data did not include.

The bugs that emerge from this gap between demo-grade and production-grade code are not random. They cluster around state management, edge case input handling, and integration reliability. These are also the bugs that are hardest to catch without automated checks, because they don't show up when you test the happy path manually.

Automated coverage of the pilot path catches these bugs before they reach the customer. Not because the tests are comprehensive, but because the tests run the real path, with real-ish data, every time you deploy. That is the gap between "it worked in the demo" and "it works for the pilot customer."
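One way to get "real-ish data" into those checks is to derive edge-case variants from a known-good input and run the same pilot path against each. A minimal sketch: `WorkflowInput` and `edgeCaseVariants` are hypothetical, and the variants are illustrative examples of inputs demo data tends to skip (empty data, non-ASCII text, large inputs, missing optional fields), not an exhaustive list.

```typescript
// Hypothetical input for a pilot workflow; field names are illustrative.
interface WorkflowInput {
  name: string
  rows: string[]
  locale?: string
}

interface Variant {
  label: string
  input: WorkflowInput
}

// Derives edge-case variants from a known-good input so one end-to-end
// path can run against data that demo fixtures usually skip.
function edgeCaseVariants(base: WorkflowInput): Variant[] {
  return [
    { label: 'baseline', input: base },
    { label: 'empty rows', input: { ...base, rows: [] } },
    { label: 'non-ASCII name', input: { ...base, name: `${base.name} Zürich 東京` } },
    { label: 'large input', input: { ...base, rows: Array.from({ length: 5000 }, (_, i) => `row-${i}`) } },
    // Rebuild without the optional field rather than setting it to undefined
    { label: 'missing optional locale', input: { name: base.name, rows: base.rows } },
    { label: 'whitespace-only row', input: { ...base, rows: [...base.rows, '   '] } },
  ]
}

const variants = edgeCaseVariants({ name: 'Weekly report', rows: ['a', 'b'], locale: 'en-GB' })
console.log(variants.map((v) => v.label).join(' | '))
```

In a Playwright spec, looping over the variants and calling `test()` once per label registers one test per edge case, so a failure names the input that broke the path.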

The EF-Specific Risk: Building the Feature the Pilot Asked For Breaks the Pilot You Have

Here is the failure mode that hits EF teams during the Launch phase with consistent regularity.

You have two pilots. Pilot A started three weeks ago and is going well. They're evaluating the core workflow and are positive. Pilot B started this week. Pilot B asked, during the sales call, for a specific feature that would make the product much more useful for their team. It's a reasonable feature and you can build it in a few days.

You build the feature and ship it on Wednesday. On Thursday, Pilot A's champion logs in and finds that the output format from their saved workflow run has changed. Not broken, but different. The column order in the export changed, which breaks the downstream spreadsheet they were feeding the output into. They send you a message.

You fix it quickly. But they've already had to explain to their manager why the report they were preparing looks different from last week. The manager is now slightly skeptical about the reliability of this evaluation. The pilot that was going to close next week needs another two weeks of stable operation before the champion feels comfortable recommending it internally.

Two weeks is a long time in the EF Launch phase. A pilot that was due to close in week 13 and slips to week 15 is still in evaluation when you enter the Raise phase. Investors meeting you at Investor Day see "pilots in progress" instead of "customers signed." That framing affects terms.
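The slip arithmetic can be checked against the phase boundaries given earlier (Form weeks 1-6, Launch weeks 7-14, Raise weeks 15-24). A minimal sketch: `phaseOfWeek` and `pilotCloseWeek` are hypothetical helpers, and the week-9 start with a four-week evaluation is an illustrative assumption that fits the Pilot A story (started three weeks ago, due to close the following week).

```typescript
// Phase boundaries as described in this article; adjust for your cohort.
type Phase = 'Form' | 'Launch' | 'Raise'

function phaseOfWeek(week: number): Phase {
  if (!Number.isInteger(week) || week < 1 || week > 24) {
    throw new RangeError(`week must be an integer from 1 to 24, got ${week}`)
  }
  if (week <= 6) return 'Form'
  if (week <= 14) return 'Launch'
  return 'Raise'
}

// Program week in which a pilot closes: start week, plus evaluation length,
// plus any weeks added while the champion waits for stable operation.
function pilotCloseWeek(startWeek: number, evaluationWeeks: number, slipWeeks = 0): number {
  return startWeek + evaluationWeeks + slipWeeks
}

const onTime = pilotCloseWeek(9, 4)
const slipped = pilotCloseWeek(9, 4, 2)
console.log(onTime, phaseOfWeek(onTime)) // 13 Launch
console.log(slipped, phaseOfWeek(slipped)) // 15 Raise
```

Two weeks of slip is enough to move the close across the week-14 boundary.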

The "Protect the Deal" Framework for EF Teams

The EF version of pilot protection is specific to the overlap in weeks 9-14: you are doing customer development for new prospects while running pilots for customers you've already onboarded. Every feature you build for new prospects is a potential regression for existing pilots.

The framework: before any deployment reaches production, check that the paths your active pilots walk are still intact. This is not a full regression. It is a targeted check covering three things for each active pilot:

The entry path. Login, session initiation, whatever gets them into the product state they work in.

The core action. The workflow they run, the feature they're evaluating, the thing that generates the value they're assessing.

The output they use. The specific output, export, or display that they reference in their evaluation and share with their team internally.

These three checks run in CI before every merge to main. When a change for Pilot B breaks something in Pilot A's path, the check fails. You see it before the change reaches production. You fix the regression or hold the Pilot B feature. Either way, Pilot A's session on Thursday is clean.
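One lightweight way to keep the three checks honest is a manifest that maps each active pilot to the test covering each check, plus a CI step that fails while any slot is empty. This is a sketch under assumptions: the `PilotChecks` shape, the `coverageGaps` helper, and the test titles are hypothetical, not part of Playwright or any CI tool.

```typescript
// Hypothetical manifest: for each active pilot, the title of the test that
// covers each of the three checks. Titles below are illustrative.
interface PilotChecks {
  pilot: string
  entry?: string // login / session initiation
  coreAction?: string // the workflow they are evaluating
  output?: string // the export or display they share internally
}

type CheckName = 'entry' | 'coreAction' | 'output'
const REQUIRED_CHECKS: CheckName[] = ['entry', 'coreAction', 'output']

// Returns one message per missing check; an empty array means every
// active pilot has all three paths covered.
function coverageGaps(pilots: PilotChecks[]): string[] {
  const gaps: string[] = []
  for (const p of pilots) {
    for (const check of REQUIRED_CHECKS) {
      const title = p[check]
      if (!title || title.trim() === '') {
        gaps.push(`${p.pilot}: missing ${check} check`)
      }
    }
  }
  return gaps
}

const activePilots: PilotChecks[] = [
  { pilot: 'Pilot A', entry: 'auth: pilot A login', coreAction: 'workflow: pilot A saved run', output: 'export: pilot A columns' },
  { pilot: 'Pilot B', entry: 'auth: pilot B login', coreAction: 'workflow: pilot B requested feature' },
]

const missing = coverageGaps(activePilots)
if (missing.length > 0) console.error(missing.join('\n')) // Pilot B: missing output check
```

A CI script could call `coverageGaps` and exit non-zero when the list is non-empty, which turns "add a test for the new pilot" into a blocking step rather than a good intention.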

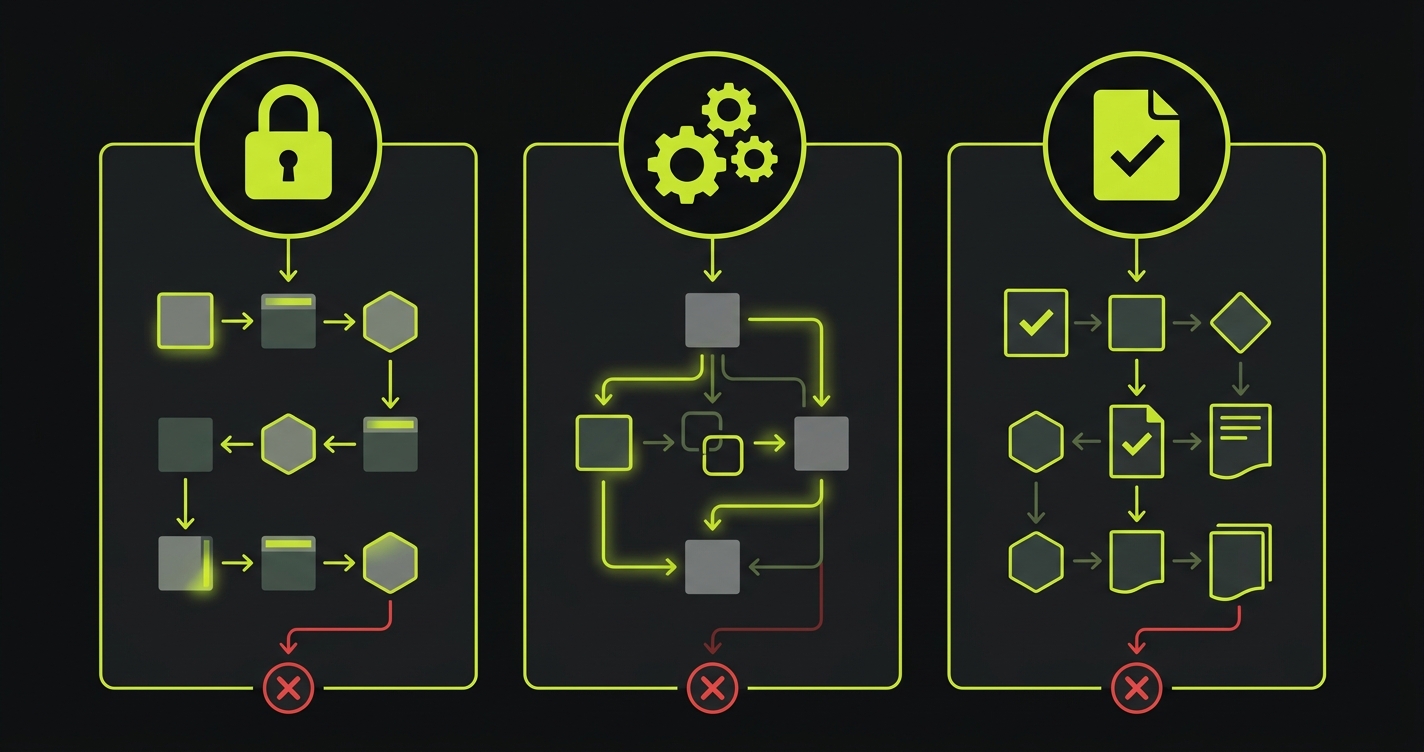

The Three Moments That Cannot Break at EF

For every active pilot in the EF Launch phase, three moments are non-negotiable.

Login and session state. Early EF codebases tend to implement session management quickly rather than robustly. When you add a new auth method, update a JWT library, or change a session duration setting to accommodate a new customer's security requirements, you risk breaking existing sessions. Cover all auth paths that active pilots use before every deployment.

Core workflow execution. Whatever your product does at its core must complete and produce the correct output. For AI companies building in the EF cohort, this means the generation or processing step. For data companies, the transform or analysis. For workflow companies, the primary workflow execution. Test the full path end-to-end, not just the API endpoint in isolation.

Output format consistency. This is the one that specifically hits teams building fast. The output format, the exact structure and content of what your product generates, is something pilot customers configure their downstream workflows around. When you change the output format to support a new feature, you break the downstream workflows of existing customers. Cover the output format with explicit assertions: field presence, data types, approximate content shape.
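For the first of these moments, an end-to-end login test catches a broken session by exercising it. A complementary pre-deploy guard, sketched here under assumptions, is to diff the session settings a change touches and flag the ones known to invalidate existing sessions: a renamed cookie, a dropped signing key, a shorter maximum age, or a changed signing algorithm. `SessionConfig` and `sessionBreakingChanges` are hypothetical; map them onto however your codebase actually stores these settings.

```typescript
// Hypothetical session settings kept in one config object.
// Field names are illustrative, not taken from any auth library.
interface SessionConfig {
  cookieName: string
  acceptedKeyIds: string[] // signing keys accepted when verifying sessions
  maxAgeHours: number
  algorithm: string // e.g. 'HS256'
}

// Lists known ways a config change can reject sessions that pilot customers
// already hold. An empty list is not proof of safety; the end-to-end auth
// test is still the check that counts.
function sessionBreakingChanges(current: SessionConfig, proposed: SessionConfig): string[] {
  const reasons: string[] = []
  if (current.cookieName !== proposed.cookieName) {
    reasons.push(`cookie renamed from ${current.cookieName} to ${proposed.cookieName}: existing cookies are ignored`)
  }
  for (const keyId of current.acceptedKeyIds) {
    if (!proposed.acceptedKeyIds.includes(keyId)) {
      reasons.push(`key ${keyId} dropped: sessions signed with it fail verification`)
    }
  }
  if (proposed.maxAgeHours < current.maxAgeHours) {
    reasons.push(`max age cut from ${current.maxAgeHours}h to ${proposed.maxAgeHours}h: older sessions may expire early`)
  }
  if (current.algorithm !== proposed.algorithm) {
    reasons.push(`algorithm changed from ${current.algorithm} to ${proposed.algorithm}: existing tokens may be rejected`)
  }
  return reasons
}

const currentConfig: SessionConfig = { cookieName: 'sid', acceptedKeyIds: ['k1'], maxAgeHours: 168, algorithm: 'HS256' }
// Rotating to a new key and shortening sessions for a new customer's security policy
const proposedConfig: SessionConfig = { cookieName: 'sid', acceptedKeyIds: ['k2'], maxAgeHours: 24, algorithm: 'HS256' }

for (const reason of sessionBreakingChanges(currentConfig, proposedConfig)) console.log(reason)
```

Accepting the new key alongside the old one for a while, before dropping the old one, is a common way to rotate keys without logging pilots out; this sketch reports that overlap as safe.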

```typescript
// EF Investor Day protection suite
// Designed for a team building fast with active pilots in Launch phase.
// Assumes baseURL is set in playwright.config.ts and that PILOT_EMAIL,
// PILOT_PASSWORD, and PILOT_API_TOKEN are set in the CI environment.
import { test, expect } from '@playwright/test'

// Auth: session state must survive library updates and config changes
test('auth: existing session state is valid after deploy', async ({ page }) => {
  // Log in the way a returning pilot customer would
  await page.goto('/login')
  await page.fill('[data-testid="email"]', process.env.PILOT_EMAIL!)
  await page.fill('[data-testid="password"]', process.env.PILOT_PASSWORD!)
  await page.click('[data-testid="submit"]')
  await expect(page).toHaveURL('/dashboard')

  // Confirm user context is loaded (not a blank or error dashboard)
  await expect(page.locator('[data-testid="user-display"]')).toBeVisible()
  await expect(page.locator('[data-testid="error-banner"]')).not.toBeVisible()
})

// Core workflow: runs and produces output with correct structure
test('core workflow: output structure matches expected format', async ({ page }) => {
  // The default test timeout is 30s, shorter than the 90s workflow wait below
  test.setTimeout(120_000)

  await page.goto('/login')
  await page.fill('[data-testid="email"]', process.env.PILOT_EMAIL!)
  await page.fill('[data-testid="password"]', process.env.PILOT_PASSWORD!)
  await page.click('[data-testid="submit"]')
  await page.waitForURL('/dashboard')

  await page.click('[data-testid="run-workflow"]')
  await page.waitForSelector('[data-testid="output-complete"]', { timeout: 90_000 })

  // Output must be present
  const output = page.locator('[data-testid="output-panel"]')
  await expect(output).toBeVisible()

  // Check output via API for structure validation.
  // page.request shares the logged-in browser context's cookies.
  const outputId = await page.locator('[data-testid="output-id"]').getAttribute('data-id')
  expect(outputId).toBeTruthy()
  const res = await page.request.get(`/api/outputs/${outputId}`)
  expect(res.ok()).toBe(true)
  const data = await res.json()

  // Structure assertions: these are the fields pilot customers build on top of
  expect(data).toHaveProperty('results')
  expect(Array.isArray(data.results)).toBe(true)
  expect(data.results.length).toBeGreaterThan(0)

  // Each result must have the expected shape
  const firstResult = data.results[0]
  expect(firstResult).toHaveProperty('id')
  expect(firstResult).toHaveProperty('value')
  expect(firstResult).toHaveProperty('timestamp')

  // Format stability: this is what breaks downstream customer workflows
  expect(typeof firstResult.value).toBe('string')
})

// Output persistence: previous runs must still be accessible
test('history: prior workflow runs are accessible', async ({ request }) => {
  const headers = { Authorization: `Bearer ${process.env.PILOT_API_TOKEN}` }

  // Check the status before parsing so a failure reports the HTTP code
  const res = await request.get('/api/runs', { headers })
  expect(res.status()).toBe(200)
  const runs = await res.json()
  expect(Array.isArray(runs.items)).toBe(true)
  expect(runs.items.length).toBeGreaterThan(0)

  // First run must be retrievable in full
  const runId = runs.items[0].id
  const runRes = await request.get(`/api/runs/${runId}`, { headers })
  expect(runRes.status()).toBe(200)
  const runData = await runRes.json()
  expect(runData.status).toBe('completed')
  expect(runData.output).toBeTruthy()
})
```

This suite focuses specifically on the failure modes that are common in early EF-stage codebases: auth session bugs, output structure changes, and history/persistence bugs. It runs in under five minutes and catches the categories of regressions that most often surface during pilot sessions.
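The suite above asserts field presence and types on the JSON output, but the failure in the Pilot A story was column order in an export. A sketch of a contract check that covers both, assuming a hypothetical per-pilot `OutputContract` (its fields mirror the `id`, `value`, and `timestamp` fields the suite asserts) and a plain comma-separated header row with no quoted commas:

```typescript
// Hypothetical per-pilot output contract: the fields their downstream
// workflow reads and the exact export column order they depend on.
type FieldType = 'string' | 'number' | 'boolean'

interface OutputContract {
  fields: Record<string, FieldType>
  exportColumns: string[]
}

function contractViolations(
  contract: OutputContract,
  result: Record<string, unknown>,
  csvHeader: string,
): string[] {
  const problems: string[] = []

  // Field presence and primitive type
  for (const [name, expectedType] of Object.entries(contract.fields)) {
    if (!(name in result)) {
      problems.push(`missing field "${name}"`)
    } else if (typeof result[name] !== expectedType) {
      problems.push(`field "${name}" is ${typeof result[name]}, expected ${expectedType}`)
    }
  }

  // Column order; naive split assumes no quoted commas in header cells
  const actual = csvHeader.split(',').map((c) => c.trim())
  const sameOrder =
    actual.length === contract.exportColumns.length &&
    actual.every((col, i) => col === contract.exportColumns[i])
  if (!sameOrder) {
    problems.push(`export columns changed: expected ${contract.exportColumns.join(',')}, got ${actual.join(',')}`)
  }
  return problems
}

const pilotAContract: OutputContract = {
  fields: { id: 'string', value: 'string', timestamp: 'string' },
  exportColumns: ['id', 'value', 'timestamp'],
}

// A feature change that swapped two export columns
const violations = contractViolations(
  pilotAContract,
  { id: 'r1', value: 'ok', timestamp: '2024-05-01T09:00:00Z' },
  'id,timestamp,value',
)
console.log(violations.join('\n'))
```

Pinning one contract per active pilot means a format change has to be made deliberately, ideally with a heads-up to that pilot's champion, rather than slipping out alongside another customer's feature.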

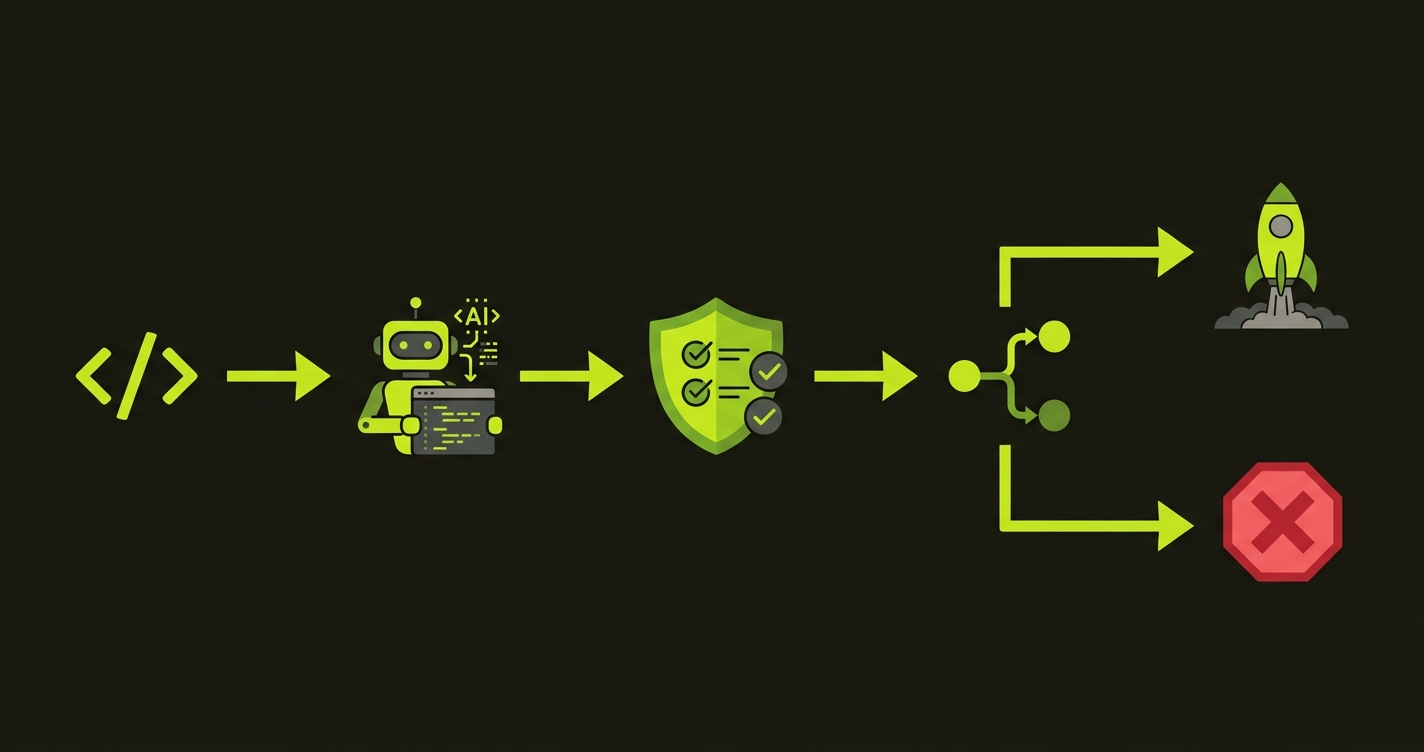

How Autonoma Fills the QA Gap in the EF Launch Phase

EF teams build a codebase in three to four weeks, then put it in front of real customers. Staging environments never fully replicate the configurations, data shapes, and session patterns those pilot customers bring. The edge cases that emerge in early pilot sessions are structural. They come from the gap between demo-grade code and real-world use, not from carelessness.

Autonoma reads your actual codebase (its routes, components, and API patterns) and generates E2E tests for the critical paths your pilots walk. It works with demo-grade code as well as production-grade. You do not write the tests. Autonoma generates them and they stay current as the codebase evolves, which matters when you're shipping daily during the Launch phase.

The CI gate is what closes the risk loop. Every deploy is checked against the paths your active pilots use. The regression introduced by a Tuesday afternoon feature for a new prospect does not surface Thursday during an existing pilot's session. It fails in CI before it ships, and you fix it or hold the deploy.

For a two-founder EF team, nobody has QA capacity. Both founders are building, selling, and managing pilots simultaneously. Autonoma replaces the QA function that the team cannot staff, without adding engineering overhead during the sprint.

The Launch phase is eight weeks. A pilot that stalls in week 11 because of a product regression may not recover in time for Investor Day. Autonoma keeps the pilots running while the team ships at full speed.

Co-founder Coordination: The Overlooked Part of Pilot Operations

EF teams are two people. In the Launch phase, both founders are doing everything: building, selling, managing pilots, running the company. The coordination surface is small, which is an advantage, but the communication overhead is not zero.

The specific coordination failure that hits EF pilots: one founder manages a customer relationship and knows what flow that customer uses and when their next session is. The other founder ships a change that touches that flow on the day before the session. Neither connected the dots.

The prevention: a shared document with one row per active pilot. Before any deployment, the shipping founder checks it. If a change touches a pilot's critical path and a session is within 48 hours, the deployment gets held until after the session.

| Field | Content | Pre-deploy check |
|---|---|---|
| Customer name | Company name | Reference |
| Critical path | Specific feature names they use | Does this deploy touch these? |
| Next session | Scheduled or expected date | Is it within 48 hours? |
| Stage | Onboarded / Active / Decision | First-session pilots get stability window |
| Coverage | Test name that covers their path | If blank: add test before next deploy |

This takes two minutes per deployment and prevents the most common co-founder coordination failure around pilot operations. It does not require a project management tool or a formal process. It requires a shared document and a habit.
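The rule is also small enough to script if you would rather have CI print the answer than read the document by eye. A sketch, assuming each row is exported as a typed record (the `PilotRow` shape mirrors the table columns but is otherwise hypothetical) and that the shipping founder lists the feature names a deploy touches:

```typescript
// One row of the shared pilot document. Shape is a hypothetical mirror
// of the table columns; feature names must match how you label the deploy.
interface PilotRow {
  customer: string
  criticalPath: string[] // feature names the pilot uses
  nextSession?: Date // scheduled or expected session
  stage: 'Onboarded' | 'Active' | 'Decision'
  coverage?: string // name of the test covering their path
}

interface PreDeployResult {
  hold: string[] // deploy touches their path and a session is within 48 hours
  addCoverage: string[] // no covering test yet: add one before the next deploy
}

const HOLD_WINDOW_MS = 48 * 60 * 60 * 1000

function preDeployCheck(pilots: PilotRow[], touchedFeatures: string[], now: Date): PreDeployResult {
  const touched = new Set(touchedFeatures)
  const result: PreDeployResult = { hold: [], addCoverage: [] }
  for (const pilot of pilots) {
    const touchesPath = pilot.criticalPath.some((feature) => touched.has(feature))
    const msUntilSession = pilot.nextSession ? pilot.nextSession.getTime() - now.getTime() : Infinity
    if (touchesPath && msUntilSession >= 0 && msUntilSession <= HOLD_WINDOW_MS) {
      result.hold.push(pilot.customer)
    }
    if (!pilot.coverage) {
      result.addCoverage.push(pilot.customer)
    }
  }
  return result
}

const now = new Date('2024-06-03T09:00:00Z')
const pilots: PilotRow[] = [
  { customer: 'Pilot A', criticalPath: ['saved-workflow', 'csv-export'], nextSession: new Date('2024-06-04T15:00:00Z'), stage: 'Active', coverage: 'export: pilot A columns' },
  { customer: 'Pilot B', criticalPath: ['dashboard'], nextSession: new Date('2024-06-10T10:00:00Z'), stage: 'Onboarded' },
]

console.log(preDeployCheck(pilots, ['csv-export', 'settings'], now))
```

Here Pilot A lands in `hold` (its session is 30 hours away and `csv-export` is touched) and Pilot B lands in `addCoverage`. The `stage` field is carried along but not used in this sketch.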

The EF Investor Audience: What They're Looking For

Investors at EF Investor Day are evaluating seed-stage companies whose teams formed only a few months earlier. They are making a judgment call on a team they've known, in many cases, for weeks or months through the EF network. The customer question is central: does this product actually work for real customers?

EF investors are sophisticated about the stage. They do not expect perfection. They do expect a team that operates well under pressure and handles customer relationships with professionalism. The evidence they look for is not zero incidents. It is "when incidents happened, this team caught them fast and handled them proactively."

A customer reference call that goes "the product had a rough patch in week three, but the team was on top of it, deployed a fix quickly, and checked in to make sure we were good" is a positive diligence signal. It tells the investor: this is a team that runs a tight operational ship. That matters for a seed bet on a team that has six months of history.

Your automated coverage is what makes the difference between "caught it fast" and "heard about it from the customer." Teams with automated critical path checks catch their own bugs before customers do. Teams without them are relying on customers as the bug detection system, which is the wrong system for early-stage pilot management.

Getting Started in the EF Launch Phase

If you are an EF team in the Launch phase with pilots starting or running, here is what to do this week.

Map the exact flows your active pilots walk. Not your product's full feature set. The specific steps each pilot takes from login to their primary value moment. Write one automated test per pilot covering that path. Three tests for three pilots. Run them in CI before every merge to main.

Set up the shared document with pilot name, critical path flows, and next session date. Make it the first thing both founders check before any significant deployment.

The investment is three to four hours. The alternative is discovering in week 12 of the program that two of your three pilots stalled because of bugs in weeks 10 and 11, and you're entering the Raise phase with pilots still in evaluation rather than customers signed.

The window is ten weeks. Protect it.

Frequently Asked Questions

EF's Launch phase is roughly eight weeks, starting after the co-founder formation period. First customer conversations typically happen in weeks two and three of Launch; pilots start in weeks three through five. To have customers to show at Investor Day (mid-Raise phase), pilots that start in week three of Launch need to close within four to five weeks. Pilots close fast when they run cleanly. Pilots close slow when they have incidents. Automated coverage of the paths your pilot customers walk is the operational practice that determines which outcome you get. For EF teams where engineering time is limited, the minimum is one test per active pilot covering their critical path, running in CI before every deployment.

When you start building in earnest in week seven of the program (after Form), you have three to four weeks before you need to demo to first prospects. That is not enough time to build a robust codebase. The code you ship to first demos is demo-grade: it handles the happy path you practiced, but not real-world configurations, edge case inputs, or integration reliability. Pilot customers use the product in real-world conditions and surface the bugs that demo conditions never exposed. Automated checks on the paths pilots walk catch these bugs at deploy time rather than during customer sessions.

The minimum viable coordination system for a two-founder team: a shared document with one row per active pilot, listing the customer name, the critical path they walk (specific feature names), and their next expected session date. Before any deployment, the shipping founder checks this document. If a change touches a pilot's critical path and a session is within 48 hours, the deployment is held until after the session. This takes two minutes per deployment and prevents the most common coordination failure: one founder ships a change that breaks a pilot the other founder manages.

The four categories that show up most often: auth session bugs triggered by library updates or configuration changes, output format changes that break downstream customer workflows, history and persistence bugs where previous outputs become inaccessible, and state management failures in edge case configurations. All four are coverable with targeted automated tests. Auth tests catch the first; output structure assertions catch the second; persistence checks catch the third; end-to-end workflow tests with varied input configurations catch the fourth.

The Form phase (weeks 1-6) is co-founder formation: no product, no customers. The Launch phase (weeks 7-14) is where customer acquisition happens. The first customer conversations should start in weeks 8-9, before the product is fully polished, because the feedback from those conversations shapes what you build. First pilots should start in weeks 10-12 to have enough time to close before Investor Day. The constraint is that you're also building the fastest in weeks 10-14, which is when the pilot-breaking bug risk is highest. Automated coverage of active pilot paths is what makes that overlap survivable.

EF investors at Investor Day are seed-stage investors who have been watching companies in the cohort for weeks or months through the EF network. They know the teams. Their due diligence on customers is a validation check: is this product working for real users? They will call one or two reference customers. The reference conversation they want to have is 'product works well, team is responsive, planning to expand.' The reference conversation that introduces uncertainty is 'there were some reliability issues during evaluation.' Your pilot quality in the Launch and early Raise phases determines which conversation happens.