IndieBio Demo Day investors fund scientific rigor, not just product utility. A bug in a research lab or pharma pilot doesn't just frustrate a user. It raises the question of whether your platform's data can be trusted at all. That question, once raised, is very hard to answer in a four-month program. The protection is automated test coverage that validates data integrity, not just UI function.

IndieBio is a different kind of accelerator. SOSV's flagship life sciences program funds companies where the technical credibility of the platform is the investment thesis. Whether you're building computational biology tools, clinical data platforms, or lab automation software, the implicit claim is: this platform produces scientifically valid results.

That claim creates a different relationship between bugs and business outcomes. At a B2B SaaS company, a bug is a product problem. At a life sciences company, a bug is a scientific credibility problem. The distinction matters because scientific credibility is much harder to recover than product satisfaction.

When a research lab pilot sees a calculation discrepancy, their first question is not "is this a display bug?" Their first question is: "Has this been happening since we started using it? Is our previously collected data affected?" That question can cause a lab to discard weeks of work if the answer is unclear. Your pilot customer's internal risk tolerance is calibrated to the consequences of using bad data, which in clinical research or pharmaceutical development can mean wasted experiments, incorrect conclusions, or worse.

This is the standard you're building to when you run a pilot through IndieBio.

The Four-Month Program and Where Your Pilot Window Falls

IndieBio runs four months. The program is intensive, with lab space, mentorship, and investor exposure built in. Corporate pilots typically get arranged in months two and three, through the network of pharma partners, hospital systems, and research institutions that SOSV has cultivated.

The timeline for a typical IndieBio company looks like this:

| Program Month | What's Happening | Testing Risk Level |
|---|---|---|
| Month 1 | Lab setup, MVP refinement, early feedback | Low: no external pilots yet |
| Month 2 | Network introductions, scoping calls with institutions | Medium: building toward pilots |
| Month 3 | Pilot agreement, data access, first sessions | Very High: trust is being established |
| Month 4 | Active pilot, Demo Day prep, investor meetings | Critical: data integrity on the line |

The critical window is months three and four together. Month three is when a research lab or pharma team is using your platform for the first time with real data, and every result they see is being evaluated not just for usefulness but for scientific credibility. Month four is when you need that credibility intact while also preparing a Demo Day presentation that will be evaluated by scientists-turned-investors who will ask hard questions about your methodology.

A bug in month three that calls your results into question does not heal by month four. It creates a cloud of uncertainty that persists through Demo Day.

Why Life Science Bugs Are Existential, Not Just Operational

The standard framing for bugs at tech startups is operational: bugs cause churn, support burden, and customer frustration. The fix is responsive support and reliable engineering.

That framing does not apply in life sciences.

Consider what your platform likely does: it processes biological data, runs computational analyses, extracts signals from experimental results, integrates clinical records, or surfaces insights from assay data. Your customers use the output of that processing to make decisions. Research decisions. Clinical decisions. Drug development decisions.

A bug that causes an incorrect calculation in a financial SaaS tool is a problem. A bug that causes an incorrect calculation in a genomics analysis tool means a researcher may have drawn wrong conclusions from their data. A display bug that shows the wrong patient cohort in a clinical data platform means a clinical team may have made decisions based on the wrong group.

This asymmetry changes the economics of bug prevention significantly. One bug in a research pilot doesn't just threaten that pilot. It threatens every other pilot you're running simultaneously, because life science customers talk to each other within their institutional networks. A pharmaceutical company's IT team and a hospital research department may not overlap, but their scientific advisors and conference circuits do.

A credibility problem that originates in one pilot can travel to every conversation you're having in the IndieBio network before Demo Day.

The Three Moments That Cannot Break in a Life Science Pilot

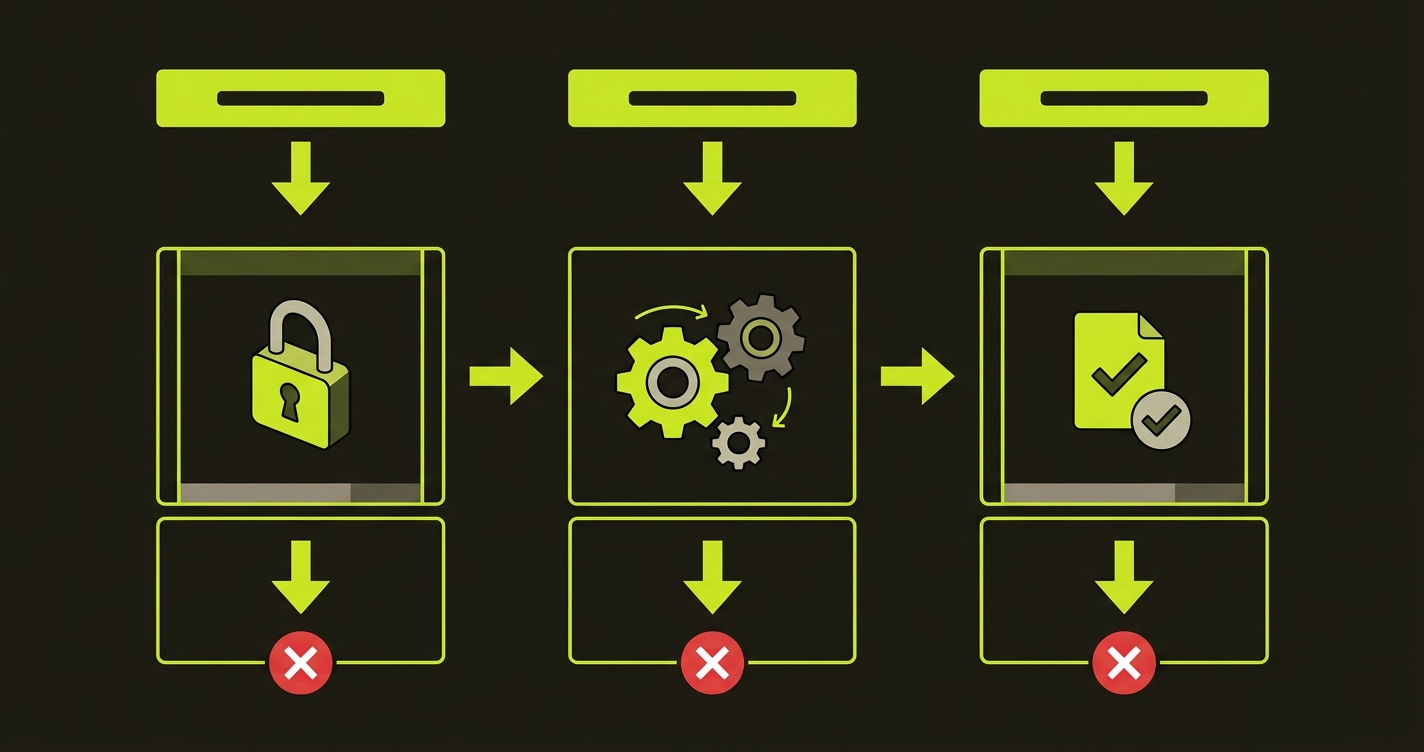

The "protect the deal" framework for a life science pilot has the same three-moment structure as any pilot, but each moment has additional requirements driven by scientific context.

Authentication with institutional credentials. Research institutions and hospitals use federated identity: LDAP, Active Directory, or institutional SSO. Researchers log in with their institutional email. If that login path breaks after a deploy, their IT team escalates it immediately, because a broken institutional system is always taken seriously. Test the exact authentication path your pilot customer uses, including the credential type and the tenant routing.

The core scientific action. This is the analysis, the query, the data processing step. For a life sciences platform, this is where scientific validity is established or destroyed. The test must assert not just that the action completes, but that it produces a result consistent with known inputs. Use a fixed test dataset with known outputs. Assert that the output matches those known outputs exactly. Any discrepancy between the expected and actual output is a data integrity signal that must fail the CI build.

The output and its integrity. Life science outputs are often used downstream: exported for statistical analysis, copied into lab notebooks, submitted to institutional repositories. The output test must assert the data is present, correctly formatted, and numerically consistent. For calculated values, test the calculation directly. Don't just check that a number is displayed. Check that it's the right number.
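To make "check that it's the right number" concrete, here is a minimal sketch of a strict parser for values read out of the UI. The helper name `parseDisplayedInteger` is hypothetical, and the sketch assumes counts are rendered either as plain digits or as comma-grouped integers. The motivation is that a lenient parse can hide a formatting or truncation bug: `parseInt('1,047', 10)` returns 1, not 1047, because parsing stops at the first non-digit character.

```typescript
// Hypothetical helper: strictly parse an integer rendered in the UI.
// Assumes counts appear as plain digits ("47") or comma-grouped ("1,047").
function parseDisplayedInteger(text: string | null): number {
  if (text === null) {
    throw new Error('Expected a displayed number, got no text');
  }
  const trimmed = text.trim();
  // Accept only digits, optionally grouped in thousands with commas.
  if (!/^(\d+|\d{1,3}(,\d{3})+)$/.test(trimmed)) {
    throw new Error(`Not a plain integer: "${trimmed}"`);
  }
  return Number(trimmed.replace(/,/g, ''));
}
```

In a Playwright test, the value returned by `textContent()` could be passed through a helper like this before it is compared to the expected count, so that an empty element or a value such as "47 variants (partial)" fails loudly instead of being silently coerced.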

What the Coverage Looks Like for a Computational Biology Pilot

Concrete example: a computational biology platform running a pilot with a university research lab. The pilot workflow is institutional SSO login, upload a sequence alignment file, run a variant calling analysis, and download results in VCF format.

```typescript
import { test, expect } from '@playwright/test';
import * as fs from 'fs';
import * as path from 'path';

// Reference values computed from the known test dataset
const KNOWN_VARIANT_COUNT = 47;
const KNOWN_HIGH_CONFIDENCE_COUNT = 31;

test('research lab pilot: SSO login, variant analysis, VCF export', async ({ page }) => {
  // Critical moment 1: institutional authentication
  await page.goto('/auth/institutional');
  await page.fill('[name="institution-email"]', process.env.PILOT_INSTITUTION_EMAIL!);
  await page.fill('[name="password"]', process.env.PILOT_INSTITUTION_PASS!);
  await page.click('[data-testid="institutional-login"]');
  await expect(page).toHaveURL('/workspace');
  await expect(page.locator('[data-testid="institution-badge"]')).toBeVisible();

  // Critical moment 2: core scientific action with known inputs
  await page.click('[data-testid="new-analysis"]');
  const fileInput = page.locator('[data-testid="alignment-upload"]');
  // Use a fixed reference file with known expected outputs
  await fileInput.setInputFiles('./fixtures/reference-alignment-NA12878-chr22-subset.bam');
  await page.selectOption('[name="reference-genome"]', 'GRCh38');
  await page.click('[data-testid="run-variant-calling"]');
  await expect(page.locator('[data-testid="analysis-progress"]')).toContainText('Complete', {
    timeout: 120000 // Scientific computation takes longer than UI operations
  });

  // Assert scientific integrity of results
  const variantCount = await page.locator('[data-testid="total-variants"]').textContent();
  expect(parseInt(variantCount || '0')).toBe(KNOWN_VARIANT_COUNT);
  const highConfCount = await page.locator('[data-testid="high-confidence-variants"]').textContent();
  expect(parseInt(highConfCount || '0')).toBe(KNOWN_HIGH_CONFIDENCE_COUNT);

  // Critical moment 3: output integrity
  const downloadPromise = page.waitForEvent('download');
  await page.click('[data-testid="export-vcf"]');
  const download = await downloadPromise;
  expect(download.suggestedFilename()).toMatch(/\.vcf(\.gz)?$/);

  // Verify the download completed and has content
  const downloadPath = await download.path();
  expect(downloadPath).not.toBeNull();
  const stats = fs.statSync(downloadPath!);
  expect(stats.size).toBeGreaterThan(1000); // VCF with 47 variants should be > 1KB
});
```

The key difference from a standard application test is the assertion on scientific output. `expect(parseInt(variantCount || '0')).toBe(KNOWN_VARIANT_COUNT)` is checking data integrity, not UI function. If a deploy changes the variant calling pipeline and this count shifts, the test fails before any researcher sees the discrepancy. That's the protection that matters in life sciences.

The 120-second timeout on the computation step is realistic. Scientific workloads take time. Your CI pipeline needs to be configured to handle longer-running tests without killing them prematurely.
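The export step in the test above only confirms that the file has a VCF-like name and is larger than 1 KB. A stricter check would read the file and count its variant records. The following is a minimal sketch of that idea, not the platform's actual export validation: `countVcfRecords` is a hypothetical helper, it assumes an uncompressed `.vcf` export (a `.vcf.gz` would need decompressing first), and it assumes each variant shown in the UI corresponds to exactly one record line.

```typescript
// Count the data records in VCF text. In the VCF format, meta-information
// lines start with "##", the column header line starts with "#CHROM", and
// every remaining non-empty line is one tab-separated variant record.
function countVcfRecords(vcfText: string): number {
  const lines = vcfText.split(/\r?\n/).filter((line) => line.length > 0);
  if (lines.length === 0 || !lines[0].startsWith('##fileformat=VCF')) {
    throw new Error('Missing ##fileformat header: not a VCF file');
  }
  let records = 0;
  for (const line of lines) {
    if (line.startsWith('#')) continue; // meta-information or column header
    // A record has at least the 8 fixed columns, CHROM through INFO.
    if (line.split('\t').length < 8) {
      throw new Error(`Malformed VCF record: ${line}`);
    }
    records += 1;
  }
  return records;
}
```

Inside the Playwright test, the downloaded file could be read with `fs.readFileSync(downloadPath!, 'utf8')` and the result of `countVcfRecords` compared against `KNOWN_VARIANT_COUNT`, so the exported file is held to the same known value as the on-screen count.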

The Data Integrity Audit Before Closing a Pilot

Before you close a life science pilot and before you enter the active pilot period in month three, do one thing that most software teams skip: run a data integrity audit on your test environment.

Take your reference dataset, run it through your platform from end to end, and record the expected outputs for every calculation your platform performs. Store those expected outputs as test fixtures. Then write tests that assert those exact values whenever the platform processes that dataset.
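A minimal sketch of that comparison follows, assuming the platform's outputs for the reference dataset can be flattened into named numeric values. The `Outputs` type and `diffAgainstGolden` function are hypothetical names introduced for illustration. Exact equality is used on purpose; it assumes the pipeline is deterministic on a fixed input.

```typescript
// Named numeric outputs from one run of the reference dataset.
type Outputs = Record<string, number>;

// Return a human-readable list of every difference between the recorded
// golden outputs and a fresh run. An empty list means the run matches.
function diffAgainstGolden(golden: Outputs, actual: Outputs): string[] {
  const problems: string[] = [];
  for (const [name, expected] of Object.entries(golden)) {
    if (!(name in actual)) {
      problems.push(`${name}: missing from actual output`);
    } else if (!Object.is(actual[name], expected)) {
      problems.push(`${name}: expected ${expected}, got ${actual[name]}`);
    }
  }
  // Unexpected extra outputs are also reported, since they signal a change.
  for (const name of Object.keys(actual)) {
    if (!(name in golden)) {
      problems.push(`${name}: not present in golden file`);
    }
  }
  return problems;
}
```

In practice the golden values would likely be loaded from a JSON file committed next to the tests, and the test would fail whenever the returned list is non-empty, printing the list so the discrepancy is visible in the CI log.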

This is not just a testing practice. It's a scientific credibility practice. If your platform's variant calling, molecule docking, or patient cohort filtering ever produces a different result on the same input, you need to know before a researcher does. And you need to be able to answer "when did this change?" when they ask.

Source control your test fixtures alongside your application code. When the test fails because output changed, the diff in source control shows you exactly which deploy introduced the change. That audit trail is what separates "we have a bug" from "we have a data integrity problem we can't explain."

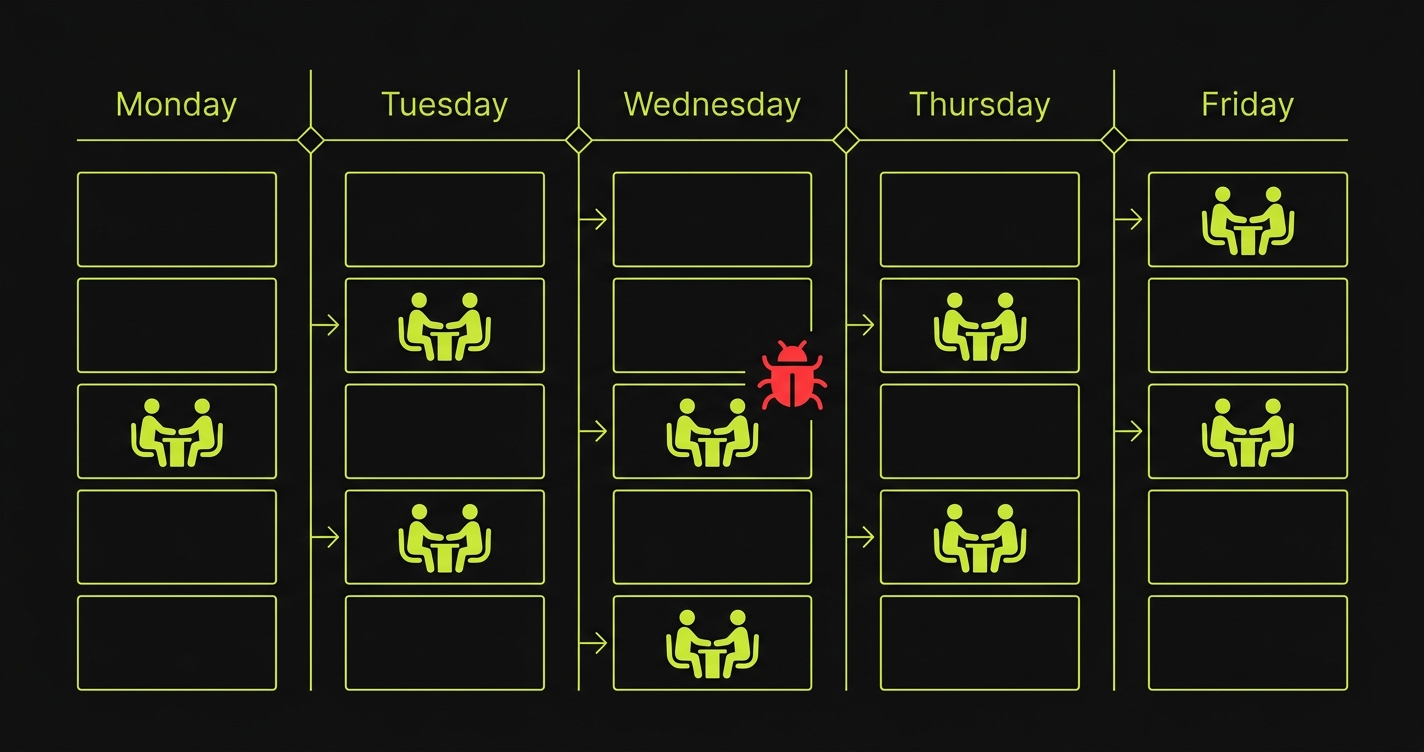

The 48-72 Hour Stability Window in a Research Context

Research labs and clinical teams often have structured experimental timelines. A genomics lab might run weekly analysis batches on Thursday afternoons. A clinical team might have their weekly data review on Monday mornings. These are predictable, high-stakes windows.

Get these from your pilot customer directly. Ask: "When does your team typically run analyses with our platform?" "Are there any scheduled reporting sessions where you'll need the platform to be available?" Map those sessions in your calendar and protect the 72 hours before each one.

In the four-month IndieBio window, the highest-stakes sessions are:

- The pilot's first real-data session (month three, week one): this is when they form their first impression of your platform's scientific credibility.

- Any session tied to an introduction made through the IndieBio network: SOSV has deep pharma relationships, and a partner introduction that goes sideways because your platform showed unexpected results during the demo is a significant setback.

- The week before Demo Day: their data needs to look clean and trustworthy for any investor who asks to see it during due diligence.

For each of these windows, establish a deploy freeze 72 hours prior. No production changes. Any pending features go to a staging branch and wait.
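One way to enforce the freeze, rather than rely on the calendar alone, is a small check that a deploy script runs before shipping. This is a sketch under assumptions: the function name `findBlockingSession` is hypothetical, session start times are assumed to come from a list the team maintains, and the freeze is modeled as running from 72 hours before a session until the session starts.

```typescript
const HOUR_MS = 60 * 60 * 1000;

// Return the first session in the list whose freeze window contains `now`,
// or null when deploys are allowed. A session freezes deploys from
// `freezeHours` before its start until the moment it starts.
function findBlockingSession(
  now: Date,
  sessionStarts: Date[],
  freezeHours = 72,
): Date | null {
  for (const start of sessionStarts) {
    const msUntilStart = start.getTime() - now.getTime();
    if (msUntilStart >= 0 && msUntilStart <= freezeHours * HOUR_MS) {
      return start;
    }
  }
  return null;
}
```

A deploy step could call this with the current time and exit with a non-zero status when a session is returned. Teams that also want the freeze to cover the session itself would need to store an end time for each session and extend the window accordingly.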

The IndieBio Demo Day Investor Angle

IndieBio Demo Day investors include life science VCs, corporate venture arms from pharma companies, and SOSV's own partners. They are scientifically literate. They will ask questions that a typical SaaS investor would not.

"How do you validate your platform's computational outputs?" is a reasonable Demo Day question from an IndieBio investor. So is: "Has your pilot customer validated your results against an orthogonal method?"

The founders who answer these questions well are the ones whose pilots have been running cleanly long enough for the customer to build trust in the platform's outputs. A pilot that's been running for six weeks without a data discrepancy is a pilot that can produce a confident answer to the validation question.

Your pilot customer's ability to say "we've run 50 analyses and the results are consistent with our expectations" depends entirely on your platform not having introduced a calculation regression in the last six weeks.

The automated test suite with known-input assertions is what makes that statement possible. Without it, you're relying on luck that no deploy in the last six weeks touched the calculation logic.

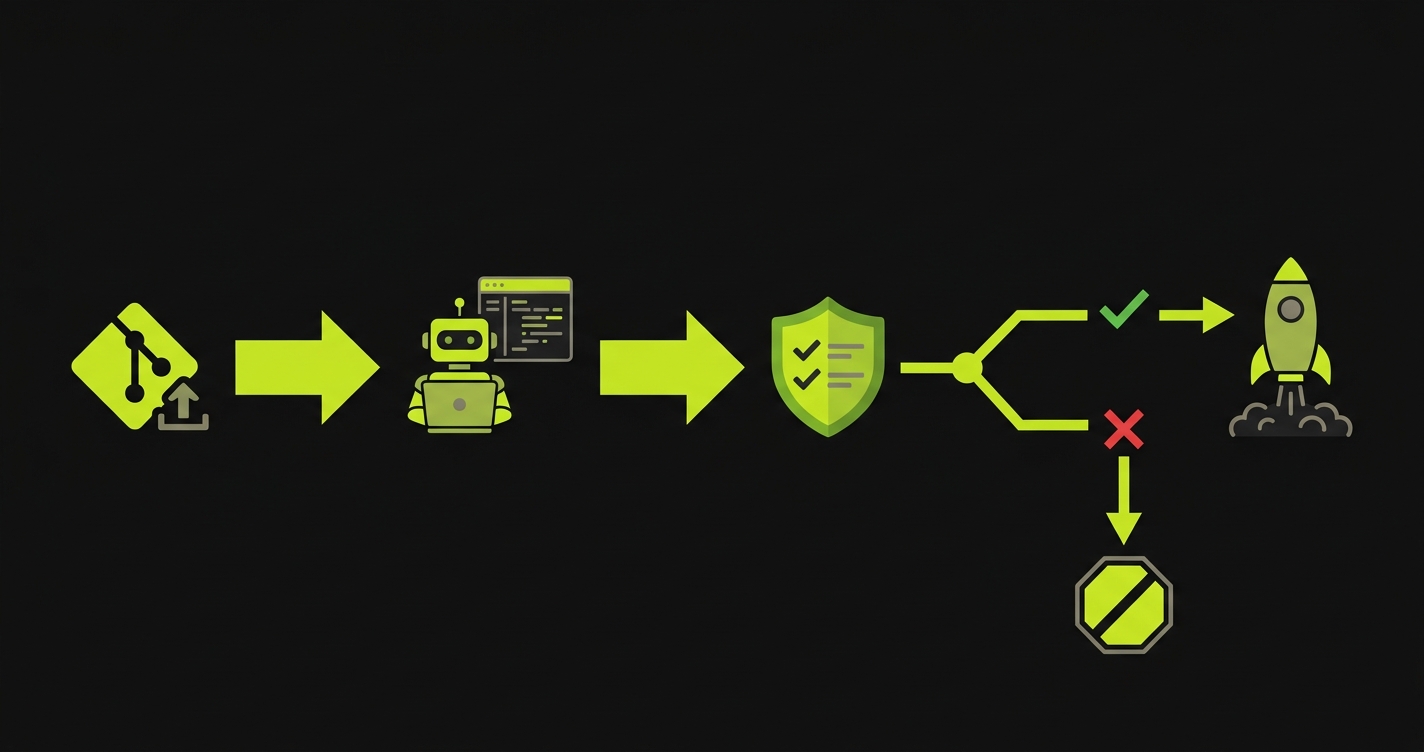

How Autonoma Fits the IndieBio Context

The specific challenge for IndieBio founders is that scientific correctness testing is harder to write manually than standard UI tests. Identifying which calculations to assert, building the reference fixtures, and maintaining those assertions as the platform evolves require sustained engineering attention that a four-person team in a four-month program doesn't have.

Autonoma reads your codebase, identifies your critical computational paths, and generates tests that cover them. For a life sciences platform, that includes the data processing routes, the calculation endpoints, and the output generation logic. The generated tests run in CI on every deploy and block any change that would alter the platform's results on known test inputs.

The combination of Autonoma-generated coverage plus manually written data integrity assertions on your reference fixtures is the complete protection framework for an IndieBio-stage pilot.

The Practical Checklist for Month Three

Entering the active pilot period in IndieBio month three, here is the sequence that protects the deal:

First, build your reference fixture library. Run your analysis pipeline on three to five representative datasets and record the exact outputs. Store them in your test suite as golden files.

Second, write data integrity tests that assert exact outputs on those fixtures. These are the highest-value tests in your entire suite, because they're the ones that catch the category of bug that kills life science pilots.

Third, add Autonoma coverage for the UI and workflow layer. The combination of Autonoma-generated workflow tests and your manually written data integrity tests gives you complete protection.

Fourth, deploy this test suite to CI and require it to pass before any merge to main. This is the rule: no production changes without the pilot path tests passing.

Fifth, map your pilot customer's scheduled sessions and establish deploy freeze windows 72 hours before each one. Put them in the team calendar with the label "no deploys."

Run your pipeline on a reference dataset that has published expected results. For genomics, the NA12878 sample has extensively characterized variants that can serve as a ground truth. For other domains, use synthetic data you generate yourself with known properties, or partner with your pilot customer to validate a reference run before the active pilot period begins. The goal is a fixed input with a known correct output that you can assert against. If your platform's outputs change on that input, the test fails.

Don't use their proprietary data in your test suite. Use a reference dataset that mimics the structure and format of their data. What you're testing is the computational pipeline and the output generation logic, not the specific biological content. A synthetic dataset with the same file format, the same column structure, and a similar complexity profile gives you meaningful coverage without touching patient or proprietary research data.
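As an illustration of a synthetic dataset with known properties, the sketch below builds a small VCF-formatted file whose record count and PASS count are fixed by construction. Everything in it is an assumption for the example: the function name `buildSyntheticVcf` is hypothetical, the positions and alleles are arbitrary, and it presumes a platform that ingests VCF-like text. A platform that takes a different format would need an equivalent generator for that format.

```typescript
// Build a small synthetic VCF whose properties are known by construction:
// exactly `total` records, of which the first `passing` have FILTER=PASS
// and the rest have FILTER=LowQual. No real samples or positions are used.
function buildSyntheticVcf(total: number, passing: number): string {
  if (
    !Number.isInteger(total) ||
    !Number.isInteger(passing) ||
    passing < 0 ||
    passing > total
  ) {
    throw new Error('Need integers with 0 <= passing <= total');
  }
  const lines = [
    '##fileformat=VCFv4.2',
    '##FILTER=<ID=LowQual,Description="Synthetic low-quality flag">',
    '#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO',
  ];
  for (let i = 0; i < total; i++) {
    const filter = i < passing ? 'PASS' : 'LowQual';
    const pos = 1000 + i * 10; // arbitrary, strictly increasing positions
    lines.push(['chr22', pos, '.', 'A', 'G', 50, filter, '.'].join('\t'));
  }
  return lines.join('\n') + '\n';
}
```

Because the counts are chosen up front, a test can upload the generated file and assert that the platform reports exactly those counts, with no dependence on any customer's data.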

Configure your CI pipeline with a longer timeout for the scientific workflow tests, typically 5-10 minutes rather than the 30-60 seconds for UI tests. Run the data integrity tests in a separate CI job from the UI tests, so the slower scientific tests don't delay feedback from the fast UI tests. Mark the scientific computation tests as a required gate for production deploys, but let them run in parallel with the build so they add as little wall-clock time as possible.
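With Playwright, one way to express the split is two projects with different per-test timeouts. The sketch below is a plain configuration object so that it has no imports; in a real `playwright.config.ts` you would typically wrap it in `defineConfig` from `@playwright/test`. The project names and the `.ui.spec.ts` / `.integrity.spec.ts` file naming convention are assumptions made for this example.

```typescript
// playwright.config.ts (sketch). Project names and file patterns are
// hypothetical; adjust them to your repository layout.
const config = {
  // Default per-test timeout for fast UI checks (60 seconds).
  timeout: 60_000,
  projects: [
    {
      name: 'ui',
      testMatch: /.*\.ui\.spec\.ts/,
    },
    {
      name: 'data-integrity',
      testMatch: /.*\.integrity\.spec\.ts/,
      // Scientific workloads get a longer budget (10 minutes per test).
      timeout: 10 * 60_000,
    },
  ],
};

export default config;
```

Each CI job can then select its project with `npx playwright test --project=ui` or `npx playwright test --project=data-integrity`, which keeps the two suites independent while sharing one configuration file.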

Update your test fixtures intentionally. When you improve an algorithm and the outputs change, regenerate the expected outputs from your reference dataset and update the golden files in your test suite. Commit the fixture update alongside the algorithm change in the same pull request, with a clear commit message explaining the scientific rationale for the change. This creates an auditable record: every change to your platform's computational behavior is documented in source control. That audit trail is what you show to a pharma partner during diligence.

Yes, but frame it correctly. Tell them you have a dedicated QA environment that mirrors their configuration and that you run automated validation checks before every deployment to ensure the platform's computational results remain consistent. This is a feature, not a liability. In life sciences, a vendor who can say "we validate computational consistency on every deploy" is demonstrating exactly the kind of scientific rigor that research institutions want from a software partner.

Add new analysis features as separate pipeline branches that don't share code with existing approved workflows. If a new feature requires changes to shared utility code, those changes need to pass the full data integrity test suite before merging. The existing pilot's approved workflow is your regression test target: any change that causes the reference fixture outputs to diverge is a blocker, regardless of what new capability it enables. New features are additive. They don't modify existing computational paths.