On Deck is not a traditional accelerator. There's no single Demo Day with a captive investor audience. Instead, the network itself is your sales channel, your investor pipeline, and your reputation engine. A bug that one On Deck founder experiences gets shared in Slack before you can fix it. Network reputation is harder to rebuild than a customer relationship. Protecting your demos and early pilots within the cohort is the highest-leverage sales activity you have.

On Deck works differently from every other accelerator in this list. There's no three-month cohort with a Demo Day. There's a rolling fellowship, a large distributed network of founders and operators, and Showcase events that are less "pitch to investors" and more "demonstrate genuine traction to a community that knows each other well."

That difference changes the math entirely. At Y Combinator or Techstars, your goal is a strong Demo Day performance that wins investor meetings. At On Deck, your goal is a strong network reputation that compounds over months. Customers come from within the network. Investors come from within the network. Referrals come from within the network. Everything flows from your standing in a community where everyone has the same Slack channels, the same weekly events, and the same group of mutual connections.

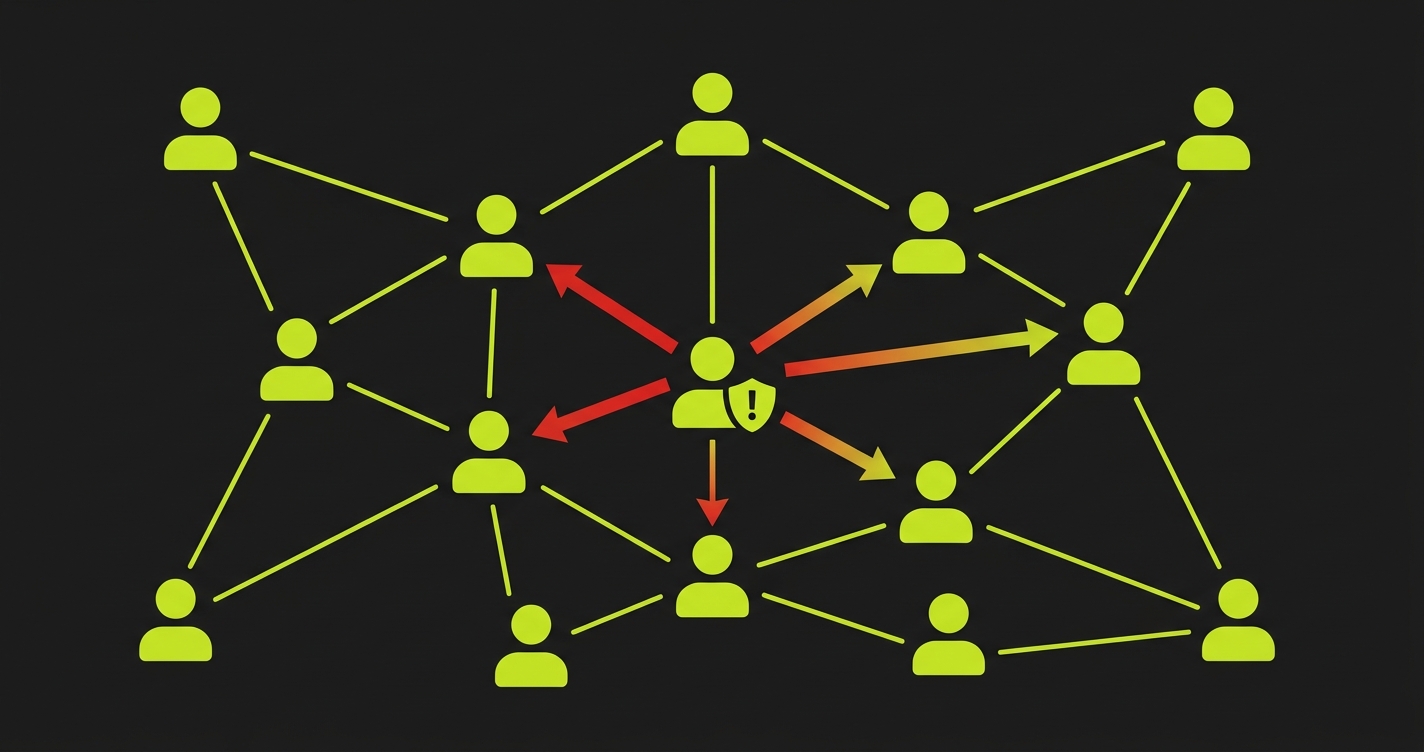

The practical implication: a bad product experience doesn't just cost you one customer. It gets shared in the cohort Slack within hours. On Deck has over 10,000 founders and operators in its network. A message in the right channel saying "heads up, I tried [your product] and ran into a weird bug" reaches your entire prospect pipeline before you even know the bug exists.

This is why bugs in an On Deck context are a sales problem, not just an engineering problem.

The On Deck Timeline Is Ongoing, Not Event-Driven

The traditional accelerator advice is: "protect the X weeks before Demo Day." In On Deck, there is no single convergence point. The relevant windows are whenever you're actively selling into the network, which is continuously.

But there are higher-stakes moments. On Deck Showcases are periodic events where founders present to the broader network. The weeks leading up to a Showcase are when you're doing demos with prospects, and those prospects are often On Deck members who will share their impressions with other On Deck members immediately after.

The timeline pressure looks like this:

| Activity | When | Word-of-Mouth Risk |
|---|---|---|
| Initial demos with network contacts | Continuous | Medium: one-on-one impression |
| Pilot close with a well-connected ODF member | Weeks 1-4 | High: they'll mention it to their cohort |
| Showcase announcement period | 2-3 weeks pre-Showcase | Very High: everyone is evaluating products simultaneously |
| First solo sessions for new pilot customers | Ongoing | Critical: the experience they describe to peers |
| ODS (On Deck Scale) operator introductions | Varies | Very High: operators talk to each other constantly |

The ODS context deserves special mention. On Deck Scale is for scaling companies and experienced operators, not just early-stage founders. When an ODS operator tries your product and has a bad experience, they're not just a lost customer. They're someone with significant industry credibility who will have an opinion about your product quality, and their opinions carry weight in the broader network.

The Word-of-Mouth Dynamics of a Tight-Knit Network

Most founder communities have a norm against publicly criticizing other founders' products. On Deck generally follows that norm in formal settings. But informal Slack communication is different. If an On Deck founder runs into a bug in your product, they're not going to write a public post about it. But they might:

- Mention it casually in a channel conversation about the problem space you're solving.

- Reply "I tried it, had some issues" when someone asks in Slack for recommendations.

- Bring it up during a virtual coffee chat with another founder who's evaluating similar tools.

- Tell a mutual investor connection about their experience when asked for thoughts.

None of these are hostile. All of them reduce your conversion rate across the network in ways you can't directly observe. You'll notice it as a pattern: demos that go well but don't convert, introductions from network contacts that don't land, a general sense that word isn't spreading the way it should be for a product people seem to like.

The positive version of this dynamic is even more powerful. An On Deck founder who has a genuinely good experience with your product becomes a proactive referral engine. They mention you in Slack unprompted. They make introductions because it reflects well on them to know useful tools. They become the kind of reference customer who tells investors before you ask them to.

Getting from "pilot customer" to "unprompted advocate" requires one thing: a product that works reliably every time they use it.

The Three Moments That Define Network Reputation

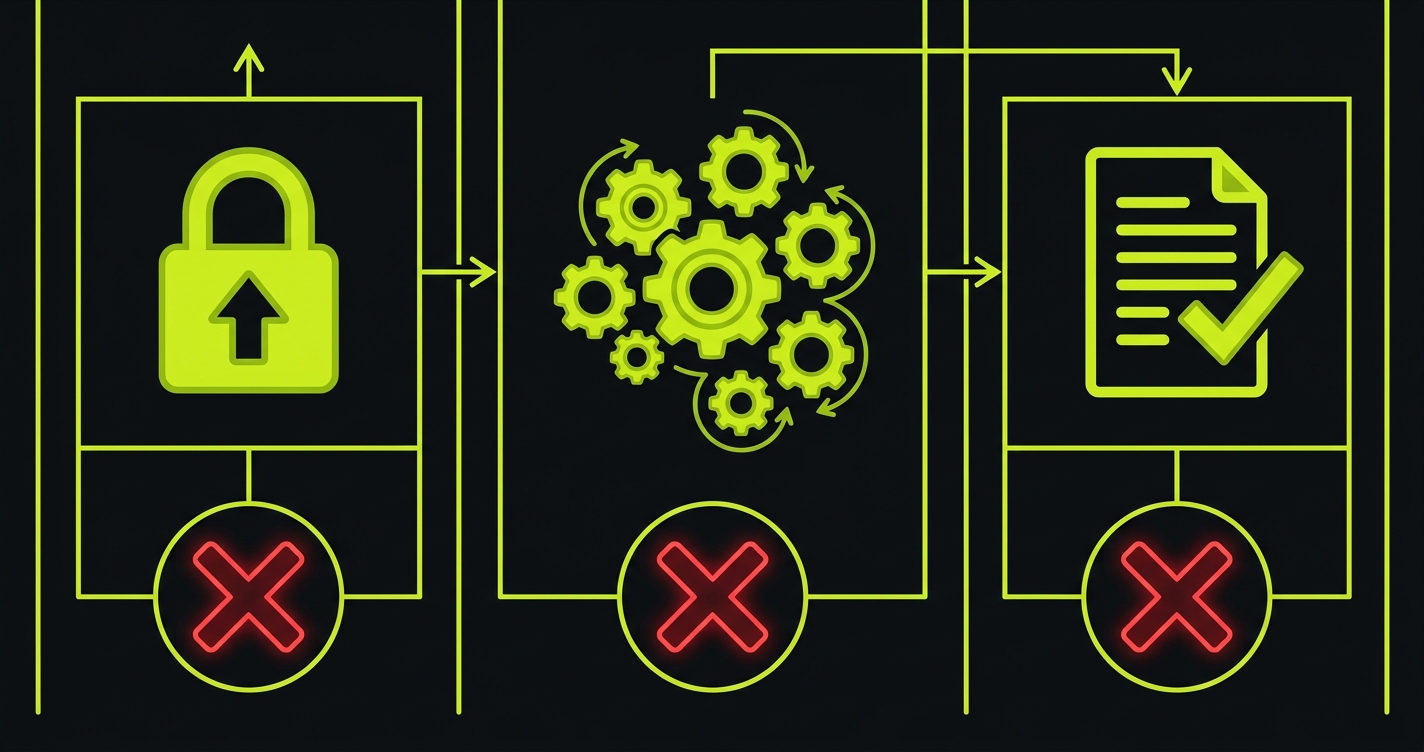

The three-moment framework applies in the On Deck context, but the stakes around each moment are shaped by the network effect rather than enterprise compliance.

Authentication. In On Deck, founders and operators often share quick product recommendations in real time. "Try this, sign up here" is a common Slack interaction. If the signup or login flow has a bug at that exact moment, the person who tried it reports immediately in the same channel. You've lost the recommendation and gained a public bug report simultaneously.

The core action in the first session. On Deck network members are time-poor and product-savvy. They've seen hundreds of demos and tried dozens of tools. Their patience for a product that doesn't work in the first session is low. If the first thing they try to do doesn't work, they move on. They might come back if a trusted contact insists it's worth another try, but that requires a second warm recommendation to overcome the first cold experience.

What they see when they come back. If a network member tries your product, hits a bug, and comes back a week later, the most important thing is that the exact thing that broke before now works. If it's still broken, that's a different signal. It tells them the team doesn't prioritize fixing known issues, which is a judgment they'll carry into any future recommendation.

The Specific Risk: Sharing-Triggered Traffic Spikes

On Deck has a distinctive pattern that other accelerators don't: organic product sharing creates sudden traffic spikes. Someone posts your product in a channel, 30 people click the link within five minutes, and your onboarding flow handles 30 simultaneous signups. If your product has race conditions or performance issues under concurrent load, this is exactly when you'll see them.

This isn't a hypothetical. On Deck network effects are real and fast. A good product mention in a high-traffic channel can send a startup's signups up 10x in an hour. If your auth flow has a concurrency bug that only surfaces under load, you'll discover it in that exact moment.

The test for this is different from a standard functional test. It's a small load test on your critical flows, specifically auth and the first-time user onboarding sequence. You don't need sophisticated load testing infrastructure. A simple concurrent test that pushes ten parallel signups through your signup flow will surface most concurrency bugs:

```typescript
import { test, expect } from '@playwright/test';

test('concurrent signups do not conflict', async ({ browser, baseURL }) => {
  // Simulate 10 simultaneous signups (On Deck sharing scenario)
  const testEmails = Array.from({ length: 10 }, (_, i) =>
    `ond-test-${Date.now()}-${i}@testdomain.example`
  );

  const signupResults = await Promise.allSettled(
    testEmails.map(async (email) => {
      // One isolated context per simulated user (separate cookies and storage).
      // baseURL comes from the Playwright config so relative paths resolve.
      const context = await browser.newContext({ baseURL });
      try {
        const page = await context.newPage();
        await page.goto('/signup');
        await page.fill('[name="email"]', email);
        await page.fill('[name="password"]', 'TestPass123!');
        await page.click('[data-testid="create-account"]');

        // Each user should reach their own dashboard, not a conflict state
        await expect(page).toHaveURL('/dashboard', { timeout: 10000 });
        await expect(page.locator('[data-testid="welcome-message"]')).toBeVisible();
        return email;
      } finally {
        // Close the context even when an assertion above fails
        await context.close();
      }
    })
  );

  // All 10 concurrent signups should succeed
  const failures = signupResults.filter(r => r.status === 'rejected');
  expect(failures).toHaveLength(0);
});
```

This test runs ten parallel browser contexts through your signup flow. If any of them fail with a conflict error, a duplicate key constraint, or a race condition in your session handling, the test surfaces it before it surfaces in an On Deck channel.

Network Reputation as a Compounding Asset

The flip side of the word-of-mouth risk is the word-of-mouth opportunity. On Deck network reputation compounds in a way that investor-focused Demo Day performance does not. A strong Demo Day impression lasts for the next fundraising cycle. A strong On Deck network reputation lasts for years, because the network persists and people in it keep building companies.

The founders and operators who become your advocates in On Deck are not just customers. They're part of your long-term distribution strategy. When they start their next company or join a company that needs your product, they remember. When they're in a room with an investor who asks about your space, they mention you favorably.

The investment in product stability during your On Deck period is not just an investment in closing current deals. It's an investment in the reputation that pays out over the next five years of fundraising, recruiting, and business development across the alumni network.

This framing helps when you're making the tradeoff between shipping a new feature and investing time in test coverage. "Should I spend four hours writing tests or four hours on this feature?" is a harder question when you're optimizing for a single Demo Day. When you're optimizing for long-term network reputation, the test coverage becomes more clearly worth it.

Protecting Showcase Moments Specifically

On Deck Showcases are different from traditional Demo Days. The audience is the network itself, not primarily outside investors. But the stakes are the same: you're demonstrating your product to the most concentrated group of potential customers, advocates, and investor connections you have access to.

For the two to three weeks before a Showcase, your product needs to be demonstrably stable. Not feature-complete. Not polished. Stable.

The specific risks during Showcase prep are:

- You're doing demos with multiple network contacts simultaneously, which means multiple people experiencing your product's current state in the same week. Any bug is experienced by many people at once.

- You're trying to close pilots before the Showcase so you have traction to reference in the presentation. The pilots you close in the two weeks before a Showcase will use the product for the first time during the Showcase itself.

- You're stressed and shipping more than usual to look ready, which means more regression risk from rapid deploys.

The 72-hour freeze still applies. Stop shipping to production 72 hours before the Showcase. Run your critical path tests against the production environment directly. Walk your own product as if you were a first-time On Deck network member who just received a Slack recommendation.
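One way to make the freeze harder to break under pressure is to encode it as a check that a deploy script can call. The sketch below is a minimal illustration, not a prescribed tool: the function name `isInFreezeWindow`, the parameter names, and the example dates are all hypothetical, and only the 72-hour default comes from the text above.

```typescript
// Hypothetical helper: names and example dates are illustrative assumptions.
const HOUR_MS = 60 * 60 * 1000;

// Returns true when `now` falls inside the freeze window that ends at the
// Showcase start time. The window is `freezeHours` long (72 by default).
function isInFreezeWindow(now: Date, showcaseAt: Date, freezeHours = 72): boolean {
  const freezeStart = showcaseAt.getTime() - freezeHours * HOUR_MS;
  const t = now.getTime();
  return t >= freezeStart && t <= showcaseAt.getTime();
}

// Example with a made-up Showcase time.
const showcase = new Date("2025-06-12T17:00:00Z");
const deployAttempt = new Date("2025-06-10T09:00:00Z"); // 56 hours before
const blocked = isInFreezeWindow(deployAttempt, showcase);
```

A deploy script could refuse to ship when the check returns true, leaving an explicit override for genuine emergency fixes.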

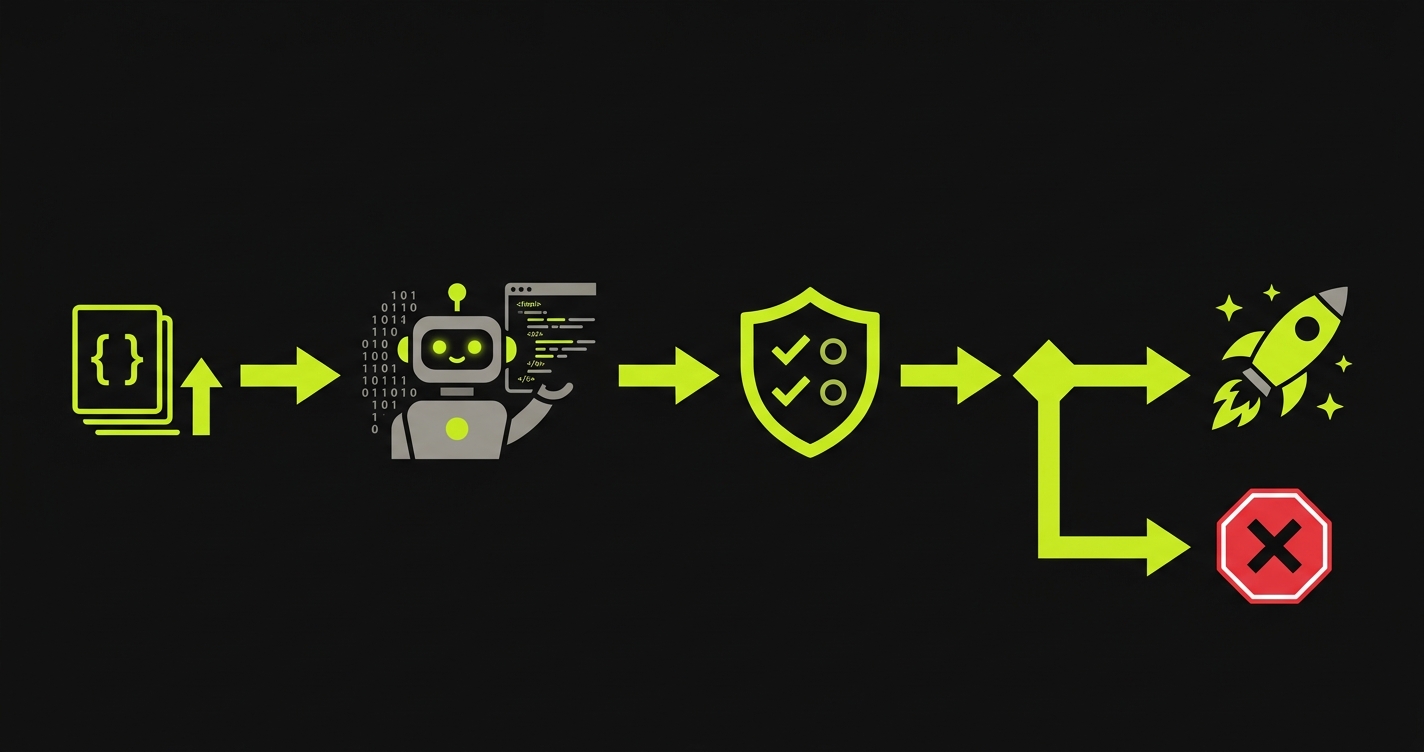

How Autonoma Fits the On Deck Context

The On Deck context has a specific characteristic that makes automated test coverage especially valuable: the user who might try your product is often another technical founder or an experienced operator. They can tell the difference between a product with automated test coverage and one without. They've seen both, and they can feel the difference in product stability.

Autonoma generates comprehensive E2E test coverage from your codebase. For an On Deck-stage company, the coverage that matters most is auth, the first-session flow, and the core action. These are the moments that define the network's first impression of your product.

The concurrency test above is something you write yourself once. The functional coverage of every critical path is what Autonoma handles continuously as your codebase evolves.

The Practical Approach for On Deck Sales

In On Deck, the sales process is continuous rather than event-driven. That means the testing discipline needs to be continuous too. Here is what that looks like:

Before any demo with a network contact: run the full pilot path test suite manually against production. If you haven't set up automated CI yet, at minimum do a manual walkthrough of auth, core action, and output in the five minutes before the call.

When closing a pilot with a network member: treat the 72 hours after they sign up as the highest-priority stability window. They will try the product within 24 hours of signing up, usually in a quick session to get oriented. That session defines their initial impression.

For each pilot customer's first three solo sessions: monitor your error logs actively. Set up alerts for any 5xx errors or slow response times on the core action endpoints. Respond to anything that surfaces within minutes, not hours.

For Showcase prep: establish the 72-hour freeze window, run your full test suite against production, and do a live product walkthrough as yourself the morning of the event.

Long-term: build toward a CI pipeline where every push to main runs the critical path tests automatically. This is the infrastructure that lets you ship fast between Showcase events without accumulating network reputation risk.
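The alerting step for a pilot customer's first sessions can start as a small filter over request logs. The following is a rough sketch under stated assumptions: the `RequestLog` shape, the `AlertRule` fields, the endpoint paths, and the 2000 ms threshold are all hypothetical and should be adapted to whatever your logging stack actually emits. It flags every 5xx response, plus slow responses on the endpoints behind the core action.

```typescript
// Hypothetical log entry shape; real logging stacks will differ.
interface RequestLog {
  path: string;
  status: number;
  durationMs: number;
}

// Hypothetical rule: which path prefixes count as "core action" endpoints
// and how slow is too slow for them.
interface AlertRule {
  corePaths: string[];
  slowMs: number;
}

type Alert = { entry: RequestLog; reason: "server_error" | "slow_response" };

function findAlerts(logs: RequestLog[], rule: AlertRule): Alert[] {
  const alerts: Alert[] = [];
  for (const entry of logs) {
    // Any 5xx response is alert-worthy, regardless of endpoint.
    if (entry.status >= 500 && entry.status <= 599) {
      alerts.push({ entry, reason: "server_error" });
      continue;
    }
    // Slow responses only matter here on the core action endpoints.
    const isCore = rule.corePaths.some((prefix) => entry.path.startsWith(prefix));
    if (isCore && entry.durationMs > rule.slowMs) {
      alerts.push({ entry, reason: "slow_response" });
    }
  }
  return alerts;
}

// Example with made-up endpoints and timings.
const sampleLogs: RequestLog[] = [
  { path: "/api/login", status: 200, durationMs: 120 },
  { path: "/api/reports/run", status: 503, durationMs: 40 },
  { path: "/api/reports/run", status: 200, durationMs: 4200 },
  { path: "/static/logo.png", status: 200, durationMs: 9000 },
];
const sampleAlerts = findAlerts(sampleLogs, { corePaths: ["/api/reports"], slowMs: 2000 });
```

In practice the returned alerts would be forwarded to wherever you will actually see them within minutes, since the response time is what the pilot customer notices.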

At a traditional accelerator, your sales effort peaks at Demo Day and then continues through follow-up. At On Deck, your sales effort is continuous because the network is always active and people are always evaluating products and making recommendations. The word-of-mouth channel is always on. This means there's no pre-Demo Day sprint followed by a wind-down. The stability bar needs to be maintained consistently, with extra attention during Showcase periods and whenever you're actively demoing to multiple network contacts simultaneously.

ODS operators are experienced. They've seen many products and they know what good looks like. Before their evaluation session, run your critical path tests, do a personal walkthrough of the exact flow they'll use, and make sure there are no known issues outstanding. Send them an agenda for the session so you can control the demo path. After the session, follow up within 24 hours and ask specifically if they ran into anything unexpected. Operators will often test edge cases that standard demos don't cover.

When a network member reports a bug in a public channel, respond in the same channel, quickly and specifically. Acknowledge the bug, give a realistic timeline for the fix, and offer to set up a direct conversation. Don't be defensive and don't minimize the issue. The On Deck community values transparency and directness. A founder who responds to public bug reports with honesty and speed earns more credibility than a founder who prevents all bugs by shipping slowly. Then fix the bug and post a follow-up in the channel confirming it's resolved.

Message each new pilot customer the day before their likely first session and say: 'Let me know if you want me to walk you through anything on the first session or if you prefer to explore independently.' This creates an opening for them to flag issues directly to you rather than to the Slack channel. If they prefer to explore independently, monitor your error logs and application metrics during their likely usage window. Being proactively responsive during the first 72 hours of a pilot is worth more than any feature you could ship in that time.

Only announce updates that are meaningfully shipped and stable. Don't announce 'we just shipped X' and then have X break for the first people who try it. The announcement pattern that works is: ship the feature, run it in production for 48-72 hours with no issues, then announce it. This means your announcement is accompanied by the confidence that comes from a few days of clean production data. Announcements that follow immediately after a deploy are high-risk because the bug that surfaces in the first 24 hours surfaces in front of your announcement audience.
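The ship, soak, then announce pattern above can be expressed as a simple gate. This is a sketch only, with hypothetical names (`readyToAnnounce`, `errorTimes`) and made-up example dates. It treats "no issues" as "no recorded errors since the deploy", which is an assumption; your own definition of a clean run may be stricter or looser.

```typescript
// Hypothetical helper: names and example dates are illustrative assumptions.
const MS_PER_HOUR = 60 * 60 * 1000;

// Returns true only when the feature has been live for at least `soakHours`
// (48 by default) and no error was recorded between the deploy and `now`.
function readyToAnnounce(
  deployedAt: Date,
  errorTimes: Date[],
  now: Date,
  soakHours = 48
): boolean {
  const soakEnd = deployedAt.getTime() + soakHours * MS_PER_HOUR;
  if (now.getTime() < soakEnd) return false; // not enough production time yet
  const hadErrorSinceDeploy = errorTimes.some(
    (t) => t.getTime() >= deployedAt.getTime() && t.getTime() <= now.getTime()
  );
  return !hadErrorSinceDeploy;
}

// Example with made-up timestamps: deployed two days ago, no errors since.
const deployed = new Date("2025-03-01T00:00:00Z");
const canAnnounce = readyToAnnounce(deployed, [], new Date("2025-03-03T00:00:00Z"));
```

Passing `soakHours = 72` gives the more conservative end of the 48-72 hour range.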

When deciding what to test first, focus on the path that a new user walks in their first session, not the full feature set that long-term users have explored. New users in your On Deck pipeline all walk approximately the same path: they sign up or log in, they try the core action once, and they form their initial impression. Coverage on that path protects the first impression for every new user simultaneously. Long-tail feature coverage can come later, once the core path is locked down.