Pear Demo Day is attended by enterprise buyers and investors, and the two groups often overlap. A bug that surfaces during an enterprise pilot doesn't just cost you that customer. It creates a diligence signal that can follow you into every investor conversation with someone in that network. The enterprise pilot is simultaneously a revenue event and an investor evidence event, and it needs to be protected as both.

Pear VC runs a focused accelerator program, Pear Garage, with a distinct identity: Stanford-connected, rooted in Silicon Valley, and oriented toward enterprise software and deep tech. The Pear network includes senior enterprise buyers, procurement professionals, IT leaders, and functional heads at large companies who can become customers. Those same people often have LP relationships, board advisory roles, or investor connections that put them in rooms with Pear's investment team.

This overlap is the defining feature of Pear Demo Day that founders need to understand. When a senior enterprise buyer at a Fortune 500 company experiences your product, they're potentially forming two impressions simultaneously: their impression as a potential customer evaluating a purchase, and their impression as a member of the Pear network who will be asked for their view by investors doing diligence.

A bug that would normally just be a customer support issue becomes a signal that travels into your fundraising pipeline. This is not paranoia. It's the network structure of Silicon Valley enterprise VC. The people who buy enterprise software and the people who fund enterprise software companies know each other. Their opinions travel.

The Enterprise Sales Cycle and the Demo Day Convergence

Enterprise software pilots have long sales cycles. Getting from first contact to signed pilot agreement at an enterprise company typically takes six to twelve weeks: initial conversations, internal evaluation, legal review, security review, procurement approval. Pear Garage is a pre-seed program, which means the companies going through it are trying to close enterprise pilots while simultaneously building the product and preparing for Demo Day.

This creates a compressed timeline in which every stage overlaps with product work, and the final stages also overlap with Demo Day preparation:

| Stage | Duration | Parallel activities |
|---|---|---|
| Initial enterprise contact | Weeks 1-2 | Building core product, Pear curriculum |
| Internal evaluation at enterprise | Weeks 2-6 | Feature development for pilot scope |
| Security and legal review | Weeks 4-8 | Continued building, addressing security questions |
| Pilot agreement and onboarding | Weeks 7-9 | Onboarding pilot users, Demo Day prep begins |
| Active pilot | Weeks 9-12 | Intensive building + Demo Day preparation |
| Demo Day | Week 12 | Everything converges |

The active pilot period in weeks nine through twelve is when the enterprise customer is running your product in their environment while your team is simultaneously shipping the features they asked for during the evaluation phase, preparing the Demo Day narrative, and handling the other operational demands of a growing startup.

That convergence is where regressions happen. A feature ships for the enterprise pilot's benefit that breaks something the enterprise pilot was already using. The enterprise customer reports it to their IT team, not directly to you. By the time you know about it, the IT team has flagged it in their vendor review process and the business champion who advocated for the pilot is fielding questions about whether the vendor is ready for production.

The Multi-Stakeholder Veto Problem

Enterprise pilots don't fail because one person has a bad experience. They fail because someone in the approval chain has a bad experience or a concern that they surface to the decision-making process.

In a standard B2B SaaS pilot, there's usually one champion and maybe one other stakeholder. In a Pear-type enterprise pilot, you're dealing with a chain:

- Business unit sponsor: The person who identified the need and championed the pilot internally. This is your main contact.

- IT security: Approves the technical integration and ongoing access. If they see unusual behavior or errors, they raise a concern.

- Legal and procurement: Reviewed the contract. If there's a major incident during the pilot, they're asked to review whether the contract terms were violated.

- End users: The actual operators who use the product daily. If the product is unreliable, they complain to their manager, who tells the business unit sponsor, who has a harder time defending the pilot at the next review meeting.

Any one of these stakeholders can pause or kill the pilot. The business unit sponsor can't protect you from IT security's concerns. The legal team can invoke pilot exit clauses if there are enough incidents. End users who stop using the product because it's unreliable create a usage gap that makes the pilot look unsuccessful regardless of whether the product actually works.

This is why protecting the enterprise pilot is not just about the champion's experience. It's about every stakeholder's experience, including the IT team that monitors your API activity and the end users who use the product when no one is watching.

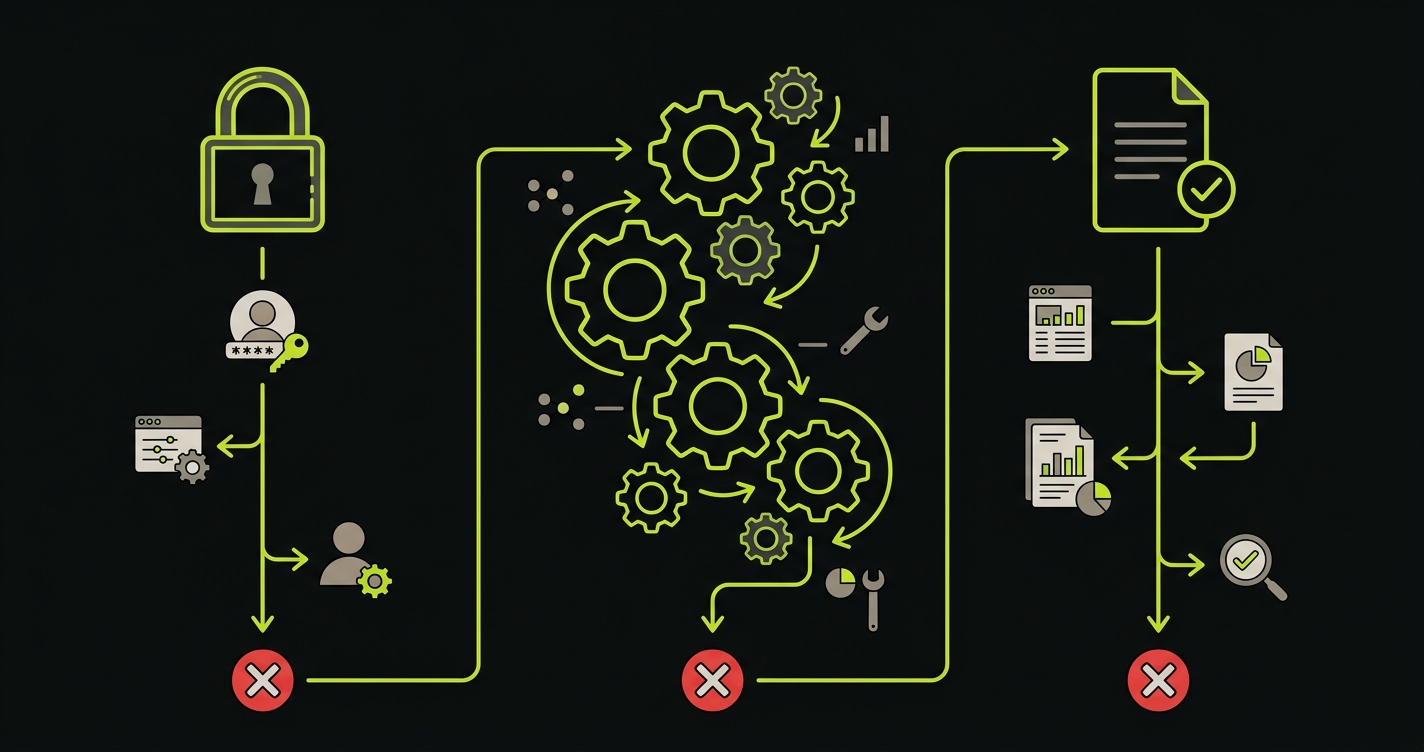

The Three Moments in an Enterprise Context

The three-moment framework for an enterprise pilot requires additional specificity because each moment has multiple stakeholders interacting with it.

Authentication with enterprise identity infrastructure. Enterprise companies rely on SSO (typically over SAML) and SCIM provisioning. The IT team configured your integration during onboarding. Any change to your auth middleware that affects SAML assertion handling or the session token format can silently break authentication for every enterprise user at once. They won't see a helpful error message. They'll see an opaque failure that their IT team will investigate, log, and flag.

The test for this must exercise the full SAML flow, including the IdP redirect, the assertion parsing, and the session creation. It must run on every deploy and block any merge that breaks it.
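The end-to-end test exercises the redirect, but a fast unit-level check on the step between assertion parsing and session creation catches middleware regressions before they reach a deploy. A minimal sketch, assuming your SAML library has already verified the signature and returned a parsed object; the `ParsedAssertion` shape, the `org` and `role` attribute names, and `toSession` are hypothetical and should be adapted to your own middleware.

```typescript
// Hypothetical shape of an assertion after your SAML library has verified the
// signature and parsed the XML. Adapt the field names to your own middleware.
interface ParsedAssertion {
  nameId: string | null;
  notBefore: Date;
  notOnOrAfter: Date;
  attributes: Record<string, string[]>;
}

// The session fields the rest of the app depends on.
interface Session {
  userId: string;
  org: string;
  role: string;
}

// Maps a parsed assertion to a session, throwing on anything that would
// otherwise reach enterprise users as an opaque login failure.
function toSession(assertion: ParsedAssertion, now: Date): Session {
  const userId = assertion.nameId;
  if (!userId) {
    throw new Error('assertion is missing NameID');
  }
  // Valid from NotBefore up to, but not including, NotOnOrAfter
  const t = now.getTime();
  if (t < assertion.notBefore.getTime() || t >= assertion.notOnOrAfter.getTime()) {
    throw new Error('assertion is outside its validity window');
  }
  // Hypothetical attribute names agreed with the customer's IT team at onboarding
  const org = assertion.attributes['org']?.[0];
  const role = assertion.attributes['role']?.[0];
  if (!org || !role) {
    throw new Error('assertion is missing the org or role attribute');
  }
  return { userId, org, role };
}
```

Feeding this function a recorded assertion from the pilot's IdP (with identifying values replaced) turns "the SAML format changed" into a failing unit test instead of a ticket in the customer's queue.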

The approved pilot workflow. During the evaluation phase, the business unit sponsor defined what they were going to do with your product. That scope was written into the pilot agreement. Those specific workflows are what the IT team approved for data access, and those workflows are what end users are trained to run. Changes to the product that break those specific workflows break the entire organizational investment in the pilot, because the training, the data access grants, and the internal documentation all reference those workflows.

The output that goes to other systems or stakeholders. Enterprise products don't exist in isolation. Your product's output often feeds into other enterprise systems: reports that go to managers, data that feeds dashboards, exports that go into compliance systems. If the format of your output changes in a way that breaks downstream consumption, you've broken something outside your own product that the enterprise team depends on. This is a category of failure that takes days to diagnose because the broken downstream system doesn't point back to your product as the cause.

What Full Enterprise Pilot Coverage Looks Like

Concrete example: an enterprise software startup building a workflow automation tool. The Pear network pilot customer is a logistics company. The approved workflow is SAML login, workflow template configuration, execution trigger, and report delivery to their BI system via webhook.

import { test, expect, request } from '@playwright/test';

test.describe('enterprise pilot: logistics company workflow', () => {

test('SAML auth, workflow execution, webhook delivery', async ({ page }) => {

// Critical moment 1: enterprise SSO authentication

await page.goto('/auth/sso/initiate?domain=logistics-pilot.enterprise.example');

// Test IdP redirects correctly and returns to app

await expect(page).toHaveURL(/\/dashboard/);

await expect(page.locator('[data-testid="user-org-badge"]')).toContainText('Logistics Pilot');

// Verify user has correct role (not just authenticated, but authorized correctly)

await expect(page.locator('[data-testid="user-role"]')).toContainText('Operator');

// Critical moment 2: approved pilot workflow

await page.click('[data-testid="workflows-nav"]');

// Pilot uses a specific saved template - it must still exist and be loadable

await page.click('[data-testid="template-freight-approval"]');

await expect(page.locator('[data-testid="template-status"]')).toContainText('Ready');

// Configure and trigger the workflow execution

await page.fill('[data-testid="order-reference"]', 'TEST-ORD-001');

await page.selectOption('[name="priority"]', 'standard');

await page.click('[data-testid="execute-workflow"]');

// Workflow must complete, not just start

await expect(page.locator('[data-testid="execution-status"]')).toContainText('Completed', {

timeout: 45000

});

const executionId = await page.locator('[data-testid="execution-id"]').textContent();

expect(executionId).toMatch(/^exec-[a-f0-9]{8}$/);

// Critical moment 3: webhook delivery to their BI system

// Use the API to verify the webhook was dispatched with correct schema

const apiContext = await request.newContext({

baseURL: process.env.API_BASE_URL,

extraHTTPHeaders: { Authorization: `Bearer ${process.env.TEST_API_TOKEN}` }

});

const webhookLog = await apiContext.get(`/api/executions/${executionId}/webhook-delivery`);

expect(webhookLog.ok()).toBeTruthy();

const webhookData = await webhookLog.json();

expect(webhookData.status).toBe('delivered');

expect(webhookData.response_code).toBe(200);

// Assert the payload schema matches what their BI system expects

expect(webhookData.payload).toHaveProperty('order_reference', 'TEST-ORD-001');

expect(webhookData.payload).toHaveProperty('completion_timestamp');

expect(webhookData.payload).toHaveProperty('outcome');

// The payload schema cannot change without breaking their downstream system

expect(Object.keys(webhookData.payload)).toEqual(

expect.arrayContaining(['order_reference', 'completion_timestamp', 'outcome', 'metadata'])

);

});

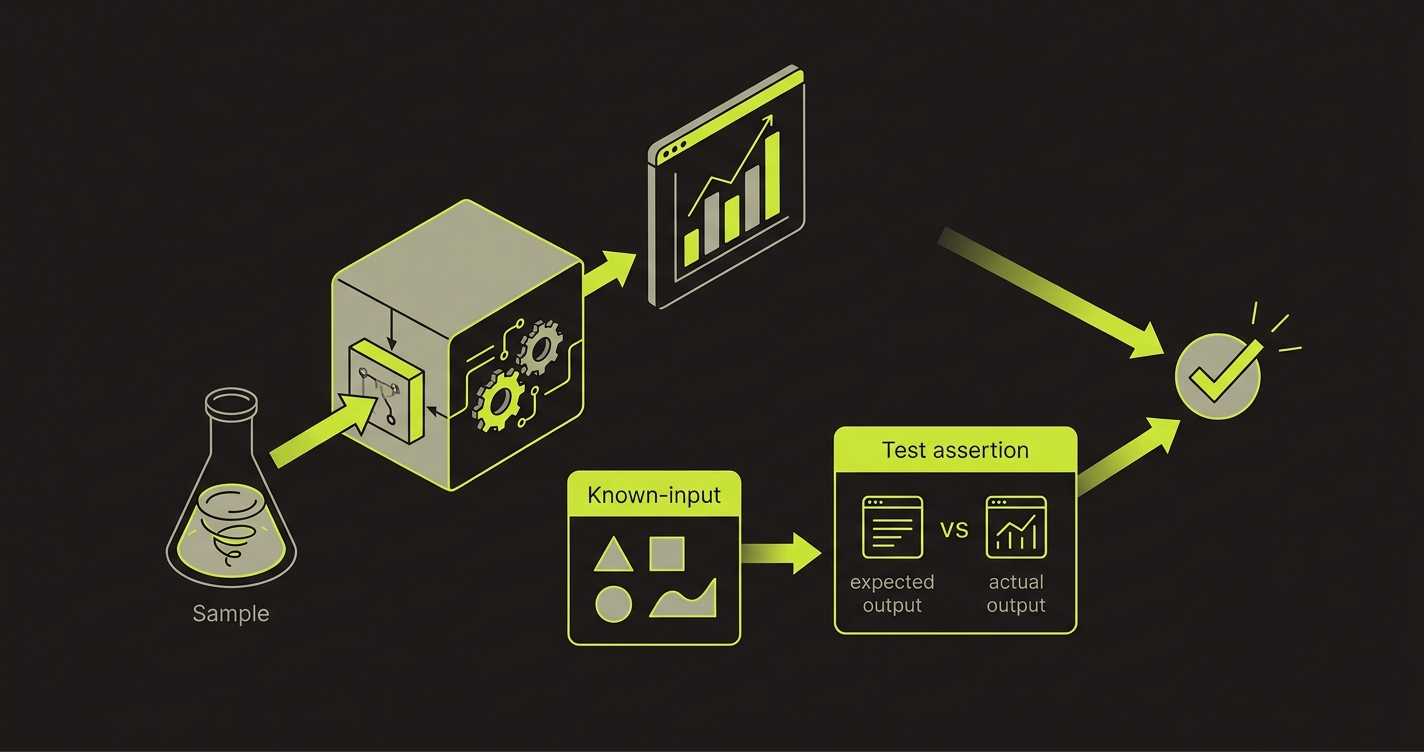

});The webhook schema assertion at the end is the piece most teams miss. The enterprise customer's BI system is parsing your webhook payload format. If you add or rename a field, their parser may fail. That's a downstream system break that looks like "your BI dashboard stopped updating" to their team, not "your vendor shipped a breaking change." They spend a day debugging their own system before tracing it back to your format change.

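The assertion `expect(Object.keys(webhookData.payload)).toEqual(expect.arrayContaining([...]))` checks that every expected field is still present in every payload. Adding new fields passes; removing or renaming an existing field fails the test. That is the right default for a tolerant parser. If the customer's parser rejects unknown fields, assert the exact key list instead so that additions fail too.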
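The same rule is useful outside the browser test, for example in a unit test for the code that builds the payload. A minimal sketch; `classifySchemaChange` is a hypothetical helper (not a Playwright API) that compares top-level field names only, with a `strict` flag for customers whose parsers reject unknown fields.

```typescript
interface SchemaChange {
  missing: string[]; // fields the customer expects that the payload no longer has
  added: string[];   // new fields: safe for tolerant parsers, risky for strict ones
  breaking: boolean;
}

// Compares the top-level fields the customer's system expects with the fields
// an actual payload contains.
function classifySchemaChange(
  expected: readonly string[],
  payload: Record<string, unknown>,
  strict = false,
): SchemaChange {
  const actual = Object.keys(payload);
  const actualSet = new Set(actual);
  const expectedSet = new Set(expected);
  const missing = expected.filter((field) => !actualSet.has(field));
  const added = actual.filter((field) => !expectedSet.has(field));
  // Removing or renaming a field always breaks; adding one only breaks strict parsers
  const breaking = missing.length > 0 || (strict && added.length > 0);
  return { missing, added, breaking };
}
```

A rename shows up as one missing and one added field, for example renaming `outcome` to `result` reports `missing: ['outcome']` and `added: ['result']`, which is exactly the change the customer's parser will trip over.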

The IT Security Stakeholder Is Watching Your Error Rates

Enterprise IT teams monitor the vendors they've onboarded. They watch your API error rates, your availability, and your response times. Not because they're hostile to you, but because it's their job to maintain uptime for the tools their organization uses.

If your error rate spikes after a deploy, their monitoring may flag it before your own alerting does. They'll open an internal incident ticket, and that ticket enters their vendor management system. If incidents cross certain thresholds, the system can escalate automatically to procurement for vendor review.

This is not a theoretical risk. Enterprise security and IT teams genuinely run this process. A startup whose pilot was paused for "vendor stability review" after three 5xx error spikes in one week knows how real it is.
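Your own alerting should catch a post-deploy spike before the customer's IT team does. A minimal sketch of that comparison, assuming you can export per-request timestamps and status codes from your logs; the `RequestRecord` shape, the 30-minute windows, and the 2x and 1% thresholds are illustrative assumptions, not recommended values.

```typescript
interface RequestRecord {
  timestamp: number; // milliseconds since epoch
  status: number;    // HTTP status code
}

// Fraction of requests in [start, end) that returned a 5xx status.
function errorRate(records: RequestRecord[], start: number, end: number): number {
  const inWindow = records.filter((r) => r.timestamp >= start && r.timestamp < end);
  if (inWindow.length === 0) return 0;
  const errors = inWindow.filter((r) => r.status >= 500 && r.status <= 599).length;
  return errors / inWindow.length;
}

// Flags a deploy when the 5xx rate after it is above an absolute floor AND at
// least `ratio` times the rate before it. Window and thresholds are assumptions.
function deploySpiked(
  records: RequestRecord[],
  deployAt: number,
  windowMs = 30 * 60 * 1000,
  ratio = 2,
  floor = 0.01,
): boolean {
  const before = errorRate(records, deployAt - windowMs, deployAt);
  const after = errorRate(records, deployAt, deployAt + windowMs);
  return after >= floor && after >= before * ratio;
}
```

The absolute floor keeps a jump from 0.01% to 0.03% from paging anyone, while the ratio keeps a service with a steady, known error rate from alerting on every deploy.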

The practical implication: your testing coverage needs to include the API layer, not just the UI layer. Endpoints that the enterprise customer's systems call programmatically need the same CI coverage as the UI flows the users walk. A broken webhook endpoint or a broken API response format is an IT security incident, not just a bug report.

The Pear Network Overlap Between Buyers and Investors

Pear VC is deeply connected to the Stanford engineering community and the Silicon Valley enterprise buyer community. The people in Pear's investor network know the people who are piloting your product. Sometimes they're the same person.

This means your pilot customers may appear in two contexts: as a customer reference when investors do diligence on your round, and as a network contact who investors talk to informally about the enterprise software market. In both contexts, their experience with your product is relevant.

The favorable version of this looks like: an investor asks a contact in their network about your space, and that contact says "oh, we're piloting [your product] and it's actually quite good." That's an unsolicited validation that carries significant weight.

The unfavorable version looks like: an investor does diligence on your round and calls the enterprise pilot customer, who says "the product is interesting but it had some reliability issues that we're still working through." That single comment shifts the investor's perception of your engineering quality and your team's ability to serve enterprise customers.

The 48-72 hour stability window before Pear Demo Day is therefore protecting two things simultaneously: the enterprise pilot's experience and the diligence narrative. Both require the same underlying condition: a product that has been stable for long enough that the pilot customer's overall impression is "this works."

The Pear Demo Day Specific Preparation

Pear Demo Day has a specific format that creates a particular vulnerability. It's attended by enterprise buyers and investors together, which means the networking after the presentations can immediately turn into product evaluation conversations. Someone at your Demo Day presentation might walk up to you afterward and say "we have a problem like what you're solving, can we try it right now?"

That "right now" conversation is a live demo with an enterprise buyer who has just seen your pitch, is potentially a Pear network member, and may be connected to Pear's investment partners. The stakes are high and the timing is uncontrolled.

Prepare for this by having a clean demo environment that is separate from your production environment and separate from any active pilot. This demo environment should be freshly seeded with realistic data, have no known bugs outstanding, and be verified with a full test run the morning of Demo Day.
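"Freshly seeded" is easiest to trust when seeding is deterministic: the same seed produces the same demo data every time, so the morning test run verifies exactly what a buyer will see. A minimal sketch using a seeded linear congruential generator (the Numerical Recipes constants) instead of `Math.random()`; the `DemoOrder` shape and its values are hypothetical placeholders for your own domain.

```typescript
// Seeded LCG. Weak for statistics or security, but fine for reproducible demo data.
function seededRandom(seed: number): () => number {
  let state = seed >>> 0;
  return () => {
    // state * 1664525 stays below 2^53, so the multiply is exact before >>> 0
    state = (state * 1664525 + 1013904223) >>> 0;
    return state / 4294967296; // in [0, 1)
  };
}

interface DemoOrder {
  reference: string;
  priority: 'standard' | 'expedited';
  weightKg: number;
}

// Same seed in, same orders out.
function seedDemoOrders(seed: number, count: number): DemoOrder[] {
  const rand = seededRandom(seed);
  const orders: DemoOrder[] = [];
  for (let i = 1; i <= count; i++) {
    orders.push({
      reference: 'DEMO-ORD-' + ('00' + i).slice(-3), // zero-padded up to 999
      priority: rand() < 0.8 ? 'standard' : 'expedited',
      weightKg: 100 + Math.floor(rand() * 901), // 100 to 1000 kg inclusive
    });
  }
  return orders;
}
```

Checking the seed into the repository alongside the seeding script means a reset before the event restores the exact dataset the team rehearsed with.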

Run your automated test suite against the demo environment before Demo Day starts. Walk through the critical flows manually with fresh eyes. Note anything that looks slightly off, even if it's not technically a bug. Fix it.
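Running the same suite against the demo environment is simplest when the target environment is a configuration choice rather than a separate test file. A sketch of a Playwright config, assuming hypothetical `STAGING_URL` and `DEMO_URL` environment variables and placeholder hostnames; `defineConfig`, `projects`, and `use.baseURL` are standard Playwright options, and the relative `page.goto` paths in the pilot test resolve against `baseURL`.

```typescript
// playwright.config.ts: one suite, several target environments.
// Environment variable names and fallback URLs are hypothetical.
import { defineConfig } from '@playwright/test';

export default defineConfig({
  projects: [
    {
      name: 'staging',
      use: { baseURL: process.env.STAGING_URL ?? 'https://staging.example.com' },
    },
    {
      name: 'demo',
      use: { baseURL: process.env.DEMO_URL ?? 'https://demo.example.com' },
    },
  ],
});
```

On Demo Day morning, `npx playwright test --project=demo` runs the full suite against the demo environment only, without touching staging or the active pilot.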

The demo environment version of your product is the one investors and buyers see first. It needs to be the best version of your product.

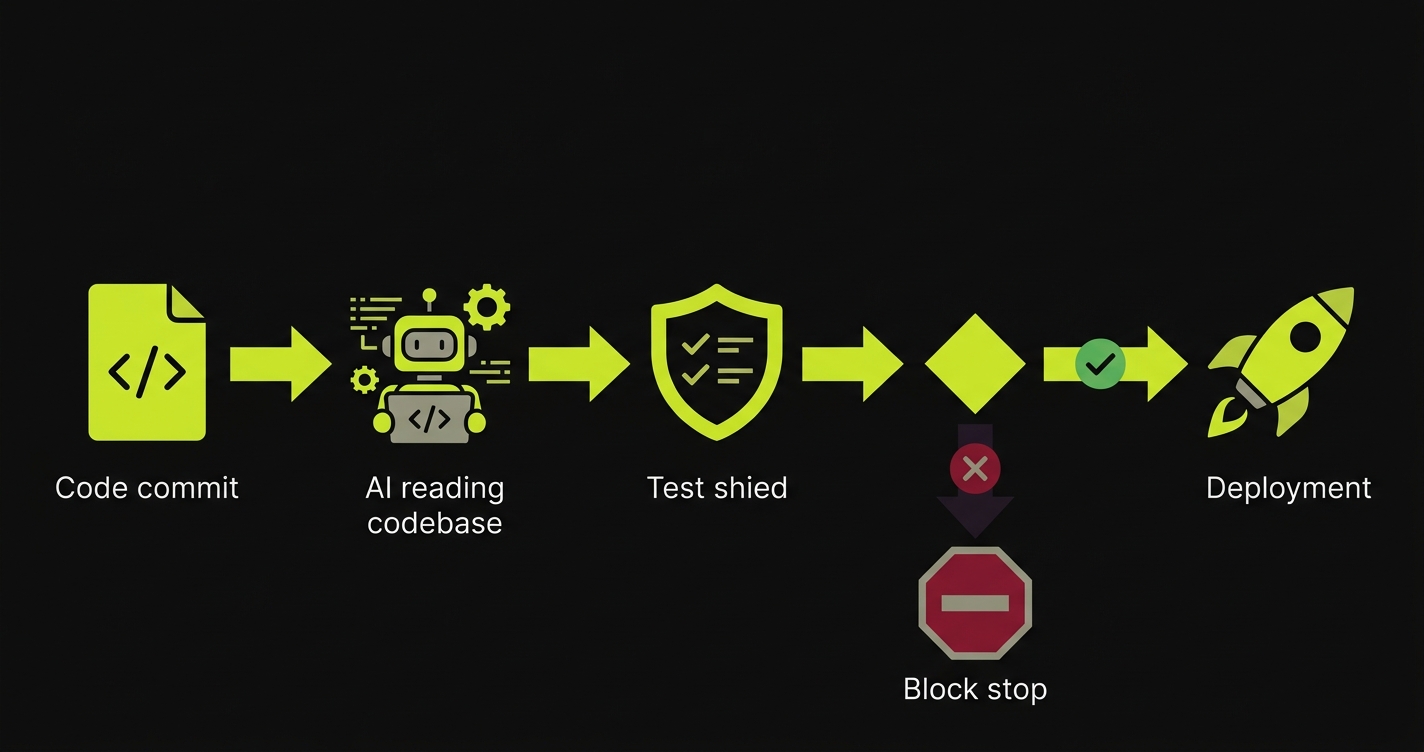

How Autonoma Fits the Pear Enterprise Context

Enterprise pilots require more coverage than consumer pilots for two reasons: the consequences of failure are higher (multi-stakeholder veto risk, IT security escalation), and the surfaces that need coverage are broader (UI layer, API layer, webhook/integration layer).

Autonoma generates E2E test coverage across all three layers from your codebase. It identifies your API routes, your UI workflows, and your integration endpoints, and generates tests that cover each. For a Pear-stage enterprise team of two or three engineers, Autonoma replaces the need for a dedicated QA function during the critical pilot period.

The webhook schema assertion in the example above is something you write once and maintain. Autonoma handles the UI and API functional coverage automatically. Together, they give you the complete picture: "our enterprise pilot is protected from regressions in the UI, the API, and the integration layer."

The Practical Checklist for Pear Demo Day Prep

Eight weeks before Pear Demo Day, with an enterprise pilot either closed or in closing:

Weeks eight through six: establish automated CI coverage on the three critical moments for your enterprise pilot: auth, the approved workflow, and the output and integration layer. Run Autonoma to generate the UI and API layer coverage. Write the webhook schema tests manually.

Week six: map every stakeholder in the enterprise pilot. For each stakeholder, identify what they interact with: the end users and their UI flows, the IT team and the API and SSO integration, the business sponsor and the reporting and output layer. Verify you have test coverage for each stakeholder's surface.

Week four: contact your business sponsor and ask about their internal review schedule. Put the date of their next internal pilot review presentation on your calendar, and mark the point 72 hours before it as the start of a deploy freeze.
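A freeze rule is easier to honor when CI enforces it rather than memory. A minimal sketch of a deploy gate, assuming you keep protected dates (the sponsor's review meeting, Demo Day) in a list your pipeline can read; `ProtectedEvent`, `blockingEvent`, and the choice to hold the freeze until the event ends are assumptions.

```typescript
const FREEZE_MS = 72 * 60 * 60 * 1000; // 72 hours

interface ProtectedEvent {
  name: string;
  start: Date; // when the review meeting or Demo Day begins
  end: Date;   // when it is over and deploys may resume
}

// Returns the event that blocks a deploy at `now`, or null if deploying is allowed.
// A deploy is blocked from 72 hours before an event starts until the event ends.
function blockingEvent(now: Date, events: ProtectedEvent[]): ProtectedEvent | null {
  const t = now.getTime();
  for (const event of events) {
    const freezeStart = event.start.getTime() - FREEZE_MS;
    if (t >= freezeStart && t < event.end.getTime()) {
      return event;
    }
  }
  return null;
}
```

A pipeline step can call `blockingEvent(new Date(), events)` and fail when it returns an event, which turns the freeze from a calendar reminder into a gate that also names the reason.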

Week two: no new features that touch the enterprise pilot's approved workflow. Fixes only. Every fix goes through the CI suite before merge.

Demo Day week: last deploy is Monday or Tuesday. From Wednesday through Demo Day, the product is locked. Run the full test suite against the demo environment Thursday morning. Walk through the demo manually with fresh eyes. Show up on Demo Day knowing exactly what the product does and that it does it reliably.

Provide test coverage and reliability evidence to the enterprise IT team proactively. Create a shared document or a read-only dashboard showing your test coverage summary, your CI pass rate over the past 30 days, and your production error rate trend. Enterprise IT teams appreciate this because it means they don't have to ask. More importantly, sharing this proactively signals engineering maturity and gives your champion an artifact they can show to their IT security committee when they're advocating for extending the pilot.
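The 30-day CI pass rate is simple to compute from exported run history. A minimal sketch, assuming runs are available as records with a finish time and a conclusion (the `CiRun` shape and `passRate` are hypothetical); excluding cancelled runs from the denominator is a choice worth stating on the dashboard itself.

```typescript
interface CiRun {
  finishedAt: Date;
  conclusion: 'success' | 'failure' | 'cancelled';
}

const DAY_MS = 24 * 60 * 60 * 1000;

// Share of completed runs in the trailing window that passed, or null when
// there were none. Cancelled runs say nothing about the code, so they are skipped.
function passRate(runs: CiRun[], now: Date, days = 30): number | null {
  const end = now.getTime();
  const since = end - days * DAY_MS;
  const counted = runs.filter((run) => {
    const t = run.finishedAt.getTime();
    return t > since && t <= end && run.conclusion !== 'cancelled';
  });
  if (counted.length === 0) return null;
  const passed = counted.filter((run) => run.conclusion === 'success').length;
  return passed / counted.length;
}
```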

Write a contract test. A contract test verifies that your integration produces output that conforms to the schema the enterprise customer's system expects, without requiring their actual system to be available in CI. Define the expected payload schema as a fixture, and assert that every execution of the integration produces output matching that schema. When the enterprise customer's system changes and they need you to update your output format, the contract test forces that change to be intentional rather than accidental.
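A minimal sketch of the fixture-plus-assertion pattern, written without a schema library so it stays self-contained; JSON Schema with a validator such as Ajv is a common alternative. The field names reuse the webhook example above, while the `FieldType` vocabulary, `BI_WEBHOOK_CONTRACT`, and `contractViolations` are hypothetical.

```typescript
type FieldType = 'string' | 'number' | 'boolean' | 'object' | 'timestamp';

// The fixture: the payload contract agreed with the customer's BI team.
// Editing this object should be a deliberate, reviewed change.
const BI_WEBHOOK_CONTRACT: Record<string, FieldType> = {
  order_reference: 'string',
  completion_timestamp: 'timestamp',
  outcome: 'string',
  metadata: 'object',
};

function matchesType(value: unknown, type: FieldType): boolean {
  switch (type) {
    case 'timestamp':
      // Loose check: any string Date.parse accepts, not strict ISO 8601
      return typeof value === 'string' && !Number.isNaN(Date.parse(value));
    case 'object':
      return typeof value === 'object' && value !== null && !Array.isArray(value);
    default:
      return typeof value === type;
  }
}

// Returns human-readable violations; an empty list means the payload conforms.
function contractViolations(
  payload: Record<string, unknown>,
  contract: Record<string, FieldType>,
): string[] {
  const violations: string[] = [];
  for (const field of Object.keys(contract)) {
    if (!(field in payload)) {
      violations.push(`missing field: ${field}`);
    } else if (!matchesType(payload[field], contract[field])) {
      violations.push(`wrong type for ${field}: expected ${contract[field]}`);
    }
  }
  return violations;
}
```

Running `contractViolations` on every payload your integration tests produce, and asserting an empty list, is the "every execution matches the schema" check; when the customer asks for a format change, the diff to `BI_WEBHOOK_CONTRACT` is the record that the change was intentional.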

Have a direct conversation with your business champion two weeks before Demo Day. Tell them: 'We're presenting at Pear Demo Day and some investors may reach out to you for a reference check. We want to make sure your experience has been positive enough to feel confident recommending us.' This serves two purposes. It gives you honest feedback about any remaining friction in their experience, and it signals to the champion that you take their perception seriously. If there are outstanding issues, this conversation surfaces them with enough time to fix them.

Define the pilot scope in writing, specifically in terms of which features and workflows are in scope. This document serves three purposes: it aligns expectations with the enterprise customer, it limits the surface area that needs to be stable during the pilot, and it gives you a defensible boundary if something breaks in an out-of-scope feature. 'That feature was outside the agreed pilot scope and we're actively working on it' is a much cleaner response to an IT security inquiry than 'that feature exists but isn't ready yet.'

Create a data tier in your test infrastructure. Your automated tests should run against synthetic data that mirrors the structure of the enterprise customer's real data, not the real data itself. Run your CI tests with synthetic data and keep the real data isolated to the production environment. For the integration layer tests that need to validate against real schemas, use data that's structurally equivalent but doesn't contain personally identifiable or commercially sensitive information. This is also a selling point: telling an enterprise IT team that your test pipeline never touches production data is a security audit answer they like to hear.
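One way to get structurally equivalent data is to generate it from a description of the schema rather than from real records, so no production value ever enters CI. A minimal sketch; `SHIPMENT_SCHEMA` and `syntheticRecord` are hypothetical, the schema covers flat records only, and the values are deliberately obviously fake.

```typescript
type FieldType = 'string' | 'number' | 'boolean' | 'timestamp';

// Hypothetical schema for one record in the customer's shipment data.
// Only field names and types are recorded here; no real values.
const SHIPMENT_SCHEMA: Record<string, FieldType> = {
  shipment_id: 'string',
  consignee_name: 'string',
  weight_kg: 'number',
  hazardous: 'boolean',
  shipped_at: 'timestamp',
};

// Builds the i-th synthetic record: same field names and value types as the
// schema, with values that cannot be mistaken for real customer data.
function syntheticRecord(
  schema: Record<string, FieldType>,
  i: number,
): Record<string, string | number | boolean> {
  const record: Record<string, string | number | boolean> = {};
  const base = Date.UTC(2024, 0, 1);
  for (const field of Object.keys(schema)) {
    switch (schema[field]) {
      case 'string':
        record[field] = `synthetic-${field}-${i}`;
        break;
      case 'number':
        record[field] = i * 10 + 1;
        break;
      case 'boolean':
        record[field] = i % 2 === 0;
        break;
      case 'timestamp':
        record[field] = new Date(base + i * 3600 * 1000).toISOString();
        break;
    }
  }
  return record;
}
```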

The main difference between Pear Demo Day and other demo days is the buyer-investor overlap and the concentration of the Stanford network. Many people at Pear Demo Day have both commercial relationships and investment relationships within the same network. That means a single poor product experience can propagate through both your sales pipeline and your fundraising pipeline simultaneously. The demo environment you present at Pear Demo Day should be treated as a live product evaluation, not just a pitch prop. Have the real product working, not a mockup, and have someone monitoring it in real time during the event.