TLDR: Platanus is the closest thing Chile has to YC. That means tight community, fast information travel, and pilot customers who are within 2-3 degrees of your investors. A bug-filled pilot in Santiago's enterprise community gets talked about at the same dinners your investors attend. This post covers the testing framework that keeps your critical pilot paths stable while you ship the features that get you to Demo Day with a clean story.

Why Platanus is the accelerator where reputation travels fastest

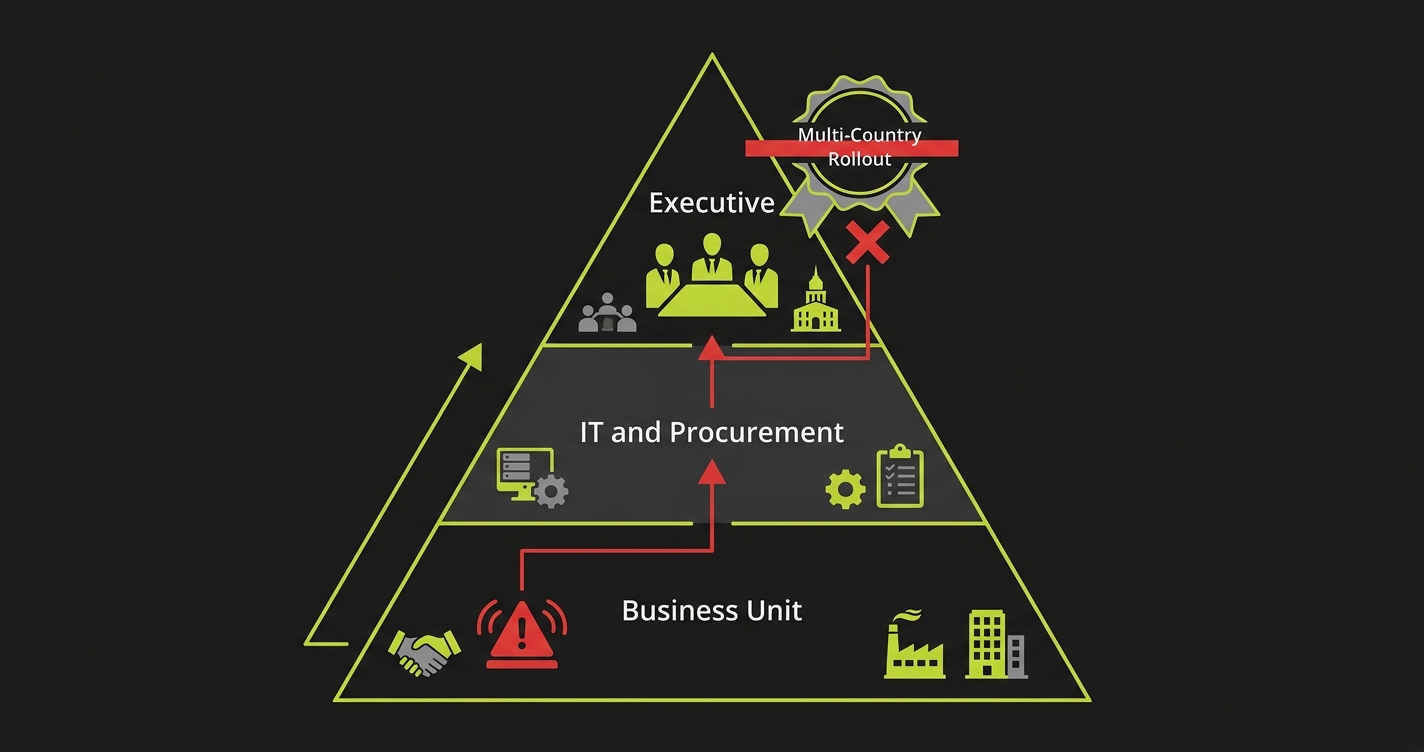

Platanus has built something rare: a tight-knit founder community in Santiago that is genuinely connected to the city's enterprise buyer ecosystem. The people who run procurement at Falabella, at Codelco, at Soprole, at the major Chilean banks, are in the same WhatsApp groups, attend the same industry dinners, and play golf with the same people as the angels and operators who attend Platanus Demo Day.

This is the accelerator's superpower. It means that an introduction from Platanus to a Chilean enterprise carries genuine social weight. Your champion inside that company is often a person who knows the Platanus founders personally, or knows someone who does.

But this tight network is also the thing that makes a bug incident uniquely costly. In a normal startup context, a bug in a pilot is between you and the customer. In the Platanus ecosystem, a bug in a pilot is between you, your customer, your customer's VP who talks to three Platanus alumni, two of your potential follow-on investors, and three other founders in your batch who are trying to sell into the same company.

This is not hypothetical. Santiago's startup ecosystem is small. Information travels. Your reputation is being built in real time, and it is being built in a community where everyone is connected to everyone.

Therefore: the stakes of a bug in a Platanus pilot are not just about the deal. They are about your reputation in the community you need to operate in for the next 5 years.

The Platanus batch timeline and what it compresses

Platanus runs batches modeled on the YC structure. You enter with an idea or early product, you get capital, you get community, you get mentors, and at the end of the program you present at Demo Day to a room of investors and operators.

The timeline is compressed by design. That compression is the point. Platanus wants to see how fast you can go from idea to traction. Demo Day is the deadline that forces prioritization. You have to decide what to build, what to sell, and who to sell it to, with a hard date on the calendar.

For most Platanus founders, the last 6 weeks of the batch look like this: you have 2-3 enterprise pilots running with Chilean companies, usually mid-to-large size (retail, mining, agriculture, financial services). You are still shipping features to close those pilots. Your engineer is implementing something the customer asked for in week 3 of the pilot. You are also trying to coordinate Demo Day logistics, prepare your investor pitch, and keep up with the weekly Platanus founder sessions.

In this window, the biggest risk to your Demo Day story is not the pitch. It is the pilot. Specifically: you ship a feature, it breaks something in the critical path, and your enterprise customer has a bad experience in the week before Demo Day. You find out about the bug at 6pm on a Thursday. You fix it by 10pm. But your customer already told their manager, who mentioned it to a Platanus alumni who knows your investors.

The fix happened too late. The story already traveled.

What Chilean enterprise customers are like as buyers

Chilean enterprise buyers, particularly in retail, mining, and agriculture, are conservative. They have seen many technology vendors come through. They have a healthy skepticism of startups. When they agree to a pilot, they are taking a calculated risk, and they are watching carefully for signs that the risk was the wrong call.

The bar they hold you to is not the YC "move fast and break things" standard. It is the standard they would hold a larger, more established vendor to. They expect the product to work. They expect it to be stable. They expect that when they show it to their team, it will not embarrass them.

This is especially true for the internal champion who got you in the door. That person told their colleagues that this startup was worth piloting. They used their own credibility to advocate for you. When a bug surfaces, their first concern is not your product. It is whether they made a mistake vouching for you.

If you protect that champion's credibility, they will be your strongest internal advocate for closing the deal and expanding. If you damage it with a stability incident, they will become risk-averse and start adding friction to the process.

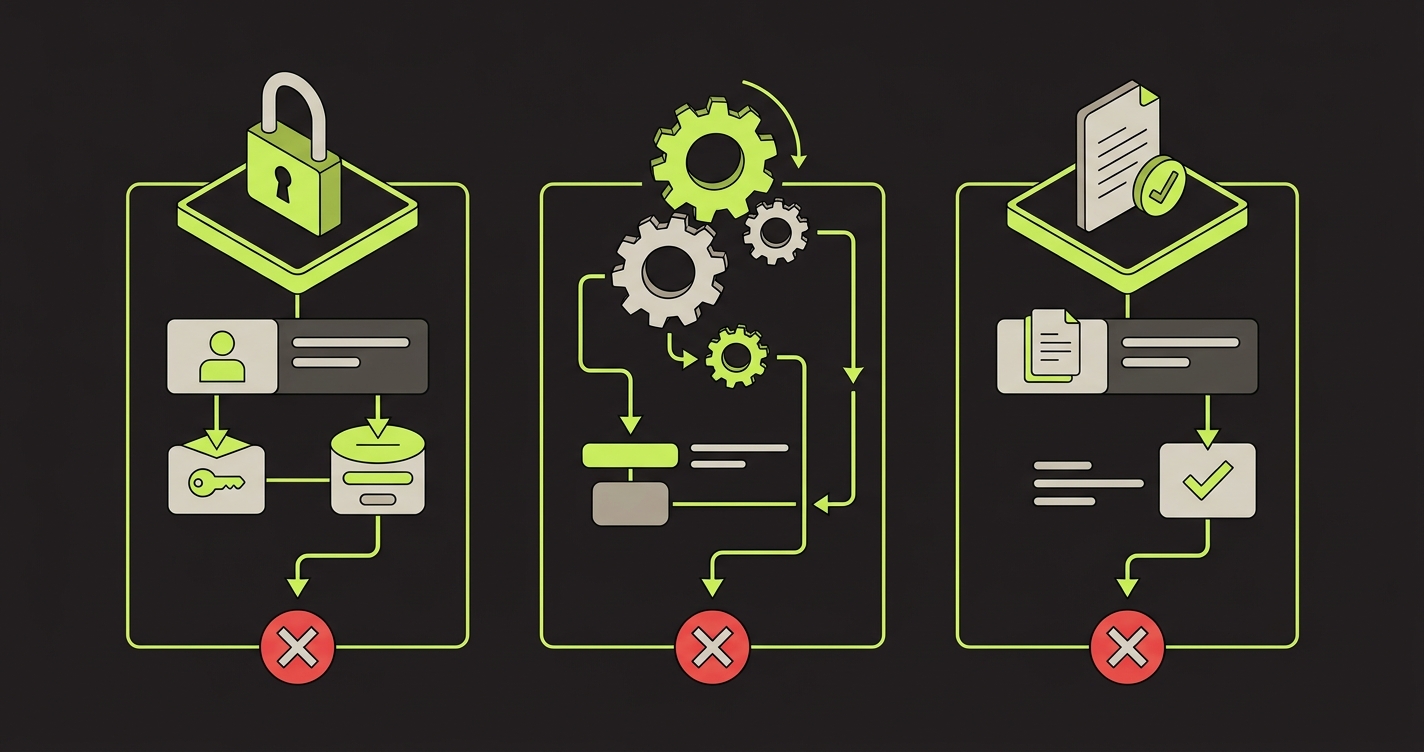

The 3 moments in a Platanus pilot that cannot break

In a conservative enterprise environment, these three paths are where customer trust is made or lost.

Login and access. Conservative enterprise customers often have employees who are not tech-forward. When a login breaks, they do not try different approaches. They stop, they send an email to IT, and they send a message to your champion. The message to your champion is: "the system doesn't work." Not "there's an issue with the authentication flow." Just: "it doesn't work." That phrase has exactly the connotation you think it does.

The primary workflow their team was trained on. When you onboard a Chilean enterprise customer, you train their team on a specific workflow. You show them exactly what to click and in what order. That workflow is now fixed in their mental model of your product. If a subsequent deploy changes the UI, renames a button, moves an element, or adds a required field that wasn't there before, the trained users are confused. They try what they were taught. It doesn't work the same way. They conclude the product changed on them without notice.

The output their manager sees. In Chilean enterprises, many buying decisions are made by people who never log into the product. They see the output. A report. A dashboard export. A summary email. If that output is wrong, it reaches a senior decision-maker who was not part of the pilot approval, was not briefed on the product, and has no context for why there might be an error. That person's first exposure to your product is a wrong number on a report. That is a very hard first impression to recover from.

The community reputation calculus

Here's a specific scenario that plays out in Santiago's startup ecosystem more than founders realize.

You're in a Platanus batch. You have a pilot running with a major retailer. Week 5 of the batch, a deploy breaks the export feature. Your customer's team tries to generate the weekly report. It fails. Their manager calls the IT team. IT escalates to your champion. Your champion sends you a message at 9am.

You fix it by noon. You send an apology. Your champion appreciates the responsiveness.

That night, your champion is at a dinner with two of the Platanus founding partners and three other people in Santiago's startup community. Someone asks how the pilot is going. Your champion, who genuinely likes your product and wants the deal to close, says something like: "It's been good overall. Had a bit of a hiccup last week with an export bug, but they fixed it quickly." They are being generous. They are giving you credit for the fast fix.

But what the Platanus people hear is: "there was a stability incident." That phrase goes into their mental model of your company. It will come up when they're talking to potential investors who ask about your traction. It will come up when they're making introductions to other potential customers. It will come up in Demo Day context conversations.

You fixed the bug in 3 hours. But the story took 3 minutes to travel and it will live for months.

The technical setup for protecting Platanus pilots

Here's what the critical path coverage looks like in a typical Platanus-stage company. Assume you're a B2B SaaS tool for retail operations:

// Platanus pilot protection: retail operations dashboard

// Covers the three paths that cannot break

import { test, expect } from '@playwright/test';

test.describe('Retail pilot critical paths', () => {

test('enterprise user login with corporate email domain', async ({ page }) => {

await page.goto('/login');

// Test with the exact domain format the pilot customer uses

await page.fill('[data-testid="email"]', `testuser@${process.env.PILOT_DOMAIN}`);

await page.fill('[data-testid="password"]', process.env.PILOT_TEST_PASSWORD!);

await page.click('[data-testid="ingresar"]');

// Verify landing on dashboard, not an error page

await expect(page).toHaveURL('/panel');

await expect(page.locator('[data-testid="bienvenida"]')).toBeVisible();

});

test('trained workflow: create and approve inventory adjustment', async ({ page }) => {

await page.goto('/panel');

// Follow the exact sequence from onboarding training

await page.click('[data-testid="ajuste-inventario"]');

await page.fill('[data-testid="sku"]', 'SKU-TEST-001');

await page.fill('[data-testid="cantidad"]', '50');

await page.selectOption('[data-testid="motivo"]', 'devolucion');

await page.click('[data-testid="guardar-borrador"]');

await expect(page.locator('[data-testid="estado"]')).toContainText('Borrador');

await page.click('[data-testid="enviar-aprobacion"]');

await expect(page.locator('[data-testid="estado"]')).toContainText('Pendiente de aprobación');

});

test('manager output: weekly summary report export', async ({ page }) => {

await page.goto('/reportes');

await page.click('[data-testid="informe-semanal"]');

// Verify the report data makes sense before export

const totalItems = await page.locator('[data-testid="total-ajustes"]').textContent();

expect(Number(totalItems?.replace(/\./g, ''))).toBeGreaterThan(0);

// Export for manager

const [download] = await Promise.all([

page.waitForEvent('download'),

page.click('[data-testid="exportar-excel"]'),

]);

expect(download.suggestedFilename()).toMatch(/resumen-semanal.*\.xlsx/);

});

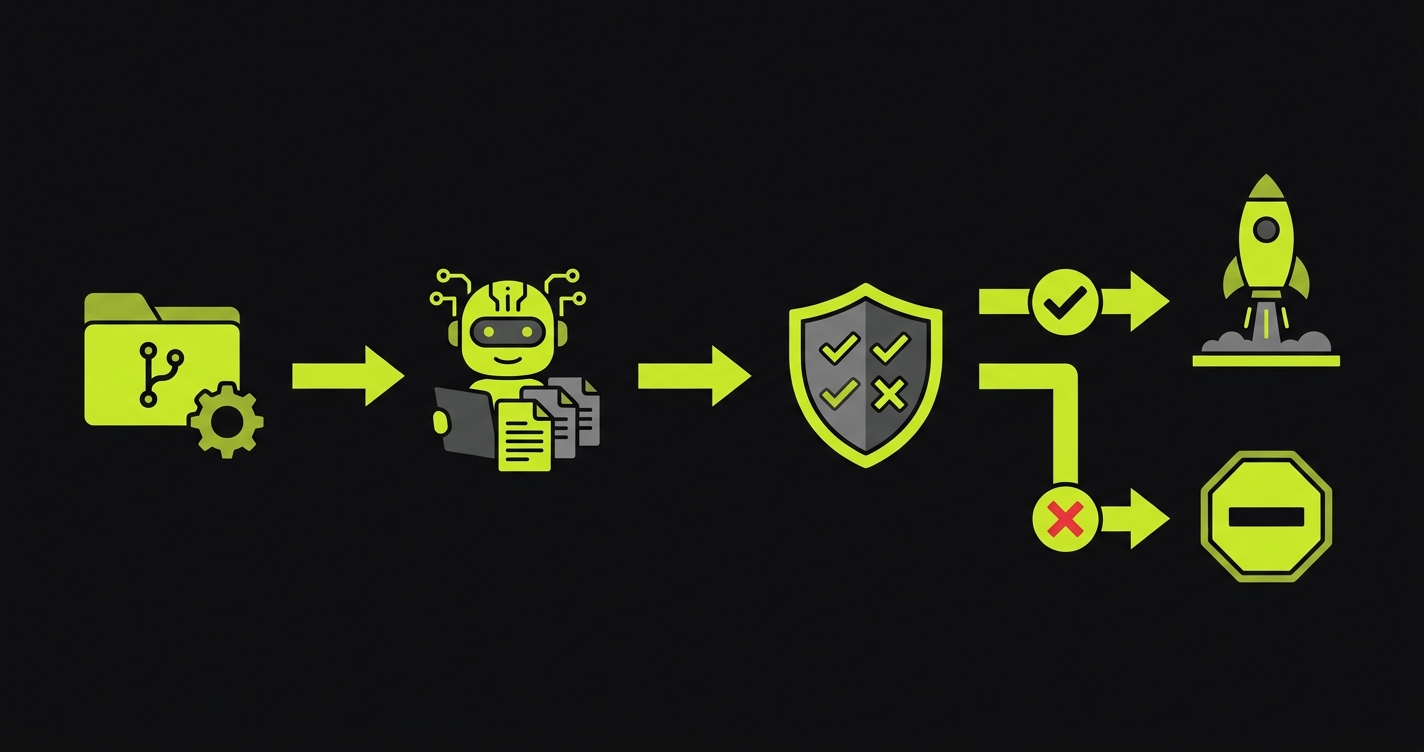

});Autonoma generates this from your actual codebase. The field names in Spanish, the specific workflow steps, the export format, all of it comes from reading your components, your API routes, and your route handlers. You review the generated tests, confirm they match the pilot customer's actual workflow, and connect them to CI. From that point, every deploy runs these tests before reaching production.

The deploy-freeze protocol for Santiago pilots

Given the community dynamics in Santiago, you need a stricter deploy discipline than most YC-stage companies maintain.

Here is the protocol that works:

Define three categories of deploys. Green: no changes to any code path touched by your pilot customer's critical flows. Yellow: changes to adjacent code (new features, UI changes in areas the pilot customer doesn't use). Red: changes to any code path in the critical flows.

Green deploys: ship anytime, test suite runs in CI as usual.

Yellow deploys: ship after the test suite passes, but notify your champion that you've deployed. "Just deployed a small update, everything on your side should be unaffected, let me know if you see anything different."

Red deploys: require the test suite to pass, require a manual review by the CTO, and get scheduled for off-hours in your customer's timezone. Notify your champion in advance: "We're deploying an update to the [X] workflow tonight at 11pm. This is the feature you asked for in week 3. We've tested it thoroughly and everything should be smooth, but I'll be available if you see anything."

That last communication is important. It sets the expectation, it demonstrates that you're thoughtful about their workflow, and it makes you the first person they contact if something unexpected happens, rather than the last person to find out.

How this plays in investor diligence

Platanus Demo Day is specifically modeled on YC Demo Day. The investors in the room include the same types of funds that attend YC Demo Day: early-stage VC, angels, some growth-stage funds. They are evaluating whether you have product-market fit in Chile and whether you can scale.

Your reference customers are a primary data source for that evaluation. When an investor is excited about your Demo Day pitch and starts due diligence, they will ask for customer references. They will call your pilot customers. The questions they ask will include: "Is the product reliable?" and "Have there been any significant issues during the pilot?"

A pilot customer who answers those questions with "it's been solid, we haven't had any stability issues" is a strong reference. A pilot customer who answers with "we've had some bugs, but the team fixes things quickly" is a qualified reference. The investor hears the qualification and prices it into their offer. Lower valuation. Longer timeline. More conditions.

The difference between those two outcomes is whether your critical paths were stable for the duration of the pilot.

| Timeline | Action | Risk level |

|---|---|---|

| 6 weeks out | Map critical paths, generate coverage with Autonoma | Low (setup only) |

| 5 weeks out | CI pipeline running, first deploy with full test gate | Medium (any issues caught here) |

| Weeks 3-4 | Stability phase begins, no Red deploys without CTO sign-off | High (customer using independently) |

| 48 hours before first solo session | Deploy freeze unless all tests pass | Critical |

| 2 weeks out | No Red deploys at all. Feature freeze on pilot paths | Critical |

| Demo Day | Pilot story is clean. Reference customers are strong. | Done |

FAQ

Move fast on features. Move carefully on critical paths. This is not a contradiction. You can ship 10 new features a week and still maintain stability on the 3 flows your pilot customer depends on. The key is knowing exactly which code changes touch which paths. Autonoma's test coverage is what gives you that visibility. If a change touches a critical path, the test suite tells you before the customer does.

Conservative IT means you need to be especially careful about changes that are visible to their users. The test suite should run against a staging environment that mirrors their production setup. When you have a critical fix that needs to go out, communicate the change to your champion and IT contact before it deploys, not after. In Chile's mining sector, proactive communication is interpreted as professionalism, not weakness.

Yes, it changes in both directions. On one hand, an alumni founder is more likely to be forgiving of early-stage bugs and understand the context. On the other hand, they are more likely to discuss the pilot in depth with the Platanus community because it's their peer relationship. A strong pilot with an alumni company becomes a very strong public reference. A buggy pilot with an alumni company is an awkward conversation at every community event. Treat it with the same care as any other pilot.

Focus the test coverage on the stable parts. Even in a rapidly evolving product, some parts stabilize before others. Auth almost always stabilizes early. The primary value-delivering action stabilizes once the customer has validated it. Test those. For the parts still in flux, use feature flags to isolate the experimental code from the stable critical paths. The test suite covers the stable paths and ignores the flagged experimental ones.

One day of focused work with Autonoma. Connect your repo, walk through the three critical paths with your CTO, run Autonoma's generation, review the output, connect to CI. The whole process is a day of setup, not a week. The 5 weeks before Demo Day are then protected automatically. Every deploy is gated on those critical paths passing. You don't have to think about it again unless a test fails.

Absolutely. In fact, the stakes may be higher. If a free trial customer has a bug incident, they have no sunk cost incentive to continue. They just stop using the product. The path from free trial to paying customer in a conservative Chilean enterprise requires a clean, uninterrupted experience from the first session. A bug in week 2 of a free trial often just ends the trial. Apply the same critical path framework even without a paid contract.

How Autonoma Protects Your Reputation in Santiago

In Santiago's startup ecosystem, a bug reaches investors the same day it happens. The community is that tight. Autonoma is what prevents the bug from happening at all. Not by slowing you down, but by catching it in CI before it ever ships to your pilot customer.

Autonoma generates E2E tests for the three paths your Chilean enterprise customer walks, automatically from your codebase. It reads your components, your API routes, and your route handlers and produces coverage for login and access, the primary workflow their team was trained on, and the output their manager sees. The Spanish field names, the specific UI sequence from onboarding, the export format: all of it is picked up directly from your code. There is no test code to write during the final 6-week sprint. You connect the repo, review the generated tests, and connect them to CI.

From that point, every deploy is tested before it ships. The Tuesday afternoon feature does not become the Thursday bug that your investor hears about on Friday. If something breaks a critical path, CI flags it and the deploy does not go out. Your champion never has to send that message at 9am. The dinner conversation that night is just a dinner conversation.

The tests stay current as your codebase changes. When you ship the feature your customer asked for in week 3, Autonoma's coverage updates to reflect the new state of the codebase. There is no maintenance overhead when you're shipping fast to close the pilot. The CI gate just keeps running.

Santiago's startup community talks about everything. Make sure what they say about your product is "it works flawlessly."