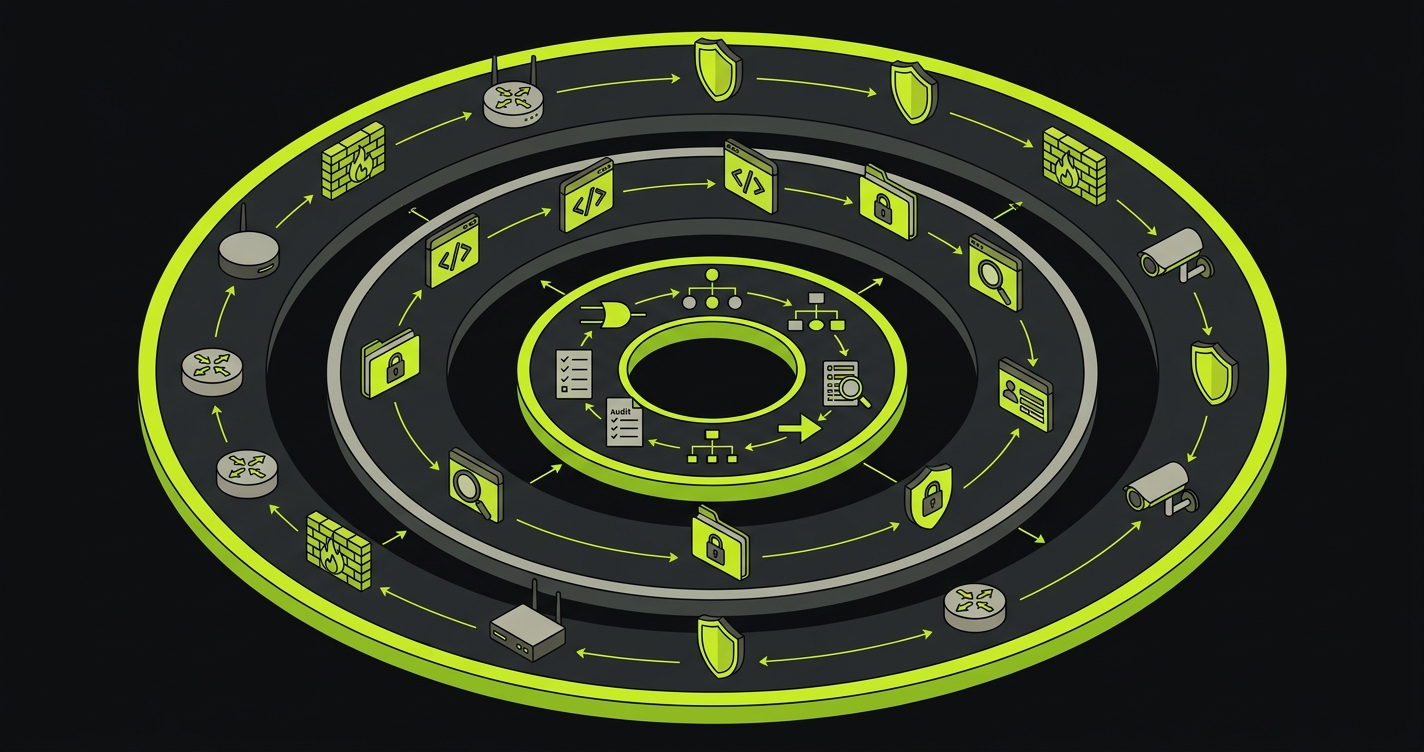

Web application security testing is the discipline of finding and validating security vulnerabilities in web applications before attackers do. It spans four main layers: SAST (static code analysis), DAST (dynamic runtime scanning), penetration testing (manual adversarial research), and behavioral E2E testing (validating that your application enforces its own business rules under real conditions). Most engineering teams run the first two and stop there. The third and fourth layers are what enterprise buyers, SOC 2 auditors, and PCI assessors increasingly demand evidence for - because code scanners cannot prove that access controls, payment flows, and multi-tenant isolation actually work.

The engineering teams that move fastest through enterprise security reviews are not the ones with the most expensive scanners. They are the ones who walk into a review with continuous functional evidence, not just scan output. Scan output says your code was written without known vulnerability patterns. Functional evidence says your application's security controls work correctly under real user conditions. Enterprise security architects can tell the difference immediately.

Building that second category of evidence is what separates an AppSec testing program that satisfies a checklist from one that satisfies a security architect. It requires one additional layer beyond SAST and DAST, and that layer is where most Series A and B teams have nothing deployed.

This guide covers how to build the complete stack, what each layer actually catches, and how to sequence it in CI/CD without making your pipeline unusably slow.

The Four Layers of Application Security Testing

Security testing for web applications is not a single tool or a single scan. It is a stack of complementary methods, each catching a distinct class of vulnerability that the others cannot see. Understanding what each layer actually does - and where each one stops - is the prerequisite for building a program that can survive real scrutiny.

Layer 1: SAST

Static Application Security Testing reads your source code, bytecode, or compiled binaries without running your application. The scanner traverses your abstract syntax tree, follows data flows, and matches against known vulnerability patterns. It catches hardcoded credentials, SQL string concatenation, use of deprecated cryptographic functions, calls to unsafe deserialization methods, and dependency versions with known CVEs.
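To make the pattern matching concrete, here is a minimal TypeScript sketch of the kind of code an injection rule typically flags, next to the form it accepts. The function names, the `Query` shape, and the `$1` placeholder style are assumptions for illustration; no real database driver is involved.

```typescript
// Hypothetical query shape: SQL text plus separately bound parameters.
interface Query {
  text: string;
  values: string[];
}

// Typically flagged by SAST injection rules: user input is concatenated
// into the SQL string, so it becomes part of the statement itself.
function findUserUnsafe(email: string): Query {
  return { text: "SELECT * FROM users WHERE email = '" + email + "'", values: [] };
}

// Accepted form: the SQL text is constant and the input travels as a
// bound parameter (the $1 placeholder style is an assumption here).
function findUserSafe(email: string): Query {
  return { text: "SELECT * FROM users WHERE email = $1", values: [email] };
}

const payload = "x' OR '1'='1";
console.log(findUserUnsafe(payload).text.includes(payload)); // true
console.log(findUserSafe(payload).text.includes(payload)); // false
```

The scanner never runs either function. It only sees that tainted input reaches a string that is later treated as SQL, which is why this class of finding is cheap to detect on every pull request.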

The value proposition is speed. A Semgrep scan on a 100k-line TypeScript codebase takes under two minutes. You run it on every pull request, before any deployment, and get findings while the context is fresh. For OWASP Top 10 categories like A02 (Cryptographic Failures), A03 (Injection), and A06 (Vulnerable Components), SAST delivers genuine value at low cost.

The honest limitation is the false positive rate: 20-40% in production codebases, according to NIST's SATE studies. On a codebase with 400 findings, that is 80 to 160 tickets your developers will triage and suppress. Over time, suppression becomes the default. Developers stop reading findings carefully. SAST is still running. The dashboard is still green. It has stopped doing security work.

SAST also cannot see what your application does at runtime. A server that returns stack traces. A missing Strict-Transport-Security header. A session that does not invalidate on logout. These are not code mistakes - they are runtime behaviors that only appear when the application is live. Read our SAST tools comparison for a breakdown of Semgrep, CodeQL, Checkmarx, and others including false positive rates by language.

Layer 2: DAST

Dynamic Application Security Testing takes the opposite approach. You deploy your application and point a scanner at it. The scanner sends HTTP requests, injects payloads, and observes responses - effectively automating what a low-sophistication attacker would try. It catches runtime and configuration issues that SAST cannot see: missing security headers, reflected XSS that actually executes in a browser, authentication endpoints that accept weak credentials, rate limiting that does not exist.
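As a rough illustration of one runtime check, the sketch below inspects a response's headers for the security headers mentioned above. The `HeaderMap` type and the list of required headers are assumptions chosen for the example, not the rule set of any particular scanner.

```typescript
// Response headers as a plain map (hypothetical shape for this sketch).
type HeaderMap = Record<string, string>;

// Headers a baseline scan commonly expects; this list is illustrative.
const REQUIRED_HEADERS: string[] = [
  "strict-transport-security",
  "content-security-policy",
  "x-content-type-options",
];

// Return the required headers that are absent from a response.
// Header names are compared case-insensitively.
function missingSecurityHeaders(headers: HeaderMap): string[] {
  const present = new Set(Object.keys(headers).map((h) => h.toLowerCase()));
  return REQUIRED_HEADERS.filter((h) => !present.has(h));
}

const sample: HeaderMap = {
  "Content-Type": "text/html",
  "X-Content-Type-Options": "nosniff",
};

// Logs the two headers this sample response is missing.
console.log(missingSecurityHeaders(sample));
```

A real DAST tool runs hundreds of checks like this against live responses, which is why it needs a deployed target and cannot run at the pull request stage the way SAST does.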

For OWASP categories like A05 (Security Misconfiguration) and A07 (Authentication Failures), DAST earns its place. But a full scan against a mid-sized application takes 30 to 90 minutes, which makes it impractical on every pull request. The standard approach is to run DAST against a staging environment after merge to main, meaning findings arrive after code is already deployed.

Coverage is the other constraint. Modern SPA architectures and API-first applications are difficult to crawl automatically. JavaScript-driven navigation, authenticated flows that require session state, and multi-step workflows that depend on specific request sequences are all places where generic DAST crawlers lose the thread. The scanner covers the surface it can reach. The surface it cannot reach includes some of the most interesting attack paths.

See our DAST tools comparison for coverage of OWASP ZAP, Burp Suite, StackHawk, BrightSec, and Invicti.

Supporting Methods: IAST and SCA

Two additional approaches complement the core layers. IAST (Interactive Application Security Testing) instruments your running application to correlate runtime behavior with source code location. When a DAST probe triggers a vulnerability, IAST pinpoints the exact line of code responsible. The tradeoff is deployment complexity: IAST agents run inside your application process, which adds overhead and requires language-specific instrumentation. For teams already running DAST, IAST reduces triage time significantly.

SCA (Software Composition Analysis) scans your third-party dependencies for known CVEs. Tools like Snyk and Dependabot run continuously, alerting when a library you depend on publishes a security advisory. SCA addresses OWASP A06 (Vulnerable and Outdated Components) directly. For most teams, SCA is the lowest-effort, highest-signal security tool to adopt first, because it catches risks you did not introduce yourself.

Neither IAST nor SCA replaces the four core layers. They improve the precision and speed of your existing application security testing program.

Layer 3: Penetration Testing

Manual penetration testing is human security researchers attacking your application with the goal of finding vulnerabilities that automated tools miss. A skilled pentester understands your application's business context, can chain low-severity findings into critical attack paths, and can identify logic flaws that no scanner would flag.

For most Series A and B startups, penetration testing is an annual or semi-annual event run by a third-party firm. The deliverable is a report with findings categorized by severity, remediation recommendations, and evidence screenshots. SOC 2 auditors expect to see this report. Enterprise security reviewers ask for it. It is table stakes for deals above a certain size.

The limitation is cost and frequency. A thorough penetration test for a mid-complexity application runs $15,000-$40,000. At that price, most teams run it once a year, which means the test reflects a snapshot. Code you shipped three months after the pentest has never been tested by a human security researcher.

Layer 4: Behavioral E2E Testing

This is the layer most engineering teams do not have, and the layer that matters most for the class of vulnerabilities that drive compliance requirements and enterprise security reviews.

Behavioral E2E testing validates that your application enforces its own rules correctly under real user conditions. Not "does this code path handle user input safely" but "can a user with a free-tier account access premium features by manipulating a request parameter at step three of the checkout flow." Not "is this endpoint protected by authentication middleware" but "does the authorization actually hold when a user switches roles mid-session."

These are business logic vulnerabilities. Neither SAST nor DAST can find them, because they require understanding your application's intended behavior and verifying that the actual behavior matches. They require traversing real user journeys. They require testing the same flow with different user types and comparing outputs.
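One way to picture "the same flow with different user types" is a role matrix: intended behavior written down as data, then compared against what the application actually allows. The sketch below is a simplified TypeScript version under stated assumptions. The roles, actions, and the `attempt` stand-in are hypothetical; in a real suite `attempt` would drive a browser or call the API as a logged-in user.

```typescript
type Role = "free" | "premium" | "admin";
type Action = "viewDashboard" | "exportReport" | "manageUsers";

// Intended behavior, written down as data: which role may do what.
const expected: Record<Role, Record<Action, boolean>> = {
  free: { viewDashboard: true, exportReport: false, manageUsers: false },
  premium: { viewDashboard: true, exportReport: true, manageUsers: false },
  admin: { viewDashboard: true, exportReport: true, manageUsers: true },
};

// Stand-in for the real application (hypothetical logic).
function attempt(role: Role, action: Action): boolean {
  if (action === "viewDashboard") return true;
  if (action === "exportReport") return role !== "free";
  return role === "admin";
}

// Run every action as every role and collect mismatches with the matrix.
function findViolations(run: (role: Role, action: Action) => boolean): string[] {
  const violations: string[] = [];
  for (const role of Object.keys(expected) as Role[]) {
    for (const action of Object.keys(expected[role]) as Action[]) {
      const actual = run(role, action);
      if (actual !== expected[role][action]) {
        violations.push(`${role}:${action} expected ${expected[role][action]} got ${actual}`);
      }
    }
  }
  return violations;
}

console.log(findViolations(attempt).length); // 0 when behavior matches intent
```

The matrix is the part no scanner has. It encodes what the application is supposed to do, which is exactly the knowledge SAST and DAST lack.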

What Scanners Miss: The Business Logic Gap

The OWASP Top 10 for 2021 ranked Broken Access Control (A01) as the top application security risk, up from fifth place in 2017. According to the Verizon 2024 Data Breach Investigations Report, web application attacks remain the primary vector in over 25% of confirmed breaches. The ranking is not an accident. It reflects a pattern in real-world incidents: access control failures often do not come from obviously bad code or misconfigured servers. They come from logic that works in isolation but fails in context.

The 2023 MOVEit Transfer breach is a clear example. Attackers exploited CVE-2023-34362, a SQL injection vulnerability of a kind that security scanners can in principle detect. But the real damage came from chaining that injection with business logic in the file transfer workflow, allowing mass exfiltration of sensitive data across thousands of organizations. The breach cost Progress Software over $100 million in settlements and affected more than 2,600 organizations. A scanner can flag an injection pattern. It does not model the file transfer workflow that made this one catastrophic.

Consider three examples that no scanner will catch:

The payment flow with negative amounts. Your DAST tool tested the payment endpoint for SQL injection, XSS, and authentication bypass. It did not test what happens when a user submits a payment with amount: -50. If your application processes the request without validating that amount is positive, a user can receive a credit instead of making a payment. This is not an injection vulnerability. It is a missing business rule validation in a specific workflow step.
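A minimal sketch of the missing rule and the behavioral check that exercises it might look like the following. The `PaymentInput` and `ChargeResult` types and the choice to represent amounts as integer cents are assumptions for the example.

```typescript
interface PaymentInput {
  accountId: string;
  amountCents: number;
}

type ChargeResult =
  | { ok: true; chargedCents: number }
  | { ok: false; reason: string };

// Business rule: a payment must be a positive whole number of cents.
function processPayment(req: PaymentInput): ChargeResult {
  if (!Number.isInteger(req.amountCents) || req.amountCents <= 0) {
    return { ok: false, reason: "amount must be a positive integer" };
  }
  return { ok: true, chargedCents: req.amountCents };
}

// Behavioral check: submit the values a generic scanner would not think to try.
for (const amountCents of [-5000, 0, 0.5, Number.NaN]) {
  const result = processPayment({ accountId: "acct_1", amountCents });
  console.log(amountCents, result.ok); // ok is false for every value
}
```

The check is trivial once written. The hard part is knowing that "amount must be positive" is a rule of this application at all, which is information that lives in the business logic rather than in any vulnerability signature.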

Role-based access that breaks across user types. Your authorization middleware is correct. A unit test proves it. But a user who starts a session as a free-tier account, completes step one of a premium feature flow, upgrades mid-session, then downgrades back can sometimes retain premium access because the session state is not fully re-evaluated on role change. SAST does not find this because the code looks correct in isolation. DAST does not find this because the crawler does not know to attempt this specific sequence. Only a test that traverses the full workflow with deliberate role manipulation will catch it.
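The sequence can be reproduced in a few lines. The sketch below assumes a hypothetical session that caches the user's plan and an in-memory map standing in for the database. It contrasts a check that trusts the cache with one that re-evaluates the plan on every request.

```typescript
type Plan = "free" | "premium";

// Source of truth for plans (an in-memory stand-in for a database).
const plans = new Map<string, Plan>([["user_1", "free"]]);

interface Session {
  userId: string;
  cachedPlan: Plan; // captured at login or at the last upgrade
}

// Buggy check: trusts whatever the session cached.
function canUsePremiumCached(session: Session): boolean {
  return session.cachedPlan === "premium";
}

// Fixed check: re-evaluates the plan on every request.
function canUsePremiumFresh(session: Session): boolean {
  return plans.get(session.userId) === "premium";
}

// Replay the sequence: log in as free, upgrade, then downgrade.
function replayUpgradeThenDowngrade(): Session {
  plans.set("user_1", "free");
  const session: Session = { userId: "user_1", cachedPlan: "free" };
  plans.set("user_1", "premium");
  session.cachedPlan = "premium"; // the upgrade handler refreshes the cache
  plans.set("user_1", "free"); // the downgrade handler forgets to
  return session;
}

const afterDowngrade = replayUpgradeThenDowngrade();
console.log(canUsePremiumCached(afterDowngrade)); // true: premium access retained
console.log(canUsePremiumFresh(afterDowngrade)); // false: access correctly revoked
```

Each function looks correct when read alone, which is the point: the defect only shows up when the full upgrade and downgrade sequence is replayed against the same session.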

Multi-tenant data isolation at the edges. Your data access layer enforces tenant scoping correctly in normal flows. But a specific combination of filter parameters in a reporting endpoint, in a sequence that a real user would follow when exporting data, returns records from a neighboring tenant. The endpoint is authenticated. The endpoint is not vulnerable to SQL injection. The logic is just wrong for this specific parameter combination.
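An isolation test states the property once and then tries it against every filter combination rather than only the default one. The sketch below is a simplified illustration: the `ReportRow` data, the two filter parameters, and the `exportReport` stand-in are all hypothetical.

```typescript
interface ReportRow {
  tenantId: string;
  region: string;
  total: number;
}

const rows: ReportRow[] = [
  { tenantId: "t1", region: "eu", total: 10 },
  { tenantId: "t1", region: "us", total: 20 },
  { tenantId: "t2", region: "eu", total: 99 },
];

interface ReportFilter {
  region?: string;
  minTotal?: number;
}

// Stand-in for the export endpoint. Tenant scoping is applied before any
// user-supplied filter, so no filter combination can widen the result.
function exportReport(callerTenant: string, filter: ReportFilter): ReportRow[] {
  return rows
    .filter((r) => r.tenantId === callerTenant)
    .filter((r) => filter.region === undefined || r.region === filter.region)
    .filter((r) => filter.minTotal === undefined || r.total >= filter.minTotal);
}

// Isolation property: every returned row belongs to the caller.
function leaksForeignRows(callerTenant: string, filter: ReportFilter): boolean {
  return exportReport(callerTenant, filter).some((r) => r.tenantId !== callerTenant);
}

// Try each combination of the two filter parameters.
const filters: ReportFilter[] = [
  {},
  { region: "eu" },
  { minTotal: 50 },
  { region: "eu", minTotal: 50 },
];
console.log(filters.some((f) => leaksForeignRows("t1", f))); // false
```

Against a real endpoint, the same property would be asserted on API responses for two seeded tenants, with the filter list derived from the parameters the reporting route actually accepts.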

For a startup in a healthcare, fintech, or B2B SaaS context, these are not theoretical risks. They are the exact class of vulnerability that security architects at enterprise prospects probe for, because they are the class that drives compliance failures - PHI data leakage, financial controls bypass, authorization model collapse under real usage.

Building a DevSecOps Pipeline for Application Security

The practical question for any DevSecOps program is how to sequence these security testing layers in a real pipeline without making it unusably slow.

Stage 1 runs on every pull request. SAST belongs here: fast, requires no deployed environment, catches code-level issues before review. Configure Semgrep (or CodeQL for JavaScript/TypeScript) to scan changed files on PR branches and run full scans on main merges. Under five minutes for most codebases.

```yaml
# GitHub Actions: SAST on every PR
name: Security - SAST

on:
  pull_request:
    branches: [main, develop]
  push:
    branches: [main]

# Needed so the SARIF upload step can write code scanning results
permissions:
  contents: read
  security-events: write

jobs:
  sast:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Run Semgrep SAST
        uses: semgrep/semgrep-action@v1
        with:
          config: >-
            p/owasp-top-ten
            p/secrets
            p/typescript
          # Write semgrep.sarif so the upload step below has a file to send
          generateSarif: "1"
        env:
          SEMGREP_APP_TOKEN: ${{ secrets.SEMGREP_APP_TOKEN }}

      - name: Upload SARIF results
        uses: github/codeql-action/upload-sarif@v3
        if: always()
        with:
          sarif_file: semgrep.sarif
```

Stage 2 runs after deployment to staging. DAST belongs here, not in the PR stage. Run it after your application is deployed to a preview or staging environment. Gate the merge to main on DAST passing, or run it asynchronously and alert on high-severity findings.

```yaml
# GitHub Actions: DAST against preview environment
name: Security - DAST

on:
  deployment_status:

jobs:
  dast:
    runs-on: ubuntu-latest
    if: github.event.deployment_status.state == 'success'
    steps:
      - name: Checkout
        uses: actions/checkout@v4

      - name: Run OWASP ZAP Baseline Scan
        uses: zaproxy/action-baseline@v0.12.0
        with:
          target: ${{ github.event.deployment_status.target_url }}
          rules_file_name: .zap/rules.tsv
          cmd_options: '-a -j'

      - name: Upload ZAP Report
        uses: actions/upload-artifact@v4
        if: always()
        with:
          name: zap-report
          path: report_html.html
```

Stage 3 runs continuously against staging. Behavioral E2E tests validate user workflows and access controls on every release. Unlike SAST and DAST, these tests require understanding your application's business logic, which is why the test generation needs to be informed by your codebase rather than derived from generic HTTP crawling.

```yaml
# Full security pipeline: SAST, DAST, and behavioral in sequence
name: Full Security Pipeline

on:
  push:
    branches: [main]

jobs:
  sast:
    name: Static Analysis
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Semgrep SAST
        uses: semgrep/semgrep-action@v1
        with:
          config: p/owasp-top-ten p/secrets
        env:
          SEMGREP_APP_TOKEN: ${{ secrets.SEMGREP_APP_TOKEN }}

  deploy-staging:
    name: Deploy to Staging
    needs: sast
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Deploy
        run: ./scripts/deploy-staging.sh

  dast:
    name: Dynamic Analysis
    needs: deploy-staging
    runs-on: ubuntu-latest
    steps:
      - name: ZAP Full Scan
        uses: zaproxy/action-full-scan@v0.10.0
        with:
          target: ${{ vars.STAGING_URL }}

  behavioral:
    name: Behavioral / E2E Tests
    needs: deploy-staging
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Run Autonoma Tests
        run: npx autonoma run --env staging
        env:
          AUTONOMA_API_KEY: ${{ secrets.AUTONOMA_API_KEY }}
```

DAST and behavioral tests run in parallel against staging after SAST passes. The pipeline fails if any layer reports a high-severity finding. This is the foundation of a DevSecOps workflow: security testing integrated into the delivery pipeline, not bolted on after release.
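The "fail on any high-severity finding" rule can be expressed as a small gate over the combined results of all layers. The sketch below is one possible shape under stated assumptions: the `Finding` type, the severity ranking, and the sample data are hypothetical and do not reflect the output format of any specific tool.

```typescript
type Severity = "low" | "medium" | "high" | "critical";

interface Finding {
  layer: "sast" | "dast" | "behavioral";
  severity: Severity;
  title: string;
}

const SEVERITY_RANK: Record<Severity, number> = { low: 0, medium: 1, high: 2, critical: 3 };

// Return the findings at or above the threshold. An empty list means pass.
function blockingFindings(findings: Finding[], threshold: Severity = "high"): Finding[] {
  return findings.filter((f) => SEVERITY_RANK[f.severity] >= SEVERITY_RANK[threshold]);
}

const results: Finding[] = [
  { layer: "sast", severity: "medium", title: "Weak hash function" },
  { layer: "dast", severity: "low", title: "Missing X-Content-Type-Options" },
  { layer: "behavioral", severity: "high", title: "Free tier can export reports" },
];

const blockers = blockingFindings(results);
console.log(blockers.length === 0 ? "pass" : `fail: ${blockers.map((b) => b.title).join(", ")}`);
// fail: Free tier can export reports
```

In a CI job, a script like this would set a non-zero exit code when `blockers` is non-empty, so the workflow run is marked as failed.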

Autonoma adds continuous behavioral testing to your security strategy: AI agents run E2E tests on every PR, validating authorization flows, input handling, and business logic in real browsers.

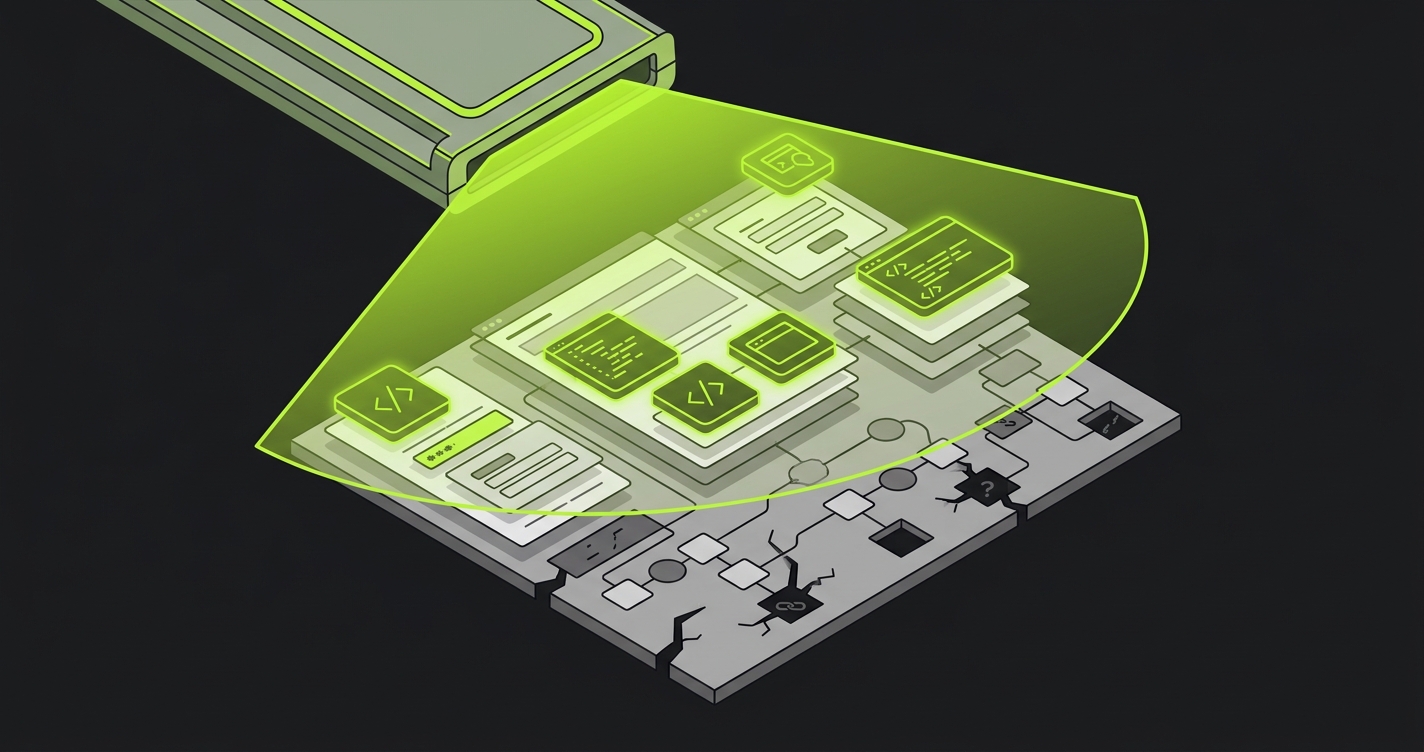

How AI-Driven E2E Testing Closes the Gap

The reason behavioral testing has historically been under-invested is manual cost. Writing comprehensive security-focused E2E tests that cover authorization logic, multi-step workflows, and role-based access across all user types requires a significant upfront investment and ongoing maintenance as the application evolves. Most teams run partial coverage at best.

This is where we built Autonoma to operate differently. Instead of writing tests manually or recording click-throughs, Autonoma connects to your codebase. A Planner agent reads your routes, components, and user flows, the same code that defines how your application is supposed to behave. From that analysis, it generates test cases that cover the business logic your application actually implements.

The Planner agent also handles a problem that makes behavioral security testing particularly hard: database state. Testing that a free-tier user cannot access a premium feature requires that a free-tier user actually exists in the database when the test runs. Testing multi-tenant data isolation requires separate tenant accounts with overlapping but distinct data. The Planner agent generates the API calls needed to set up that state before each test, so you get reliable, repeatable coverage without manual fixture management.

An Automator agent executes those tests against your running application. A Maintainer agent keeps them passing as your code changes. The result is behavioral test coverage that reflects your current codebase without manual intervention as you ship.

Mapping to Compliance Requirements

The three frameworks most commonly blocking enterprise deals each require something that scanner output alone cannot satisfy.

SOC 2 Type II requires evidence of continuous monitoring and that logical access controls operate as designed. Auditors want to see that access control testing runs on an ongoing basis, not just at point-in-time. A quarterly SAST scan does not satisfy this. Behavioral E2E tests running on every release, with logged results, do.

PCI DSS requires vulnerability scanning and penetration testing (Requirement 11), but the more demanding obligation sits in Requirement 6: that all in-scope system components and software are developed securely and protected from known vulnerabilities. Payment flow testing - specifically verifying that amounts cannot be manipulated, that discount logic cannot be abused, that refund flows require appropriate authorization - falls under the behavioral testing category, not the scanner category.

HIPAA technical safeguards require that access controls "allow access only to those persons or software programs that have been granted access rights." Demonstrating compliance requires functional evidence that your access controls work correctly under real conditions. An auditor who understands their job will ask for test results showing that User A cannot access User B's PHI through any sequence of actions your application supports, not just a statement that you have access control middleware.

For a deeper look at how these frameworks map to automated test coverage, see our API security testing guide, which covers the specific endpoints and flows most likely to surface compliance-relevant vulnerabilities.

The Tool Stack by Team Size

The right tooling depends on where you are.

For teams at early Series A, the priority is coverage without operational overhead. Semgrep free tier for SAST, OWASP ZAP in your CI pipeline for DAST, and Autonoma connected to your codebase for behavioral coverage. This stack costs under $500/month and gives you findings you can present to auditors and enterprise security reviewers without manual configuration.

| Layer | Tool (Startup) | Tool (Growth) | Runs On | Approximate Time |
|---|---|---|---|---|
| SAST | Semgrep (free) | Semgrep Team, Checkmarx | Every PR | 1-5 min |
| DAST | OWASP ZAP | BrightSec, Invicti | Post-deploy to staging | 30-90 min |
| SCA | Dependabot | Snyk | Continuous | Background |
| Pen Testing | Annual third-party | Annual third-party + bug bounty | Scheduled | 1-2 weeks |
| Behavioral E2E | Autonoma | Autonoma | Post-deploy to staging | 10-30 min |

For teams at late Series B pushing toward enterprise deals, the additional investment is in compliance-grade reporting. SAST tools like Checkmarx and Veracode produce outputs that map findings directly to PCI DSS and SOC 2 controls. DAST tools like Invicti generate compliance reports that auditors accept directly. The underlying technical coverage matters less than the ability to produce audit-ready evidence quickly.

What Enterprise Buyers Actually Ask

The shift in enterprise security reviews over the last few years is worth understanding directly. Five years ago, a questionnaire asking "do you perform vulnerability scanning" was satisfied by "yes, we run Snyk." Today, sophisticated security architects at mid-market and enterprise buyers are asking more targeted questions about your application security program.

They want to know your mean time to remediate high-severity findings. They want evidence that remediation was verified, not just closed in a ticket. They want to understand how you test authorization logic specifically, because authorization failures in multi-tenant SaaS are the most common root cause of enterprise-impacting security incidents. They want your penetration test report from the last 12 months, and they want to see whether findings from that report were remediated and re-tested.
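Mean time to remediate is straightforward to compute once each finding records when it was opened and when the fix was verified. The sketch below assumes a hypothetical `RemediatedFinding` record with ISO 8601 timestamps, and it measures to the verification time rather than the ticket-close time, in line with the point about verified remediation.

```typescript
interface RemediatedFinding {
  severity: "low" | "medium" | "high" | "critical";
  openedAt: string; // ISO 8601 timestamp
  verifiedAt: string; // when the fix was re-tested, not when the ticket closed
}

const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Mean time to remediate, in days, for high and critical findings.
// Returns null when there is nothing to average.
function meanTimeToRemediateDays(findings: RemediatedFinding[]): number | null {
  const severe = findings.filter((f) => f.severity === "high" || f.severity === "critical");
  if (severe.length === 0) return null;
  const totalMs = severe.reduce(
    (sum, f) => sum + (Date.parse(f.verifiedAt) - Date.parse(f.openedAt)),
    0
  );
  return totalMs / severe.length / MS_PER_DAY;
}

const remediationLog: RemediatedFinding[] = [
  { severity: "high", openedAt: "2024-01-01T00:00:00Z", verifiedAt: "2024-01-05T00:00:00Z" },
  { severity: "critical", openedAt: "2024-02-01T00:00:00Z", verifiedAt: "2024-02-03T00:00:00Z" },
  { severity: "low", openedAt: "2024-01-01T00:00:00Z", verifiedAt: "2024-03-01T00:00:00Z" },
];

console.log(meanTimeToRemediateDays(remediationLog)); // 3
```

The low-severity finding is excluded from the average, so the result is the mean of the four-day and two-day remediations.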

The teams that move through these reviews fastest are not the ones with the most sophisticated scanners. They are the ones who can produce continuous test evidence - not just scan results, but functional test results showing that their application's security controls work correctly under real user conditions.

SAST and DAST get you into the conversation. Behavioral testing is what ends it.

Key Takeaways

- SAST and DAST together miss the #1 OWASP risk category. Broken Access Control (A01) requires behavioral testing to validate, because access control only fails in the context of real user workflows.

- Business logic vulnerabilities drive the most consequential breaches. Payment flow manipulation, role escalation, and multi-tenant data leakage are not injection patterns. Scanners cannot find them.

- Gate your CI/CD pipeline on all three automated layers. SAST on every PR, DAST and behavioral E2E tests on every merge to main. This is the DevSecOps standard for startups pursuing enterprise deals.

- Compliance frameworks require functional evidence, not just scan output. SOC 2, PCI DSS, and HIPAA auditors increasingly ask for proof that access controls work under real conditions.

- Enterprise security reviews have evolved. Sophisticated buyers now ask how you test authorization logic specifically, not just whether you run vulnerability scans.

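- Start with Semgrep + OWASP ZAP + behavioral E2E testing. This three-tool stack covers the three automated layers (SAST, DAST, and behavioral) for under $500/month and produces audit-ready evidence from day one. Penetration testing remains a separate, scheduled engagement.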

Frequently Asked Questions

What is web application security testing?

Web application security testing evaluates a web application for vulnerabilities that attackers could exploit. It spans static code analysis (SAST), dynamic runtime scanning (DAST), penetration testing, and behavioral E2E testing. Each layer catches a different class of vulnerability. The most consequential class for enterprise compliance - business logic flaws, authorization edge cases, and multi-step workflow vulnerabilities - requires behavioral testing, because code scanners cannot find bugs that only appear when real users traverse your application.

What are the main types of application security testing?

The main types are SAST (scans source code), DAST (sends live HTTP requests to a running app), IAST (instruments the running application for gray-box testing), SCA (scans third-party dependencies for known CVEs), penetration testing (manual adversarial research by security professionals), and behavioral E2E testing (validates that your application enforces its own business rules and access controls correctly). Most teams run only SAST and DAST. The behavioral layer is where the OWASP A01 Broken Access Control category lives, and it is the gap most commonly cited in enterprise security reviews.

What is the OWASP Top 10?

The OWASP Top 10 is the standard list of the most critical web application security risks. The 2021 edition ranks: A01 Broken Access Control, A02 Cryptographic Failures, A03 Injection, A04 Insecure Design, A05 Security Misconfiguration, A06 Vulnerable and Outdated Components, A07 Identification and Authentication Failures, A08 Software and Data Integrity Failures, A09 Security Logging and Monitoring Failures, and A10 Server-Side Request Forgery (SSRF). SAST covers A02, A03, and A06 well. DAST covers A05 and A07. A01 - the top risk - requires behavioral testing to validate properly, because access control only fails in the context of real user workflows.

What do SAST and DAST miss?

SAST and DAST together miss business logic vulnerabilities: a payment flow that accepts negative amounts, role-based access that breaks when a user changes state mid-session, multi-tenant data isolation that fails for a specific combination of filter parameters. These require behavioral E2E tests that traverse real user journeys. Tools like Autonoma (https://getautonoma.com) address this by reading your codebase to understand intended behavior and generating tests that verify the application actually enforces its own rules.

How do you integrate security testing into CI/CD?

Run SAST on every pull request (fast, no deployment needed). Run DAST against a staging or preview environment post-deploy. Run behavioral E2E tests in parallel with DAST against staging, continuously. Gate on SAST for PRs; gate on DAST and behavioral tests for merges to main. This three-stage pipeline catches code vulnerabilities before deployment, runtime vulnerabilities after deployment, and logic vulnerabilities in actual user workflows.

Which tools should you use for web application security testing?

For SAST: Semgrep (fast, free tier, customizable rules), CodeQL for JavaScript/TypeScript, Bandit for Python. For DAST: OWASP ZAP (open-source, CI-friendly), BrightSec for SPA architectures, Invicti for enterprise compliance reporting. For SCA: Dependabot or Snyk. For behavioral and business logic testing: Autonoma (https://getautonoma.com) connects to your codebase, reads your routes and flows, and generates tests that validate authorization, payment flows, and multi-tenant isolation automatically.

What security testing does SOC 2 require?

SOC 2 Type II does not mandate specific tools, but requires evidence of continuous monitoring and that access controls operate as designed. Auditors want vulnerability scanning results, an annual penetration test report, and functional test evidence that access controls work correctly in practice. SAST and DAST satisfy the first requirement. A penetration test satisfies the second. Behavioral E2E testing is what produces the functional evidence that access controls hold under real user conditions - the third requirement that most teams address incompletely.