The Antler customer problem: Antler's model is unique. You join as an individual, form a team during the 10-week residency, and then have roughly 6-8 weeks to build a product and get customers before Investor Day. By the time you have a co-founder, a working product, and your first customer conversations, you are already in the final sprint. The window to show customer traction before Investor Day is the shortest of any major accelerator. In that window, every deployment you ship to win a new pilot is a potential bug that hits a pilot you already have running. Speed to customer is existential at Antler. So is protecting the customers you get.

Antler's model strips away the usual advantage that founders rely on: time. In most accelerators, you show up with a team and a product and have three to four months to find customers. At Antler, you show up alone, spend the first ten weeks finding a co-founder and validating a direction, and then have six to eight weeks to build something people will pay for and demonstrate that to investors at Investor Day.

That compressed timeline is what makes Antler distinctive and what makes it hard. The founders who raise at Antler Investor Day are the ones who moved from "we just formed a team and decided what to build" to "we have paying customers who can speak to the product" in six to eight weeks. That is an extremely fast customer acquisition sprint.

But there is a problem hiding in that sprint that most Antler teams only see after it happens. Shipping fast enough to win customers in six weeks means shipping new features almost daily. Every feature shipped is a potential regression. Every regression that surfaces during an active pilot extends the close cycle. In a timeline where you have six weeks to close customers, an incident that adds ten days to a close cycle does not just delay the deal. It misses the Investor Day window entirely.

Therefore: at Antler, protecting your pilots from bugs is not a quality discipline. It is the difference between having customers to show at Investor Day and not.

The Antler Timeline in Concrete Terms

Let's make the math explicit, because this is the critical constraint that shapes everything.

The Antler residency is 10 weeks. Week 10 is when the cohort investment decisions are made and teams get funded to continue. After the residency, funded teams have roughly six to eight weeks before Investor Day, where they present to external investors.

Working backward from a six-week post-residency window:

Week 6 (Investor Day): you need customers you can reference. Not just conversations. Paying customers, signed LOIs, or deep pilots at companies that an investor can call.

Week 5: to have a signed customer at Investor Day, you need to close by week five. Any pilot that starts in week four or later is too late to close in time.

Week 4: pilots need to be in active evaluation. To be in active evaluation in week four, the product needs to have been deployed and demoed in weeks two and three.

Weeks 1-2: you are finishing the product and running first demos. This is when you are shipping the most code. It is also when your first pilot customers are having their first sessions.

This is the brutal constraint: your highest shipping velocity overlaps with your pilots' most fragile sessions. The features you're building to win customer three are shipping at the same time customer one is having their first evaluation sessions. The probability of a regression that affects customer one is highest exactly when it would be most damaging.
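The backward plan above is just date arithmetic, and it can help to see it as such. What follows is a minimal sketch, assuming the six-week window and the week boundaries given in the list. The names `sprintDeadlines` and `closesInTime` are hypothetical, the week offsets are read off the plan above rather than taken from any official Antler schedule, and the Investor Day date is made up for illustration.

```typescript
// Hypothetical helper: counts back from Investor Day using the six-week plan
// described in the text. The offsets are assumptions derived from that plan.

const MS_PER_DAY = 24 * 60 * 60 * 1000;

interface SprintDeadlines {
  latestPilotStart: Date; // end of week 3: a pilot starting later cannot close in time
  closeBy: Date;          // end of week 5: deals must be signed by this date
}

function daysBefore(date: Date, days: number): Date {
  return new Date(date.getTime() - days * MS_PER_DAY);
}

function sprintDeadlines(investorDay: Date): SprintDeadlines {
  return {
    latestPilotStart: daysBefore(investorDay, 21),
    closeBy: daysBefore(investorDay, 7),
  };
}

// Returns true if a deal still closes on time after an incident adds `slipDays`.
function closesInTime(
  expectedClose: Date,
  slipDays: number,
  deadlines: SprintDeadlines
): boolean {
  const slippedClose = new Date(expectedClose.getTime() + slipDays * MS_PER_DAY);
  return slippedClose.getTime() <= deadlines.closeBy.getTime();
}

// Illustrative date only: Investor Day on 12 June 2025 (UTC).
const exampleDeadlines = sprintDeadlines(new Date(Date.UTC(2025, 5, 12)));
console.log(exampleDeadlines.closeBy.toISOString().slice(0, 10)); // 2025-06-05
```

With those example dates, a deal expected to close on 1 June survives a three-day slip but not a ten-day one, which is the whole argument in one function call.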

The Co-founder Timeline Creates a Technical Debt Pattern

Antler teams form mid-residency. A team that forms in week four of the residency has six weeks of residency remaining to validate the idea and start building. By the time they're post-residency and into the customer sprint, they've been building together for six to eight weeks maximum.

That short co-founder timeline creates a specific technical pattern. The early codebase was written fast, by two people who were still figuring out how to work together, under extreme time pressure. Abstractions are rough. Test coverage is zero. The code works for the happy path that was demoed, but the error handling, edge cases, and state management that make a product robust under different user configurations are all missing.

This matters because pilot customers are not controlled environments. They configure the product in ways you did not anticipate. They run workflows in sequences that your demo never showed. They use edge cases that your fast-built early code does not handle. The first wave of pilot bugs at Antler companies are almost always edge cases in the early codebase surfaced by real users doing real things.

The protection approach is not "rewrite the codebase before the customer sprint." That's not possible. It is: cover the specific path your pilot customer walks with automated checks, so that when an edge case in your codebase surfaces, you catch it before the customer does.

Speed to Customer Is Existential: The Constraint Shapes the Coverage Strategy

At most accelerators, a bug during a pilot is a setback. At Antler, a bug during a pilot in week three of the customer sprint is potentially a program-ending event. You don't have time to recover a stalled pilot, or to start a new one and close it, before Investor Day.

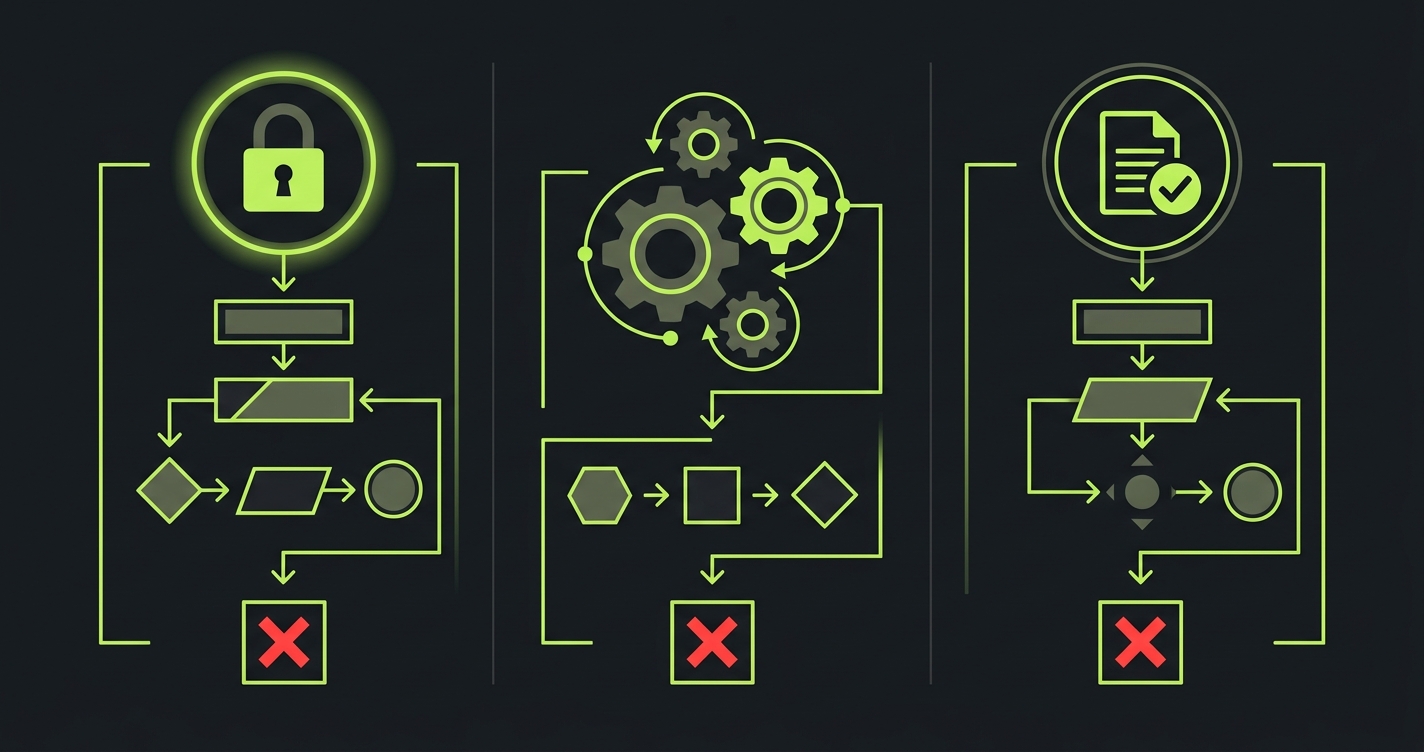

This shapes the coverage strategy: be extremely focused. You are not trying to cover your whole product. You are trying to cover the paths that your active pilots walk, with zero tolerance for bugs that affect those paths, and zero constraint on everything else.

The implementation: maintain a list of active pilots. For each pilot, maintain a document of the exact flow they walk: entry point, configuration, core action, output they evaluate. Before every deployment, run automated checks on those exact flows. If a check fails, the deployment does not go to production.

Everything else ships freely. New features for prospects who are not yet pilots. Admin tools. Secondary workflows. Edge case configurations. Those ship without a gate because a bug in those paths does not affect an active evaluation.

The gate is surgical. It protects the deals you have running without slowing down the shipping you need to win deals you don't have yet.
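The pilot list and the gate decision described above can be sketched as plain data plus one function. This is a minimal sketch, not a prescribed implementation: `PilotFlow`, `CheckResult`, and `gateDeployment` are hypothetical names, and treating a pilot with no check results as blocking is an assumption of this sketch (an uncovered pilot is an unprotected one).

```typescript
// Hypothetical shapes for the per-pilot flow document and the check results.
interface PilotFlow {
  pilot: string;
  entryPoint: string;      // where the pilot starts, e.g. a login URL
  configuration: string;   // how this pilot has the product set up
  coreAction: string;      // the workflow they are evaluating
  evaluatedOutput: string; // the output they judge the product on
}

interface CheckResult {
  pilot: string;
  passed: boolean;
}

interface GateDecision {
  allowed: boolean;
  blockedBy: string[]; // pilots whose checks failed or are missing
}

// Blocks the deploy if any active pilot has a failing check or no check at all.
function gateDeployment(flows: PilotFlow[], results: CheckResult[]): GateDecision {
  const blockedBy = flows
    .filter((flow) => {
      const forPilot = results.filter((r) => r.pilot === flow.pilot);
      return forPilot.length === 0 || forPilot.some((r) => !r.passed);
    })
    .map((flow) => flow.pilot);
  return { allowed: blockedBy.length === 0, blockedBy };
}
```

Only pilots in the list can block a deploy. Anything that is not on an active pilot's flow never enters the decision, which is what keeps the gate from slowing down everything else.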

The Three Moments That Cannot Break in the Antler Customer Sprint

For Antler founders with a six-to-eight-week customer window, three moments are absolute non-negotiables for every active pilot.

Authentication. An Antler company often onboards customers in multiple cities. NYC, London, Singapore, Berlin, and Stockholm all have Antler programs, and your customers may be in a different city than where your program ran. Auth flows that work in your local environment may behave differently for users in different regions with different browser configurations. More critically, if you push an auth change on a Thursday evening and a pilot customer in Singapore tries to log in on Friday morning, they hit a broken login screen at the start of their workday with no way to use the product. Test every auth path before every deployment.

Core action. The central workflow of your product. For an AI product, the generation or processing step. For a data product, the transform or analysis. For a workflow product, the primary workflow execution. This must complete and produce output. Test it end-to-end, not just as a unit test. Run it the way the pilot customer runs it.

Output persistence. Early Antler codebases have a specific tendency: outputs generated in one session may not reliably persist to the next. Database state management in a codebase built in six weeks has a higher probability of bugs around persistence than in a mature codebase. Test that the output from session one is still accessible in session two. This is the bug that hits early-stage pilots most often and is the easiest to cover with a simple assertion.

What to Automate: The Minimal Suite That Protects the Window

```typescript
// Antler Investor Day protection suite
// Philosophy: cover only the pilot path, ship everything else freely
// Relative URLs below assume `baseURL` is set in the Playwright config.

import { test, expect } from '@playwright/test'

// Auth: must work from any region, any time
test('auth: login succeeds for pilot account', async ({ page }) => {
  await page.goto('/login')
  await page.fill('[data-testid="email"]', process.env.PILOT_EMAIL!)
  await page.fill('[data-testid="password"]', process.env.PILOT_PASSWORD!)
  await page.click('[data-testid="login-btn"]')

  // Dashboard must load: session is valid
  await expect(page).toHaveURL('/dashboard')
  await expect(page.locator('h1')).toBeVisible()
})

// Core action: the thing the pilot is evaluating
test('core action: runs to completion', async ({ page }) => {
  await page.goto('/login')
  await page.fill('[data-testid="email"]', process.env.PILOT_EMAIL!)
  await page.fill('[data-testid="password"]', process.env.PILOT_PASSWORD!)
  await page.click('[data-testid="login-btn"]')
  await page.waitForURL('/dashboard')

  // Trigger primary workflow
  await page.click('[data-testid="start-workflow"]')

  // Wait for completion: give enough timeout for AI/data processing
  await page.waitForSelector('[data-testid="workflow-complete"]', {
    timeout: 90000
  })

  // Output must be present
  const output = page.locator('[data-testid="workflow-output"]')
  await expect(output).toBeVisible()
  const text = await output.textContent()
  expect(text).toBeTruthy()
  expect(text!.length).toBeGreaterThan(0)
})

// Output persistence: session 2 can access session 1 output
test('persistence: previous output is accessible on next session', async ({ page }) => {
  await page.goto('/login')
  await page.fill('[data-testid="email"]', process.env.PILOT_EMAIL!)
  await page.fill('[data-testid="password"]', process.env.PILOT_PASSWORD!)
  await page.click('[data-testid="login-btn"]')
  await page.waitForURL('/dashboard')

  // Navigate to history/saved outputs
  await page.click('[data-testid="history-nav"]')

  // Previous outputs must be listed and accessible
  const historyItems = page.locator('[data-testid="history-item"]')
  await expect(historyItems.first()).toBeVisible()

  // Click first item: it must open without error
  await historyItems.first().click()
  await expect(page.locator('[data-testid="output-view"]')).toBeVisible()
  await expect(page.locator('[data-testid="error-state"]')).not.toBeVisible()
})

// API: core backend endpoint must respond correctly
test('api: core endpoint returns valid response', async ({ request }) => {
  const res = await request.post('/api/workflow/run', {
    headers: {
      'Authorization': `Bearer ${process.env.PILOT_API_TOKEN}`,
      'Content-Type': 'application/json'
    },
    data: { input: process.env.TEST_WORKFLOW_INPUT }
  })

  expect(res.status()).toBe(200)
  const body = await res.json()
  expect(body).toHaveProperty('output')
  expect(body.output).toBeTruthy()
})
```

This suite runs in under five minutes. It catches the four most common pilot-breaking bug categories in early-stage Antler codebases: auth regressions, core action failures, persistence bugs, and API contract breaks. Everything outside these paths ships without a gate.

How Autonoma Fits the 6-Week Antler Sprint

With only 6 weeks, writing tests means not closing customers. That is not a tradeoff you can make. But running the sprint without any coverage means your pilot customers become your bug detection system. In a 6-week window, there is no time to recover a stalled pilot.

Autonoma resolves that tradeoff. It reads your codebase and generates the critical path tests automatically. No writing, no maintaining, no engineering hours diverted from shipping. The coverage comes from your actual routes and components, not a generic script that needs adapting.

This matters especially for a codebase built in 3-4 weeks under time pressure. Edge cases accumulate in fast-built code: state management gaps, persistence bugs, auth edge cases that only surface with real user configurations. Those edge cases do not appear in your demos. They appear in a pilot customer's second session, on a Tuesday morning, when you cannot afford the delay.

CI coverage catches them before the pilot does. Every deploy is checked. If a regression touches the paths your active pilots walk, the deploy is blocked before it reaches production. Setup takes a few hours, with coverage running from day one of the sprint.

Every pilot you close in 6 weeks stays closed. Autonoma is how you make sure the product holds up through each of those evaluations.

The 48-72 Hour Stability Window Is Non-Negotiable at Antler

The compressed Antler timeline makes the stability window discipline more important, not less. Here is why.

In a six-week customer sprint, you might be running three or four pilots simultaneously by week four. Each of those pilots started at a different time. Some are in their first sessions. Some are in their evaluation phase. Some are approaching a decision.

The pilots in their first sessions are the most fragile. But in the Antler timeline, "first session" status is spread across the whole customer sprint. You're constantly onboarding new pilots while shipping features at full speed.

Without the stability window discipline, you will ship a breaking change into a first-session window for at least one of your pilots. The math is against you. In a six-week sprint with three to four pilots at different stages, the overlap between "deploying new features" and "pilot customer in their first 72 hours" is almost constant.

The practice: maintain a simple tag on each pilot indicating whether they're in their first-session window. Check that tag before every deployment. If any pilot is in the first 72 hours, hold the deployment until the window closes or confirm the deploy does not touch their critical path. This takes two minutes per deploy and prevents the category of incident that is most damaging to early-stage pilot relationships.
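The two-minute check described above can also be written down as code. A minimal sketch, assuming each pilot's tag records when their first session started. The names `PilotTag`, `pilotsInWindow`, and `shouldHoldDeploy` are hypothetical, and the 72-hour default is the upper end of the window named in this section. Whether a deploy touches a pilot's critical path is still a human confirmation, passed in here as a boolean.

```typescript
const STABILITY_WINDOW_HOURS = 72;
const MS_PER_HOUR = 60 * 60 * 1000;

// Hypothetical tag kept per pilot.
interface PilotTag {
  pilot: string;
  firstSessionAt: Date; // when the pilot's first session started
}

// Lists pilots whose first session started less than `windowHours` ago.
// Sessions that have not started yet are not counted in this sketch.
function pilotsInWindow(
  tags: PilotTag[],
  now: Date,
  windowHours: number = STABILITY_WINDOW_HOURS
): string[] {
  return tags
    .filter((tag) => {
      const elapsedHours = (now.getTime() - tag.firstSessionAt.getTime()) / MS_PER_HOUR;
      return elapsedHours >= 0 && elapsedHours < windowHours;
    })
    .map((tag) => tag.pilot);
}

// Hold only when a pilot is inside the window AND the deploy touches their path.
function shouldHoldDeploy(
  tags: PilotTag[],
  now: Date,
  touchesCriticalPath: boolean
): boolean {
  return touchesCriticalPath && pilotsInWindow(tags, now).length > 0;
}
```

A deploy that has been confirmed not to touch any pilot's critical path goes out even during a window, which matches the "hold or confirm" rule above.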

Investor Day: What Antler Investors Are Actually Evaluating

Antler Investor Day is different from most Demo Days. The audience is external investors, not the Antler partner group (who already made their investment decision during the residency). These are seed-stage investors evaluating whether to lead or join the round.

For a seed investor looking at an Antler company with six to eight weeks of post-residency history, the customer question is simple: is this real? Are these paying customers or friendly pilots? Are they using the product or is it essentially vapor? And if there are bugs or issues, how does the team handle them?

The investor will talk to one or two pilot customers. Those conversations happen fast, often in the 48 hours before or after Investor Day. What the investor wants to hear: the customer chose this product for a real reason, they've been using it and getting value, and the team has been responsive and professional when issues came up.

That last part is the one that protects your raise even when bugs happen. An investor talking to a reference who says "they had an issue last week but caught it before we saw it and deployed a fix, very on top of it" hears competence. An investor talking to a reference who says "there was a bug that affected our session and we had to wait for them to fix it" hears something different.

Your bug prevention system shapes those conversations even when it doesn't prevent every bug. A team that catches bugs before customers do, or fixes them proactively with good communication, demonstrates operational maturity that seed investors are specifically looking for in a team this early.

The Operations Minimum for a Six-Week Sprint

For an Antler team in the post-residency customer sprint, here is the minimum viable operational system.

A shared document with one row per pilot, reviewed every day during the sprint. The columns that matter:

| Column | What to track | Why it matters |
|---|---|---|
| Company | Pilot name | Basic reference |
| Stage | Onboarded / First session done / Active eval / Decision | Tells you who is in the stability window |
| Last interaction | Date of last signal | Pilots silent for 5+ days need a nudge |
| Critical path | Feature names they use | Tells you which deploys need the gate |
| Decision deadline | Date by which they must decide to close before Investor Day | Tells you if you still have time |
| Critical path covered | Yes / No | Forces you to add tests before a pilot starts |

This document is reviewed every day during the sprint, not weekly.
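If the tracking document lives somewhere scriptable, the daily review can be partly mechanical. A minimal sketch, assuming one record per row with the columns above. The `PilotRow` type and the `dailyReview` function are hypothetical names, and the five-day nudge threshold comes from the table.

```typescript
type PilotStage = "Onboarded" | "First session done" | "Active eval" | "Decision";

// One row of the pilot tracking document (hypothetical shape).
interface PilotRow {
  company: string;
  stage: PilotStage;
  lastInteraction: Date;
  criticalPath: string[];      // feature names the pilot uses
  decisionDeadline: Date;
  criticalPathCovered: boolean;
}

interface ReviewFlags {
  needsNudge: string[];   // silent for five or more days
  uncovered: string[];    // critical path has no automated check yet
  pastDeadline: string[]; // decision deadline has already passed
}

const NUDGE_AFTER_DAYS = 5;
const DAY_MS = 24 * 60 * 60 * 1000;

// Flags the rows that need attention in today's review.
function dailyReview(rows: PilotRow[], today: Date): ReviewFlags {
  const silentDays = (row: PilotRow) =>
    (today.getTime() - row.lastInteraction.getTime()) / DAY_MS;
  return {
    needsNudge: rows.filter((r) => silentDays(r) >= NUDGE_AFTER_DAYS).map((r) => r.company),
    uncovered: rows.filter((r) => !r.criticalPathCovered).map((r) => r.company),
    pastDeadline: rows
      .filter((r) => r.decisionDeadline.getTime() < today.getTime())
      .map((r) => r.company),
  };
}
```

The point of the sketch is that every column in the table maps to a question you can answer in seconds, which is what makes a daily review sustainable at sprint pace.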

A Slack channel (or equivalent) shared between co-founders for pilot status updates. When one founder has a customer call, they post a three-line update: what happened, what the customer said, what the next action is. This keeps both founders aware of every pilot's status without needing a synchronous check-in.

The test suite described above, running in CI before every merge to main. This is the gate that protects the product from the founders' own shipping velocity.

These three things together constitute a system that is appropriate for the pace and scale of an Antler customer sprint. They require no additional tooling beyond what the team already has and cost almost no time to maintain.

Getting Started This Week

If you are an Antler founder in the post-residency customer sprint, here is what to do today.

List your active pilots. For each one, write down the exact steps they take from login to their primary value moment. Then write one automated test covering those steps. Get it running in your CI pipeline, configured to run before any merge to main.

This is two to four hours of setup for a team with no existing tests. It is 30 minutes of setup if you use Autonoma to generate the coverage from your codebase.

The cost of not doing it is a mid-sprint incident in week four that stalls a pilot you need to close before Investor Day. At Antler, you do not have the weeks to recover that. The investment is small. The protection is real.

Frequently Asked Questions

Antler's post-residency window is six to eight weeks. In that window, you need to go from first customer conversations to signed pilots or paying customers who can be referenced at Investor Day. The constraint is not finding prospects: Antler's network and the residency give you those. The constraint is closing fast enough. Pilots close fast when they run cleanly. Pilots close slow when they have incidents. Automated coverage of the paths your pilot customers walk is what determines whether the six-week sprint ends with customers you can show or pilots still in evaluation.

Antler founders spend the first 10 weeks finding a co-founder and validating a direction. By the time the post-residency sprint starts, the team has been working together for six to eight weeks maximum and the codebase is young. Young codebases have more edge cases and persistence bugs than mature ones. The testing strategy for an Antler company is not comprehensive coverage: it is surgical coverage of the paths active pilots walk, with automated checks running before every deployment. That coverage protects the six-week window without adding the engineering overhead that would slow down a team that needs to ship daily.

The most common pilot-breaking bugs, based on the pattern in early-stage codebases built under time pressure, are: auth regressions from library updates or configuration changes, core workflow failures from state management bugs, output persistence failures where session-one outputs disappear by session two, and API contract breaks where a backend change is not reflected in the frontend. All four of these are coverable with simple automated checks that run before every deployment. They are also the four bug categories that, if they hit during a pilot session, create the most damage to the evaluation.

The co-founder question is a residency question, not a customer sprint question. By the time you're in the post-residency customer sprint, you've already formed the team. The shipping fast question at that point is: how do you divide engineering and customer work between two founders who both need to be doing both? The answer most successful Antler teams find is: one founder owns pilot relationships and does most of the customer communication, while the other focuses on shipping. The pilot-owning founder flags when critical path changes are needed before a pilot session. The shipping founder builds the gate. Shared awareness of pilot status is the coordination layer.

An Antler team in the customer sprint does not need QA in the traditional sense. Traditional QA, comprehensive test coverage, test-driven development: none of that is appropriate for a six-week customer sprint. What is appropriate is one hour of setup to cover the three or four flows your active pilots walk, with automated checks running before every deploy. That one hour of investment protects the entire sprint. Skip it and you are relying on pilot customers to be your bug detection system. In a six-week window with no time to recover from stalled pilots, that is the wrong system.

Antler Investor Day is a seed-round pitch event. External investors are evaluating whether to lead or join the round. For a team with six to eight weeks of post-residency history, the primary signal is customer evidence: are these real customers who can confirm the product works? Investors will call one or two references before committing. Those references were your pilots in the six weeks before Investor Day. How your pilots talk about the product experience determines how the diligence conversation goes. A reference who says 'solid team, product worked well, planning to expand' is a green light. A reference who mentions reliability issues is a yellow flag.