Preview environments for Docker apps are per-PR isolated stacks where every service in your docker-compose.yml (web, API, worker, database, cache) runs in its own namespace, gets its own URL, and tears down automatically. The three properties that make a stack provisionable as a preview environment are reproducible config, isolated state, and deterministic boot. Docker Compose is the most common pattern for encoding those properties, but it is not a requirement.

Most teams ship a working single-service preview without much friction. The web container builds, a URL appears in the PR, a reviewer clicks through the UI. The interesting question shows up around the third service. What actually makes a Docker stack ready to be cloned, isolated, routed, and torn down on every pull request? The answer is rarely about which framework you picked.

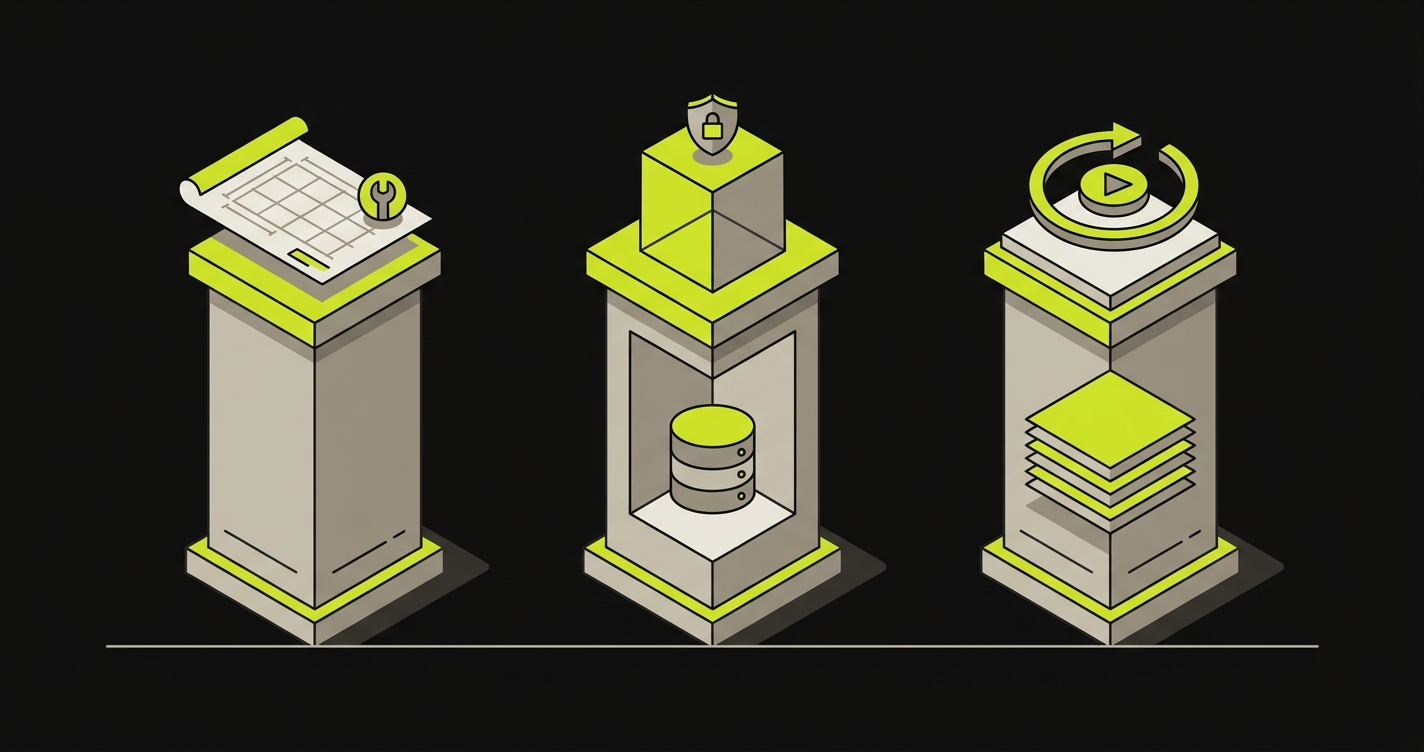

It comes down to three structural properties: reproducible config, isolated state, and deterministic boot. A stack that holds all three is straightforward to provision per-PR. A stack that misses any one of them turns into a thicket of bespoke glue: a secrets file checked into the repo, a teardown script that forgets to remove volumes, a routing layer that assumes a single hostname.

This article is a configuration reference for teams already past that inflection point. It walks through what each property looks like in practice, then maps the three onto common Docker-based stacks: Next.js with Postgres and Redis, Rails with Postgres and Sidekiq, and Django with Postgres and Celery. The same lens applies to non-container runtimes, which the final section covers.

The Three Properties of a Provisionable Stack

Not every stack is equally easy to spin up as an isolated per-PR environment. The difference is almost never about framework choice. It comes down to whether the stack satisfies three structural properties at the point a provisioning system tries to replicate it.

Reproducible Config

A stack has reproducible config when everything the services need to start can be described in an image or build manifest plus a set of environment variables. No host-machine state. No files that exist on your laptop but not in the repo. No manual steps that happen to be documented in a Confluence page nobody reads.

In Docker terms, this usually means your Dockerfile builds cleanly from the repo root, your docker-compose.yml references only images and environment variables, and your secrets are injected at runtime, not baked into the image.

The failure mode is subtle. A service that works locally because a config file lives in ~/.aws/credentials or a volume mount points to /Users/yourname/data will fail silently in a provisioning pipeline. The container starts, the health check passes, and then the first real request fails with a permissions error nobody anticipated.
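One way to catch this class of problem before provisioning is a small lint over the volume entries in a Compose file. The sketch below is illustrative only: `host_bind_mounts` is a hypothetical helper, and it assumes the volumes are written in Compose short syntax (`SOURCE:TARGET[:MODE]`).

```python
def host_bind_mounts(volume_specs):
    """Return short-syntax volume entries whose source is an absolute or home path."""
    flagged = []
    for spec in volume_specs:
        # An entry without ":" is just a container path, not a bind mount.
        if ":" not in spec:
            continue
        source = spec.split(":", 1)[0]
        # Sources starting with "/" or "~" live on the host machine.
        # Relative sources resolve against the compose file and can be
        # committed to the repo, so this sketch leaves them alone.
        if source.startswith(("/", "~")):
            flagged.append(spec)
    return flagged


specs = [
    "pgdata:/var/lib/postgresql/data",               # named volume
    "/Users/yourname/data:/app/data",                # host path
    "~/.aws/credentials:/root/.aws/credentials:ro",  # host credentials file
]
print(host_bind_mounts(specs))
```

Anything the helper flags is state that exists on one machine but not in the repo, which is exactly what the provisioning pipeline will be missing.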

Isolated State

Isolated state means each preview environment instance owns its storage. No two PRs share a database, a Redis key namespace, or a local filesystem path. Isolation is what prevents the flapping PR behavior where merging one branch corrupts the state in another reviewer's preview.

Concretely, this maps to: each Postgres container uses a named volume scoped to the PR (not a shared host directory), each Redis container has its own instance (not a shared server with key prefixes), and any local file storage the app touches goes into a volume that lives and dies with the environment.
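With Docker Compose, much of this scoping can come from the project name, because Compose prefixes the named volumes it creates with that name by default. A minimal sketch, assuming a `pr-<number>` naming scheme and volume names `pgdata` and `redisdata` (both are illustrative choices, not a convention from any particular tool):

```python
def preview_scope(pr_number, volumes=("pgdata", "redisdata")):
    """Derive the Compose project name, volume names, and lifecycle commands for one PR."""
    if pr_number <= 0:
        raise ValueError("pr_number must be positive")
    project = f"pr-{pr_number}"
    return {
        "project": project,
        # Default Compose naming for a named volume: <project>_<volume>.
        "volumes": [f"{project}_{name}" for name in volumes],
        "up": ["docker", "compose", "-p", project, "up", "-d"],
        # --volumes removes the named volumes along with the containers,
        # so state does not outlive the environment.
        "down": ["docker", "compose", "-p", project, "down", "--volumes"],
    }


scope = preview_scope(123)
print(scope["volumes"])
print(" ".join(scope["down"]))
```

Because every name derives from the PR number, two previews cannot end up pointing at the same volume, and the teardown command is known at the moment the environment is created.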

The failure mode here is usually lost time, not outright breakage. Shared-state previews look fine until two reviewers interact with them simultaneously, and then you spend two hours debugging what is actually a state collision, not a code bug.

Deterministic Boot

Deterministic boot means the service starts, reaches a healthy state, and is ready to accept traffic without any manual intervention after launch. No "run migrations manually," no "seed the database before testing," no "wait for the worker to warm up."

In practice, this requires health checks on every service, a startup sequence that runs migrations as part of the container entrypoint (not as a separate post-deploy step), and seed data that is either embedded in the migration or generated from a fixture the application controls.
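Part of that startup sequence is waiting for dependencies before migrations run. The helper below is a rough sketch of that wait using only the standard library; `wait_for_port` is a hypothetical name, and an open TCP port is a weaker signal than a real readiness check such as `pg_isready`, so treat it as a floor rather than a full health check.

```python
import socket
import time


def wait_for_port(host, port, timeout=30.0, interval=0.5):
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # Connection succeeded: the dependency is at least listening.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Refused or unreachable: retry until the deadline passes.
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval)
```

An entrypoint could call `wait_for_port("postgres", 5432)` (assuming the database service is named `postgres`), exit non-zero if it returns False, and only then run migrations and start the server.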

A stack that fails deterministic boot is usually still provisionable, but it requires humans in the loop. That defeats the purpose of per-PR orchestration.

Stack 1: Next.js + Postgres + Redis

This is the most common Node.js full-stack pattern for teams moving toward containerized previews. The frontend and API routes live in the Next.js process, Postgres handles persistent data, and Redis handles session state, caching, and sometimes a job queue.

Service Replication

For each PR, the provisioning layer needs three containers: the Next.js application, a Postgres instance, and a Redis instance. The Next.js image is built from the repo; Postgres and Redis use standard upstream images. The only per-PR configuration is the values injected into environment variables: connection strings, secrets, and the public URL for the preview itself.

A common mistake is using a single shared Postgres server with per-PR database names (e.g., app_pr_123). This satisfies isolation at the data level but creates a shared failure point: if the Postgres server goes down, all previews go down simultaneously. Per-PR containers are slower to start but eliminate that coupling.

Environment Routing

The Next.js application needs to know its own URL. Anything that generates absolute URLs (emails, OAuth callbacks, API base URLs used by the frontend) breaks silently if the application thinks it is running on localhost:3000 but requests arrive at pr-456.preview.yourapp.com.

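The cleanest pattern is injecting the preview URL as an environment variable (NEXT_PUBLIC_APP_URL for client-side, APP_URL for server-side) at provisioning time. The provisioning system knows the URL before the container starts, so it injects the value and the application never needs to detect its own hostname. One caveat: Next.js inlines NEXT_PUBLIC_ variables into the client bundle at build time, so NEXT_PUBLIC_APP_URL has to be set when the image is built for the PR, not only when the container starts.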
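On the provisioning side, deriving those values is a few lines. This is a sketch under assumptions: the `pr-<number>.<domain>` hostname scheme mirrors the example above, and `preview_url_env` is a hypothetical helper rather than part of any provisioning tool.

```python
def preview_url_env(pr_number, base_domain="preview.yourapp.com"):
    """Build the URL-related environment variables for one preview."""
    url = f"https://pr-{pr_number}.{base_domain}"
    return {
        # Read by server-side code at runtime.
        "APP_URL": url,
        # Read by client-side code; must also be present at build time.
        "NEXT_PUBLIC_APP_URL": url,
    }


print(preview_url_env(456))
```

The same dictionary can be passed both to the image build and to the container at start, which keeps the server-side and client-side values from drifting apart.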

Secrets and Config Propagation

The Postgres connection string, Redis connection string, and any third-party API keys all need to reach the Next.js container at startup. The most durable pattern is a secrets store (AWS Secrets Manager, HashiCorp Vault, or a simpler GitHub Secrets + CI pipeline approach) where each preview environment gets its own copy of the secret values rather than sharing credentials with staging.
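For the database and cache, per-environment credentials can simply be generated at provisioning time. The sketch below assumes the Compose services are named `postgres` and `redis` and that the database runs the official Postgres image, which reads `POSTGRES_USER`, `POSTGRES_PASSWORD`, and `POSTGRES_DB`; `new_preview_secrets` is a hypothetical helper. Third-party API keys would still come from your secrets store.

```python
import secrets
from urllib.parse import quote


def new_preview_secrets(db_user="app", db_name="app"):
    """Generate a fresh database password and matching connection strings."""
    password = secrets.token_urlsafe(24)
    # Percent-encode credentials so special characters cannot break the URL.
    user_enc = quote(db_user, safe="")
    pass_enc = quote(password, safe="")
    return {
        # Consumed by the Postgres container on first boot.
        "POSTGRES_USER": db_user,
        "POSTGRES_PASSWORD": password,
        "POSTGRES_DB": db_name,
        # Consumed by the application container.
        "DATABASE_URL": f"postgresql://{user_enc}:{pass_enc}@postgres:5432/{db_name}",
        "REDIS_URL": "redis://redis:6379/0",
    }
```

Each call produces a new password, so no preview shares database credentials with another preview or with staging.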

Shared staging credentials in previews create a specific failure mode: a developer testing a payment integration against a shared Stripe test key can trigger rate limits that affect every other active preview simultaneously. Per-environment secrets are not optional for anything touching external APIs.

Stack 2: Rails + Postgres + Sidekiq

Rails is the canonical example of a framework with strong opinions about its environment, which makes it slightly more demanding on the deterministic-boot property than Next.js.

Service Replication

Four containers: the Rails web process, a Sidekiq worker process, a Postgres instance, and a Redis instance, which Sidekiq uses as its job queue even though it is easy to leave out of the stack's name. The web and worker images are built from the same Dockerfile but started with different commands (rails server vs bundle exec sidekiq). Both depend on the same Postgres and Redis instances within the environment.

Sidekiq is frequently forgotten in preview setups because it runs as a background process. In a per-PR preview, forgetting the Sidekiq container means any flow that enqueues a job (email confirmation, webhook delivery, report generation) will silently stall. The job sits in Redis with nothing to process it, the UI shows a spinner, and the reviewer finishes the review without ever seeing the actual behavior.

Rails Migrations and Deterministic Boot

Rails migrations are the most common failure point for the deterministic-boot property. A container that starts with rails server and has no pending migrations will work fine. A container that starts against an empty database (new Postgres volume) will fail on the first request that touches the database.

The pattern that works: the container entrypoint runs bundle exec rails db:prepare and then starts the server. db:prepare creates the database and loads the schema if the database does not exist yet, and runs any pending migrations if it does. This is idempotent: running it on an already-migrated database is a no-op.

For seed data, db:seed is less reliable because seeds are often not idempotent. A safer approach is fixture-based seeds that check for existence before inserting, or a separate db:seed:replant call (which truncates the tables before reseeding) wrapped in a guard.

Environment Routing for Action Mailer

Rails uses default_url_options for Action Mailer, and the value must match the preview URL or email links will point at the wrong host, in the worst case production. Injecting the host as an environment variable (APP_HOST) and reading it in config/environments/preview.rb keeps the application config clean and the provisioning system in control of the value.

How Autonoma Provisions Full-Stack Preview Environments on Any Stack

The patterns above describe the configuration surface a team owns when running per-PR orchestration themselves. At two services it's contained. At three or more, the glue compounds: a teardown script that misses volumes, a secrets file committed by accident, an ALLOWED_HOSTS value that nobody injected at provisioning time so every preview returns 400s for two hours. At five services, most teams end up owning a bespoke per-PR orchestration system maintained by one engineer who eventually leaves.

Autonoma is our managed preview environments product. Layer 1 handles the infrastructure work described in this article: image builds, full-stack service replication across your containers, environment routing with per-PR URLs injected at provisioning time, secrets and config propagation from your existing secrets store, database isolation with per-environment volumes, and automatic teardown when the PR closes. Any stack that satisfies the three properties here (reproducible config, isolated state, deterministic boot) connects to Autonoma's per-PR orchestration without requiring Docker specifically. Node.js, Ruby, Python, Go, and other runtimes all provision through the same control plane.

Layer 2 runs Autonoma's three testing agents (Planner, Automator, Maintainer) against every preview automatically: the Planner reads your codebase to generate test cases and handles database state setup, the Automator executes them against the running environment, and the Maintainer keeps the test suite passing as the code changes. The preview environment exists and gets tested without a separate configuration step.

If you're sizing this against the configuration burden of running per-PR orchestration in-house, schedule a call with our founder and walk through your stack, your service count, and the parts you'd want managed versus the parts you'd keep.

Stack 3: Django + Postgres + Celery

Django shares the same structural pattern as Rails but has different boot mechanics. The configuration conventions differ enough that the failure modes are distinct.

Service Replication

Three containers: the Django web process (via Gunicorn or runserver depending on fidelity requirements), a Postgres instance, and a Celery worker process. Like the Rails pattern, the web and worker containers are built from the same image with different entrypoint commands (gunicorn app.wsgi vs celery -A app worker).

A Celery Beat container (the scheduler) is sometimes needed if the application uses periodic tasks. Beat is easy to omit from a preview definition because it runs silently. If any tests depend on scheduled task behavior, Beat needs to be in the compose definition.

Django Migrations and Deterministic Boot

Django's migration command is python manage.py migrate. Like Rails, this is safe to run on an already-migrated database, so the pattern is the same: run it in the container entrypoint before starting the web process.
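A container entrypoint following that pattern could look roughly like the sketch below. The `app.wsgi` module and the bind address are placeholders, and the `run` and `exec_server` parameters exist only so the sequence can be exercised without a real database; by default they are `subprocess.run` and `os.execvp`.

```python
import os
import subprocess
import sys

# Placeholder commands; adjust the module path and port to your project.
MIGRATE = [sys.executable, "manage.py", "migrate", "--noinput"]
SERVE = ["gunicorn", "app.wsgi", "--bind", "0.0.0.0:8000"]


def boot(run=subprocess.run, exec_server=os.execvp):
    """Apply migrations, then hand the process over to the web server."""
    # check=True raises on a non-zero exit, so a failed migration stops
    # the boot instead of serving traffic against a stale schema.
    run(MIGRATE, check=True)
    # Replace this process with the server so it receives signals directly.
    exec_server(SERVE[0], SERVE)
```

Calling `boot()` as the last line of the entrypoint script gives the container a single start command, so the health check only passes after migrations have been applied.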

Django's ALLOWED_HOSTS setting is the most common silent failure for environment routing in previews. If ALLOWED_HOSTS does not include the preview hostname, Django returns a 400 Bad Request for every request. The symptom looks like the container is unhealthy; the actual cause is a missing hostname in a config variable. Injecting the preview hostname as an environment variable and appending it to ALLOWED_HOSTS at startup resolves this.
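In a settings module, that can be a small helper. The variable name `PREVIEW_HOST` is an assumption for illustration; use whatever name your provisioning system injects, and note that ALLOWED_HOSTS expects a bare hostname, not a full URL.

```python
import os


def allowed_hosts(env=os.environ, base=("localhost", "127.0.0.1")):
    """Return the base hosts plus the injected preview hostname, if any."""
    hosts = list(base)
    # PREVIEW_HOST is a hypothetical variable set by the provisioning system,
    # for example "pr-123.preview.yourapp.com".
    preview_host = env.get("PREVIEW_HOST", "").strip()
    if preview_host and preview_host not in hosts:
        hosts.append(preview_host)
    return hosts


# In settings.py:
ALLOWED_HOSTS = allowed_hosts()
```

When the variable is missing the list falls back to the local defaults, so the same settings file works on a laptop and in a preview.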

Secrets and the Django Settings Pattern

Django settings are frequently split into a base module and environment-specific overrides imported at startup. This makes secrets injection straightforward: the provisioning system sets environment variables; the settings module reads them with os.environ.get(); no file-based config is needed.

DATABASE_URL is the standard environment variable pattern (compatible with dj-database-url) and the same convention works across all three stacks described in this article. A provisioning system that follows the DATABASE_URL convention needs no per-framework database config logic.
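To make the convention concrete, here is a standard-library sketch of what turning a DATABASE_URL into a Django database config involves. In a real project dj-database-url does this for you; `database_config` is a hypothetical stand-in that only handles Postgres URLs.

```python
from urllib.parse import unquote, urlsplit


def database_config(url):
    """Convert a postgres:// or postgresql:// URL into a Django DATABASES entry."""
    parts = urlsplit(url)
    if parts.scheme not in ("postgres", "postgresql"):
        raise ValueError(f"unsupported database scheme: {parts.scheme!r}")
    return {
        "ENGINE": "django.db.backends.postgresql",
        "NAME": parts.path.lstrip("/"),
        # Credentials may be percent-encoded inside the URL.
        "USER": unquote(parts.username or ""),
        "PASSWORD": unquote(parts.password or ""),
        "HOST": parts.hostname or "",
        "PORT": str(parts.port or ""),
    }


print(database_config("postgresql://app:s%40cret@postgres:5432/app"))
```

The provisioning system only ever produces one string per environment, and each framework's own tooling handles the translation into its native config format.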

Beyond Docker

Docker and Docker Compose are the most common way to express the three properties above, but they are not the only way. A Node.js application with a managed database connection, a Procfile-based boot sequence, and environment-variable-driven configuration satisfies all three properties without a container in sight. A Rails app deployed with Kamal to a VPS satisfies them differently than one deployed with Compose.

The property-based mental model matters here because it tells you when a stack is ready for per-PR provisioning regardless of containerization choice. If the build artifact is reproducible from the repo, the state is isolated per instance, and the service starts without manual steps, the stack is ready. The tooling layer above that (Compose, Kubernetes, a PaaS API, or managed preview infrastructure) is an implementation detail.

This also means that the transition from a Docker-based self-hosted preview setup to managed preview infrastructure does not require rewriting your stack. The three properties you verified when getting Compose-based previews working are the same ones the managed layer relies on, so the configuration reference in this article is valid in both directions. If you're evaluating the build-vs-buy question for that managed layer, the managed preview environments build-vs-buy breakdown covers the infrastructure categories and engineering-week estimates in detail.

If you're sizing the configuration work for per-PR full-stack previews on a non-trivial Docker stack, our co-founder Eugenio is happy to compare notes.

FAQ

Do I need Docker Compose to run preview environments?

No. Docker Compose is the most common pattern for encoding reproducible config, isolated state, and deterministic boot, but any stack that satisfies those three properties can be provisioned as a preview environment. Managed preview infrastructure like Autonoma's is not Docker-specific and handles any runtime that meets those criteria.

What is the difference between a staging environment and a preview environment?

A staging environment is a single, shared, long-lived environment used to validate changes before production. A preview environment is a short-lived, per-PR, isolated environment that exists for the duration of a pull request. The key difference is isolation: staging is shared state; preview environments are independent. See the [preview environment provisioning lifecycle](/blog/preview-environment-provisioning-lifecycle) article for the full lifecycle from PR open to teardown.

How should database migrations run in a preview environment?

Run migrations as part of the container entrypoint, not as a separate post-deploy step. For Rails, bundle exec rails db:prepare is idempotent and handles both new databases and already-migrated ones. For Django, python manage.py migrate has the same property. Running migrations before the server starts ensures the deterministic boot property: the service is ready to accept traffic when the health check passes. If you're using Autonoma for per-PR provisioning, Layer 1 replicates each service in your stack and preserves the container entrypoint sequence, so migrations run on first boot without your platform team writing separate orchestration logic for the startup order.

Can previews share a database server instead of running one database container per PR?

You can use per-environment databases on a shared server (e.g., app_pr_123 on a shared Postgres host). This saves container startup time but creates a shared failure point: if the shared Postgres instance goes down, all previews go down simultaneously. Per-PR Postgres containers are slower to start but eliminate that coupling. For most teams with fewer than 20 active PRs, per-PR containers are the more reliable default.

How should secrets and API keys be handled in preview environments?

Each preview environment should get its own copy of test-mode API keys, not shared credentials. Shared credentials create cross-environment interference: rate limits triggered by one reviewer affect all other active previews. A secrets store (AWS Secrets Manager, HashiCorp Vault, or GitHub Secrets via CI) that provisions per-environment values is the standard pattern. Never bake API keys into images. Autonoma's Layer 1 handles this propagation step: it reads from your connected secrets store and injects per-PR scoped values into each environment at provisioning time, so no manual secret-copy step is needed for each pull request.

What is environment routing?

Environment routing is the mechanism by which each per-PR environment gets its own URL and the application inside the environment knows that URL. It covers two things: the external routing layer (a reverse proxy or load balancer that maps pr-123.preview.yourapp.com to the correct container network) and the internal config propagation (injecting the URL as an environment variable so the application can generate correct absolute URLs for OAuth callbacks, emails, and client-side API calls).