Test automation ROI (also called QA automation ROI) measures the financial return of investing in automated QA relative to its total cost -- licensing, implementation, and ongoing overhead. In the traditional calculation (circa 2018-2022), ROI was mostly about replacing Selenium scripts with something that breaks less. In the AI era, the calculation is fundamentally different: AI coding tools now generate code significantly faster than human developers, which means QA has become the rate-limiting step in your delivery pipeline. The ROI of AI-powered test automation is no longer about eliminating manual testers -- it is about eliminating the QA bottleneck that is quietly capping how fast your team can ship. Break-even typically occurs within 2-4 months. The ROI compounds from there.

Your developers now ship in hours what used to take weeks. Your QA process has not changed. Here is the math on why that gap is costing you.

A 10-person engineering team using AI coding tools generates meaningfully more code output than the same team without AI assistance. GitHub's Copilot research measured ~55% faster task completion, and teams report even larger gains on full-feature work. Feature branches that took two weeks now close in three days. PR volume doubles. Deploy frequency climbs. And sitting at the end of every one of those PRs: a QA review process built for a slower era. If you are still relying on a human QA team -- or worse, manual testing -- to validate each release, your bottleneck is not engineering capacity anymore. It is QA.

This is not a conversation about whether testing matters. It is a business case calculation. Here is the framework to build it, with real numbers, for a CFO who will ask hard questions. Whether you are calculating the cost of QA automation for the first time or evaluating in-house QA vs automated testing, this guide gives you the spreadsheet-ready numbers.

The philosophical argument for why manual vs automated testing costs diverge so sharply in the AI era is a separate discussion. This article skips to the spreadsheet.

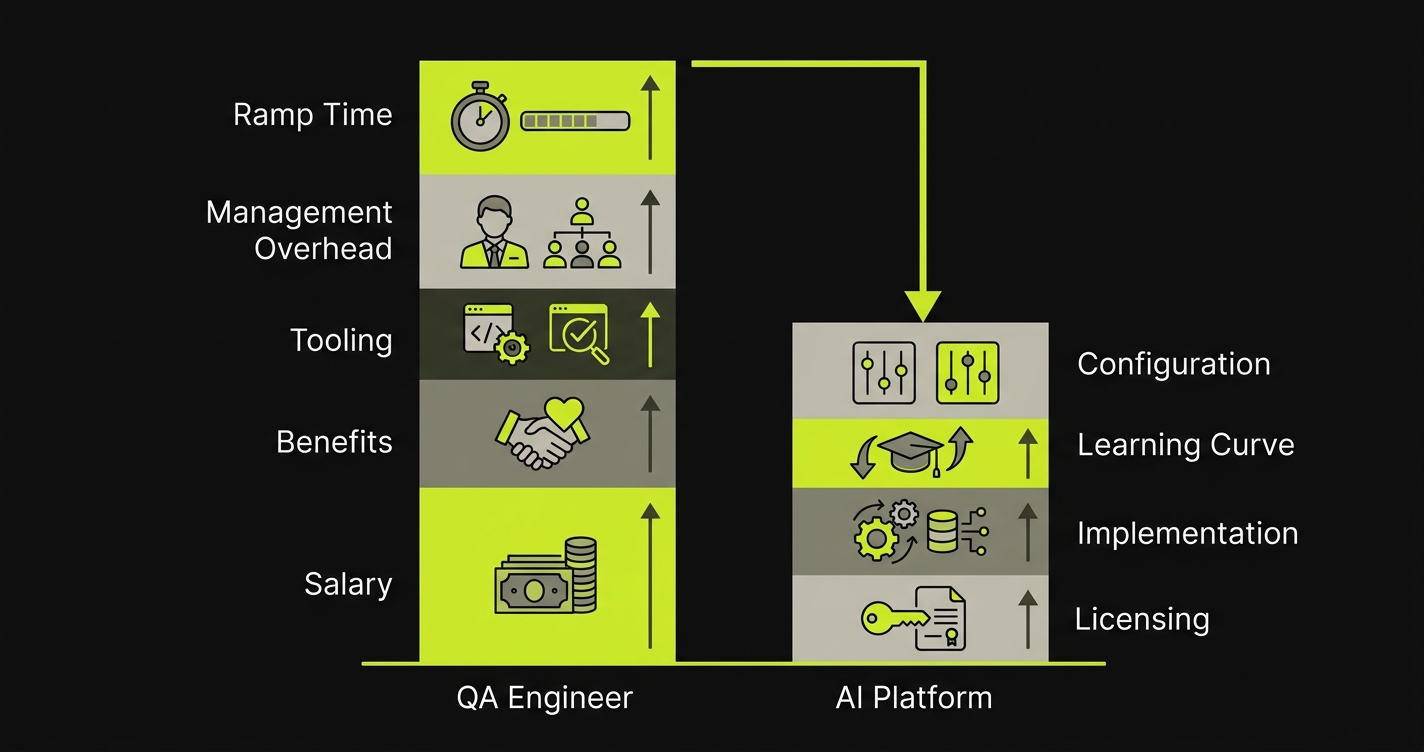

The Real Cost of a QA Engineer

Most engineering leaders significantly underestimate fully-loaded QA headcount cost. The base salary is the visible number. The actual cost is higher.

For a mid-level QA engineer in a US-based tech company:

| Cost Component | Low Estimate | High Estimate | Notes |
|---|---|---|---|
| Base salary | $120,000 | $180,000 | $120K-$180K is the 2026 US market range for mid-level QA engineers (Glassdoor, Levels.fyi) |
| Benefits + payroll taxes | $30,000 | $54,000 | 25-30% of base salary: health, dental, 401K match, FICA, unemployment |
| Tooling | $5,000 | $15,000 | Test management platform, CI seats, monitoring, device lab access |
| Management overhead | $10,000 | $18,000 | ~10% of engineering manager time spent on QA direction and review |
| Ramp time | $15,000 | $30,000 | 3-6 months to full productivity, including onboarding and codebase ramp |
| Fully loaded annual cost | $180,000 | $297,000 | Annualized including ramp amortized over 2 years |

The ramp figure deserves attention. A new QA engineer takes 3-6 months to reach full productivity in a mature codebase. At the low end of the salary range ($120,000), that is $30,000-$60,000 in salary paid during partial productivity. Amortized over a 2-year tenure assumption, it adds the $15,000-$30,000 per year shown in the table. If your QA hire churns within 1-2 years (not unusual in a competitive market), you never fully amortize that ramp investment.

For a team that needs 2-3 QA engineers to cover reasonable test surface area, the annual QA team cost is $360,000-$891,000. That is the baseline the ROI calculation has to beat.
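
The totals above are plain sums, which makes them easy to rebuild with your own figures. The sketch below reproduces the per-engineer and team ranges from the table; the dictionary keys and function names are illustrative, not part of any tool.

```python
# Annual cost components for one mid-level QA engineer, as (low, high) USD
# estimates copied from the table above.
COMPONENTS = {
    "base_salary": (120_000, 180_000),
    "benefits_and_payroll_taxes": (30_000, 54_000),
    "tooling": (5_000, 15_000),
    "management_overhead": (10_000, 18_000),
    "ramp_amortized": (15_000, 30_000),
}


def fully_loaded_cost(components):
    """Sum the low and the high estimates across all cost components."""
    low = sum(lo for lo, _ in components.values())
    high = sum(hi for _, hi in components.values())
    return low, high


def team_cost_range(per_engineer, min_engineers, max_engineers):
    """Cheapest case: fewest engineers at the low estimate.
    Most expensive case: most engineers at the high estimate."""
    low, high = per_engineer
    return low * min_engineers, high * max_engineers


per_engineer = fully_loaded_cost(COMPONENTS)
team = team_cost_range(per_engineer, 2, 3)

print(f"Per engineer: ${per_engineer[0]:,} - ${per_engineer[1]:,}")
print(f"Team of 2-3:  ${team[0]:,} - ${team[1]:,}")
```

Swap in your own salary band and overhead estimates before presenting the number; the structure of the sum is the part worth keeping.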

If you are still deciding whether to hire a QA engineer at all versus adopting an automated approach, this cost picture is the critical input.

AI Test Automation Cost: What Platforms Actually Charge

AI testing platforms have a different cost structure: front-loaded implementation, then flat or declining marginal cost as coverage scales.

| Cost Component | Low Estimate | High Estimate | Notes |
|---|---|---|---|
| Platform licensing | $12,000 | $60,000 | Annual SaaS cost; varies by team size and feature tier. Autonoma starts at ~$6,000/year. |
| Implementation | $3,000 | $10,000 | Engineering hours to connect codebase, set up CI integration, configure environments |
| Learning curve | $2,000 | $5,000 | 1-2 weeks of engineering time to understand results, tune coverage priorities |
| Ongoing configuration | $1,000 | $4,000 | Monthly hours to review flagged tests, adjust scope; declines as agents learn codebase |
| First-year total | $18,000 | $79,000 | Highest-cost year; on these figures, later years run roughly 20-30% lower ($13,000-$64,000), because implementation and learning curve costs do not recur while platform licensing and ongoing configuration remain |

The key structural difference: AI testing cost does not scale with headcount or PR volume. Adding 5 more developers to your team does not increase your AI testing bill in proportion to the added workload. Adding 5 more QA engineers does.

For a comparison of the specific tools available in this space, the AI testing tools guide covers the market in detail with scoring across six dimensions.

QA Automation Services: What Outsourced Testing Costs

There is a third option most ROI calculations ignore: outsourced QA automation services. Before settling on in-house QA vs an AI testing platform, leadership will ask about contracting.

| Cost Component | US-Based Firm | Offshore Firm | Notes |
|---|---|---|---|
| Hourly rate | $50-$80 | $15-$35 | QA-specialized consulting firms; rates vary by seniority |
| Typical annual engagement | $120,000-$250,000 | $50,000-$120,000 | 2-3 dedicated QA resources on a retainer model |
| Ramp time | 4-8 weeks | 6-12 weeks | External teams need more time to learn codebase and domain |
| Communication overhead | Low-Medium | Medium-High | Timezone gaps, context-switching, specification overhead |

Outsourced QA solves the hiring timeline problem but introduces new friction: longer feedback loops, specification overhead, and the persistent gap between external testers who follow scripts and internal engineers who understand product intent. The cost savings over in-house QA are real for offshore engagements ($50K-$120K vs $180K-$297K), but the coverage and speed metrics rarely match either an in-house engineer or an AI platform.

For teams evaluating all three options, the comparison becomes: in-house QA at $180K-$297K with deep product knowledge, outsourced QA at $50K-$250K with slower feedback loops, or AI testing at $18K-$79K with the broadest coverage and fastest execution. The math favors AI testing in most scenarios where regression and PR validation drive the QA workload.

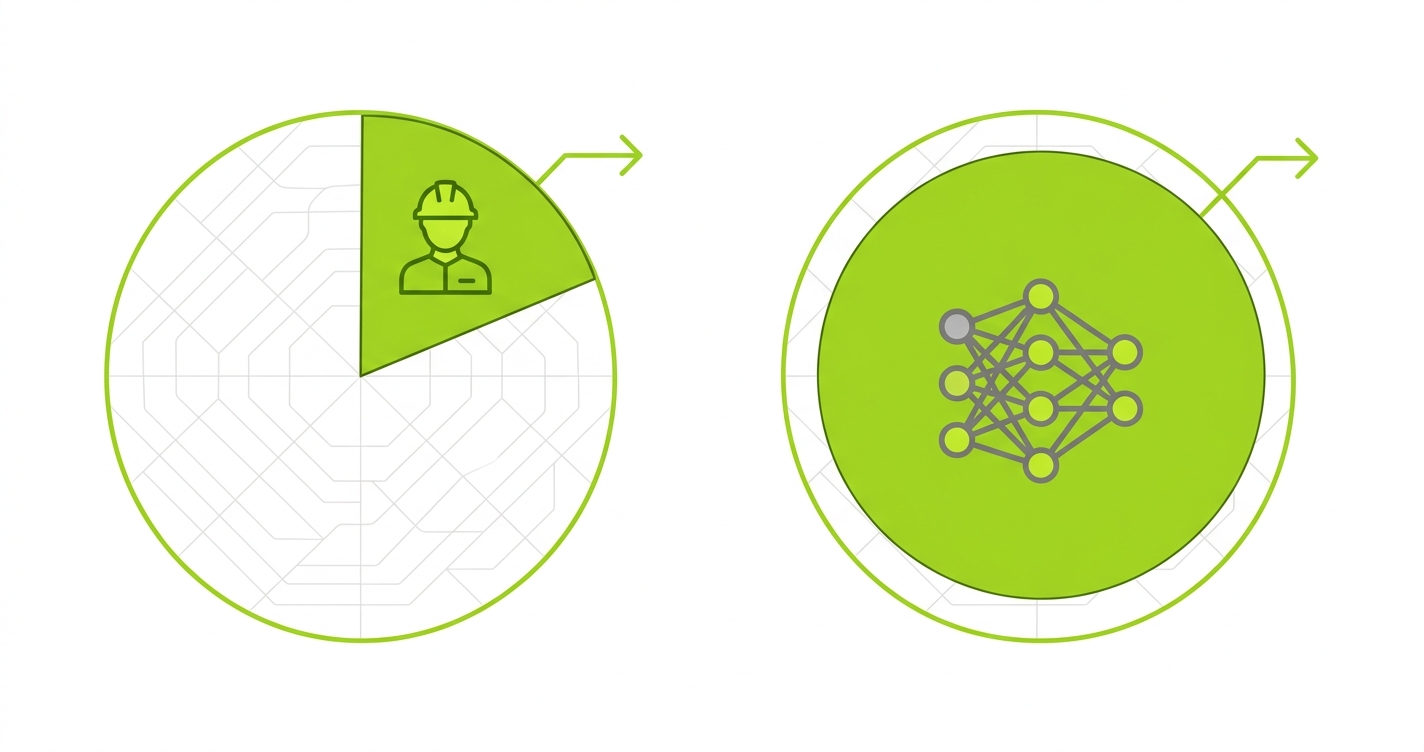

Coverage: What One QA Engineer Can Actually Do

The coverage gap is where the ROI case becomes most concrete. Before building the numbers, it is worth being honest about what human QA capacity actually delivers.

A single QA engineer, working at full productivity:

- Can manually test 5-10 critical user paths per day, depending on complexity

- Can write and maintain 3-5 automated test scripts per day (in a scripted automation workflow)

- Typically covers 20-40 critical paths in their maintained suite before maintenance burden makes the suite unscalable

- Requires 30-50% of their time on test maintenance as the codebase evolves

At 30 critical paths maintained by one QA engineer, and assuming your application has 150+ testable user flows (typical for a SaaS product with a few core modules), you are covering 20% of your surface area per engineer. Covering 80% requires 3-4 engineers -- plus the maintenance cost compounds as each engineer's suite grows.
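
The headcount math above is a ceiling division, sketched here with integer arithmetic so rounding cannot skew the result. The function names are illustrative; the inputs are the figures from the text.

```python
def ceil_div(a, b):
    """Integer ceiling division, avoiding floating-point rounding."""
    return -(-a // b)


def engineers_needed(total_flows, target_percent, paths_per_engineer):
    """QA engineers required to maintain target_percent of total_flows."""
    paths_to_cover = ceil_div(total_flows * target_percent, 100)
    return ceil_div(paths_to_cover, paths_per_engineer)


TOTAL_FLOWS = 150  # testable user flows, from the text

# One engineer maintaining 30 paths covers 20% of the surface area.
coverage_percent = 30 * 100 // TOTAL_FLOWS

# Engineers needed for 80% coverage at 30 and at 40 maintained paths each.
at_30_paths = engineers_needed(TOTAL_FLOWS, 80, 30)
at_40_paths = engineers_needed(TOTAL_FLOWS, 80, 40)

print(f"Coverage per engineer: {coverage_percent}%")
print(f"Engineers for 80% coverage: {at_40_paths}-{at_30_paths}")
```

The calculation ignores the growing maintenance burden per engineer, so treat the result as a lower bound on headcount.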

Autonoma approaches coverage differently. The Planner agent reads your codebase -- routes, components, API endpoints, user flows -- and generates test cases from the code itself. It handles database state setup automatically, generating the API calls needed to put the application in the right state for each test scenario. The Automator agent executes those tests against your running application. The Maintainer agent updates tests when code changes.

The result: a team can go from zero to coverage of 100+ critical paths in days, not weeks. As your codebase grows, coverage scales without additional headcount. The agents do not get bored, do not take shortcuts on test quality, and do not call in sick during your release window.

Speed: Mean-Time-to-Test

The second dimension of the ROI case is not just coverage -- it is how fast that coverage runs after each code change.

In a human-QA workflow, mean-time-to-test (MTT) after a PR merge looks like this:

- PR merged: developer notifies QA

- QA picks up the work: 2-8 hours (queue time, context switching)

- QA executes relevant tests: 2-4 hours for a focused regression

- QA documents results and communicates: 1-2 hours

- Total: 5-14 hours per PR, often longer in high-velocity periods

In an AI testing workflow:

- PR merged: CI pipeline triggers tests automatically

- Tests execute: 10-20 minutes for a focused suite

- Results available: immediately, linked to the PR

- Total: under 30 minutes per PR, consistently

At 20 PRs per week (not unusual for a team using AI coding tools), that speed differential is the difference between QA taking 1,750 hours per year and taking 170 hours per year. At $60/hour blended engineering cost, that is a $95,000 annual difference in time cost -- before counting the value of faster feedback loops on bugs.
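
To rerun this comparison with your own PR volume, a minimal sketch follows. It takes the 1,750 and 170 hour totals as given from the paragraph above rather than deriving them; the 170-hour figure is close to 20 PRs per week at 10 minutes of test execution each. The 52-week year and all names are assumptions of the sketch.

```python
PRS_PER_WEEK = 20
WEEKS_PER_YEAR = 52   # assumption: no weeks excluded for holidays
BLENDED_RATE = 60     # USD per hour, from the text


def annual_test_hours(prs_per_week, minutes_per_pr, weeks=WEEKS_PER_YEAR):
    """Hours per year spent testing, given a per-PR test duration."""
    return prs_per_week * weeks * minutes_per_pr / 60


# Automated suite at the 10-20 minute execution times quoted above.
ai_hours_fast = annual_test_hours(PRS_PER_WEEK, 10)
ai_hours_slow = annual_test_hours(PRS_PER_WEEK, 20)

# Annual totals quoted in the text, taken as given.
HUMAN_QA_HOURS = 1_750
AI_QA_HOURS = 170

annual_difference = (HUMAN_QA_HOURS - AI_QA_HOURS) * BLENDED_RATE

print(f"Automated test hours/year: {ai_hours_fast:.0f}-{ai_hours_slow:.0f}")
print(f"Time-cost difference: ${annual_difference:,}")
```

Note that the 5-14 hour per-PR figure is elapsed time including queueing, so it should not be multiplied by the hourly rate directly; use hands-on QA hours for the human side.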

For a detailed look at what continuous testing at high PR velocity actually requires, the implementation specifics matter beyond the time comparison.

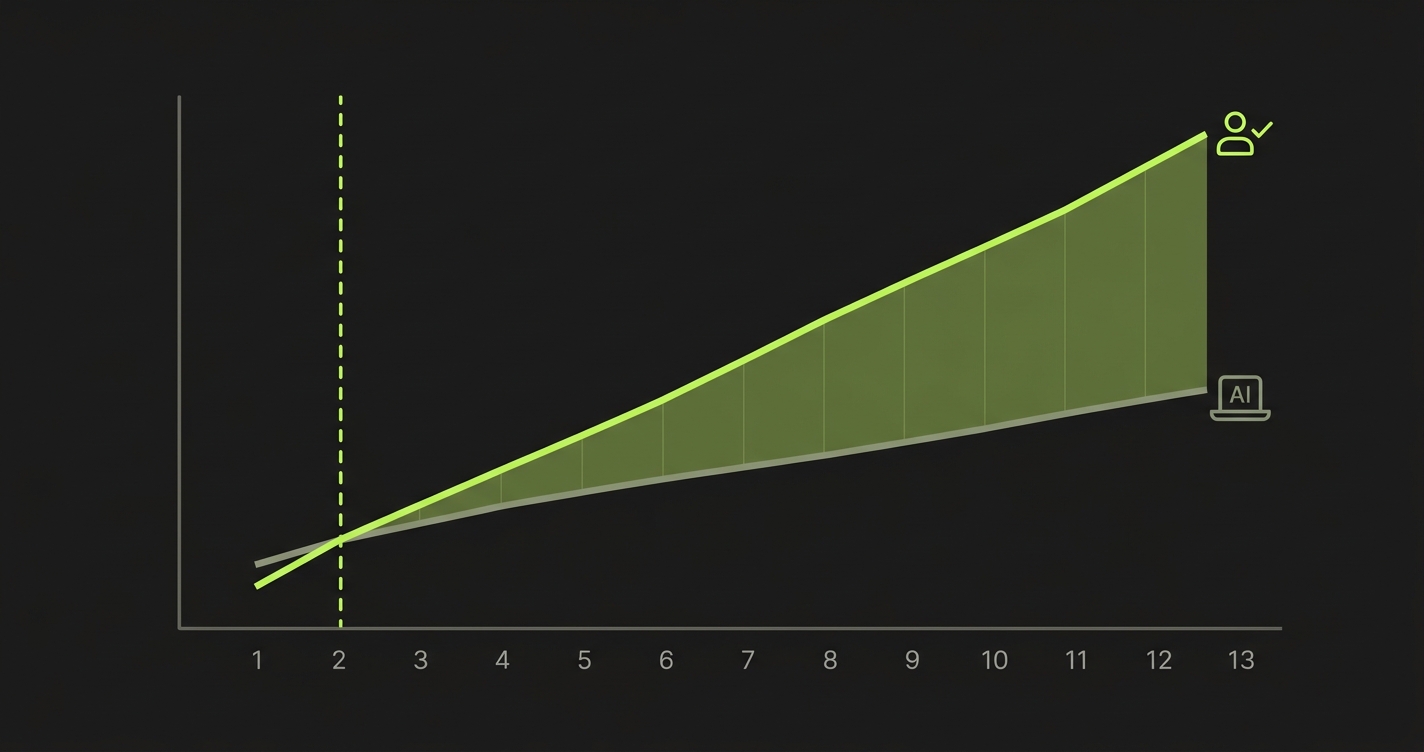

Test Automation ROI: The Break-Even Calculation

Break-even is where the CFO conversation gets concrete. Here is a worked example for a 10-person engineering team currently using 1 QA engineer and manual testing.

Current state (annual):

- 1 QA engineer fully loaded: $220,000

- QA tooling (existing): $8,000

- Bug-in-production cost: estimated $50,000 (post-release defects, incident response, engineering time diverted)

- Total: $278,000/year

AI testing platform (Year 1):

- Platform licensing: $36,000 (mid-range for a 10-person team; Autonoma starts at ~$6,000/year, a fraction of this estimate)

- Implementation: $6,000 (one-time, Year 1 only)

- Ongoing configuration: $3,000

- Bug-in-production cost (reduced): $15,000 (AI coverage catches more regressions pre-deploy, but does not eliminate all production defects)

- Total Year 1: $60,000

Month-by-month break-even:

| Month | Cumulative Current-State Cost | Cumulative AI Platform Cost | Net Savings |
|---|---|---|---|
| 1 | $23,167 | $10,500 (impl. + M1) | +$12,667 |
| 2 | $46,333 | $15,000 | +$31,333 |
| 3 | $69,500 | $19,500 | +$50,000 |
| 6 | $139,000 | $33,000 | +$106,000 |
| 12 | $278,000 | $60,000 | +$218,000 |

Break-even occurs in Month 1-2 for teams replacing an existing QA headcount. For teams where QA is additive (adding coverage without replacing a hire), break-even arrives when the platform prevents enough production bugs to offset its cost -- typically 2-4 months.
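
The month-by-month table can be regenerated from three inputs. This sketch assumes, as the table appears to, that the one-time implementation cost lands in Month 1 and every other cost (including production-bug cost) is spread evenly across 12 months; the names are illustrative.

```python
CURRENT_ANNUAL = 278_000   # current-state total from the worked example
PLATFORM_YEAR1 = 60_000    # AI platform Year 1 total
IMPLEMENTATION = 6_000     # one-time cost, paid up front

MONTHS = (1, 2, 3, 6, 12)


def break_even_rows(months=MONTHS):
    """Return (month, cumulative current, cumulative platform, net savings)."""
    recurring_monthly = (PLATFORM_YEAR1 - IMPLEMENTATION) / 12
    rows = []
    for month in months:
        current = CURRENT_ANNUAL * month / 12
        platform = IMPLEMENTATION + recurring_monthly * month
        rows.append((month, round(current), round(platform),
                     round(current - platform)))
    return rows


rows = break_even_rows()
for month, current, platform, net in rows:
    print(f"Month {month:>2}: ${current:>7,} vs ${platform:>6,}  net +${net:,}")
```

If your platform bills annually up front, move the full licensing fee into Month 1; break-even shifts later but the 12-month total is unchanged.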

In-House QA vs Automated Testing: The Summary

| Dimension | In-House QA Team | AI Testing Platform |
|---|---|---|
| Annual cost (10-person eng team) | $180K-$297K per engineer | $18K-$79K total (Year 1) |
| Coverage capacity | 20-40 critical paths per engineer | 100+ critical paths |
| Time to test per PR | 5-14 hours | Under 30 minutes |
| Scales with team growth | Linearly (add headcount) | Flat (configuration only) |
| Test maintenance | 30-50% of QA engineer time | Self-healing (automated) |
| Break-even | Baseline | Month 1-2 |

This is a conservative model. It does not count: faster release velocity value, reduced developer time on bug triage, or the compound effect of shipping more features with higher confidence. Those numbers make the ROI case stronger, but they are harder to defend in a budget meeting. Stick to the hard cost comparison first.

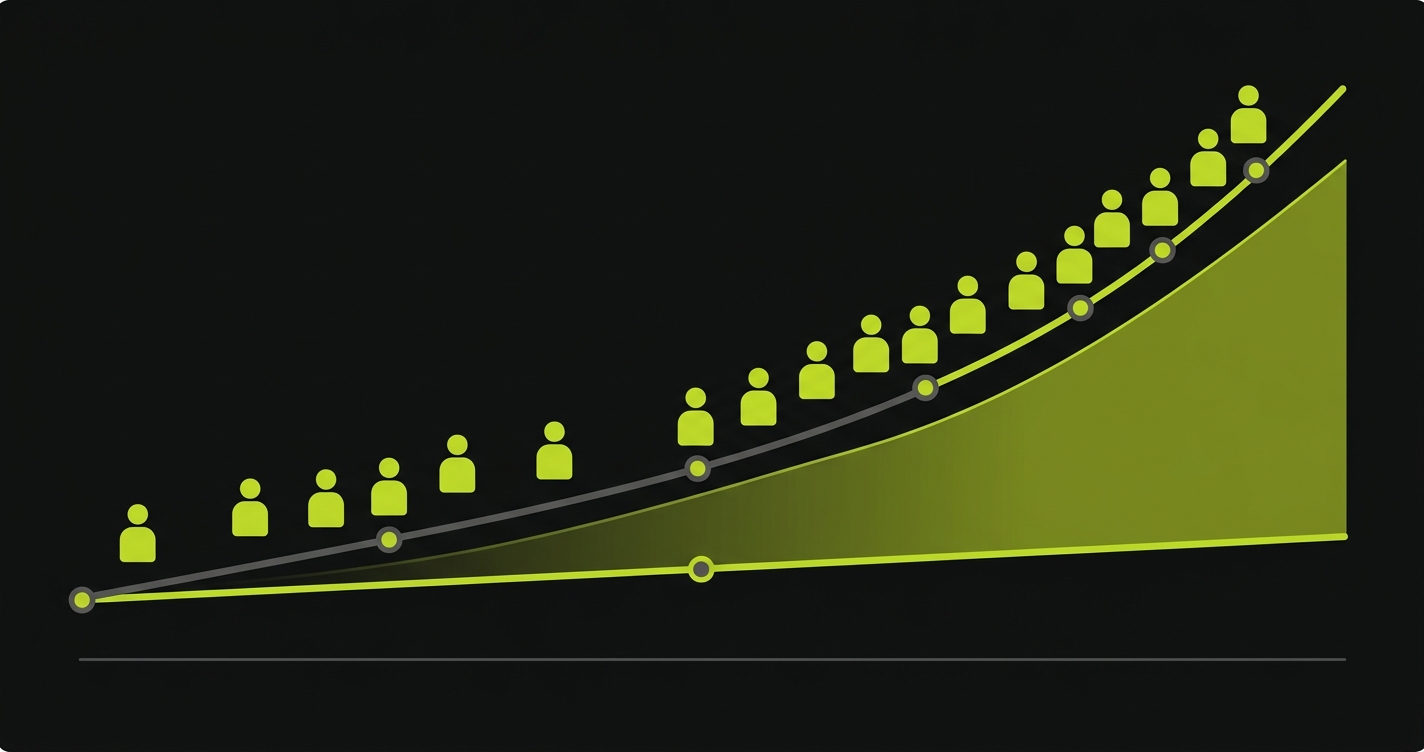

The Compounding Effect: Why ROI Widens Over Time

Here is what the 2020-era ROI articles miss entirely: in the AI coding era, the savings gap does not stay flat. It compounds.

When your team uses AI coding tools, they generate more code over time -- not linearly, but accelerating as developers get better at working with AI assistants. More code means more surface area to test. More surface area means more QA work per release. A human QA team scales with headcount; an AI testing platform scales with configuration.

By Year 2, a team that has grown from 10 to 15 developers (common at a funded startup) would typically need to scale from 1 to 2 QA engineers -- adding another $200,000+ to the annual bill. The AI testing platform cost? Likely $40,000-$50,000, with coverage that now spans the larger codebase automatically.

The ROI gap at Year 2:

- QA engineer path: $460,000-$520,000 (2 engineers, tooling, management)

- AI platform path: $40,000-$50,000

- Gap: $410,000-$480,000 per year

For teams that keep one senior QA engineer alongside the platform (the hybrid model described below), the AI-augmented path is roughly $160,000-$200,000 -- still less than half the pure-headcount path.

This compounding is the argument for moving early. Every month of delay is a month in which the gap widens while you remain on the side that costs more. The QA process improvement framework for AI-era bottlenecks addresses how to restructure your QA approach as the velocity gap widens.

In-House QA vs Automated Testing: The Hybrid Model

An honest ROI case acknowledges where AI testing does not fully replace human judgment. Getting this right makes your leadership proposal more credible -- because a CFO who knows you have thought through the tradeoffs is more likely to approve.

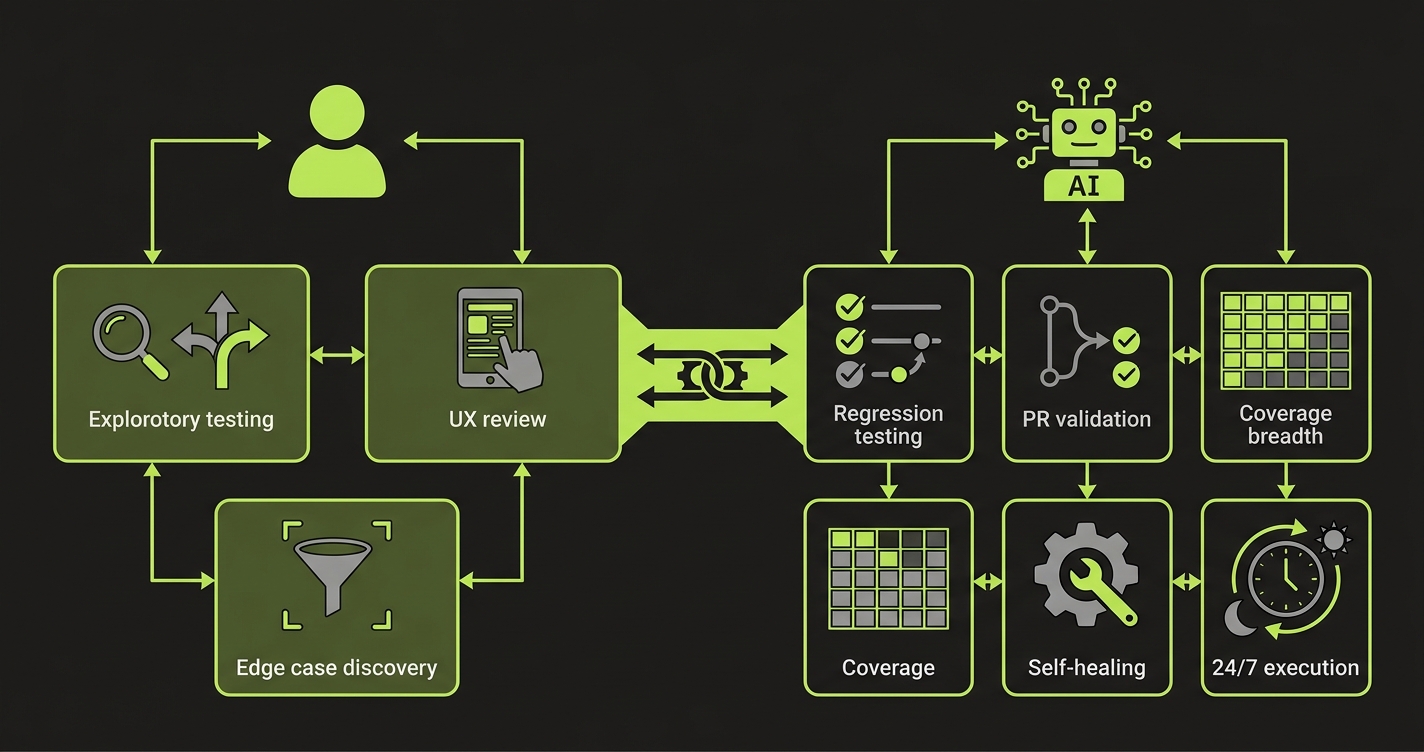

AI testing is strictly superior for:

- Regression coverage across established flows

- PR-level testing on every merge (speed and consistency)

- Coverage breadth -- testing 150 paths instead of 30

- Self-healing when UI or API changes break test selectors

- Night-time and weekend test execution without human oversight

Human QA still adds value for:

- Exploratory testing of genuinely novel features (new paradigms AI has not seen)

- Usability and UX judgment (does this flow feel right for a human?)

- Edge case discovery in ambiguous business logic where requirements are unclear

- Security testing that requires adversarial thinking beyond defined test cases

- Customer-scenario simulation for high-stakes regulated workflows

The honest version of the hybrid model: one senior QA engineer (or a QA-focused engineer embedded in the team) handling exploratory and UX review, with AI testing handling regression, PR validation, and coverage breadth. That is roughly $120,000-$150,000 in human QA cost plus $6,000-$60,000 in platform cost (Autonoma sits at the low end of that range), versus $360,000-$600,000 for a full QA team trying to cover the same ground.

If your first QA hire is not delivering the value you expected, the hybrid model reframe is often what fixes it -- the human QA role shifts from test-writer to test-strategy owner.

Test Automation ROI Framework: Plug In Your Numbers

Here is the plug-in-your-numbers version. Replace the bracketed values with your actuals.

| Line Item | Current State (QA Team) | AI Testing Platform |
|---|---|---|
| QA headcount cost | [Number of QA engineers] x $[fully loaded cost] | $0 (platform replaces or reduces headcount) |
| Platform licensing | $[existing tooling cost] | $[annual platform cost] |
| Implementation (Year 1 only) | $0 | $[hours x blended rate] |
| Test maintenance labor | [QA engineer hours x rate] x 0.35 | ~$0 (self-healing) |
| Bug-in-production cost | [Number of P1s/year] x $[avg incident cost] | [Projected reduction] x $[avg incident cost] |
| Velocity tax (delayed releases) | [Days of QA delay/sprint] x [sprints/year] x [daily revenue] | ~$0 (async, automated) |
| Annual total | Sum of above | Sum of above |
| Break-even month | Implementation cost / (current-state monthly cost - AI platform monthly cost), rounded up | |

A few inputs most teams undercount: the velocity tax (how many days per sprint does QA review add to your cycle time?), and the production bug cost. A single P1 incident in a SaaS product -- engineer time, customer communications, potential churn -- commonly runs $10,000-$50,000 in fully-loaded cost. A QA platform that prevents 3-5 P1s per year often pays for itself on that line alone.
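
For teams that prefer a script to a spreadsheet, here is a minimal sketch of the same framework. The `Scenario` class and `break_even_month` function are hypothetical names for illustration. Two assumptions are baked in: break-even is defined as the first month in which cumulative platform cost is at or below cumulative current-state cost, and test maintenance labor is left out as a separate field so the same QA hours are not counted twice alongside headcount. The example values are the worked example from earlier, with ongoing configuration folded into licensing.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Scenario:
    """Annual USD inputs for one column of the framework table."""
    headcount: float = 0.0
    licensing_and_tooling: float = 0.0
    implementation: float = 0.0     # one-time, Year 1 only
    production_bugs: float = 0.0
    velocity_tax: float = 0.0

    def annual_total(self) -> float:
        return (self.headcount + self.licensing_and_tooling
                + self.implementation + self.production_bugs
                + self.velocity_tax)

    def cumulative(self, month: int) -> float:
        """One-time cost up front; everything else spread over 12 months."""
        recurring = self.annual_total() - self.implementation
        return self.implementation + recurring * month / 12


def break_even_month(current: Scenario, platform: Scenario,
                     horizon: int = 36) -> Optional[int]:
    """First month where the platform is cumulatively no more expensive."""
    for month in range(1, horizon + 1):
        if platform.cumulative(month) <= current.cumulative(month):
            return month
    return None  # no break-even within the horizon


current = Scenario(headcount=220_000, licensing_and_tooling=8_000,
                   production_bugs=50_000)
platform = Scenario(licensing_and_tooling=39_000, implementation=6_000,
                    production_bugs=15_000)

print(f"Current state: ${current.annual_total():,.0f}")
print(f"AI platform:   ${platform.annual_total():,.0f}")
print(f"Break-even month: {break_even_month(current, platform)}")
```

A result of `None` is worth showing leadership too: it means the platform never pays back on hard costs alone under your inputs.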

We built Autonoma to be a line item in this calculation that is easy to justify. Connect your codebase, and agents generate and execute tests across your critical flows automatically. No scripts, no selectors to maintain, no weekend test runs. The Maintainer agent keeps tests current as your code changes. When you are presenting this framework to leadership, Autonoma belongs on the "AI testing platform" line at a cost that breaks even within 2 months for most engineering teams.

QA Automation Business Case: The Proposal That Gets Approved

The framework is complete. The presentation is where most engineering leaders lose the room.

Here is what actually gets CFO approval:

Lead with the business problem, not the tool. Do not open with "I want to buy a testing platform." Open with "Our release velocity is being capped by QA review time, and here is the dollar cost of that cap." Frame QA as a delivery bottleneck with a measurable cost, not as a quality problem with an uncertain solution.

Show the before/after in concrete numbers. Use the framework above. Fill in your actual numbers. A CFO reading "$278,000 current cost, $60,000 replacement cost, break-even in Month 1" has a clear approval decision. A CFO reading "this will improve our testing" has nothing to sign off on.

Anticipate the three objections:

The first objection is risk: "What if it misses bugs?" Counter this with coverage math. Your current QA coverage is [X] critical paths. An AI platform covers [5-10X] critical paths in the same window. The risk is not that AI testing misses bugs -- it is that your current coverage already misses bugs, just invisibly. The cost of not testing is measurable and typically higher than the risk of adopting automation.

The second objection is control: "How do we know the tests are good?" Counter this with the verification layer argument. Autonoma's agents include verification at every step -- the Planner validates test scenarios against your actual routes and components, the Automator confirms execution results, the Maintainer checks that test updates match actual UI changes. The tests are derived from your codebase, not from human guesses about user behavior.

The third objection is "we still need QA people." Agree. Propose the hybrid model explicitly: one senior QA engineer for exploratory and UX review, AI platform for regression and PR validation. That is a cost reduction and a capability increase in the same proposal.

Frame the risk of not investing. Every month without AI testing is a month where your AI-assisted developers are generating more untested code. The maintenance backlog grows. The coverage gap widens. The cost of not testing compounds in the same way the ROI compounds -- it just compounds in the wrong direction. Put a number on that: if your team ships 3x more code with AI tools and your QA coverage has not scaled, the volume of untested change reaching production has grown roughly 3x. What is one P1 incident per quarter worth in engineering and customer cost?

The CFO who hears "we can reduce QA cost by $200K/year, break-even in 2 months, and improve coverage 5x" is not looking for a reason to say no. They are looking for assurance that you have thought through the downside. Give them that, and you have the budget.

What is the typical ROI of test automation for a 10-person engineering team?

For a 10-person team replacing one QA engineer with an AI testing platform, the annual cost reduction is typically $180,000-$230,000 after platform costs. Break-even occurs in Month 1-2 of Year 1. By Year 2, if the team has grown and avoided adding a second QA engineer, cumulative savings commonly exceed $400,000. These numbers assume a mid-range AI platform at $30,000-$50,000/year and a fully loaded QA engineer cost of $200,000-$250,000.

How do you calculate the fully loaded cost of a QA engineer?

Start with base salary ($120,000-$180,000 for a mid-level US QA engineer in 2026). Add benefits and payroll taxes (25-30% of base), tooling ($5,000-$15,000), management overhead (10% of an EM's time), and ramp cost amortized over a 2-year tenure ($15,000-$30,000). Fully loaded cost typically ranges from $180,000 to $297,000 per year. If turnover is high and ramp costs are not fully amortized, the actual cost skews higher.

Does AI testing fully replace human QA engineers?

No, and making it binary is the mistake that weakens most ROI proposals. The strongest argument to leadership is the hybrid model: one senior QA engineer handling exploratory testing, usability review, and edge-case discovery, with AI testing covering regression, PR validation, and coverage breadth. This model typically costs $150,000-$200,000 per year (1 engineer + platform) versus $360,000-$600,000 for a full human QA team trying to cover the same surface area.

When does QA automation ROI turn negative?

QA automation ROI turns negative primarily in two scenarios. First, if test maintenance cost exceeds the savings from automation -- common with brittle Selenium scripts that break every sprint. Second, if the platform is adopted but coverage is not actively expanded -- paying for automation that covers the same 20 paths as manual testing produces minimal ROI. AI-native platforms with self-healing reduce the maintenance problem. Investing in coverage breadth solves the utilization problem.

What does a production bug cost?

A conservative estimate for a SaaS P1 incident: 8-16 engineer hours for diagnosis and fix ($1,000-$2,000), 2-4 hours of EM and PM time ($300-$600), customer-facing impact (communications, potential churn value at $500-$5,000 per affected customer), and post-mortem overhead ($500-$1,000). A single P1 commonly costs $5,000-$25,000 fully loaded. Teams shipping with AI tools and experiencing 4-8 P1s per year are spending $20,000-$200,000 annually on production defects. This is often the strongest single number in a QA automation ROI presentation.
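
The incident estimate above can be parameterized by how many customers an incident touches. This is a rough sketch using only the component ranges quoted in the paragraph; the function names and the choice to scale customer impact linearly are assumptions.

```python
def incident_cost_range(affected_customers):
    """(low, high) USD cost of one P1, from the component ranges above."""
    # Engineering fix + EM/PM time + post-mortem overhead.
    fixed_low = 1_000 + 300 + 500
    fixed_high = 2_000 + 600 + 1_000
    # Customer-facing impact at $500-$5,000 per affected customer.
    return (fixed_low + 500 * affected_customers,
            fixed_high + 5_000 * affected_customers)


def annual_p1_spend(incidents_per_year, cost_per_incident):
    """Multiply (low, high) incident counts by (low, high) cost per incident."""
    (n_low, n_high), (c_low, c_high) = incidents_per_year, cost_per_incident
    return n_low * c_low, n_high * c_high


one_customer = incident_cost_range(1)
four_customers = incident_cost_range(4)
annual = annual_p1_spend((4, 8), (5_000, 25_000))

print(f"P1 touching 1 customer:  ${one_customer[0]:,} - ${one_customer[1]:,}")
print(f"P1 touching 4 customers: ${four_customers[0]:,} - ${four_customers[1]:,}")
print(f"Annual spend at 4-8 P1s: ${annual[0]:,} - ${annual[1]:,}")
```

Replace the per-customer churn value with your own average contract value where you have it; that term dominates the total as soon as more than a handful of customers are affected.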

How long does it take to implement an AI testing platform?

Implementation time varies by platform and codebase complexity. Autonoma connects to your codebase and begins generating tests within hours of setup. Initial coverage across your critical paths is typically live within 1-3 days. Full integration with CI/CD pipelines (so tests run automatically on every PR) takes 1-2 weeks including tuning. This compares to the 3-6 months a new QA engineer needs to reach full productivity, before counting the time it takes to hire.

What objections should you expect from leadership?

Expect three main objections. First, risk: 'What if it misses bugs?' Answer with coverage math -- AI testing covers significantly more critical paths than a human QA team of equivalent cost. Second, control: 'How do we know the quality is good?' Answer with the verification architecture -- AI-native platforms generate tests from your codebase, not from guesses, and include verification layers at every step. Third, headcount: 'We still need QA people.' Agree, and propose the hybrid model -- one senior QA engineer plus AI platform costs less than two QA engineers while providing more coverage.