Looking for a mabl alternative built for AI-first teams? Mabl is a mature, enterprise-grade testing platform built in 2017 that has evolved toward AI through features like auto-healing and smart element detection. Autonoma was built in the AI era specifically for teams using AI code generation, with agents that read your codebase and generate tests without recording or scripting. Mabl wins on enterprise compliance, platform maturity, and ecosystem depth. Autonoma wins on AI-code awareness, test creation speed, and pricing. If your team is using Cursor, Copilot, or any AI coding tool to ship code faster, the question worth asking is whether your testing tool was designed to keep up with that pace.

There's a gap that nobody talks about when evaluating AI software testing platforms. It's not flaky tests. It's not slow CI pipelines. It's the tests that never get written because the tool has no idea that the feature was shipped.

When an AI coding assistant generates a new payment flow, a new authentication path, or a new API endpoint, a recorder-based testing tool is blind to it until a human walks through the app and captures the interaction. That gap, between what your AI assistant shipped and what your testing tool knows about, is where production bugs live.

We built Autonoma specifically to close that gap. The comparison below shows where Mabl and Autonoma land on the dimensions that matter most for engineering teams running AI-assisted development. Some of those dimensions favor Mabl. One of them changes the framing entirely.

| Dimension | Mabl | Autonoma |
|---|---|---|
| Test creation method | Browser recorder + low-code editor | AI agent reads codebase directly |
| AI capability | Auto-healing selectors | Full test generation + maintenance |
| AI-code awareness | Limited (selector-level) | Code-level understanding |
| Setup time | 1-3 hours | Under 30 minutes |
| Enterprise maturity | Strong (SSO, audit logs, SOC 2) | Growing |
| Pricing | Enterprise contracts | Usage-based, self-serve |
| Best for | QA teams with traditional workflows | Dev teams using AI code generation |

The Origin Story Matters

Mabl launched in 2017. At the time, the dominant testing problems were: tests that broke when the UI changed, tests that required dedicated QA engineers to write, and test suites that nobody maintained. Mabl's recorder-based approach with auto-healing addressed all three well. Enterprises adopted it because it reduced the scripting burden on QA teams and kept test suites healthier through UI changes.

By 2026, the problem landscape has shifted. The bottleneck is no longer "QA can't write tests fast enough." It's "developers are shipping AI-generated code significantly faster than any testing tool was designed to handle." A recorder-based workflow, even one with smart healing, was not designed for this cadence. Neither was the assumption that a human would walk through each flow to define what needs testing.

We built Autonoma in response to this specific shift. The premise is that your codebase already contains everything a testing system needs to know (routes, components, data flows, edge cases) and that an AI agent should be able to read that codebase and generate comprehensive test coverage without any human walkthrough. The recorder never enters the picture.

That architectural difference is worth understanding before reading the comparison tables below.

AI Testing Capabilities: Generation vs. Healing

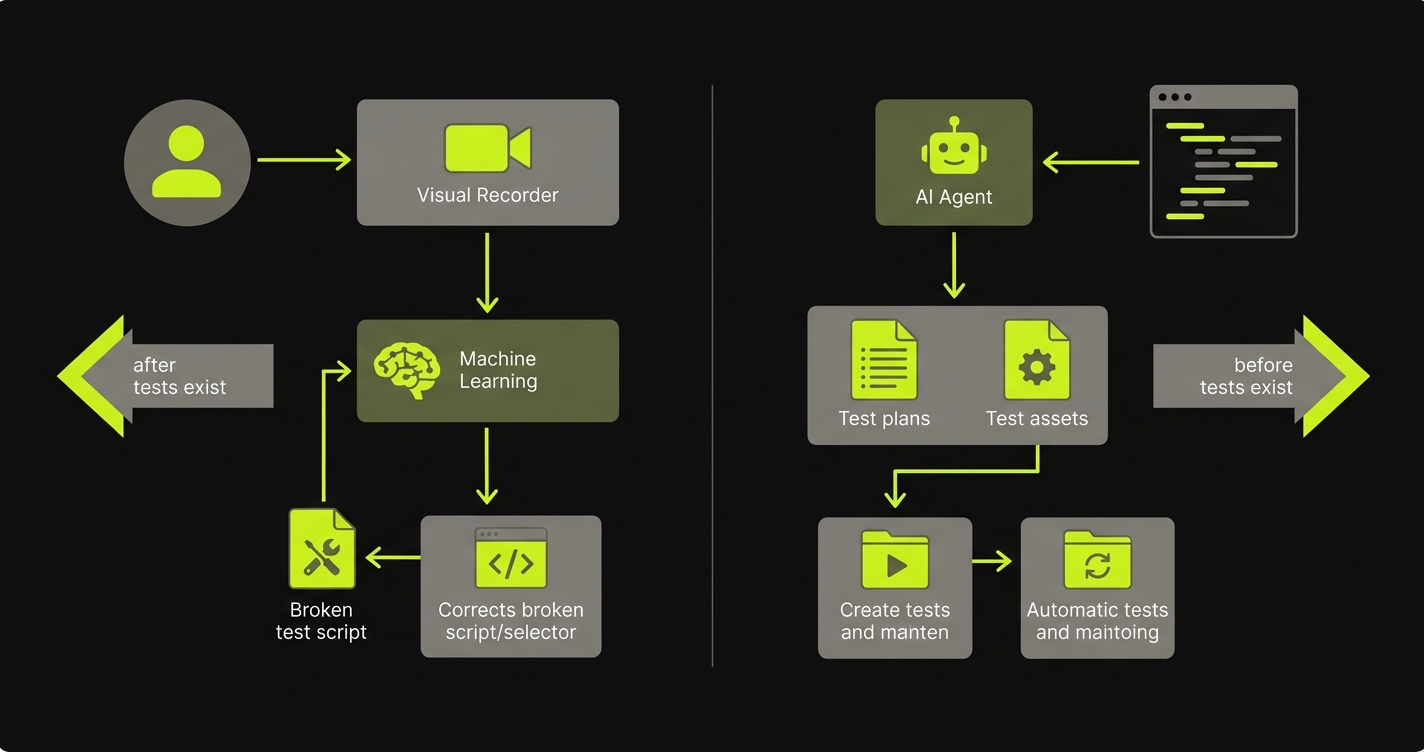

The most important comparison point is where each platform applies AI, because the answer reveals what problem each tool was optimized to solve.

Autonoma's AI is applied before tests exist. The Planner agent reads your codebase directly, scanning routes, components, API endpoints, and user flows. It generates test plans from that analysis, including edge cases and state-dependent scenarios that a human might miss in a time-pressured sprint. When AI-generated code ships and a flow changes, the system detects the change at the code level, not the selector level, and updates the relevant tests accordingly.
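Code-level change detection can be pictured as comparing two snapshots of the codebase rather than two snapshots of the DOM. The sketch below is a minimal illustration, not Autonoma's Planner: it assumes a hypothetical inventory that maps each route to its handler source, hashes each handler, and classifies routes as added, removed, or modified so that only the affected tests need regenerating.

```python
import hashlib


def fingerprint(handlers: dict[str, str]) -> dict[str, str]:
    """Map each route (e.g. "POST /checkout") to a SHA-256 hash of its handler source."""
    return {
        route: hashlib.sha256(source.encode("utf-8")).hexdigest()
        for route, source in handlers.items()
    }


def diff_routes(before: dict[str, str], after: dict[str, str]) -> dict[str, list[str]]:
    """Classify routes between two code snapshots; each bucket lists flows to (re)test."""
    old, new = fingerprint(before), fingerprint(after)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "modified": sorted(r for r in old.keys() & new.keys() if old[r] != new[r]),
    }


# Hypothetical snapshots: an AI assistant changed checkout and added a refund route.
before = {"GET /cart": "renderCart()", "POST /checkout": "charge(card)"}
after = {
    "GET /cart": "renderCart()",
    "POST /checkout": "charge(card); sendReceipt()",
    "POST /checkout/refund": "refund(order)",
}
print(diff_routes(before, after))
```

Hashing raw source treats a whitespace-only edit as a modification; a real system would more plausibly compare parsed structure. The point of the sketch is only that the trigger is a code diff, not a broken selector.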

Mabl's AI is applied after tests exist. The platform uses machine learning for auto-healing: it detects when a UI element has changed and attempts to locate that element automatically. It also uses smart element detection during recording to choose more resilient selectors. These are genuine capabilities. Auto-healing reduces the maintenance burden that plagues traditional scripted suites, and smart selectors reduce the initial fragility that causes tests to break. The constraint is that both capabilities operate downstream of the recording step. A human still walks through the application to define what gets tested. The AI optimizes the selectors and keeps them alive through changes; it does not decide what to test, and it does not read the codebase.
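To make the selector-level mechanism concrete, here is a toy version of healing: keep a fingerprint of the element captured at record time and, when the stored selector stops matching, pick the most similar element on the new page. It uses Jaccard similarity over attribute features purely for illustration. That choice is an assumption, not a description of Mabl's ML model, and the `Element` type and `heal` function are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Element:
    """Hypothetical, minimal stand-in for a DOM element."""
    tag: str
    attrs: dict[str, str] = field(default_factory=dict)
    text: str = ""


def features(el: Element) -> set[str]:
    """Turn an element into a set of comparable features (tag, text, attributes)."""
    feats = {f"tag={el.tag}", f"text={el.text.strip().lower()}"}
    feats.update(f"{k}={v}" for k, v in el.attrs.items())
    return feats


def similarity(a: Element, b: Element) -> float:
    """Jaccard similarity of the two feature sets (0.0 = nothing shared, 1.0 = identical)."""
    fa, fb = features(a), features(b)
    return len(fa & fb) / len(fa | fb)


def heal(recorded: Element, candidates: list[Element], threshold: float = 0.5) -> Element | None:
    """Return the candidate closest to the recorded element, or None if nothing is close enough."""
    best = max(candidates, key=lambda c: similarity(recorded, c), default=None)
    if best is None or similarity(recorded, best) < threshold:
        return None
    return best
```

Note what the function cannot do: it only answers "where did this element go?" If the same button now triggers a different flow, healing still succeeds, because it has no notion of what the flow is supposed to accomplish.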

| AI Capability | Mabl | Autonoma |

|---|---|---|

| Auto-healing (UI changes) | Yes, ML-based selector healing | Yes, code-change awareness |

| Test generation from codebase | No | Yes, Planner agent reads code |

| Smart element detection | Yes, during recording | Not applicable (no recording) |

| AI-code change awareness | Limited (selector-level) | Yes (code-level understanding) |

| Database state setup | Manual configuration | Planner agent generates endpoints automatically |

| Verification layers | Assertion-based | Multi-layer at each agent step |

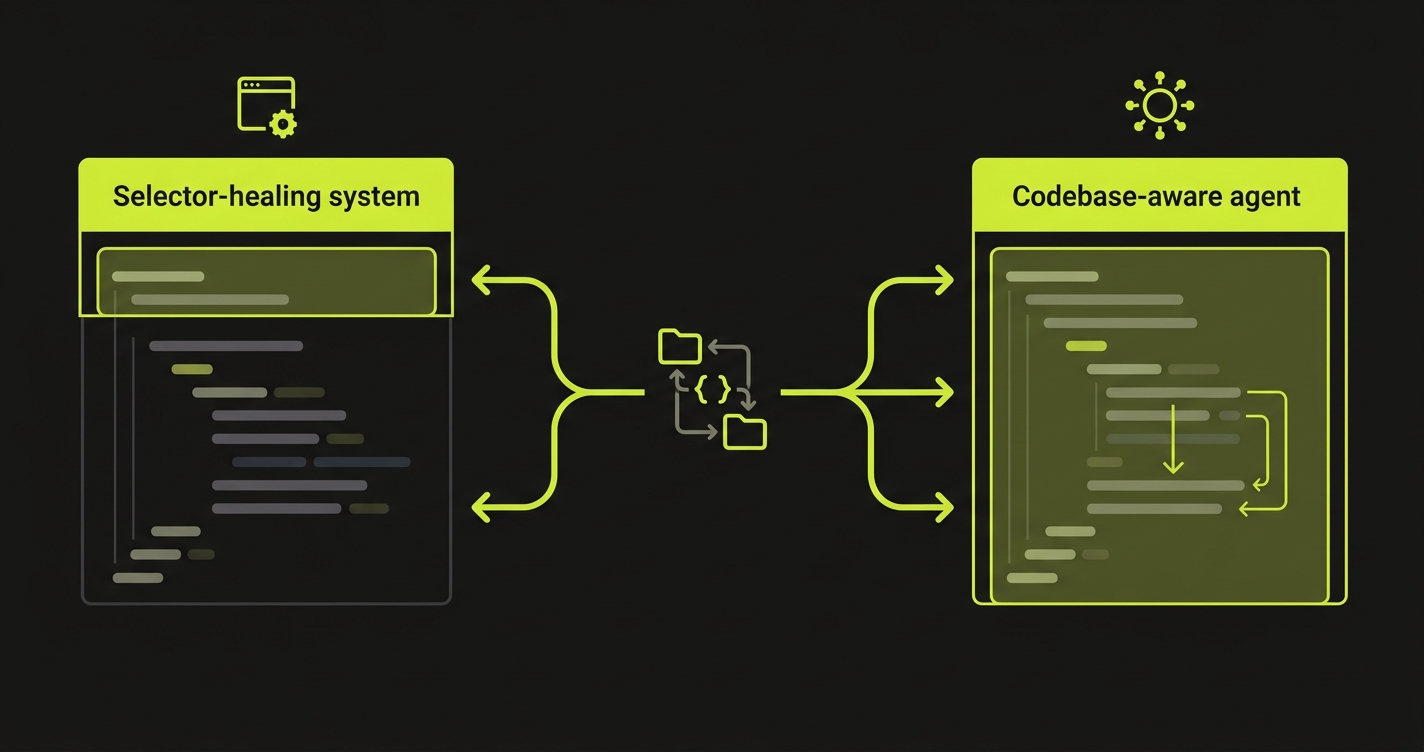

| Test maintenance model | Semi-automated (healing + manual) | Fully automated (Maintainer agent) |

The practical consequence of these differences shows up when your AI coding assistant ships a refactor. Mabl's auto-healing handles selector drift well; it was built for that. But when an AI assistant restructures the component architecture, renames routes, or generates a user flow that didn't exist before, selector-level healing doesn't close the gap. The tests still break or, worse, pass incorrectly because the flow itself changed while the selectors still resolve. For a deeper look at how self-healing works across platforms, see our self-healing test automation guide.
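A toy model makes the false-pass case concrete. Everything here is hypothetical: `run_checkout` simulates clicking a pay button in the original flow ("v1") and in an AI-refactored flow ("v2") that keeps the same button but puts a confirmation step behind it. A check that only confirms the selector resolves passes in both versions; a check on the outcome the flow exists to produce catches the change.

```python
def run_checkout(version: str) -> tuple[set[str], str]:
    """Simulate clicking #pay; return (selectors on the page, resulting order status).

    Hypothetical: "v1" completes payment on click, while "v2" keeps the same
    #pay button but only opens a confirmation step.
    """
    if version == "v1":
        return {"#pay", "#receipt"}, "paid"
    return {"#pay", "#confirm-dialog"}, "pending_confirmation"


def selector_check(version: str) -> bool:
    """Passes as long as the recorded selector still resolves."""
    selectors, _ = run_checkout(version)
    return "#pay" in selectors


def outcome_check(version: str) -> bool:
    """Passes only if the flow produced the business result it exists for."""
    _, status = run_checkout(version)
    return status == "paid"


for v in ("v1", "v2"):
    print(v, "selector:", selector_check(v), "outcome:", outcome_check(v))
```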

Test Creation: Time to First Test

Both platforms reduce the scripting burden compared to raw Playwright or Selenium. They reduce it in very different ways.

Autonoma connects to your codebase. You point it at your repository, the Planner agent reads your code, and tests are generated from that analysis. No recording session. No browser walkthrough. No one needs to know what flows to test, because the agent derives that from the code itself.

Mabl uses a recorder. You navigate through your application in a browser, and Mabl captures your interactions and converts them into a test. For teams used to writing Selenium by hand, this is a meaningful improvement. You don't need to know CSS selectors. You don't write JavaScript. The recorder handles the translation.

| Test Creation Benchmark | Mabl | Autonoma |

|---|---|---|

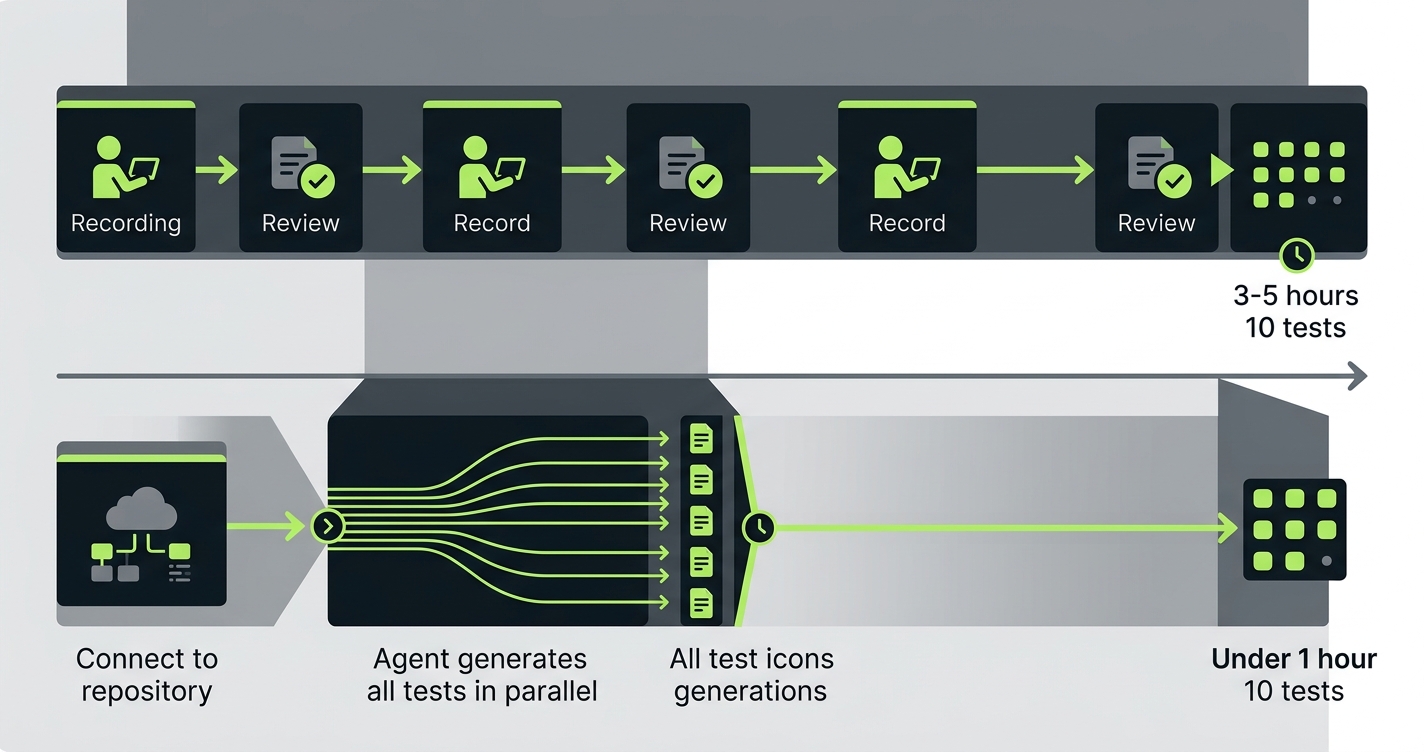

| Setup to first test running | 1-3 hours (record + configure) | Under 30 minutes (connect repo) |

| 10 tests covering a standard checkout flow | 3-5 hours (recording + review) (based on typical team reports) | Under 1 hour (agent generates) |

| Test creation requires knowing the flows | Yes | No (agent reads code) |

| New flow coverage after code change | Manual (re-record or script) | Automatic (Maintainer agent) |

| Test creation requires QA expertise | Low (recorder abstracts it) | None (fully hands-off) |

| Edge case coverage | Dependent on what was recorded | Derived from codebase analysis |

The benchmark difference compounds over time. For an initial suite of 10 tests, Mabl's recorder is fast enough. For a product that ships AI-generated code across multiple flows every week, the recording step becomes the bottleneck. Every new feature requires someone to walk through it. Every refactored flow requires a new recording session or manual test update. With Autonoma, coverage expands automatically as the codebase grows.

CI/CD Integration Depth

Both platforms integrate with standard CI/CD pipelines. The depth of integration and the philosophy behind it differ.

Autonoma is designed to sit inside the developer workflow from the start. The Automator agent runs tests against your application continuously, not just on scheduled triggers. Integration with terminal-based workflows and coding agents means developers get feedback without leaving their environment.

Mabl provides a CLI, GitHub Actions integration, and webhooks. You can trigger test runs from a pipeline, and results are reported back to the dashboard. The integration is reliable and well-documented. Mabl has been connecting to enterprise CI systems long enough that edge cases are handled.

| CI/CD Feature | Mabl | Autonoma |
|---|---|---|
| GitHub Actions integration | Yes, native | Yes, native |
| GitLab CI / CircleCI support | Yes | Yes |
| CLI for pipeline triggers | Yes, mabl CLI | Yes |
| PR-level test gating | Yes | Yes |
| Continuous background execution | Scheduled runs | Continuous agent execution |
| Developer terminal integration | Via CLI | Native, built for dev workflow |
| Coding agent compatibility | Limited (API-triggerable, not built-in) | Yes |
| Feedback loop speed | Minutes (run-and-report) | Near-continuous |

For teams running weekly release cycles, Mabl's scheduled and trigger-based execution model works fine. For teams deploying several times a day with AI assistance, the difference between "triggered when we ask" and "running continuously" is meaningful. A bug caught at the PR stage typically takes minutes to fix; the same bug caught after deploy can take hours.
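Catching a bug at the PR stage ultimately depends on a pipeline step that fails when any test does. The sketch below is tool-agnostic and assumes a hypothetical JSON results file holding a list of `{"name": ..., "status": ...}` objects; it does not reflect the output format of the mabl CLI or of Autonoma. CI systems, GitHub Actions included, treat a non-zero exit code as a failed step, and with a required status check that failure blocks the merge.

```python
import json
import sys


def failing_tests(results: list[dict]) -> list[str]:
    """Names of tests whose status is anything other than "passed"."""
    return [r["name"] for r in results if r.get("status") != "passed"]


def main(path: str) -> int:
    """Read a results file and return the exit code for the CI step (0 = pass, 1 = fail)."""
    with open(path, encoding="utf-8") as fh:
        results = json.load(fh)
    failed = failing_tests(results)
    for name in failed:
        print(f"FAILED: {name}", file=sys.stderr)
    return 1 if failed else 0


# Usage (file names are illustrative): python gate.py results.json
if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv[1]))
```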

Enterprise Features: The Maturity Tradeoff

This is the area where an honest comparison requires acknowledging Mabl's genuine strengths. Nine years of enterprise adoption produces capabilities that a newer platform has not yet replicated at full depth.

Mabl has SSO, role-based access control, detailed audit logs, SOC 2 compliance documentation, and established integrations with enterprise tooling like Jira, Slack, and major CI platforms. Their support organization is staffed for enterprise SLAs. If your procurement team has a security questionnaire, Mabl can fill it out.

Autonoma is a growing platform. The core testing capabilities are production-ready, and we work with engineering teams across the stack. But if your deployment requires a mature enterprise compliance posture with full audit-trail documentation on day one, factor that timeline into your decision.

Where Mabl leads on enterprise: Mabl's role-based permissions, audit logging, and compliance certifications are mature. For regulated industries or larger organizations with strict procurement requirements, this is a real differentiator.

Where Autonoma leads overall: For teams where AI code generation speed is the primary constraint, the compliance checklist is secondary to whether your testing tool can keep up with your development pace. A mature compliance posture doesn't help if your tests are always a step behind the codebase.

For similar enterprise tradeoffs across other AI testing tools, see our Autonoma vs BrowserStack and Autonoma vs Momentic comparisons. If you're evaluating several options, our mabl alternatives roundup covers the landscape, and the AI testing tools definitive guide maps the broader category.

Pricing: Structure and Accessibility

Mabl uses enterprise pricing. Exact figures are not published, but teams consistently report annual contract values starting in the five-figure range. The model is designed for procurement cycles, with credit-based pricing that scales with test volume and cloud usage. For a QA team embedded in a mid-to-large enterprise, this is expected. For a 10-person startup running heavy AI code generation, it's a significant commitment.

Autonoma uses usage-based pricing with self-serve entry tiers, so startup and growth-stage teams can evaluate real coverage before committing at larger scale.

| Pricing Factor | Mabl | Autonoma |
|---|---|---|
| Pricing model | Enterprise contracts, credit-based | Usage-based, accessible tiers |
| Entry point | Typically five figures annually | Lower entry, scales with usage |
| Free trial | Yes (limited trial period) | Yes |
| Procurement cycle required | Usually yes | No (self-serve available) |
| Best fit | Mid-market to enterprise QA teams | Startups to mid-market dev teams |

The pricing difference matters most at the evaluation stage. Mabl's enterprise model means decisions tend to involve procurement, legal review, and a structured demo process. Autonoma is available self-serve, which means a team can connect their codebase, see tests generated, and validate coverage before any contract conversation begins.

The AI-Code Awareness Gap

This is the AI software testing question that matters most for the teams this article is written for: those where developers use Cursor, GitHub Copilot, Claude Code, or similar tools to generate substantial portions of the codebase.

When AI generates code, a few things happen that traditional testing tools weren't designed for. Code changes faster. Refactors are larger. New routes and components appear in a single PR that would have taken a sprint to write manually. And critically, the person who shipped the code may not fully understand every edge case in what was generated.

Mabl's auto-healing handles the downstream consequence: when the UI changes, selectors are updated. But it doesn't address the upstream problem: the tests themselves may not cover what the AI generated. If Copilot introduces a new payment flow and nobody records a test for that flow, Mabl has no mechanism to detect the gap.

Autonoma addresses this at the source. When the Planner agent reads the codebase, it reads what is actually there, including everything the AI assistant generated. New routes get test coverage. New components get exercised. New data flows get validated. The test suite evolves with the codebase automatically, because the codebase is the spec.
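The upstream gap can be expressed as a set difference: flows declared in the code minus flows that have a test. The sketch below is a deliberately naive illustration. It assumes an Express-style codebase where routes are registered as `app.get('/path', ...)` or `router.post(...)`, uses a regular expression as a stand-in for the real static analysis a codebase-reading agent would need, and treats the set of tested routes as hypothetical input.

```python
import re

# Matches Express-style registrations such as app.get('/cart', ...) or router.post("/pay", ...).
ROUTE_PATTERN = re.compile(
    r"""\b(?:app|router)\.(get|post|put|patch|delete)\(\s*['"]([^'"]+)['"]"""
)


def declared_routes(source: str) -> set[str]:
    """Collect "METHOD /path" strings for every route registered in the source."""
    return {f"{method.upper()} {path}" for method, path in ROUTE_PATTERN.findall(source)}


def coverage_gaps(source: str, tested: set[str]) -> list[str]:
    """Routes that exist in the code but have no test: the flows nobody recorded."""
    return sorted(declared_routes(source) - tested)


source = """
app.get('/cart', showCart);
router.post("/checkout/pay", pay);
app.post('/checkout/refund', refund);  // added in the latest AI-generated PR
"""
print(coverage_gaps(source, {"GET /cart", "POST /checkout/pay"}))
```

A recorder-based suite starts from the `tested` side of this difference; reading the code starts from the `declared` side, which is why new routes show up even when nobody walked through them.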

This isn't a theoretical advantage. It's the reason we built the platform this way. We observed that teams shipping AI-generated code had test suites that lagged behind the codebase by weeks, not because nobody cared about testing, but because no tool was designed to close that gap automatically.

Verdict: Is Autonoma the Right Mabl Alternative for Your Team?

Autonoma is the right choice when:

- Your team is using AI code generation tools and shipping code faster than traditional testing workflows can track

- You want test coverage to expand automatically as the codebase grows, without recording sessions or scripting

- You need tests that understand code-level changes, not just selector-level changes

- Your team doesn't have dedicated QA bandwidth and needs a fully hands-off testing layer

Mabl is a strong choice when:

- Your team has dedicated QA engineers who are comfortable with a recorder-based workflow

- Your organization has enterprise procurement requirements, compliance mandates, or audit trail needs

- You have an established testing culture and want to reduce maintenance burden on existing test suites

- Your development pace is traditional or moderately accelerated, not AI-native

The honest frame is this: if AI is generating code faster than any recording-based tool can track, that is the defining constraint for your testing strategy. It's the one we built Autonoma to solve. Mabl was built to solve 2017's testing problem and has done that well. The platform is mature, the compliance story is solid, and the enterprise ecosystem is real. If those are your constraints, it's a reasonable choice.

Frequently Asked Questions

What is the best mabl alternative for teams using AI code generation?

Autonoma is the right mabl alternative for teams where AI code generation speed is the primary constraint. Mabl is a mature, enterprise-grade platform with strong compliance and a recorder-based workflow that works well for traditional QA teams. Autonoma was built specifically for teams using AI coding tools, where tests need to be generated from the codebase automatically rather than recorded from browser walkthroughs. If your bottleneck is keeping test coverage up with AI-generated code changes, Autonoma addresses that directly.

How does Mabl's auto-healing compare to Autonoma's Maintainer agent?

Mabl's auto-healing operates at the selector level. When a UI element moves or changes, Mabl's ML detects the new location and updates the selector. This is effective for UI drift. Autonoma's Maintainer agent operates at the code level. When the codebase changes, including structural changes from AI code generation, the agent understands the intent of the test and updates it accordingly. The distinction matters most when code changes are architectural, not just visual.

Does Mabl use a recorder to create tests?

Yes. Mabl's test creation model is centered on a browser recorder. You navigate through your application and Mabl captures the interactions. The platform also offers a low-code editor for adjustments after recording. Autonoma does not use a recorder. Tests are generated by the Planner agent reading your codebase directly.

Which platform is better for enterprise compliance?

Mabl has a significant advantage for enterprise compliance. With nine years of enterprise adoption, Mabl offers SSO, role-based access control, audit logs, and SOC 2 compliance documentation. Autonoma is a growing platform and is building toward full enterprise compliance features. For regulated industries or organizations with strict procurement requirements, Mabl is the more mature choice today.

How do Mabl and Autonoma handle database state for tests?

Setting up database state for tests is one of the more overlooked challenges in end-to-end testing. Mabl requires manual configuration of test data and state. Autonoma's Planner agent handles this automatically: it generates the API endpoints needed to put the database in the right state for each test scenario. This removes a significant manual overhead from the test creation process.
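One common pattern for this is a test-only setup endpoint that resets the database and seeds a named scenario before each test. The sketch below shows the core of such a handler against an in-memory dict. The scenario names, the `handle_setup_request` function, and the idea of exposing it at a route like `POST /test-setup/<scenario>` are all hypothetical, not Autonoma's generated code; a real version would run only in test environments and write to the actual database.

```python
from typing import Callable

Seeder = Callable[[dict], None]
SEEDERS: dict[str, Seeder] = {}


def scenario(name: str) -> Callable[[Seeder], Seeder]:
    """Decorator that registers a seeding function under a scenario name."""
    def register(fn: Seeder) -> Seeder:
        SEEDERS[name] = fn
        return fn
    return register


@scenario("user_with_expired_card")
def _seed_expired_card(db: dict) -> None:
    db["users"] = {"u1": {"email": "test@example.com"}}
    db["cards"] = {"c1": {"user": "u1", "expired": True}}


@scenario("empty_cart")
def _seed_empty_cart(db: dict) -> None:
    db["users"] = {"u1": {"email": "test@example.com"}}
    db["carts"] = {"u1": []}


def handle_setup_request(db: dict, name: str) -> tuple[int, dict]:
    """Reset the store, seed the requested scenario, and return (HTTP status, JSON body)."""
    seeder = SEEDERS.get(name)
    if seeder is None:
        return 404, {"error": f"unknown scenario: {name}"}
    db.clear()  # start every scenario from a known-empty state
    seeder(db)
    return 200, {"scenario": name, "tables": sorted(db)}
```

Registering seeders by name keeps each test's precondition explicit: a test for an expired-card edge case requests that scenario instead of depending on whatever state a previous test left behind.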

What is AI software testing?

AI software testing refers to testing approaches where artificial intelligence is used to automate or augment parts of the testing process. This includes tools like Mabl that use ML for auto-healing and smart element detection, and platforms like Autonoma that use multi-agent AI systems to generate, execute, and maintain tests from codebase analysis. The category covers a wide range: some tools apply AI narrowly to specific pain points like selector maintenance, while others use AI to replace the entire test creation and maintenance workflow.