Autonoma vs Momentic is the closest comparison in the AI testing space. Both are AI-native platforms that eliminate hand-written test scripts. The architectural difference is the source of truth: Momentic uses a visual, session-based approach where testers describe or click through flows in a no-code editor; Autonoma connects directly to your codebase and has agents derive tests from your routes, components, and data models automatically. If you are evaluating a Momentic alternative that eliminates manual test authorship entirely, this comparison covers every dimension that matters: AI architecture, code-change awareness, test creation experience, integrations, reporting, and pricing.

Here is the scenario that ends most AI testing evaluations badly. The platform demo looks great. You author fifteen test flows, they pass, everyone is happy. Then your team ships a sprint's worth of AI-generated code. New routes appear, components get refactored, edge cases multiply. You run the test suite. Half the tests are stale, broken, or simply do not cover the new surface area. Someone has to author more tests. That is not a tool failing at maintenance. That is a tool that never knew what your codebase looked like in the first place.

Engineering leads who have already ruled out the traditional options (Selenium is too much maintenance, Cypress is too brittle at scale, mabl is expensive and still semi-manual; see our mabl comparison) usually land on Momentic and Autonoma as the final shortlist. Both are genuinely AI-native. The distinction is whether tests originate from a human-authored session (Momentic) or from the codebase itself (Autonoma). We built Autonoma around that second premise, and this comparison will show you exactly where the architectural difference compounds.

How Each Platform Uses AI

This is where the real difference lives.

Momentic's AI Approach

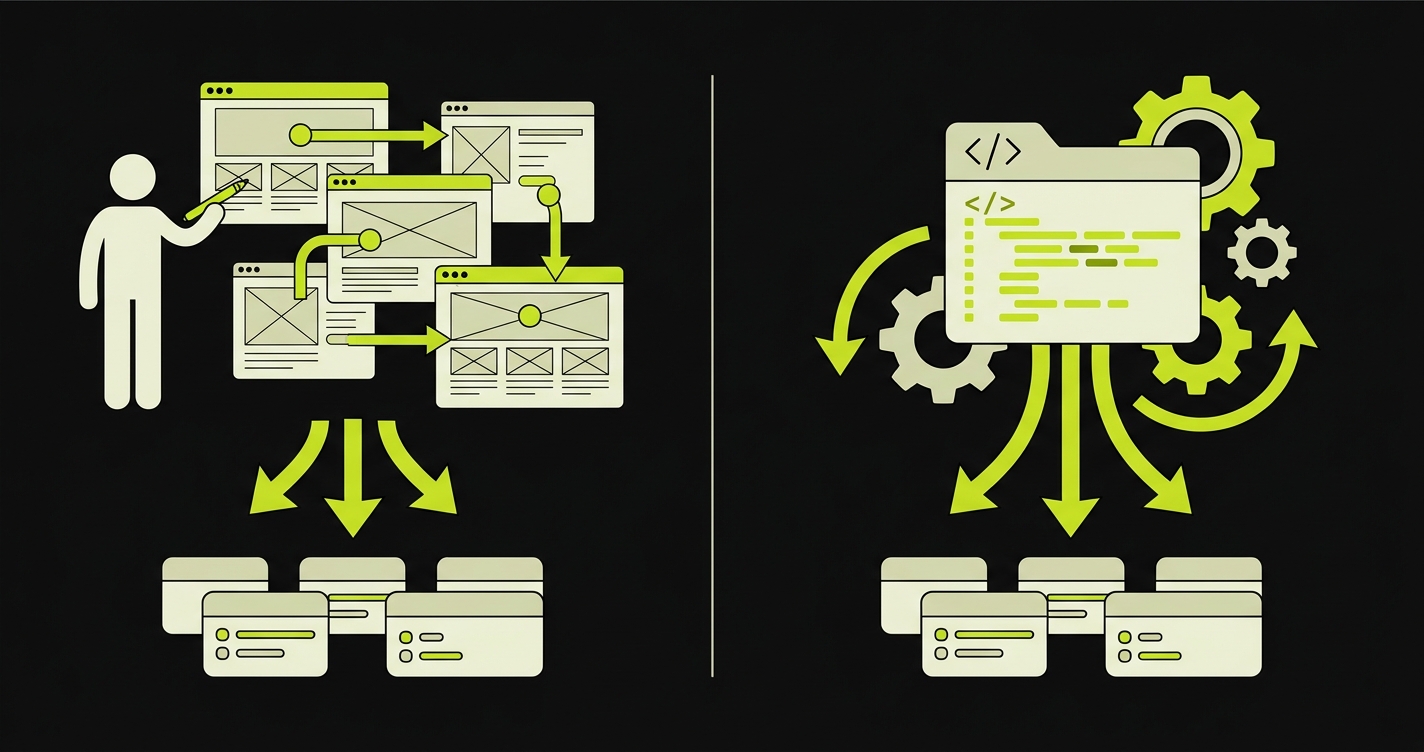

Momentic is built around a visual, no-code test editor. A tester (or developer) opens the Momentic interface, navigates to their staging environment, and either describes the flow in natural language or interacts with the UI while Momentic records the intent. The AI then converts that session into a self-healing test that runs on Momentic's infrastructure.

The self-healing layer is genuinely strong. When a selector changes, Momentic's AI re-identifies the element by context rather than brittle CSS or XPath. The test continues to pass through most typical UI refactors without manual intervention.
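To make the idea of context-based healing concrete, here is a minimal sketch. It is not Momentic's implementation, which is not public; the `Element` model, the `context_score` heuristic, and the `locate` function are all hypothetical names chosen for illustration. The sketch tries the recorded id first and, if that misses, falls back to matching on role and visible text.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Element:
    """A simplified stand-in for a DOM element (hypothetical model)."""
    css_id: str
    role: str
    text: str


def context_score(target: Element, candidate: Element) -> int:
    """Count how many contextual attributes match the recorded target."""
    same_role = candidate.role == target.role
    same_text = candidate.text.strip().lower() == target.text.strip().lower()
    return int(same_role) + int(same_text)


def locate(target: Element, page: List[Element]) -> Optional[Element]:
    """Find the recorded element, healing past a changed id when possible."""
    # First try the recorded selector exactly.
    for el in page:
        if el.css_id == target.css_id:
            return el
    # The selector missed: fall back to role plus visible text.
    matches = [el for el in page if context_score(target, el) == 2]
    # Heal only when the contextual match is unambiguous.
    return matches[0] if len(matches) == 1 else None
```

A real healing layer would weigh many more signals (position, neighbors, accessibility labels). The point of the sketch is only that the test targets intent, so a renamed id does not have to break it.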

Where Momentic draws a boundary is at test creation. Someone still needs to author each flow. A tester, a developer, or a product manager needs to open the editor, define the scenario, and step through the path being covered. The AI handles execution and maintenance, but the initial test surface is defined by a human session.

Autonoma's AI Approach

We built Autonoma around a different premise. Your codebase already encodes everything a test needs to know: the routes that exist, the components that render them, the data models that back them, the authentication gates in front of them. The spec is already written. It is called your source code.

Three specialized agents handle the full lifecycle. The Planner agent reads your codebase and derives test cases from that analysis. It identifies critical user paths from route definitions, maps form flows from component trees, and handles database state setup by generating the endpoints needed to put the application in the right state for each scenario. No one designs the test suite manually. The Automator agent executes those test cases against your running application with verification layers at each step, so behavior is consistent rather than probabilistic. The Maintainer agent watches your code changes and self-heals tests as the application evolves.
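As a rough illustration of what deriving test cases from route definitions can look like, consider the sketch below. It is a deliberately simplified model, not the Planner agent's actual logic: the `Route` and `PlannedCase` types, the `plan_cases` function, and the step wording are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Route:
    """A route as it might be read from a router file (hypothetical shape)."""
    method: str
    path: str
    requires_auth: bool = False


@dataclass(frozen=True)
class PlannedCase:
    """One derived test case: a name plus ordered, human-readable steps."""
    name: str
    steps: Tuple[str, ...]


def plan_cases(routes: List[Route]) -> List[PlannedCase]:
    """Derive one baseline test case per route, with no human authoring."""
    cases = []
    for route in routes:
        steps = []
        # Routes behind an authentication gate need a sign-in step first.
        if route.requires_auth:
            steps.append("sign in as a seeded test user")
        steps.append(f"{route.method} {route.path}")
        steps.append("verify the page renders without errors")
        cases.append(PlannedCase(f"{route.method} {route.path}", tuple(steps)))
    return cases
```

Given `Route("POST", "/checkout", requires_auth=True)`, the sketch plans a three-step case that signs in before exercising the route. The input is the code's own route table rather than a recorded session.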

The result is that connecting Autonoma to your codebase generates an initial suite without anyone authoring a flow. This is the meaningful architectural difference: Momentic automates execution and maintenance; we also automate test discovery and creation.

Code-Change Awareness

This is the dimension that matters most for teams shipping AI-generated code. AI coding tools produce code at a rate where manually keeping tests in sync is not realistic.

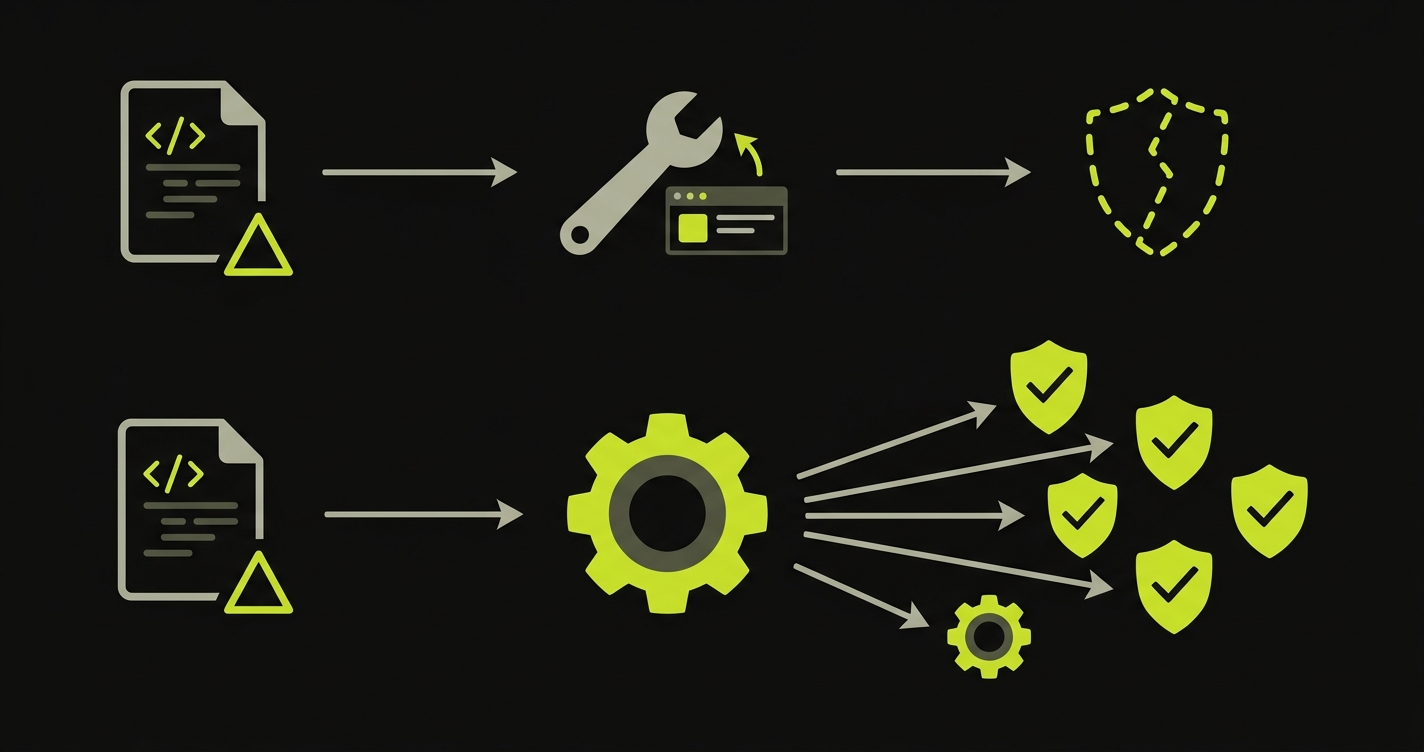

Momentic detects UI changes and self-heals selectors when elements move or rename. This handles the surface-level problem: tests do not break when a button changes its class or a form field gets reorganized. What Momentic does not do is detect when new routes are added to your codebase, when a new checkout flow gets scaffolded, or when a user-facing feature appears that has no test coverage yet. Coverage gaps from new code are invisible.

Autonoma addresses this at the Planner level. When your codebase changes, the Planner agent re-reads the delta and identifies what is new or modified. New routes get test cases planned for them. Modified flows get updated test logic. The Maintainer agent handles the mechanical self-healing that Momentic also offers, but the coverage mapping is driven by the code itself rather than by what a human previously decided to test.

For teams whose codebase is growing rapidly through AI-generated code, this distinction is practical. Coverage does not require a human to notice that a feature needs a test.
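The delta-driven coverage idea described above can be sketched as a set comparison. This is an illustrative simplification under stated assumptions: routes are represented as plain path strings, and `coverage_delta` is a hypothetical helper, not part of any product API.

```python
from typing import Dict, List, Set


def coverage_delta(
    before: Set[str], after: Set[str], covered: Set[str]
) -> Dict[str, List[str]]:
    """Compare two snapshots of the route table against existing coverage."""
    added = after - before    # routes introduced by the change
    removed = before - after  # routes deleted by the change
    return {
        # New routes that no existing test exercises yet.
        "needs_tests": sorted(added - covered),
        # Tests that still point at routes which no longer exist.
        "stale_tests": sorted(removed & covered),
    }
```

Selector-level healing alone never produces the `needs_tests` list, because it only looks at tests that already exist.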

Test Creation Experience

This is where the two platforms reflect fundamentally different philosophies about what testing should look like in 2026.

Momentic's no-code editor is polished. A non-technical QA analyst can create a test flow without writing code. The interface is approachable, the feedback loop is fast, and the barrier to getting coverage on a new feature is low. For teams that have dedicated QA resources who are not developers, Momentic's creation experience is a genuine advantage.

Autonoma does not have a test creation UI in the traditional sense, because there is nothing to create manually. You connect your repository, point to a running staging environment, and the Planner agent derives coverage. No sessions, no recordings, no flow authoring. The test suite exists because the code exists.

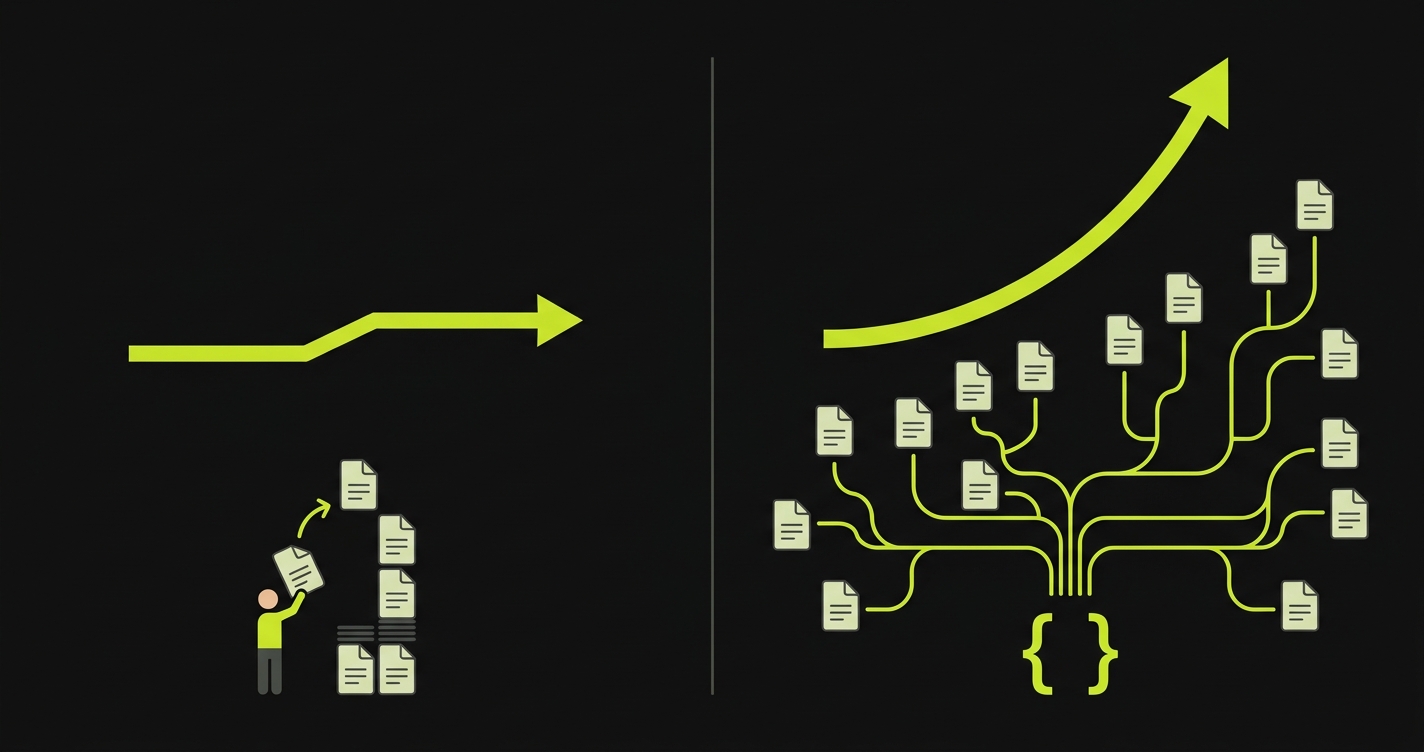

For teams shipping five or ten features per sprint through AI coding tools, this is not a minor convenience. It is the difference between coverage that scales with your codebase and coverage that scales with your QA team's authorship bandwidth. Momentic's editor is good at what it does, but the authoring step itself is the bottleneck we designed Autonoma to eliminate.

Integration Depth

Both platforms integrate with the standard CI/CD surface. GitHub Actions, GitLab CI, CircleCI, and similar systems are supported on both sides. Pull request checks, blocking deploys on failure, and Slack notifications work on both.

Momentic's integrations extend into project management and communication tooling. Jira issue creation on failure, Linear ticket routing, and Slack alerting are all supported. For teams that route failures into a structured triage workflow, Momentic's issue integration is useful.

Autonoma's integrations are developer-workflow oriented. Terminal and IDE integration means agents can be triggered from a coding context, not just from CI. This fits teams that want testing to be part of the development loop rather than a separate gate. The Planner agent's ability to run when a pull request opens and derive coverage for the new code in that PR is a pattern that does not have a direct equivalent in Momentic's flow.

Reporting and Analytics

Momentic offers a clean dashboard with test run history, pass/fail trends, and failure screenshots. Failure analysis shows the step that broke and the element Momentic was targeting. For QA leads who need to present test health in a weekly review, the reporting is sufficient and readable.

Autonoma's reporting is oriented toward coverage mapping rather than run history. Because the Planner agent derives tests from the codebase, the reports surface coverage against your actual application surface: which routes are covered, which flows have gaps, and what changed in the most recent run. For engineering leads who want to understand coverage depth rather than just pass/fail counts, this framing is more useful.

Put differently, Momentic's reporting answers "did my tests pass?" while Autonoma's answers "is my application actually covered?" Both integrate with existing observability stacks for advanced trend analysis.
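The gap between those two questions is easy to show with a small sketch. The `summarize` function and its input shapes are hypothetical, chosen only to illustrate that a suite can be fully green while half the application is untested.

```python
from typing import List, Tuple


def summarize(
    routes: List[str], runs: List[Tuple[str, bool]]
) -> Tuple[float, float, List[str]]:
    """Return (pass rate, route coverage, uncovered routes).

    `runs` pairs each executed test's target route with its pass/fail result.
    """
    passed = sum(1 for _, ok in runs if ok)
    pass_rate = passed / len(runs) if runs else 0.0
    # A route counts as covered if at least one test ran against it.
    covered = {route for route, _ in runs} & set(routes)
    coverage = len(covered) / len(routes) if routes else 0.0
    uncovered = sorted(set(routes) - covered)
    return pass_rate, coverage, uncovered


routes = ["/login", "/cart", "/checkout", "/settings"]
runs = [("/login", True), ("/cart", True)]
print(summarize(routes, runs))  # (1.0, 0.5, ['/checkout', '/settings'])
```

Here every test passes, yet `/checkout` and `/settings` have no coverage at all. A run-history dashboard would report the first number; a coverage map reports the second and third.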

Autonoma vs Momentic Feature Comparison

| Capability | Momentic | Autonoma |
|---|---|---|
| Test generation source | Human-authored flows in no-code editor | Automated from codebase analysis |
| Self-healing tests | Yes, selector-level healing | Yes, code-aware healing via Maintainer agent |
| New feature detection | No, requires manual test authorship | Yes, Planner re-reads codebase on changes |
| Database state setup | Manual configuration required | Planner agent generates endpoints automatically |
| Test creation experience | Visual no-code editor, accessible to non-devs | Fully automated, no editor required |
| CI/CD integration | GitHub Actions, GitLab CI, CircleCI | GitHub Actions, GitLab CI, terminal/IDE triggers |
| Non-technical QA support | Strong (designed for it) | Not applicable (no test authorship) |
| Coverage mapping to codebase | No | Yes, route and component level |
| Execution verification layers | Standard | Verification at each agent step |
| Issue tracker integration | Jira, Linear, Slack | Slack, GitHub issues |
| AI-generated code awareness | No, tests are independent of code changes | Yes, Planner re-derives tests when codebase changes |
| Test maintenance model | Auto-healing selectors, manual flow updates | Fully automated via Maintainer agent |
| Setup time to first test | Minutes (record or describe a flow) | Under 30 minutes (connect repo, agents generate) |
| Parallel execution | Yes | Yes |
| Free tier available | Trial only | Yes, functional free tier |
| Pricing model | Contact sales (not publicly listed) | Usage-based with free tier |

Autonoma vs Momentic Pricing

Momentic does not publish its pricing. Plans are available through their sales team, and enterprise pricing is custom. Based on publicly available information, Momentic targets engineering teams and offers a demo-first sales process rather than self-serve signup.

Autonoma starts with a free tier that gives teams access to the core pipeline before committing. Paid tiers are usage-based, scaling with the number of test runs and the application surface being covered. Enterprise plans are custom. The free entry point means a team can validate the architecture on a real codebase before signing a contract.

| Factor | Momentic | Autonoma |
|---|---|---|
| Free tier | Trial only | Yes, functional free tier |
| Starting paid price | Contact sales | Contact for current pricing |
| Pricing model | Not publicly listed | Usage-based |
| Enterprise | Custom | Custom |
| Test maintenance cost | Low (self-healing) | Near zero (automated) |
| Test authorship cost | Ongoing (human sessions) | None (automated) |
| Engineer time per new feature | 30-60 min to author test | 0 min (Planner handles it) |

The total cost calculation matters more than the license price. If your team spends 20 hours per month on test authorship in Momentic, that is real engineering time with a real cost. That time is closer to zero with Autonoma, which changes the ROI math significantly at scale.
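To put rough numbers on that, the arithmetic can be sketched as follows. The 30-60 minute authoring range, the five-features-per-sprint profile, and the 20 hours per month come from this comparison; the hourly rate is a purely hypothetical placeholder that you should replace with your own loaded engineering cost.

```python
def authoring_hours(features: int, minutes_per_test: float) -> float:
    """Hours spent authoring tests for a batch of new features."""
    return features * minutes_per_test / 60


def authoring_cost(hours: float, hourly_rate: float) -> float:
    """Labor cost of a given number of authoring hours."""
    return hours * hourly_rate


# Five features per sprint at 30-60 minutes of authoring each.
print(authoring_hours(5, 30))  # 2.5
print(authoring_hours(5, 60))  # 5.0

# ASSUMPTION: a placeholder rate, not a figure from either vendor.
ASSUMED_HOURLY_RATE = 100.0
print(authoring_cost(20, ASSUMED_HOURLY_RATE))  # 2000.0 per month
```

At the assumed rate, 20 hours of monthly authorship is 2,000 per month in labor before any license fee. The figure scales linearly with both the rate and the number of features shipped.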

Where Momentic Has Strengths

Credit where it is due. Momentic does several things well.

The no-code editor is its clearest advantage. If your QA function includes non-developers who own test coverage, Momentic's editor gives them a usable authoring experience without writing code. The learning curve is short and the interface is approachable.

Momentic's issue tracker integrations with Jira and Linear are also more polished than ours today. For QA leads who route failures into a structured ticketing workflow, that integration depth saves time.

Initial onboarding for individual flows is fast. If you need a test for one specific flow today, recording a session in Momentic's editor gets you there quickly. That said, speed-to-first-test is a different metric from speed-to-full-coverage, and the gap between the two is where Autonoma's architecture pays off.

Where Autonoma Excels

For teams searching for a Momentic alternative that scales with AI-generated code velocity, the codebase-first architecture is the clearest advantage. Teams shipping code at AI velocity do not have the bandwidth to manually author tests for every new route and component. Our Planner agent reads the code and derives coverage automatically. The test suite grows with the codebase, not with the QA team's authorship time.

Database state handling is another genuine differentiator. Momentic requires manual configuration to set up application state for complex scenarios. The Planner agent in Autonoma generates the endpoints needed to put the database in the right state for each test, making stateful scenario coverage tractable without dedicated infrastructure work.
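For readers unfamiliar with the pattern, a state-setup endpoint is a test-only handler that puts the data store into a known state before a scenario runs. The sketch below shows the general shape using an in-memory dictionary as a stand-in database. It is not the code the Planner agent generates; `make_state_endpoint` and the payload format are assumptions for illustration.

```python
from typing import Callable, Dict, List

# A stand-in database: table name -> list of row dicts (hypothetical model).
Database = Dict[str, List[dict]]


def make_state_endpoint(db: Database) -> Callable[[Database], dict]:
    """Build a test-only handler that resets `db` to a requested state."""

    def set_state(payload: Database) -> dict:
        # Start from a clean slate so earlier tests cannot leak state.
        db.clear()
        for table, rows in payload.items():
            # Copy rows so later mutations of the payload do not affect the db.
            db[table] = [dict(row) for row in rows]
        return {"status": "ok", "tables": sorted(db)}

    return set_state


db: Database = {}
set_state = make_state_endpoint(db)
# Arrange: a signed-up user with two items already in the cart.
set_state({
    "users": [{"id": 1, "email": "test@example.com"}],
    "carts": [{"user_id": 1, "items": 2}],
})
```

A multi-step checkout test can then start directly at the payment step instead of clicking through signup and cart-building first.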

The developer-workflow orientation fits teams where QA is a shared responsibility rather than a dedicated function. Terminal and IDE triggers, PR-level coverage analysis, and integration with the coding loop mean testing happens in the same context as development rather than in a separate tool.

For teams using AI coding agents (Cursor, Copilot, or similar), Autonoma is designed for this environment. The codebase-as-spec model aligns with how AI-generated code is produced: the code is the artifact, and tests should derive from it rather than require a human to translate it into a separate test language.

Real-World Scenarios

| Scenario | Momentic | Autonoma |
|---|---|---|
| New feature shipped via AI coding agent | QA analyst must manually author test in editor | Planner auto-derives test cases from new routes |
| UI redesign (buttons, layouts, forms renamed) | Self-healing resolves most selector changes | Maintainer updates tests from code diff + verification |
| Multi-step checkout with specific DB state | Manual test data setup or custom seed scripts | Planner generates state-setup endpoints automatically |

Scenario one is where the split is sharpest. A team shipping five features per sprint via AI coding tools would need a QA analyst to author five new test flows in Momentic every sprint. In Autonoma, the Planner handles that automatically. For a team of that profile, the authorship gap compounds quickly.

Scenario two is roughly equivalent. Both platforms handle UI refactors well through self-healing. The difference is implementation depth: Autonoma's Maintainer agent works from code diffs rather than purely from UI interaction patterns, which can catch intent changes that pure selector healing misses.

Scenario three is where Autonoma's database state handling becomes a practical differentiator. Complex stateful flows are the hardest part of E2E testing infrastructure, and the Planner agent handling that automatically removes a significant friction point.

How This Compares to the Broader Market

If you are evaluating the AI testing landscape more broadly, the AI testing tools definitive guide covers the full category including codeless tools, script-assisted tools, and fully autonomous platforms. The QA Wolf comparison covers a different category entirely: QA Wolf is a managed service with human QA engineers running tests, which is a different architectural and cost model. The BrowserStack comparison covers a fundamentally different architecture: cloud execution infrastructure versus AI-native test generation. The mabl comparison covers a recorder-based platform with enterprise maturity, where the tradeoff is between established workflows and AI-native generation. If open-source transparency and self-hosting are priorities, the open-source Momentic alternative comparison covers that angle in depth.

Momentic and Autonoma are both genuinely AI-native platforms. The choice between them comes down to whether you want to preserve a human test-authorship layer (Momentic) or eliminate it entirely (Autonoma).

The Verdict: Is Autonoma the Right Momentic Alternative?

The question is not "which tool has more features." It is "where is testing headed, and which architecture gets you there?"

Momentic is a well-built tool for teams that want to make manual test authorship faster and more reliable. If your QA function depends on non-developers owning a test library through a visual editor, Momentic serves that workflow.

But the trajectory of software development is moving away from manual authorship entirely. AI coding tools are accelerating code output beyond what any human test-authoring workflow can match. The teams we work with at Autonoma chose a codebase-first architecture because they needed tests that scale with their code, not with their QA team's bandwidth.

If your codebase is growing through AI-generated code and you want a testing layer that keeps pace automatically, Autonoma is the platform built for that future. Start with the free tier and connect your repo. The Planner agent will show you what coverage looks like when no one has to author it.

The core difference is the source of test creation. Momentic requires a human to author each test flow through a no-code editor; the AI handles execution and maintenance but not initial test discovery. Autonoma's Planner agent reads your codebase and derives test cases automatically from your routes, components, and data models. No one authors a flow. For teams shipping AI-generated code at high velocity, this distinction determines whether coverage keeps pace with output.

Momentic is the stronger choice if your QA team includes non-developers who own test authorship. The visual no-code editor is genuinely accessible and does not require coding skills. Autonoma does not have an equivalent authoring interface because test creation is automated entirely. If the goal is to empower a non-technical QA analyst to build and own a test library, Momentic fits that workflow better.

Momentic requires manual test data setup or custom seed scripts to put the application in the right state for stateful flows. Autonoma's Planner agent handles this automatically by generating the endpoints needed to set database state for each test scenario. For complex multi-step flows that depend on specific application state, Autonoma removes significant infrastructure work that Momentic leaves to the team.

Momentic and Autonoma address the same category, and most teams would choose one or the other rather than running both. The exception would be if your application has distinct surfaces with different testing ownership: a developer-led surface covered by Autonoma alongside a manually managed test library in Momentic for non-developer QA. In practice, most teams consolidate on one platform once they validate the architecture.

Autonoma is specifically designed for codebases where AI coding tools are producing output faster than a human can review it line by line. The codebase-as-spec model means the Planner agent derives test coverage from the code itself, including new routes and components added by AI coding agents. Momentic handles the maintenance side well through self-healing but still requires a human to author tests for new features, which creates a coverage lag when code is shipping at AI velocity.

Momentic's no-code editor is better for non-technical test authorship. Its issue tracker integrations with Jira and Linear are more mature. And for a team that needs to quickly test a specific, well-defined flow without connecting a full codebase, Momentic's time-to-first-test is shorter. If your QA function depends on a non-developer owning test coverage, Momentic is genuinely the stronger fit.

Momentic does not publish its pricing; plans are available through their sales team. Autonoma offers a functional free tier for initial validation, with paid tiers that are usage-based. The license price difference is less important than the total cost of ownership. If your team spends significant engineering time authoring tests in Momentic, that time has a real cost. Autonoma's automated test creation reduces that ongoing labor cost to near zero.

For developer-led teams shipping AI-generated code at high velocity, Autonoma is the strongest Momentic alternative available. The codebase-first architecture means tests derive from your routes, components, and data models automatically, eliminating the manual authoring step that Momentic still requires. Where Momentic excels for teams with non-technical QA analysts who need a visual editor, Autonoma excels for teams that want coverage to scale with code output, not QA bandwidth. The free tier lets you validate the architecture on your own codebase before committing.