Regression testing for AI-generated code is the practice of continuously verifying that existing user flows still work as AI coding agents merge code at 100x human velocity, using codebase-aware coverage that re-derives intent from code structure rather than from recorded selectors. Unlike traditional regression suites built on hand-maintained scripts, this approach is designed to survive the selector drift, DOM restructures, and blast-radius changes that AI coding agents produce routinely, without requiring a human to update test files after each agent commit.

AI agents generate three orders of magnitude more code change per developer than 2020-era humans. Traditional regression suites can't keep up. We built Autonoma's regression coverage to scale with code volume, not engineer-hours.

The volume is not the hard part. The hard part is that no human read most of the code. An agent refactored your checkout flow at 2 AM and the PR merged by morning. Your regression testing practice either scales to meet that rate or it quietly becomes shelfware while the CI badge stays green. This is about building the practice that holds.

What Changed About Regression Testing in 2026

The numbers that define the problem are not speculative. Research across multiple AI coding studies shows that developers using agentic assistants generate between 55% and several hundred percent more merged code per week than pre-AI baselines. When agents run in fully autonomous modes, the multiplier compounds. Fifty PRs per day from a small team is no longer an edge case.

The volume alone is a challenge. Four specific characteristics of agent-generated code make the regression problem qualitatively harder.

Blast radius per PR. Human developers make surgical changes. An agent asked to improve a sorting function often refactors the table component, adjusts the shared utility it calls, and updates the type definitions that flow downstream. The blast radius of an agent PR is wider per line-of-intent than an equivalent human change. A hand-maintained regression suite designed around human-scoped PRs will miss inter-agent interactions systematically.

No human reviewer per line. Traditional regression failures are partly caught by code review: someone reads the diff and notices a selector rename or a changed prop shape. At 100x velocity, code review is pattern-matching at best. Nobody is reading 3,000 lines of agent-generated JSX before approving. The regression suite is now the primary reviewer, not a supplement to one.

Selector drift as a constant. AI agents refactor. They rename data-testid attributes when they restructure components, extract presentational markup into new subtrees, and move interactive elements between containers. In a human-written codebase, selector drift is occasional. In an agent-powered codebase, it is the steady state.

Coverage debt that accumulates faster than it can be written. If your coverage strategy is "QA engineer writes tests after features ship," the backlog in an agent-powered team is infinite by design. The agents don't slow down for coverage catch-up.

The implication: regression coverage is no longer infrastructure you build once and maintain lazily. It is the most important review process your team runs, because for a significant portion of merged code, it is the only review that happens.

Consider what that means in practice. Before agentic development, a regression failure was a signal that a human made a mistake. It was infrequent enough that a QA engineer could investigate each one thoroughly, trace the root cause, and add a test that prevented recurrence. The process was linear: one failure, one investigation, one fix. Now, a regression might mean an agent touched something it shouldn't have, or two agents' correct changes interacted incorrectly, or a refactor that was behaviorally neutral introduced a subtle timing dependency. The investigation is harder, the blast radius is wider, and the rate of new failures may exceed the rate at which the team can investigate old ones. Regression testing without the right architecture for this environment is not just ineffective. It actively creates a false sense of coverage while the real surface area expands unchecked.

Why Hand-Maintained Playwright Suites Collapse Under This Load

Playwright is an excellent tool. The problem is not Playwright. The problem is the model that surrounds it: a human QA engineer updating selectors every time a PR touches the DOM.

Do the math concretely. If your team merges 40 agent PRs per day and 10% of those touch at least one selector (a conservative estimate for agent-authored refactors), you have four selector-touch events every day. If a senior QA engineer takes 12 to 25 minutes to diagnose a flaky selector and write a confident fix, that is 50 to 100 engineering minutes per day just keeping the regression suite green. That is before any new coverage work, before any actual quality improvement work, before anything that moves a product forward.

At 40 PRs/day, selector maintenance alone starts crowding out the time QA has to think about what to test, not just how to keep existing tests from failing.

By the time the selector fix lands, three more PRs have touched the same component tree. The merge tax compounds every hour the suite is red.

Codeless record-replay tools don't escape this either. Recording a flow binds you to the UI state at recording time. Agent refactors are precisely the changes that invalidate recordings fastest. The false-positive rate on replayed recordings against an actively evolving agent codebase is high enough to erode trust in the entire suite within weeks.

AI-augmented tools that write more test scripts are a partial improvement, but they share the same underlying brittleness. You're accumulating selector-bound scripts faster. The count grows; the maintenance load grows with it. Faster brittleness accumulation is not the same as eliminating brittleness.

To see why, follow a realistic scenario through. An agent refactors the checkout page: it splits the single-page form into a two-step flow to improve conversion, restructures the DOM hierarchy, and renames several interactive elements to match the new design. The behavior from the user's perspective is identical: cart items, shipping info, payment, confirmation. The test selectors targeting the old single-page layout are now broken across at least eight separate test cases. CI goes red on a deploy that is functionally correct.

A QA engineer investigates. It takes 30 minutes to understand which selectors changed, why, and how to map them to the new structure. The engineer updates eight tests, pushes a fix, waits for CI. Two hours have passed. During those two hours, the agent shipped three more PRs. Two of them touched components that interact with checkout. The QA engineer has not looked at those yet.

This is not a hypothetical. It is the baseline operational mode for teams running AI coding agents at production velocity without codebase-aware regression coverage.

Codebase-Derived Regression Coverage

The shift that matters is not from slow tests to fast tests. It is from tests that re-run a script to tests that re-derive intent.

A selector-based script asks: "Is this button still at this XPath?" A codebase-aware regression asks: "Given what the code says this user flow is supposed to do, does it still do that?" The first question breaks when an agent renames a class. The second survives it, because intent is re-derived from the new code, not from a snapshot of the old one.

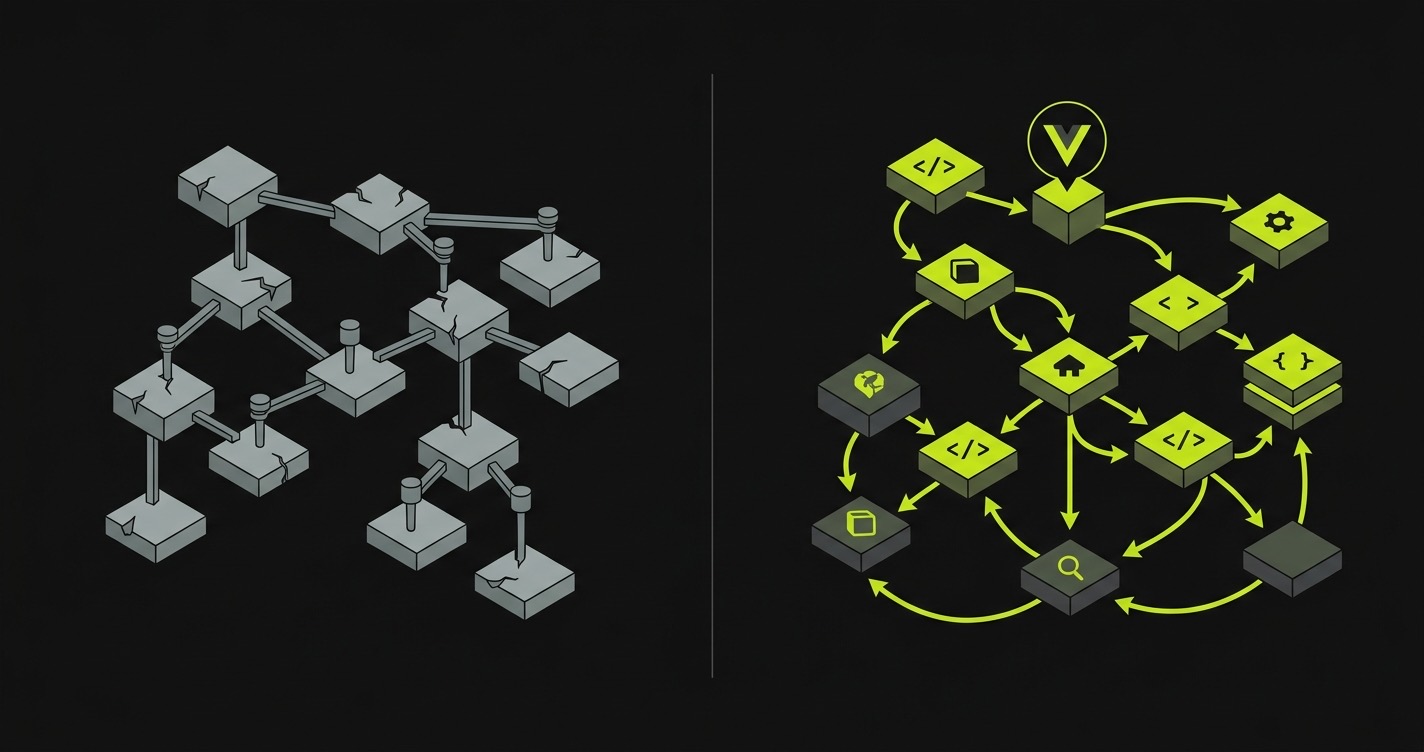

This is the architectural premise behind codebase-aware testing: the spec is the code, not the script. When the code changes, coverage updates from the new code. Selector drift becomes invisible because there are no selector anchors to drift.

In practice the shift looks like this. Instead of a test that says "click the element with id checkout-btn," a codebase-aware system reads the routes, the component tree, and the user flows that emerge from both. It derives what a valid end-to-end execution of checkout looks like. When an agent refactors the component and renames the button, the next coverage pass re-reads the new component and re-derives the execution path. No human intervention required.

This is also why automated regression testing at agent scale is a different problem than automated regression testing at human scale. The tools designed for human-velocity teams expect the spec to be relatively stable. The spec is never stable when agents are writing code. The agentic testing for vibe coding framing is useful here: when vibe coding is the development model, vibe testing (recording human intent) cannot keep pace. You need a system that reads the code.

There is a second, less obvious benefit: coverage completeness. Hand-written test suites cover what the engineer thought to write tests for. Codebase-derived coverage covers what is actually in the code. For AI-generated codebases, those two sets diverge significantly. Agents add routes, components, and flows that no QA engineer specified, because no QA engineer was involved. Codebase-aware tools discover that coverage gap automatically; hand-written tools leave it empty until someone notices a production incident and adds a test retroactively.

The practical implication is that codebase-aware regression coverage is also a discovery mechanism. When our Planner reads a codebase it has not seen before, it often identifies three to five critical flows that existed in the code but had no test coverage at all. Those are almost always agent-generated paths: the features built at 2 AM when no one was watching the pull request queue.

One more dimension worth naming: the drift between what your tests claim to cover and what your code actually does. In a hand-maintained suite, this gap grows silently. Tests that were accurate six months ago may describe flows that no longer exist, or miss flows that were added. Codebase-aware coverage closes that gap on every run, because the coverage model is regenerated from the code, not preserved from a historical snapshot. For teams where agents are adding and removing routes regularly, this distinction becomes significant within weeks. The coverage fidelity of a hand-maintained suite degrades at exactly the rate that the codebase evolves. In an agent-powered team, that rate is very high. Codebase-aware coverage is the architectural response to that rate: designed for the team that merges fifty PRs before lunch, not ten per week.

How Autonoma Keeps Regression Coverage Current

The problem our Planner was built to solve is the one described above: when the codebase changes at agent velocity, how do you know what to re-test and how do you re-test it without relying on selectors an agent just refactored away?

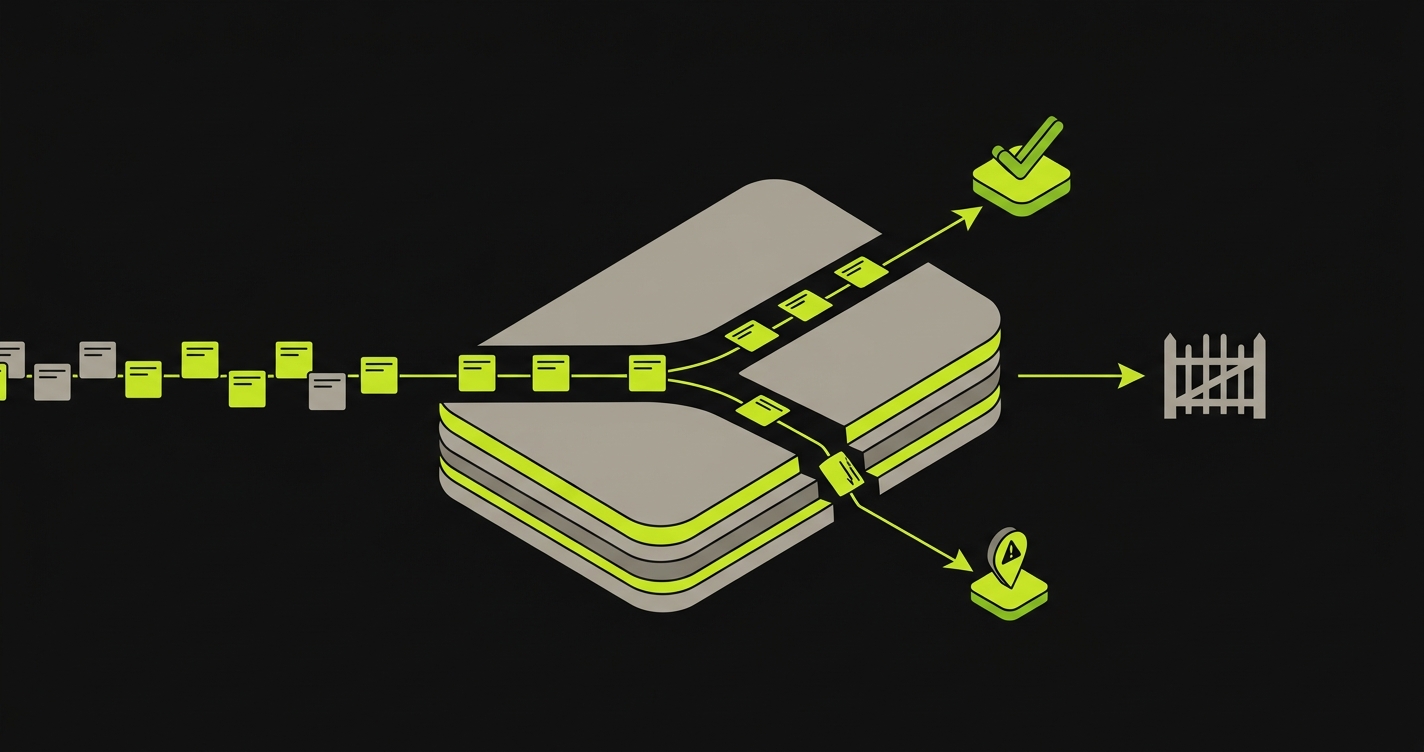

The current mechanism is Replay. Our Planner agent reads the codebase: not a recorded session, not a browser extension capture, but the actual routes, components, and user flows as they exist in the code right now. From that reading it derives a model of what the application is supposed to do and where the critical paths are. On every deploy or PR trigger, the Planner identifies which flows were touched by the diff and queues a Replay pass.

The Automator then re-executes those flows against the running application. Intent guides execution, not selectors. When an agent renamed your data-testid in the refactor, the Automator doesn't care. It was not navigating by that selector in the first place. It was executing a derived user intent: "complete the checkout flow." It finds the checkout mechanism in the current DOM because it understands what checkout looks like from the code, not from a snapshot of what it used to look like.

What you get today: regression coverage that survives most agent refactors, low false positive rates (intent is checked, not pixel or selector matches), and no manual update cycle when component trees shift. Our Planner also generates the DB state setup needed to put your database in the right shape for each test scenario, so flows requiring authenticated users or specific subscription states run correctly without manual fixture management.

One honest note on the roadmap. Diffs, our dedicated diff-aware re-test product that does surgical per-diff coverage scoping, is not shipping today. The current mechanism is Replay on every deploy or PR, which catches the inter-agent interaction regressions that are the primary failure mode in high-velocity AI development. Diffs will make coverage scoping more surgical. Replay is what ships now.

The most common regression pattern we see in customer repos is inter-agent interaction. Agent A changes a utility function to serve its feature. Agent B's PR, which merged two days ago, relied on the old behavior. The regression surfaces in Agent B's flows, not Agent A's. A hand-maintained suite would have to be watching the right flow at the right time to catch it. Replay catches it because it re-runs existing flows, not just flows touched by the most recent PR.

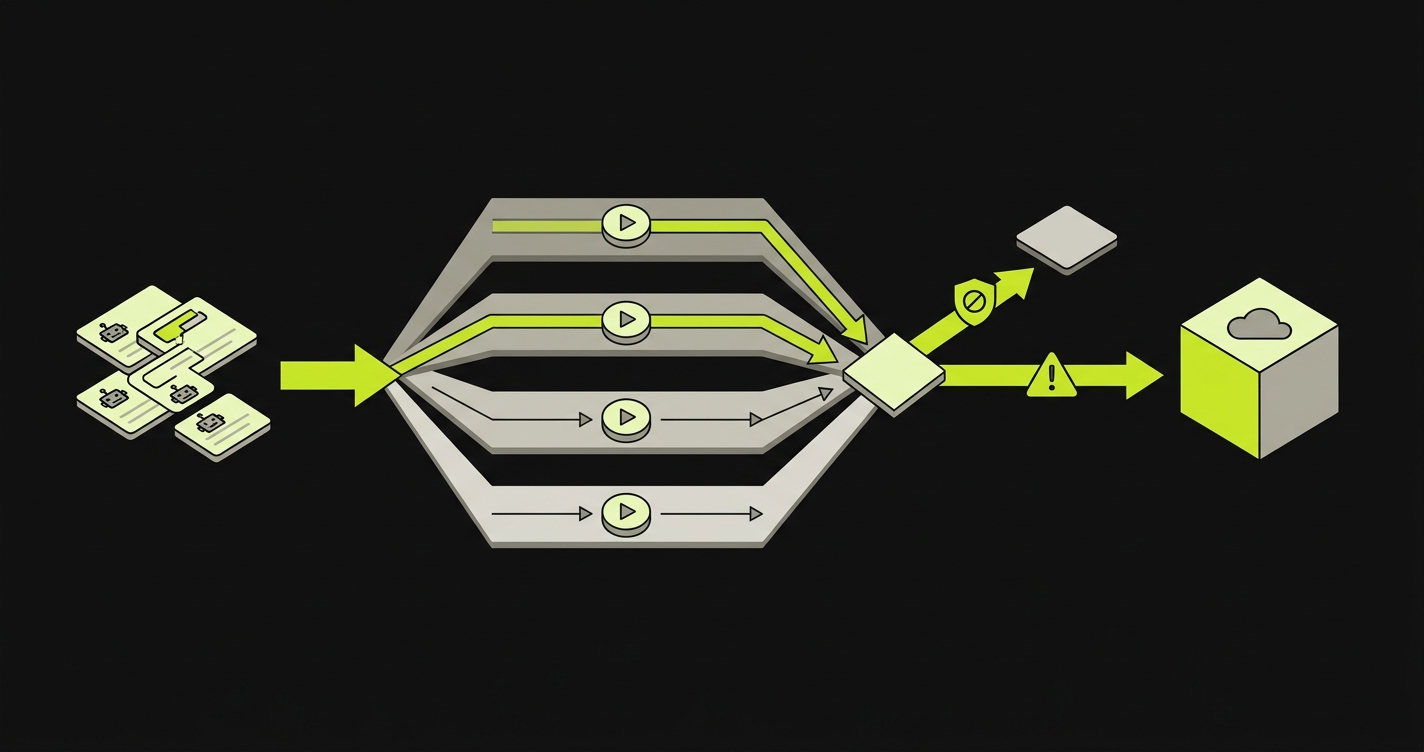

For teams running parallel agent PR workflows, Replay runs are parallelized across branches so the signal arrives before merge, not after.

Comparison: Autonoma vs Hand-Written Playwright vs Codeless Record-Replay

| Approach | Maintenance hrs/PR | Agent-resilience | False positive rate | Time to first signal |

|---|---|---|---|---|

| Autonoma (Planner + Replay) | Low (no selector maintenance) | High: intent re-derived from current code | Low (and dropping with Diffs roadmap) | Per-PR, parallel runs |

| Hand-written Playwright | High (0.2-0.5 hrs on selector-touch PRs) | Low: breaks on selector drift | High on agent refactors | Fast signal, frequent false blocks |

| Codeless record-replay | Medium (re-recording required) | Very low: recordings bind to snapshot DOM | Very high | Fast record, trust degrades quickly |

The critical dimension is agent-resilience. Hand-written Playwright is brittle by design: selectors are static and agents change selectors. Codeless record-replay captures interaction patterns rather than raw selectors, which is slightly better, but it still breaks on structural DOM changes. Intent re-derivation is the mechanism designed specifically for the agent-refactors-frequently problem.

On false positives: codeless record-replay tools generate a significant volume of failures from layout changes and responsive breakpoints. In a codebase where AI agents regularly adjust UI structure, that signal-to-noise ratio degrades quickly. Intent-based replay distinguishes "element moved to a different location in the DOM" from "element no longer behaves correctly," and only surfaces the second type.

The Autonoma column says "low (and dropping with Diffs roadmap)" because the Diffs product will add surgical diff-scoped re-testing on top of Replay. That is roadmap. The numbers above are realistic estimates, not marketing copy.

Designing a Regression Strategy for AI-Generated Code

Whatever tooling you use, the strategy has a clear shape.

Start with critical-path E2E flows. Checkout, login, primary user journey, billing. These are the flows that, if broken, produce revenue impact within hours. They are also the flows agents touch most frequently because they are the most connected parts of the codebase. Regression coverage on these flows is non-negotiable. Everything else layers on top.

Layer API contract tests on agent-touched endpoints. When agents refactor services they often change response shapes in ways that don't break unit tests but do break callers. A lightweight contract test layer on critical API boundaries catches these before they compound. This is especially important for endpoints that feed your E2E-tested flows.

Trust codebase-aware tooling for the long tail. You cannot manually cover every flow an agent touches. You are not supposed to. Codebase-aware regression coverage is designed for the long tail: flows you didn't think to manually specify, interactions that emerge from agent-generated code you never reviewed. Connect it to the codebase and let it derive coverage.

Stop trying to maintain selectors manually. This is the hardest habit to break for teams that built their QA practice on Playwright. The game is lost when agents write code at this velocity. The selector maintenance budget is zero. If your current tooling requires selector maintenance, that is the bottleneck to eliminate first.

The right mental model: critical paths covered by intent-based E2E regression, API boundaries covered by contract tests, everything else covered by codebase-aware tooling that re-derives coverage from the diff. You are not writing tests. You are configuring coverage policies.

Regression Testing in CI/CD for AI Agents

The CI/CD integration point is where the strategy becomes operational.

PR-gate vs nightly is the first decision. For agent workflows, PR-gate regression is almost always the right answer. Nightly suites accumulate failures across multiple merges, making root-cause attribution expensive. When agents merge dozens of PRs per day, a nightly failure is a cold case by morning. Run codebase-aware regression on every agent PR. Block merge on intent-failure. Surface flake suspicion as a warning rather than a block.

Block on intent-failure, warn on flake. Not all test failures are equal. A confirmed intent-failure (the flow does not complete, the core behavior is wrong) should block merge. A flake signal (execution variance that doesn't correlate with a diff) should warn but not block. Tooling that treats all red tests identically is actively harmful at agent velocity: too many false blocks erode trust in the suite, and engineers start bypassing it.

Parallelism is not optional at scale. If you're running 40 PRs per day and each regression run takes 8 minutes, serial execution means test results arrive hours after merge. Parallel agent PR workflows depend on parallel test execution. Regression runs must be parallelized across branches. This is a CI/CD infrastructure concern as much as a testing concern.

The ai-agent-merge-tax framing is useful here: every hour a valid PR sits blocked because of a false regression failure has a measurable cost. Architect the CI gate to minimize that tax, not just to maximize coverage surface.

The opening claim holds under examination: coverage that scales with code volume, not engineer-hours, requires a different architecture from what most teams built before 2024. Scripts that re-run break when agents refactor. Intent that re-derives survives. We run Autonoma's own regression coverage on our own codebase, and Replay catches the inter-agent interaction regressions that slip through review when three agents are running in parallel. That is what makes high-velocity AI development sustainable rather than reckless.

For the canonical primer on what regression testing is and when to apply each layer, see our regression testing guide. For a detailed layer-by-layer setup walkthrough including tool selection and CI/CD integration, the automated regression testing in CI/CD guide covers the full stack.

FAQ

More than ever. AI agents make confident, sweeping changes across shared surfaces without the contextual hesitation a human developer would apply. Regressions in agent-powered teams emerge primarily from inter-agent interactions: Agent A changes a utility function, Agent B's PR from two days ago relied on the old behavior. Neither agent's individual changes are wrong in isolation. The regression suite is the mechanism that catches those interaction failures before they reach production.

It can, and teams are doing it. The limitation is that AI-generated test scripts share the same selector-binding problem as hand-written scripts: they bind to the DOM at generation time and drift when the codebase evolves. Writing more test scripts with AI accumulates brittleness faster, it does not eliminate it. Codebase-aware regression coverage is qualitatively different: coverage is re-derived from the current code state on each run, not maintained as a static artifact.

Our Planner reads the diff of each PR and the current codebase to identify which flows were touched. It queues a Replay pass scoped to those flows plus any downstream flows that depend on the changed code. Diffs, our upcoming diff-aware product, will make this scoping more surgical. Today, Replay re-executes the flows the Planner derived from your codebase and surfaces labeled failures where behavior genuinely changed rather than where selectors drifted.

Flakiness in intent-based systems is qualitatively different from flakiness in selector-based systems. Selector-based flakes often mean the selector was ambiguous or DOM timing was off. In an intent-based system, execution variance is more likely to indicate a real timing issue in the application itself. We surface flake signals separately from intent-failures so teams can tune merge-gate policy independently for each signal type. We also use verification layers at each execution step, so the Automator confirms the application reached the expected state before proceeding rather than relying on fixed wait times.